Stream processing, using netcat to supply the input

package com.smalltiger.flinkWC

import org.apache.flink.api.java.utils.ParameterTool
import org.apache.flink.streaming.api.scala._

/**
 * Created by smalltiger on 2019/11/6.
 * A streaming WordCount built on Flink.
 */
object StreamWC {
  def main(args: Array[String]): Unit = {
    // Read the parameters from the command line (--host <host> --port <port>)
    val params: ParameterTool = ParameterTool.fromArgs(args)
    val host: String = params.get("host")
    val port: Int = params.getInt("port")

    // 1. Get the current execution environment
    val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

    // Receive the socket text stream
    val textDstream: DataStream[String] = env.socketTextStream(host, port)

    // flatMap and map need the implicit conversions to be imported
    import org.apache.flink.api.scala._

    // Split each line on spaces, drop empty tokens, pair each word with 1,
    // key by the word (field 0) and keep a running sum of the counts (field 1)
    val dataStream: DataStream[(String, Int)] = textDstream.flatMap(_.split(" ")).filter(_.nonEmpty).map((_, 1)).keyBy(0).sum(1)

    dataStream.print().setParallelism(1)

    // Start the executor and run the job
    env.execute("Start job")
  }
}
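To make the streaming output easier to read, here is a minimal sketch in plain Scala (no Flink dependency) of what a keyed running sum does: every incoming word produces an updated (word, count) record, rather than one final total per word. The object name `RunningCountSketch` and the helper `runningCounts` are hypothetical names used only for illustration; this simulates the behaviour on an in-memory sequence and is not how Flink implements it.

```scala
// Plain-Scala simulation of keyBy(word).sum(1); hypothetical names, no Flink involved.
object RunningCountSketch {

  // For each incoming word, emit (word, count seen so far).
  def runningCounts(lines: Seq[String]): Seq[(String, Int)] = {
    // Same tokenization as the streaming job: split on spaces, drop empty tokens
    val words = lines.flatMap(_.split(" ")).filter(_.nonEmpty)

    words
      // Carry the per-word state plus the update emitted for the latest word
      .scanLeft((Map.empty[String, Int], Option.empty[(String, Int)])) {
        case ((state, _), word) =>
          val updated = state.getOrElse(word, 0) + 1
          (state.updated(word, updated), Some(word -> updated))
      }
      // Keep only the emitted updates (the initial element carries None)
      .flatMap(_._2)
  }

  def main(args: Array[String]): Unit =
    runningCounts(Seq("hello flink", "hello world")).foreach(println)
}
```

In this sketch "hello" is emitted twice, first with a count of 1 and then with a count of 2, which matches the kind of incremental output the streaming job prints as lines arrive from the socket.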

Batch processing, reading the file contents directly

package com.smalltiger.flinkWC

import org.apache.flink.api.scala._

/**
 * Created by smalltiger on 2019/11/6.
 * A batch WordCount built on Flink.
 */
object WordCount {
  def main(args: Array[String]): Unit = {
    // Create the execution environment
    val env = ExecutionEnvironment.getExecutionEnvironment

    // Read the data from a file

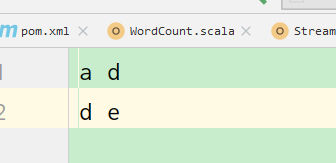

    val inpath = "D:\\WorkSpace\\flinkWC\\src\\main\\resources\\abc.txt"
    val inputDS: DataSet[String] = env.readTextFile(inpath)

    // Split each line on spaces into words, group by word, then aggregate with sum

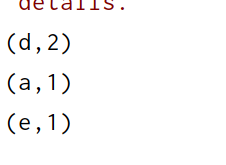

    val wordCounts: AggregateDataSet[(String, Int)] = inputDS.flatMap(_.split(" ")).map((_, 1)).groupBy(0).sum(1)

    wordCounts.print()
  }
}
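The split, group and sum steps of the batch job can be sketched on an ordinary Scala collection, which is handy for checking the logic without a Flink runtime. This is only an illustration under stated assumptions: `BatchCountSketch` and `wordCounts` are hypothetical names, and the `filter(_.nonEmpty)` step is borrowed from the streaming version (the batch job above does not filter empty tokens).

```scala
// Plain-Scala sketch of the batch word count; hypothetical names, no Flink involved.
object BatchCountSketch {

  // Returns one final count per distinct word.
  def wordCounts(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(" "))   // split each line on spaces
      .filter(_.nonEmpty)      // assumption: drop empty tokens, as the streaming job does
      .map((_, 1))             // pair each word with 1
      .groupBy(_._1)           // group the pairs by word
      .map { case (word, pairs) => word -> pairs.map(_._2).sum } // sum the 1s per word

  def main(args: Array[String]): Unit =
    wordCounts(Seq("hello flink", "hello world")).foreach(println)
}
```

Unlike the running sum in the streaming case, each word appears exactly once in the result, with its final total.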
