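Everyone knows that writing unit tests is a good habit when developing a project 😊 But some modules depend on third-party components, and big-data components in particular are hard to get started with 😵 Often it's not that we don't want to write unit tests; it's that writing them is just too much hassle…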

So I put together a few templates for testing HDFS, Hive and similar components. Read on 👀~

HDFS

Dependencies

Step 1: all you need is the dependency below.

```xml
<dependency>
    <groupId>com.github.sakserv</groupId>
    <artifactId>hadoop-mini-clusters-hdfs</artifactId>
    <version>0.1.16</version>
</dependency>
```

Code

Step 2: start the cluster, then run whatever operations your test needs.

```java
import com.github.sakserv.minicluster.impl.HdfsLocalCluster;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileStatus;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.junit.Test;

public class HdfsTest {

    @Test
    public void test() throws Exception {
        // Start an embedded HDFS with one DataNode
        HdfsLocalCluster hdfsLocalCluster = new HdfsLocalCluster.Builder()
                .setHdfsNamenodePort(12345)
                .setHdfsNamenodeHttpPort(12341)
                .setHdfsTempDir("embedded_hdfs")
                .setHdfsNumDatanodes(1)
                .setHdfsEnablePermissions(false)
                .setHdfsFormat(true)
                .setHdfsEnableRunningUserAsProxyUser(true)
                .setHdfsConfig(new Configuration())
                .build();
        hdfsLocalCluster.start();

        try {
            // Get the file system and run some operations; replace these with whatever your test needs
            FileSystem fs = hdfsLocalCluster.getHdfsFileSystemHandle();
            fs.mkdirs(new Path("/test"));

            FileStatus[] status = fs.listStatus(new Path("/"));
            System.out.println(status.length);
            System.out.println(status[0].getModificationTime());
        } finally {
            // Stop the cluster so its ports and temp directory are released for the next test
            hdfsLocalCluster.stop();
        }
    }
}
```

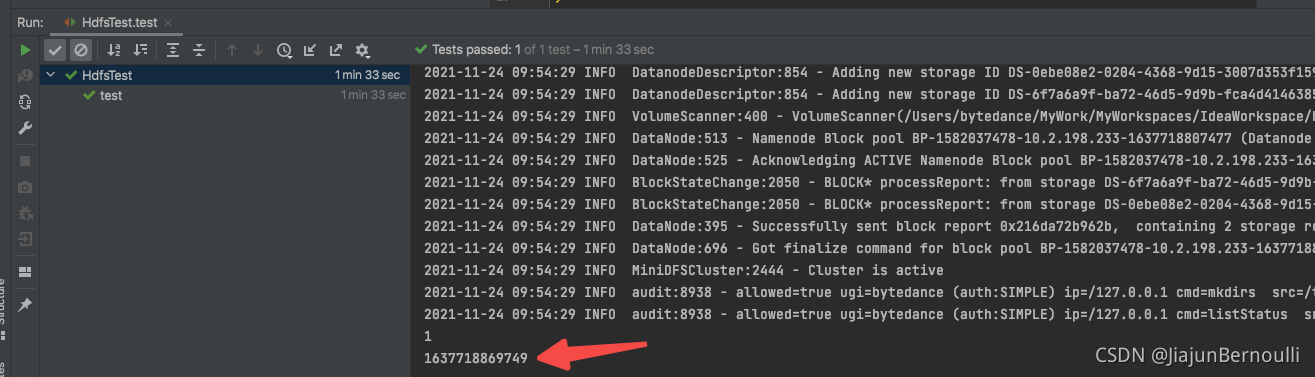

结果
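Both templates hard-code their ports, and 12345 is used twice: for the HDFS NameNode here and for ZooKeeper in the HS2 template. If both test classes run in the same JVM, or a previous run left something listening on that port, `start()` can fail to bind. One possible workaround, which is a generic Java technique and not part of hadoop-mini-clusters, is to ask the OS for a free ephemeral port at runtime. `FreePorts` and `findFreePort` below are hypothetical names used only for this sketch.

```java
import java.io.IOException;
import java.net.ServerSocket;

/** Hypothetical helper (not part of hadoop-mini-clusters) for choosing test ports at runtime. */
public class FreePorts {

    /**
     * Binds a server socket to port 0 so the OS assigns an unused ephemeral port,
     * then closes the socket and returns that port number.
     * Caveat: another process could claim the port between close() and the moment
     * the mini cluster binds it, so this lowers the risk of clashes but does not remove it.
     */
    public static int findFreePort() throws IOException {
        try (ServerSocket socket = new ServerSocket(0)) {
            return socket.getLocalPort();
        }
    }

    public static void main(String[] args) throws IOException {
        System.out.println("free port: " + findFreePort());
    }
}
```

In the HDFS template this would replace the literal, e.g. `.setHdfsNamenodePort(FreePorts.findFreePort())`. For ZooKeeper the chosen port also has to be reused in every `setZookeeperConnectionString("localhost:" + port)` call, so store it in a variable instead of calling the helper twice.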

HS2

Dependencies

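HiveServer2 depends on HMS (the Hive Metastore) and ZK (ZooKeeper), so all three are started locally; the test then connects to the running HS2 with hive-jdbc.

If you only need HMS, keeping just the hivemetastore dependency is enough.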

```xml
<dependency>
    <groupId>com.github.sakserv</groupId>
    <artifactId>hadoop-mini-clusters-zookeeper</artifactId>
    <version>0.1.16</version>
</dependency>

<dependency>
    <groupId>com.github.sakserv</groupId>
    <artifactId>hadoop-mini-clusters-hivemetastore</artifactId>
    <version>0.1.16</version>
</dependency>

<dependency>
    <groupId>com.github.sakserv</groupId>
    <artifactId>hadoop-mini-clusters-hiveserver2</artifactId>
    <version>0.1.16</version>
</dependency>

<dependency>
    <groupId>org.apache.hive</groupId>
    <artifactId>hive-jdbc</artifactId>
    <!-- HDP 2.6.5 build of hive-jdbc; it is likely not on Maven Central and may need the Hortonworks/Cloudera repository -->
    <version>1.2.1000.2.6.5.0-292</version>
</dependency>
```

Code

The test below simply connects to HS2 and runs a single query, SELECT 1.

```java
import com.github.sakserv.minicluster.impl.HiveLocalMetaStore;
import com.github.sakserv.minicluster.impl.HiveLocalServer2;
import com.github.sakserv.minicluster.impl.ZookeeperLocalCluster;
import java.sql.Connection;
import java.sql.DriverManager;
import java.sql.ResultSet;
import java.sql.ResultSetMetaData;
import java.sql.Statement;
import org.apache.hadoop.hive.conf.HiveConf;
import org.junit.After;
import org.junit.Before;
import org.junit.Test;

public class HS2Test {

    HiveLocalMetaStore hiveLocalMetaStore;
    HiveLocalServer2 hiveLocalServer2;
    ZookeeperLocalCluster zookeeperLocalCluster;

    @Before
    public void setup() throws Exception {
        // 1. ZooKeeper, used by HiveServer2
        zookeeperLocalCluster = new ZookeeperLocalCluster.Builder()
                .setPort(12345)
                .setTempDir("embedded_zookeeper")
                .setZookeeperConnectionString("localhost:12345")
                .setMaxClientCnxns(60)
                .setElectionPort(20001)
                .setQuorumPort(20002)
                .setDeleteDataDirectoryOnClose(false)
                .setServerId(1)
                .setTickTime(2000)
                .build();
        zookeeperLocalCluster.start();

        // 2. Hive Metastore (HMS) backed by an embedded Derby database
        hiveLocalMetaStore = new HiveLocalMetaStore.Builder()
                .setHiveMetastoreHostname("localhost")
                .setHiveMetastorePort(12347)
                .setHiveMetastoreDerbyDbDir("metastore_db")
                .setHiveScratchDir("hive_scratch_dir")
                .setHiveWarehouseDir("warehouse_dir")
                .setHiveConf(new HiveConf())
                .build();
        hiveLocalMetaStore.start();

        // 3. HiveServer2, pointed at the metastore and ZooKeeper started above
        hiveLocalServer2 = new HiveLocalServer2.Builder()
                .setHiveServer2Hostname("localhost")
                .setHiveServer2Port(12348)
                .setHiveMetastoreHostname("localhost")
                .setHiveMetastorePort(12347)
                .setHiveMetastoreDerbyDbDir("metastore_db")
                .setHiveScratchDir("hive_scratch_dir")
                .setHiveWarehouseDir("warehouse_dir")
                .setHiveConf(new HiveConf())
                .setZookeeperConnectionString("localhost:12345")
                .build();
        hiveLocalServer2.start();
    }

    @Test
    public void testSelect() throws Exception {
        Class.forName("org.apache.hive.jdbc.HiveDriver");

        // JDBC URL of the embedded HiveServer2 (host and port match setup())
        String url = "jdbc:hive2://localhost:12348";

        // try-with-resources closes the result set, statement and connection
        try (Connection connection = DriverManager.getConnection(url);
             Statement statement = connection.createStatement();
             ResultSet resultSet = statement.executeQuery("select 1")) {
            ResultSetMetaData meta = resultSet.getMetaData();
            while (resultSet.next()) {
                for (int i = 1; i <= meta.getColumnCount(); i++) {
                    System.out.print(resultSet.getString(i) + " ");
                }
                System.out.println();
            }
        }
    }

    @After
    public void cleanup() throws Exception {
        // Stop in reverse start order; the null checks avoid an NPE if setup() failed before a field was assigned
        if (hiveLocalServer2 != null) {
            hiveLocalServer2.stop();
        }
        if (hiveLocalMetaStore != null) {
            hiveLocalMetaStore.stop();
        }
        if (zookeeperLocalCluster != null) {
            zookeeperLocalCluster.stop();
        }
    }
}
```

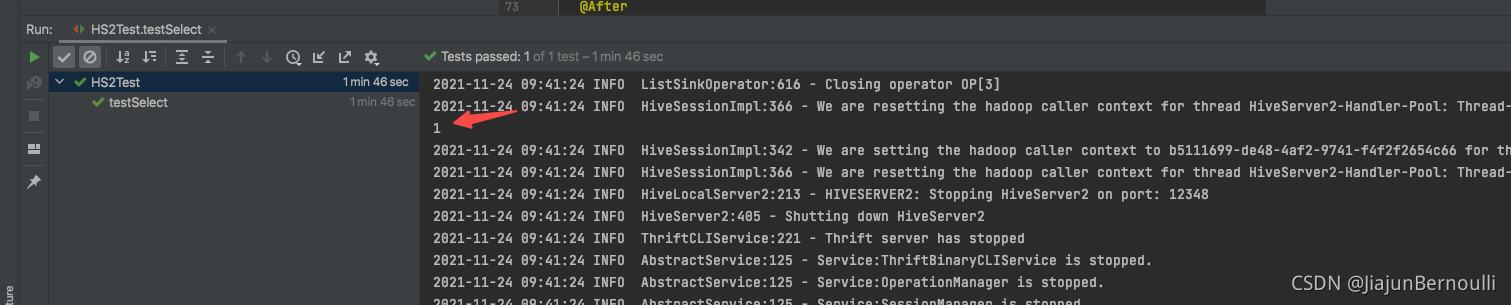

结果
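In the HS2 template, `setup()` starts ZooKeeper, then HMS, then HS2, and `cleanup()` stops them in the reverse order. Keeping those two hand-written lists in sync gets fragile as more components are added, and an exception from one `stop()` call skips every `stop()` after it. A minimal sketch of one alternative, assuming nothing beyond the JDK: record a stop action each time a component starts successfully, then run the recorded actions newest-first. `ServiceStack` and `Action` are hypothetical names, not part of hadoop-mini-clusters.

```java
import java.util.ArrayDeque;
import java.util.Deque;

/**
 * Hypothetical helper (not part of hadoop-mini-clusters): remembers how to stop
 * each component that started successfully and stops them newest-first.
 */
public class ServiceStack {

    /** Like Runnable, but allowed to throw checked exceptions such as those from start()/stop(). */
    @FunctionalInterface
    public interface Action {
        void run() throws Exception;
    }

    private final Deque<Action> stopActions = new ArrayDeque<>();

    /** Runs start; the matching stop action is recorded only if start succeeds. */
    public void start(Action start, Action stop) throws Exception {
        start.run();
        stopActions.push(stop); // push adds to the head, so the newest stop runs first
    }

    /** Runs every recorded stop action, newest first, continuing even if some of them throw. */
    public void stopAll() throws Exception {
        Exception first = null;
        while (!stopActions.isEmpty()) {
            Action stop = stopActions.pop();
            try {
                stop.run();
            } catch (Exception e) {
                if (first == null) {
                    first = e;
                } else {
                    first.addSuppressed(e);
                }
            }
        }
        if (first != null) {
            throw first; // rethrow the first failure, with later ones attached as suppressed
        }
    }
}
```

With this, `setup()` would call something like `stack.start(zookeeperLocalCluster::start, zookeeperLocalCluster::stop)` for each component in dependency order, and `cleanup()` would only call `stack.stopAll()`. Because JUnit 4 still runs `@After` methods when an `@Before` method throws, a failure while starting HMS would still stop the ZooKeeper that was already running.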

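If you need more than this, take a look at hadoop-mini-clusters; someone may already have built exactly what you need. It has nothing for Spark, though, so I found a separate UT example for Spark.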
