1. Scraping the Xici proxy site (xicidaili)

(1) The basic code is as follows:

from urllib import request

# 1. Set the target URL

base_url = 'http://www.xicidaili.com/'

# 2. Send the HTTP request; urlopen returns a file-like object

response = request.urlopen(base_url)

html = response.read()

print(html)

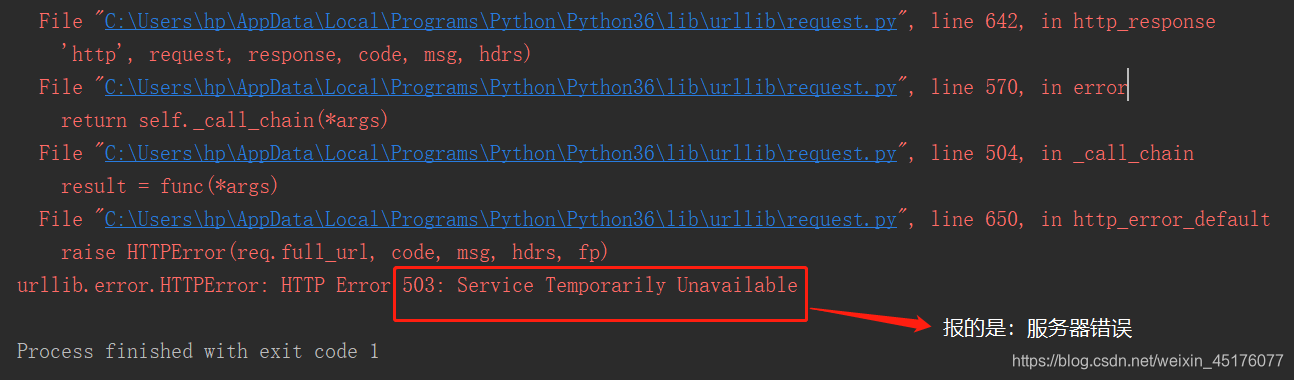

The result is as follows: the request sent with urllib fails with an error.

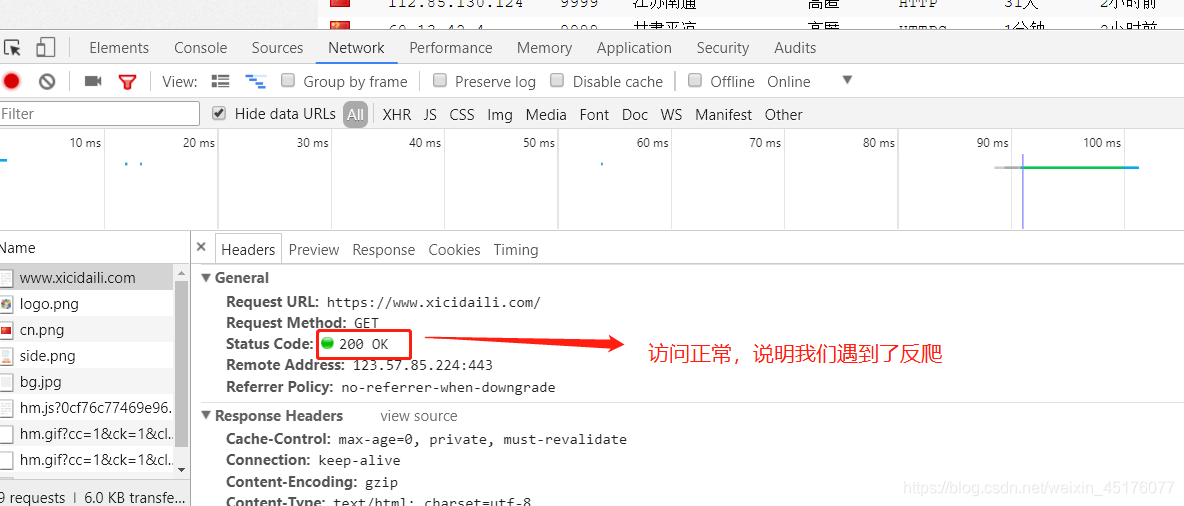
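To look at the failure in code rather than in a traceback, the error can be caught and its status code read. This is only a minimal sketch: it assumes the server answers with an HTTP error status (the exact code is not stated here), and `fetch_status` and its `opener` parameter are hypothetical names introduced for illustration.

```python
from urllib import error, request


def fetch_status(url, opener=request.urlopen):
    """Return (status_code, body_bytes); body is b'' when the server rejects the request."""
    try:
        with opener(url) as response:
            return response.status, response.read()
    except error.HTTPError as exc:
        # HTTPError carries the status code the server answered with.
        return exc.code, b""
```

Calling `fetch_status(base_url)` with the URL from the code above would return the numeric status instead of raising.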

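(2) Next, send the same request from a browser (open the developer tools with F12 or right-click and choose Inspect, then refresh the page):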

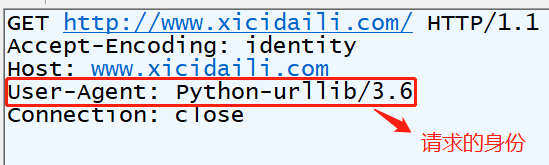

(3) Use a packet-capture tool (Fiddler) to see why the two results differ. This is the request sent by the code in (1), as captured by Fiddler:

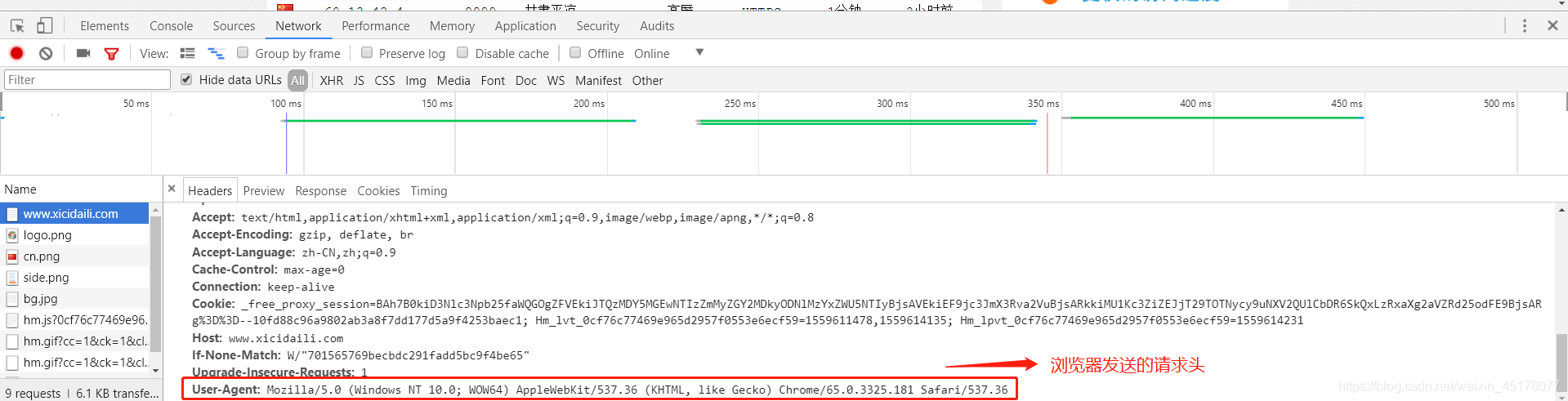
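The same thing can be checked without Fiddler. By default urllib identifies itself with a `Python-urllib/<version>` User-Agent, which is easy for a site to tell apart from a browser. A small sketch that prints the default headers urllib attaches (the variable names are illustrative):

```python
from urllib import request

# build_opener() returns an OpenerDirector whose addheaders list holds
# the headers urllib attaches to every request by default.
opener = request.build_opener()
default_headers = dict(opener.addheaders)

print(default_headers)  # the User-agent value starts with "Python-urllib/"
```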

(4) The request sent by the browser is as follows:

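(5) To get past the anti-scraping check, build a request header that carries a browser identity (steps 2 and 3 in the code). The code is as follows: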

from urllib import request

# 1. Set the target URL

base_url = 'http://www.xicidaili.com/'

# 2. Build a request header that carries a browser identity

headers = {

'User-Agent':'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36'

}

# 3. Build the request object

req = request.Request(url=base_url,headers=headers)

# 4. Send the HTTP request

response = request.urlopen(req)

html = response.read()

html = html.decode('utf-8')

print(html)

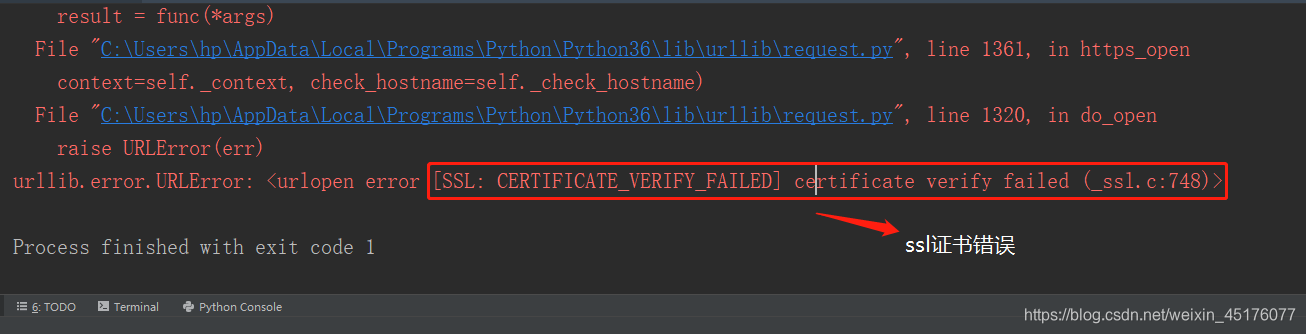
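Before sending, it can help to confirm that the header really is attached to the request object. A minimal sketch using the same URL and User-Agent as above; note that `Request` stores header names in capitalized form, so the lookup key is `User-agent`:

```python
from urllib import request

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36"
}
req = request.Request(url="http://www.xicidaili.com/", headers=headers)

# Request normalizes header names with str.capitalize(),
# so "User-Agent" is stored as "User-agent".
print(req.get_header("User-agent"))
print(req.header_items())
```

Nothing is sent over the network here; the request object is only inspected.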

The code above returns the page's HTML. Note, however, that if the request is sent through the Fiddler proxy, the following error is raised:

Fix: add the following lines, which turn off HTTPS certificate verification for urllib:

import ssl

ssl._create_default_https_context = ssl._create_unverified_context
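The assignment above replaces a private hook and affects every HTTPS request in the process. As an alternative sketch, verification can be disabled for a single call by passing an `ssl.SSLContext` to `urlopen` through its `context` argument. The helper names `make_unverified_context` and `fetch_without_cert_check` are hypothetical, and this is meant for local debugging behind a proxy such as Fiddler, not as the author's method.

```python
import ssl
from urllib import request


def make_unverified_context():
    """Build an SSL context that skips certificate and hostname checks."""
    ctx = ssl.create_default_context()
    ctx.check_hostname = False       # must be disabled before verify_mode is relaxed
    ctx.verify_mode = ssl.CERT_NONE
    return ctx


def fetch_without_cert_check(req):
    """Send one request with verification disabled for this call only."""
    return request.urlopen(req, context=make_unverified_context())
```

Other requests in the same program keep the default, verified behaviour.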

2. The following code picks the User-Agent request header at random

from urllib import request

import random

import ssl

ssl._create_default_https_context = ssl._create_unverified_context

# 1. Set the target URL

base_url = 'http://www.xicidaili.com/'

user_agents = [

'Mozilla/5.0 (Macintosh; Intel Mac OS X 10.6; rv:2.0.1) Gecko/20100101 Firefox/4.0.1',

'Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; en) Presto/2.8.131 Version/11.11',

'Mozilla/5.0 (Macintosh; U; Intel Mac OS X 10_6_8; en-us) AppleWebKit/534.50 (KHTML, like Gecko) Version/5.1 Safari/534.50',

'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/63.0.3239.84 Safari/537.36'

]

# 2. Build a request header that carries a randomly chosen browser identity

headers = {

'User-Agent':random.choice(user_agents)

}

# 3. Build the request object

req = request.Request(url=base_url,headers=headers)

# 4. Send the HTTP request

response = request.urlopen(req)

html = response.read()

html = html.decode('utf-8')

print(html)

random.choice(): returns one randomly selected element from a non-empty sequence.
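In the script above the choice is made once, when the `headers` dict is built, so every request in that run shares one User-Agent. If a fresh choice per request is wanted, the selection can be moved into a function. A minimal sketch; `build_request` and `USER_AGENTS` are hypothetical names, and the list reuses two of the strings from above:

```python
import random
from urllib import request

USER_AGENTS = [
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10.6; rv:2.0.1) Gecko/20100101 Firefox/4.0.1",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/63.0.3239.84 Safari/537.36",
]


def build_request(url, user_agents=USER_AGENTS):
    """Return a Request whose User-Agent is drawn at random on each call."""
    headers = {"User-Agent": random.choice(user_agents)}
    return request.Request(url=url, headers=headers)


# Build a few requests (nothing is sent) and show which identity each one got.
reqs = [build_request("http://www.xicidaili.com/") for _ in range(5)]
for r in reqs:
    print(r.get_header("User-agent"))
```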
