1. Logistic regression: gradient ascent optimization

# coding:utf-8

'''

Created on 2018 5 13

Logistic Regression Working Module

@author: flyfish

'''

from numpy import *

# Load the data set

def loadDataSet():
    dataMat = []; labelMat = []
    # Each line of testSet.txt holds two feature values and an integer class label
    with open(r'E:\bookFiles\machinelearninginaction\Ch05\testSet.txt') as fr:
        for line in fr:
            lineArr = line.strip().split()
            # The leading 1.0 is x0, the constant input that pairs with the weight w0
            dataMat.append([1.0, float(lineArr[0]), float(lineArr[1])])
            labelMat.append(int(lineArr[2]))
    return dataMat, labelMat  # feature rows and the list of labels

def sigmoid(inX):
    # Logistic function: maps any real input (scalar or array) into (0, 1)
    return 1.0/(1+exp(-inX))

# Batch gradient ascent

def gradAscent(dataMatIn, classLabels):
    dataMatrix = mat(dataMatIn)              # NumPy matrix, 100x3 for testSet.txt
    labelMat = mat(classLabels).transpose()  # row matrix turned into a 100x1 column matrix
    m, n = shape(dataMatrix)                 # m = 100 rows, n = 3 columns
    print(type(dataMatrix))                  # numpy.matrixlib.defmatrix.matrix
    print(type(classLabels))                 # list, as returned by loadDataSet
    alpha = 0.001                            # step size toward the optimum
    maxCycles = 500                          # number of iterations
    weights = ones((n, 1))                   # 3x1 coefficients; dataMatrix*weights is 100x1
    for k in range(maxCycles):               # heavy on matrix operations
        # Row i of h is sigmoid(w0*x0 + w1*x1 + w2*x2) for sample i
        h = sigmoid(dataMatrix*weights)      # 100x1 predicted probabilities
        error = (labelMat - h)               # 100x1 difference between labels and predictions
        # Update rule: w := w + alpha * grad f(w).
        # dataMatrix.transpose()*error (3x100 times 100x1) is the gradient of the
        # log-likelihood, which points in the direction of steepest increase, so
        # the coefficients move in the direction indicated by the error.
        weights = weights + alpha * dataMatrix.transpose() * error
    return weights

'''Quick test: load the data, run gradient ascent, print the regression coefficients'''

# if __name__ == '__main__':
#     dataArr, lableMat = loadDataSet()
#     # fitted regression coefficients
#     w = gradAscent(dataArr, lableMat)
#     print(w)

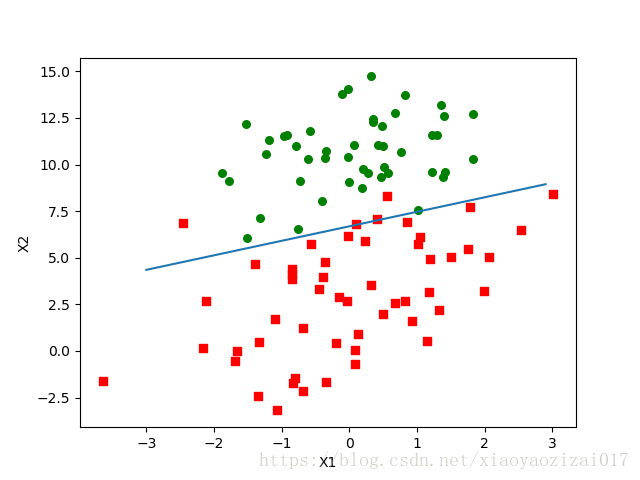
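gradAscent above is built on numpy.matrix, which the NumPy documentation no longer recommends for new code. As a rough sketch only, the same update rule can be written with plain arrays. The function name `grad_ascent`, its keyword arguments, and the four-row data set are assumptions made for illustration; they are not part of the original script.

```python
import numpy as np

def sigmoid(z):
    """Logistic function applied element-wise."""
    return 1.0 / (1.0 + np.exp(-z))

def grad_ascent(X, y, alpha=0.001, max_cycles=500):
    """Batch gradient ascent on the logistic log-likelihood (hypothetical helper)."""
    X = np.asarray(X, dtype=float)       # shape (m, n)
    y = np.asarray(y, dtype=float)       # shape (m,)
    w = np.ones(X.shape[1])              # shape (n,), same starting point as gradAscent
    for _ in range(max_cycles):
        h = sigmoid(X.dot(w))            # predicted probabilities, shape (m,)
        w = w + alpha * X.T.dot(y - h)   # X^T (y - h) is the gradient
    return w

# Made-up data: column 0 is the constant x0 = 1.0
X_demo = np.array([[1.0, -2.0, -1.0],
                   [1.0, -1.0, -2.0],
                   [1.0,  1.0,  2.0],
                   [1.0,  2.0,  1.0]])
y_demo = np.array([0, 0, 1, 1])

w_demo = grad_ascent(X_demo, y_demo)
pred = (sigmoid(X_demo.dot(w_demo)) > 0.5).astype(int)
print(w_demo, pred)
```

With one-dimensional arrays the result is a flat vector of length n, so no getA() conversion is needed before indexing into it.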

def plotBestFit(weights):
    import matplotlib.pyplot as plt
    dataMat, labelMat = loadDataSet()
    dataArr = array(dataMat)
    n = shape(dataArr)[0]                    # number of samples
    print(shape(dataArr))
    xcord1 = []; ycord1 = []                 # points of class 1
    xcord2 = []; ycord2 = []                 # points of class 0
    for i in range(n):
        if int(labelMat[i]) == 1:
            xcord1.append(dataArr[i,1]); ycord1.append(dataArr[i,2])
        else:
            xcord2.append(dataArr[i,1]); ycord2.append(dataArr[i,2])
    fig = plt.figure()
    ax = fig.add_subplot(111)
    ax.scatter(xcord1, ycord1, s=30, c='red', marker='s')  # marker='s' draws squares
    ax.scatter(xcord2, ycord2, s=30, c='green')
    # x is a numpy array running from -3.0 up to (but excluding) 3.0 in steps of 0.1
    x = arange(-3.0, 3.0, 0.1)
    # Recall from section 5.2 that 0 is the dividing point between class 1 and class 0.
    # The fitted line is therefore 0 = w0*x0 + w1*x1 + w2*x2, so x2 = (-w0*x0 - w1*x1)/w2.
    # With x0 = 1, x1 plotted as x and x2 plotted as y, this gives:
    y = (-weights[0]-weights[1]*x)/weights[2]
    # x is an array while gradAscent returns a matrix, so the caller converts
    # the weights with getA(), which turns a matrix into an array.
    ax.plot(x, y)
    plt.xlabel('X1'); plt.ylabel('X2')
    plt.show()

if __name__ == '__main__':
    dataArr, lableMat = loadDataSet()
    # fitted regression coefficients
    w = gradAscent(dataArr, lableMat)
    plotBestFit(w.getA())                    # getA() converts the matrix to an array

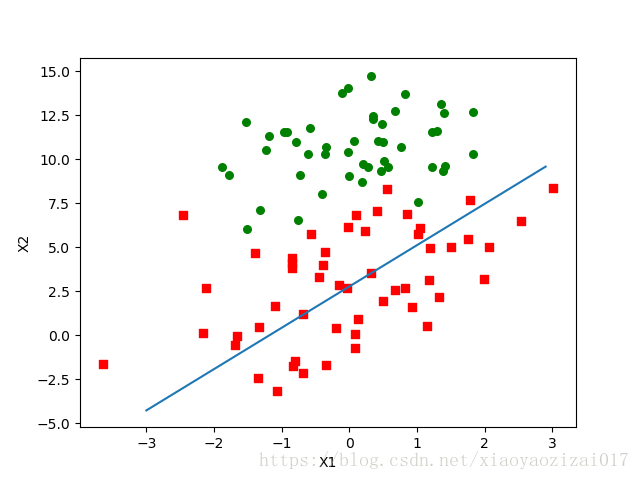
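The line drawn by plotBestFit comes from setting the sigmoid input to zero. The sketch below checks that relation numerically: every point on the computed line should have a score of 0 and a predicted probability of 0.5. The weight values and the helper name `boundary_x2` are made up for illustration.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def boundary_x2(weights, x1):
    """x2 on the line w0 + w1*x1 + w2*x2 = 0; assumes w2 is non-zero."""
    # ravel() accepts both a flat vector and a 3x1 array such as w.getA()
    w0, w1, w2 = np.asarray(weights, dtype=float).ravel()
    return (-w0 - w1 * x1) / w2

w = np.array([4.0, 0.5, -0.6])     # hypothetical coefficients
x1 = np.arange(-3.0, 3.0, 0.1)
x2 = boundary_x2(w, x1)

scores = w[0] + w[1] * x1 + w[2] * x2   # sigmoid input along the line
probs = sigmoid(scores)
print(np.allclose(scores, 0.0), np.allclose(probs, 0.5))
```

Points on one side of the line get a probability above 0.5 and are assigned to class 1; points on the other side go to class 0.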

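2. Stochastic gradient ascent

Batch gradient ascent has to traverse the whole data set for every update of the regression coefficients, so the cost of each update grows with the number of samples and becomes high on large data sets.

The improvement is to update the coefficients with only one sample at a time; this is the stochastic gradient ascent algorithm.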

# Stochastic gradient ascent

def stocGradAscent0(dataMatrix, classLabels):
    m, n = shape(dataMatrix)
    alpha = 0.01
    weights = ones(n)                        # initial coefficients, e.g. [1. 1. 1.]
    for i in range(m):                       # one pass over the samples, i = 0 .. m-1
        h = sigmoid(sum(dataMatrix[i]*weights))  # h is a single number here
        error = classLabels[i] - h           # error is a single number as well
        weights = weights + alpha * error * dataMatrix[i]  # update from one sample
    return weights

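Stochastic gradient ascent differs from batch gradient ascent in two ways:

First, in the batch version h and error are vectors, whereas in the stochastic version they are single numbers. Second, the stochastic version involves no matrix conversion; every variable is a NumPy array.

The fit is not very good, because a single pass over the data performs too few updates.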

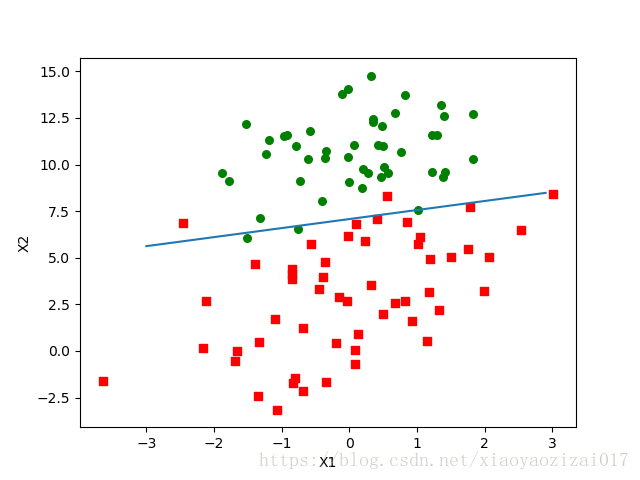
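To make the first difference concrete, the sketch below evaluates both kinds of update at the same coefficients on a made-up three-sample data set (the numbers are illustrative only). The batch error is a vector, the per-sample error is a scalar, and the batch gradient equals the sum of the per-sample gradients.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical data: three samples, column 0 is the constant x0 = 1.0
X = np.array([[1.0,  0.5,  1.5],
              [1.0, -1.0,  2.0],
              [1.0,  2.0, -0.5]])
y = np.array([1.0, 0.0, 1.0])
w = np.ones(3)

# Batch form: h and error are vectors with one entry per sample
h_batch = sigmoid(X.dot(w))
err_batch = y - h_batch
grad_batch = X.T.dot(err_batch)

# Stochastic form: h and error are single numbers for one sample
h_one = sigmoid(np.sum(X[0] * w))
err_one = y[0] - h_one
grad_one = err_one * X[0]

# Summing the per-sample gradients at the same w recovers the batch gradient
grad_sum = sum((y[i] - sigmoid(np.sum(X[i] * w))) * X[i] for i in range(len(y)))
print(h_batch.shape, np.ndim(h_one), np.allclose(grad_batch, grad_sum))
```

In stocGradAscent0 the coefficients change after every sample, so one pass over m samples performs m small updates instead of one large one.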

3. Improved stochastic gradient ascent

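def stocGradAscent1(dataMatrix, classLabels, numIter=150):
    m, n = shape(dataMatrix)
    weights = ones(n)                        # initialize to all ones
    for j in range(numIter):                 # j = 0 .. numIter-1 passes over the data
        # Each pass uses every row exactly once; only the order is random
        dataIndex = list(range(m))           # rows not yet used in this pass
        for i in range(m):                   # i = 0 .. m-1
            # alpha shrinks as j and i grow, which speeds up convergence;
            # the constant 0.0001 keeps it from ever reaching zero
            alpha = 4/(1.0+j+i)+0.0001
            # Pick a random position among the unused rows; random selection
            # reduces periodic fluctuations in the coefficients
            randIndex = int(random.uniform(0, len(dataIndex)))
            sampleIndex = dataIndex[randIndex]  # map the position to a row number
            h = sigmoid(sum(dataMatrix[sampleIndex]*weights))
            error = classLabels[sampleIndex] - h
            weights = weights + alpha * error * dataMatrix[sampleIndex]
            del(dataIndex[randIndex])        # remove it so it is not drawn again
    return weights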
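Two ideas in stocGradAscent1 can be examined in isolation: the step size alpha = 4/(1.0+j+i)+0.0001 and the visit-every-row-once-in-random-order sampling. The sketch below is illustrative only; `step_size` is a hypothetical helper, and the permutation call is one possible way to get a random order, not the method used in the listing.

```python
import numpy as np

def step_size(j, i):
    """Step size used in pass j at position i (same formula as stocGradAscent1)."""
    return 4.0 / (1.0 + j + i) + 0.0001

# Within a single pass the step size falls steadily
first_pass = [step_size(0, i) for i in range(100)]
print(first_pass[0], first_pass[-1])

# It jumps back up at the start of the next pass, so it is not strictly
# decreasing over the whole run, and it never drops to 0.0001 or below
print(step_size(1, 0), step_size(149, 99))

# One way to visit each of m rows exactly once in random order
rng = np.random.RandomState(0)
m = 10
order = rng.permutation(m)
print(order)
```

The small restart at the beginning of each pass lets later passes still move the coefficients noticeably, while the shrinking trend damps the oscillations seen with a fixed step size.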

Result:

def classifyVector(inX, weights):
    # Predict class 1.0 when the estimated probability exceeds 0.5, otherwise 0.0
    prob = sigmoid(sum(inX*weights))
    if prob > 0.5: return 1.0
    else: return 0.0

def colicTest():
    frTrain = open('horseColicTraining.txt'); frTest = open('horseColicTest.txt')
    trainingSet = []; trainingLabels = []
    # Each tab-separated line holds 21 feature values followed by the class label
    for line in frTrain.readlines():
        currLine = line.strip().split('\t')
        lineArr = []
        for i in range(21):
            lineArr.append(float(currLine[i]))
        trainingSet.append(lineArr)
        trainingLabels.append(float(currLine[21]))
    trainWeights = stocGradAscent1(array(trainingSet), trainingLabels, 1000)
    errorCount = 0; numTestVec = 0.0
    for line in frTest.readlines():
        numTestVec += 1.0
        currLine = line.strip().split('\t')
        lineArr = []
        for i in range(21):
            lineArr.append(float(currLine[i]))
        # float() first, so labels written as '1' or '1.0' both parse
        if int(classifyVector(array(lineArr), trainWeights)) != int(float(currLine[21])):
            errorCount += 1
    frTrain.close(); frTest.close()
    errorRate = (float(errorCount)/numTestVec)
    print("the error rate of this test is: %f" % errorRate)
    return errorRate

def multiTest():
    numTests = 10; errorSum = 0.0
    for k in range(numTests):                # training is randomized, so average several runs
        errorSum += colicTest()
    print("after %d iterations the average error rate is: %f" % (numTests, errorSum/float(numTests)))
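colicTest needs the two horse colic files on disk. To try the same train-then-count-errors loop without them, here is a rough sketch on synthetic data. Everything in it is an assumption made for illustration: the two Gaussian clusters, the helper names `train_sgd`, `error_rate` and `make_blobs`, the seeds, and the clipping inside `sigmoid`, which is added only to avoid overflow warnings in exp.

```python
import numpy as np

def sigmoid(z):
    # Clipping is an added safeguard against overflow in exp (not in the original)
    z = np.clip(z, -500, 500)
    return 1.0 / (1.0 + np.exp(-z))

def train_sgd(X, y, num_iter=50, seed=0):
    """Stochastic gradient ascent with a shrinking step size (hypothetical helper)."""
    rng = np.random.RandomState(seed)
    m, n = X.shape
    w = np.ones(n)
    for j in range(num_iter):
        # Visit every row once per pass, in random order
        for i, idx in enumerate(rng.permutation(m)):
            alpha = 4.0 / (1.0 + j + i) + 0.0001
            h = sigmoid(np.dot(X[idx], w))
            w = w + alpha * (y[idx] - h) * X[idx]
    return w

def error_rate(X, y, w):
    """Fraction of rows whose thresholded prediction differs from the label."""
    pred = (sigmoid(X.dot(w)) > 0.5).astype(float)
    return float(np.mean(pred != y))

def make_blobs(rng, n_per_class):
    """Two synthetic 2-D clusters with a constant x0 = 1.0 column prepended."""
    neg = rng.normal(loc=-2.0, scale=1.0, size=(n_per_class, 2))
    pos = rng.normal(loc=2.0, scale=1.0, size=(n_per_class, 2))
    X = np.hstack([np.ones((2 * n_per_class, 1)), np.vstack([neg, pos])])
    y = np.concatenate([np.zeros(n_per_class), np.ones(n_per_class)])
    return X, y

rng = np.random.RandomState(42)
X_train, y_train = make_blobs(rng, 100)
X_test, y_test = make_blobs(rng, 50)

w = train_sgd(X_train, y_train)
rate = error_rate(X_test, y_test, w)
print("the error rate of this test is: %f" % rate)
```

Because the clusters are well separated, the error rate here should be low. Real data such as the horse colic set is much noisier, which is why multiTest averages over several runs.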

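##Summary:

The goal of logistic regression is to find the best-fit parameters of the nonlinear sigmoid function, and an optimization algorithm can carry out that search. The most commonly used one is gradient ascent, which in turn can be simplified to stochastic gradient ascent.

Stochastic gradient ascent needs fewer computing resources, because each update works with the numbers of a single sample rather than with the whole data matrix.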

