Create a Maven project.

1. Add the pom dependency.

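    <dependency>
        <groupId>org.apache.spark</groupId>
        <artifactId>spark-core_2.12</artifactId>
        <version>3.1.1</version>
    </dependency>

The server I set up runs Scala 2.12.10 and Spark 3.1.1, so I depend on the artifact that matches those versions. In a big-data project, keeping the dependency versions consistent with the versions installed on the server is something you have to get right.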
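The `_2.12` suffix in the artifactId is the Scala binary version (major.minor) the Spark artifact was built against, so for Scala 2.x it should equal the first two components of the installed Scala version. Here is a minimal sketch of that naming rule. The class `SparkArtifactName` and its methods are hypothetical helpers made up for illustration; they are not part of Spark or Maven.

```java
public class SparkArtifactName {

    // Derive the Scala binary version (e.g. "2.12") from a full
    // Scala 2.x version string (e.g. "2.12.10").
    static String scalaBinaryVersion(String fullVersion) {
        String[] parts = fullVersion.split("\\.");
        if (parts.length < 2) {
            throw new IllegalArgumentException("Expected major.minor[.patch]: " + fullVersion);
        }
        return parts[0] + "." + parts[1];
    }

    // Build the artifactId that matches the installed Scala version.
    static String artifactId(String module, String scalaVersion) {
        return module + "_" + scalaBinaryVersion(scalaVersion);
    }

    public static void main(String[] args) {
        // prints "spark-core_2.12"
        System.out.println(artifactId("spark-core", "2.12.10"));
    }
}
```

With Scala 2.12.10 on the server, the result is `spark-core_2.12`, which is the artifactId used in the dependency above.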

2. The test class.

    package com.chris.spark;

    import org.apache.spark.SparkConf;
    import org.apache.spark.api.java.JavaPairRDD;
    import org.apache.spark.api.java.JavaRDD;
    import org.apache.spark.api.java.JavaSparkContext;
    import org.apache.spark.api.java.function.FlatMapFunction;
    import org.apache.spark.api.java.function.Function2;
    import org.apache.spark.api.java.function.PairFunction;
    import org.apache.spark.api.java.function.VoidFunction;
    import scala.Tuple2;

    // java.io.Serializable is used instead of scala.Serializable,
    // which is deprecated in newer Scala versions.
    import java.io.Serializable;
    import java.util.Arrays;
    import java.util.Iterator;
    import java.util.stream.Collectors;

    /**
     * @author Chris Chan
     * Create on 2021/5/22 15:16
     * Use for:
     * Explain: WordCount
     */
    public class WordCountTest implements Serializable {

        public static void main(String[] args) {
            new WordCountTest().execute(args);
        }

        private void execute(String[] args) {
            // Configuration: run locally in a single JVM
            SparkConf conf = new SparkConf();
            conf.setAppName("SparkWordCountTest");
            conf.setMaster("local");

            // Create the Spark context
            JavaSparkContext sparkContext = new JavaSparkContext(conf);

            // Load spark.txt from the classpath (e.g. src/main/resources)
            String filePath = getClass().getClassLoader().getResource("spark.txt").getFile();
            JavaRDD<String> linesRDD = sparkContext.textFile(filePath);

            // Map step: split each line into words, dropping empty tokens
            JavaRDD<String> wordJavaRDD = linesRDD.flatMap(new FlatMapFunction<String, String>() {
                @Override
                public Iterator<String> call(String s) throws Exception {
                    return Arrays.stream(s.split(" "))
                            .map(String::trim)
                            .filter(word -> !word.isEmpty())
                            .collect(Collectors.toList()).iterator();
                }
            });

            // Map step: convert each word into a (word, 1) pair
            JavaPairRDD<String, Long> javaPairRDD = wordJavaRDD.mapToPair(new PairFunction<String, String, Long>() {
                @Override
                public Tuple2<String, Long> call(String word) throws Exception {
                    return new Tuple2<>(word, 1L);
                }
            });

            // Reduce step: sum the counts for each word
            JavaPairRDD<String, Long> wordCounts = javaPairRDD.reduceByKey(new Function2<Long, Long, Long>() {
                @Override
                public Long call(Long v1, Long v2) throws Exception {
                    return v1 + v2;
                }
            });

            // Print the results
            wordCounts.foreach(new VoidFunction<Tuple2<String, Long>>() {
                @Override
                public void call(Tuple2<String, Long> stringLongTuple2) throws Exception {
                    System.out.printf("%s,%d\n", stringLongTuple2._1, stringLongTuple2._2);
                }
            });

            // Release the context once the job has finished
            sparkContext.stop();
        }
    }

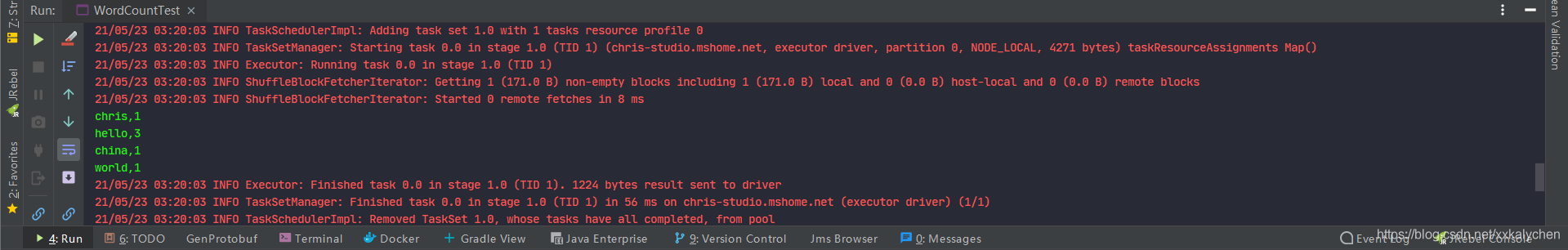

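The program reads data from a local text file, runs it through the map steps (splitting lines into words and turning each word into a `(word, 1)` pair) and the reduce step (summing the counts per word), and prints the resulting word frequencies to the console.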
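To make it easier to see what the three steps compute, here is a minimal plain-Java sketch of the same logic on an in-memory list, using `java.util.stream` rather than Spark. The class `LocalWordCount` and the sample lines are assumptions made up for illustration; nothing here is distributed, and it only mirrors the split, pair and sum steps.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.function.Function;
import java.util.stream.Collectors;

public class LocalWordCount {

    // Split lines into words, drop empty tokens, then count per word.
    // A TreeMap keeps the words sorted so the output order is stable.
    static Map<String, Long> countWords(List<String> lines) {
        return lines.stream()
                // same role as flatMap in the Spark job: line -> words
                .flatMap(line -> Arrays.stream(line.split(" ")))
                .map(String::trim)
                .filter(word -> !word.isEmpty())
                // same role as mapToPair + reduceByKey: group by word and count
                .collect(Collectors.groupingBy(
                        Function.identity(), TreeMap::new, Collectors.counting()));
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("hello spark", "hello  world ");
        countWords(lines).forEach(
                (word, count) -> System.out.printf("%s,%d%n", word, count));
        // prints:
        // hello,2
        // spark,1
        // world,1
    }
}
```

Unlike this sketch, the Spark version does not guarantee any output order, because `foreach` runs per partition.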
