This tutorial assumes you are starting fresh and have no existing Kafka™ or ZooKeeper data. Since Kafka console scripts are different for Unix-based and Windows platforms, on Windows platforms use bin\windows\ instead of bin/, and change the script extension to .bat.
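
As a small illustration of the naming rule above, here is a sketch of a helper that builds the platform-specific script path. `KafkaScripts`, `scriptPath` and `isWindows` are hypothetical names, not part of Kafka. The helper only encodes the bin/<name>.sh versus bin\windows\<name>.bat convention.

```java
import java.util.Locale;

// Hypothetical helper: maps a Kafka script base name (e.g. "kafka-topics") to the
// path used on each platform: bin/<name>.sh on Unix-like systems,
// bin\windows\<name>.bat on Windows.
final class KafkaScripts {
    private KafkaScripts() {}

    static String scriptPath(String baseName, boolean windows) {
        return windows
                ? "bin\\windows\\" + baseName + ".bat"
                : "bin/" + baseName + ".sh";
    }

    // Detects Windows from the standard "os.name" system property.
    static boolean isWindows() {
        return System.getProperty("os.name", "").toLowerCase(Locale.ROOT).startsWith("windows");
    }
}
```

For example, `KafkaScripts.scriptPath("kafka-topics", KafkaScripts.isWindows())` gives `bin/kafka-topics.sh` on Linux or macOS.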

Step 1: Download the code

Download the 0.10.1.0 release and un-tar it.

> tar -xzf kafka_2.11-0.10.1.0.tgz

> cd kafka_2.11-0.10.1.0

Step 2: Start the server

Kafka uses ZooKeeper, so you need to start a ZooKeeper server first if you don't already have one. You can use the convenience script packaged with Kafka to get a quick-and-dirty single-node ZooKeeper instance.

> bin/zookeeper-server-start.sh config/zookeeper.properties

[2013-04-22 15:01:37,495] INFO Reading configuration from: config/zookeeper.properties (org.apache.zookeeper.server.quorum.QuorumPeerConfig)

...

Now start the Kafka server:

> bin/kafka-server-start.sh config/server.properties

[2013-04-22 15:01:47,028] INFO Verifying properties (kafka.utils.VerifiableProperties)

[2013-04-22 15:01:47,051] INFO Property socket.send.buffer.bytes is overridden to 1048576 (kafka.utils.VerifiableProperties)

...

Step 3: Create a topic

Let's create a topic named "test" with a single partition and only one replica:

> bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test

We can now see that topic if we run the list topic command:

> bin/kafka-topics.sh --list --zookeeper localhost:2181

test

Alternatively, instead of manually creating topics you can also configure your brokers to auto-create topics when a non-existent topic is published to.
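
The broker property `auto.create.topics.enable` controls auto-creation and defaults to `true`. The sketch below uses the hypothetical class `AutoCreateCheck`. It reads a broker config such as config/server.properties with `java.util.Properties` and reports the effective setting. It only inspects the file and does not talk to a running broker.

```java
import java.io.IOException;
import java.io.Reader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper: reports whether a broker config enables topic auto-creation.
final class AutoCreateCheck {
    private AutoCreateCheck() {}

    static boolean autoCreateEnabled(Reader brokerConfig) {
        Properties props = new Properties();
        try {
            props.load(brokerConfig);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        // An absent key means the broker default, which is true.
        String value = props.getProperty("auto.create.topics.enable", "true");
        return Boolean.parseBoolean(value.trim());
    }
}
```

For example, pass it `Files.newBufferedReader(Paths.get("config/server.properties"))` to check the config you started the broker with.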

Step 4: Send some messages

Kafka comes with a command line client that will take input from a file or from standard input and send it out as messages to the Kafka cluster. By default, each line will be sent as a separate message.
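
The default framing described above (one line becomes one message) can be modeled in a few lines of Java. This is a hypothetical sketch of that behavior, not the console producer's own code. `LineFraming.toMessages` is an illustrative name.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

// Sketch of the console producer's default framing: each input line is one message value.
final class LineFraming {
    private LineFraming() {}

    static List<String> toMessages(Reader input) {
        List<String> messages = new ArrayList<>();
        try (BufferedReader reader = new BufferedReader(input)) {
            String line;
            while ((line = reader.readLine()) != null) {
                messages.add(line); // one line -> one message
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return messages;
    }
}
```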

Run the producer and then type a few messages into the console to send to the server.

> bin/kafka-console-producer.sh --broker-list localhost:9092 --topic test

This is a message

This is another message

Step 5: Start a consumer

Kafka also has a command line consumer that will dump out messages to standard output.

> bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test --from-beginning

This is a message

This is another message

If you have each of the above commands running in a different terminal then you should now be able to type messages into the producer terminal and see them appear in the consumer terminal.

All of the command line tools have additional options; running the command with no arguments will display usage information documenting them in more detail.

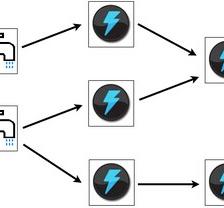

Step 6: Setting up a multi-broker cluster

So far we have been running against a single broker, but that's no fun. For Kafka, a single broker is just a cluster of size one, so nothing much changes other than starting a few more broker instances. But just to get a feel for it, let's expand our cluster to three nodes (still all on our local machine).

First we make a config file for each of the brokers (on Windows use the copy command instead):

> cp config/server.properties config/server-1.properties

> cp config/server.properties config/server-2.properties

Now edit these new files and set the following properties:

config/server-1.properties:

broker.id=1

listeners=PLAINTEXT://:9093

log.dirs=/tmp/kafka-logs-1

config/server-2.properties:

broker.id=2

listeners=PLAINTEXT://:9094

log.dirs=/tmp/kafka-logs-2

The broker.id property is the unique and permanent name of each node in the cluster. We have to override the port and log directory only because we are running these all on the same machine and we want to keep the brokers from all trying to register on the same port or overwrite each other's data.
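
The overrides above follow a simple pattern per broker id. The sketch below expresses that pattern with `java.util.Properties`. `LocalBrokerConfig` is a hypothetical helper. The port scheme (9092 + id) and the /tmp/kafka-logs-<id> path just reproduce this walkthrough's values for brokers 1 and 2.

The sketch sets `log.dirs` rather than `log.dir`. The stock server.properties defines `log.dirs`, and Kafka only falls back to `log.dir` when `log.dirs` is unset. Overriding `log.dirs` is therefore what actually gives each copied config its own directory.

```java
import java.util.Properties;

// Hypothetical helper: per-broker overrides for running several brokers on one machine.
final class LocalBrokerConfig {
    private LocalBrokerConfig() {}

    static Properties overridesFor(int brokerId) {
        Properties p = new Properties();
        p.setProperty("broker.id", Integer.toString(brokerId));          // unique, permanent id
        p.setProperty("listeners", "PLAINTEXT://:" + (9092 + brokerId)); // distinct port
        p.setProperty("log.dirs", "/tmp/kafka-logs-" + brokerId);        // distinct data dir
        return p;
    }

    // Layers the overrides on top of a base config such as config/server.properties.
    static Properties merge(Properties base, Properties overrides) {
        Properties merged = new Properties();
        merged.putAll(base);
        merged.putAll(overrides);
        return merged;
    }
}
```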

We already have ZooKeeper and our single node started, so we just need to start the two new nodes:

> bin/kafka-server-start.sh config/server-1.properties &

...

> bin/kafka-server-start.sh config/server-2.properties &

...

Now create a new topic with a replication factor of three:

> bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 3 --partitions 1 --topic my-replicated-topic

Okay, but now that we have a cluster, how can we know which broker is doing what? To see that, run the "describe topics" command:

> bin/kafka-topics.sh --describe --zookeeper localhost:2181 --topic my-replicated-topic

Topic:my-replicated-topic PartitionCount:1 ReplicationFactor:3 Configs:

Topic: my-replicated-topic Partition: 0 Leader: 1 Replicas: 1,2,0 Isr: 1,2,0

Here is an explanation of the output. The first line gives a summary of all the partitions; each additional line gives information about one partition. Since we have only one partition for this topic, there is only one line.

- "leader" is the node responsible for all reads and writes for the given partition. Each node will be the leader for a randomly selected portion of the partitions.

- "replicas" is the list of nodes that replicate the log for this partition regardless of whether they are the leader or even if they are currently alive.

- "isr" is the set of "in-sync" replicas. This is the subset of the replicas list that is currently alive and caught-up to the leader.
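
The sketch below makes the leader/replicas/isr relationship concrete by parsing one per-partition line of the describe output shown above. `PartitionInfoLine` is a hypothetical class, not a Kafka API. It assumes the "Label: value" layout printed here, with spaces or tabs between fields.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical parser for a line such as
// "Topic: my-replicated-topic Partition: 0 Leader: 1 Replicas: 1,2,0 Isr: 1,2,0".
final class PartitionInfoLine {
    final String topic;
    final int partition;
    final int leader;
    final List<Integer> replicas;
    final List<Integer> isr;

    private PartitionInfoLine(String topic, int partition, int leader,
                              List<Integer> replicas, List<Integer> isr) {
        this.topic = topic;
        this.partition = partition;
        this.leader = leader;
        this.replicas = replicas;
        this.isr = isr;
    }

    static PartitionInfoLine parse(String line) {
        // Tokens alternate between a label ("Leader:") and its value ("1").
        String[] tokens = line.trim().split("\\s+");
        Map<String, String> fields = new HashMap<>();
        for (int i = 0; i + 1 < tokens.length; i += 2) {
            fields.put(tokens[i], tokens[i + 1]);
        }
        return new PartitionInfoLine(
                require(fields, "Topic:"),
                Integer.parseInt(require(fields, "Partition:")),
                Integer.parseInt(require(fields, "Leader:")),
                ids(require(fields, "Replicas:")),
                ids(require(fields, "Isr:")));
    }

    private static String require(Map<String, String> fields, String label) {
        String value = fields.get(label);
        if (value == null) {
            throw new IllegalArgumentException("missing field " + label);
        }
        return value;
    }

    // "1,2,0" -> [1, 2, 0]; the order is kept because replica order matters.
    private static List<Integer> ids(String csv) {
        return Arrays.stream(csv.split(","))
                .map(String::trim)
                .map(Integer::valueOf)
                .collect(Collectors.toList());
    }

    // The ISR is, by definition, a subset of the replica list.
    boolean isrIsSubsetOfReplicas() {
        return replicas.containsAll(isr);
    }
}
```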

Note that in my example node 1 is the leader for the only partition of the topic.

We can run the same command on the original topic we created to see where it is:

> bin/kafka-topics.sh --describe --zookeeper localhost:2181 --topic test

Topic:test PartitionCount:1 ReplicationFactor:1 Configs:

Topic: test Partition: 0 Leader: 0 Replicas: 0 Isr: 0

So there is no surprise there: the original topic has no replicas other than its leader and lives on server 0, the only server in our cluster when we created it.

Let's publish a few messages to our new topic:

> bin/kafka-console-producer.sh --broker-list localhost:9092 --topic my-replicated-topic

...

my test message 1

my test message 2

^C

Now let's consume these messages:

> bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --from-beginning --topic my-replicated-topic

...

my test message 1

my test message 2

^C

Now let's test out fault-tolerance. Broker 1 was acting as the leader so let's kill it:

> ps aux | grep server-1.properties

7564 ttys002 0:15.91 /System/Library/Frameworks/JavaVM.framework/Versions/1.8/Home/bin/java...

> kill -9 7564

On Windows use:

> wmic process get processid,caption,commandline | find "java.exe" | find "server-1.properties"

java.exe java -Xmx1G -Xms1G -server -XX:+UseG1GC ... build\libs\kafka_2.10-0.10.1.0.jar" kafka.Kafka config\server-1.properties 644

> taskkill /pid 644 /f

Leadership has switched to one of the followers, and node 1 is no longer in the in-sync replica set:

> bin/kafka-topics.sh --describe --zookeeper localhost:2181 --topic my-replicated-topic

Topic:my-replicated-topic PartitionCount:1 ReplicationFactor:3 Configs:

Topic: my-replicated-topic Partition: 0 Leader: 2 Replicas: 1,2,0 Isr: 2,0
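
In this output the new leader is 2. Broker 2 is the first broker in the replica list 1,2,0 that is still alive and in the ISR, and the ISR has shrunk to the surviving members. The sketch below models that rule in plain Java under our own simplifying assumptions. `LeaderElectionSketch` is hypothetical. The real controller also deals with unclean leader election, ZooKeeper state and timing, all of which are ignored here.

```java
import java.util.ArrayList;
import java.util.Collection;
import java.util.List;
import java.util.Optional;
import java.util.Set;

// Simplified model (not Kafka's controller code) of the failover seen above.
final class LeaderElectionSketch {
    private LeaderElectionSketch() {}

    // Walk the assigned replicas in order; the first one that is alive and in sync
    // becomes leader. Empty means no live in-sync replica is left; what Kafka does
    // then (see unclean.leader.election.enable) is out of scope for this sketch.
    static Optional<Integer> electLeader(List<Integer> replicas,
                                         Collection<Integer> isr,
                                         Set<Integer> liveBrokers) {
        for (Integer id : replicas) {
            if (liveBrokers.contains(id) && isr.contains(id)) {
                return Optional.of(id);
            }
        }
        return Optional.empty();
    }

    // In this model, dead brokers drop out of the ISR; survivors keep their order.
    static List<Integer> shrinkIsr(List<Integer> isr, Set<Integer> liveBrokers) {
        List<Integer> remaining = new ArrayList<>();
        for (Integer id : isr) {
            if (liveBrokers.contains(id)) {
                remaining.add(id);
            }
        }
        return remaining;
    }
}
```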

But the messages are still available for consumption even though the leader that took the writes originally is down:

> bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --from-beginning --topic my-replicated-topic

...

my test message 1

my test message 2

^C

Step 7: Use Kafka Connect to import/export data

Writing data from the console and writing it back to the console is a convenient place to start, but you'll probably want to use data from other sources or export data from Kafka to other systems. For many systems, instead of writing custom integration code you can use Kafka Connect to import or export data.

Kafka Connect is a tool included with Kafka that imports and exports data to Kafka. It is an extensible tool that runs connectors, which implement the custom logic for interacting with an external system. In this quickstart we'll see how to run Kafka Connect with simple connectors that import data from a file to a Kafka topic and export data from a Kafka topic to a file.

First, we'll start by creating some seed data to test with:

> echo -e "foo\nbar" > test.txt

Next, we'll start two connectors running in standalone mode, which means they run in a single, local, dedicated process. We provide three configuration files as parameters. The first is always the configuration for the Kafka Connect process, containing common configuration such as the Kafka brokers to connect to and the serialization format for data. The remaining configuration files each specify a connector to create. These files include a unique connector name, the connector class to instantiate, and any other configuration required by the connector.
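
The requirement above is that each connector file carries a unique name and a connector class. You can check this before launching. The sketch below is a hypothetical pre-flight validator (`ConnectorConfigCheck`) that uses the `name` and `connector.class` keys. Kafka Connect performs its own validation, and this sketch does not replace it.

```java
import java.util.HashSet;
import java.util.List;
import java.util.Properties;
import java.util.Set;

// Hypothetical check: every connector config needs a unique "name" and a "connector.class".
final class ConnectorConfigCheck {
    private ConnectorConfigCheck() {}

    static void validate(List<Properties> connectorConfigs) {
        Set<String> names = new HashSet<>();
        for (Properties cfg : connectorConfigs) {
            String name = cfg.getProperty("name");
            String connectorClass = cfg.getProperty("connector.class");
            if (name == null || name.trim().isEmpty()) {
                throw new IllegalArgumentException("connector config is missing 'name'");
            }
            if (connectorClass == null || connectorClass.trim().isEmpty()) {
                throw new IllegalArgumentException("connector '" + name + "' is missing 'connector.class'");
            }
            if (!names.add(name.trim())) {
                throw new IllegalArgumentException("duplicate connector name: " + name);
            }
        }
    }
}
```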

> bin/connect-standalone.sh config/connect-standalone.properties config/connect-file-source.properties config/connect-file-sink.properties

These sample configuration files, included with Kafka, use the default local cluster configuration you started earlier and create two connectors: the first is a source connector that reads lines from an input file and produces each to a Kafka topic and the second is a sink connector that reads messages from a Kafka topic and produces each as a line in an output file.

During startup you'll see a number of log messages, including some indicating that the connectors are being instantiated. Once the Kafka Connect process has started, the source connector should start reading lines from test.txt and producing them to the topic connect-test, and the sink connector should start reading messages from the topic connect-test and writing them to the file test.sink.txt. We can verify the data has been delivered through the entire pipeline by examining the contents of the output file:

> cat test.sink.txt

foo

bar

Note that the data is being stored in the Kafka topic connect-test, so we can also run a console consumer to see the data in the topic (or use custom consumer code to process it):

> bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic connect-test --from-beginning

{"schema":{"type":"string","optional":false},"payload":"foo"}

{"schema":{"type":"string","optional":false},"payload":"bar"}

...
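
Each record above is wrapped in a `schema`/`payload` envelope. As far as we know, this comes from the worker config. The sample config/connect-standalone.properties appears to use `org.apache.kafka.connect.json.JsonConverter` with schemas enabled, but check your copy. The sketch below (`StringEnvelope`, a hypothetical name) only reproduces that envelope for a non-optional string value. The real converter covers all Connect schema types.

```java
// Hypothetical sketch of the schema/payload envelope for a plain string value.
final class StringEnvelope {
    private StringEnvelope() {}

    static String wrap(String value) {
        return "{\"schema\":{\"type\":\"string\",\"optional\":false},\"payload\":\""
                + escape(value) + "\"}";
    }

    // Minimal JSON string escaping: quotes, backslashes and control characters.
    private static String escape(String s) {
        StringBuilder sb = new StringBuilder(s.length());
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"': sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\n': sb.append("\\n"); break;
                case '\r': sb.append("\\r"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) {
                        sb.append(String.format("\\u%04x", (int) c));
                    } else {
                        sb.append(c);
                    }
            }
        }
        return sb.toString();
    }
}
```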

The connectors continue to process data, so we can add data to the file and see it move through the pipeline:

> echo "Another line" >> test.txt

You should see the line appear in the console consumer output and in the sink file.
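
One way to picture why appending to test.txt works: the source keeps track of how far it has read, and the sink keeps appending whatever reaches the topic. The sketch below models that loop under simplifying assumptions. An in-memory list stands in for connect-test, positions are counted in lines, and the input file is assumed to only grow. Class and method names are hypothetical, and this is not how FileStreamSource or FileStreamSink are implemented.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of the test.txt -> connect-test -> test.sink.txt pipeline.
final class FilePipelineSketch {
    private final List<String> topic = new ArrayList<>(); // stands in for connect-test
    private int sourceLinesRead = 0;    // how far the "source" has read the input file
    private int sinkRecordsWritten = 0; // how far the "sink" has consumed the topic

    // Source side: produce only the lines appended since the previous poll.
    int pollSource(Path input) {
        List<String> lines;
        try {
            lines = Files.readAllLines(input, StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        if (lines.size() < sourceLinesRead) {
            throw new IllegalStateException("input shrank; this sketch assumes append-only input");
        }
        List<String> fresh = new ArrayList<>(lines.subList(sourceLinesRead, lines.size()));
        topic.addAll(fresh);
        sourceLinesRead = lines.size();
        return fresh.size();
    }

    // Sink side: append every record not yet written, one per line.
    int flushSink(Path output) {
        List<String> pending = new ArrayList<>(topic.subList(sinkRecordsWritten, topic.size()));
        try {
            Files.write(output, pending, StandardCharsets.UTF_8,
                    StandardOpenOption.CREATE, StandardOpenOption.APPEND);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        sinkRecordsWritten = topic.size();
        return pending.size();
    }
}
```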

Step 8: Use Kafka Streams to process data

Kafka Streams is a client library of Kafka for real-time stream processing and analyzing data stored in Kafka brokers. This quickstart example will demonstrate how to run a streaming application coded in this library. Here is the gist of the WordCountDemo example code (converted to use Java 8 lambda expressions for easy reading).

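import java.util.Arrays;
import org.apache.kafka.streams.KeyValue;
import org.apache.kafka.streams.kstream.KTable;

// textLines is the KStream<String, String> read from the input topic (setup omitted).
KTable<String, Long> wordCounts = textLines
    // Split each text line into words on runs of non-word characters (regex \W+).
    .flatMapValues(value -> Arrays.asList(value.toLowerCase().split("\\W+")))
    // Ensure the words are available as record keys for the next aggregate operation.
    .map((key, value) -> new KeyValue<>(value, value))
    // Count the occurrences of each word (record key) and store the results into a table named "Counts".
    .countByKey("Counts");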

It implements the WordCount algorithm, which computes a word occurrence histogram from the input text. However, unlike other WordCount examples you might have seen before that operate on bounded data, the WordCount demo application behaves slightly differently because it is designed to operate on an infinite, unbounded stream of data. Similar to the bounded variant, it is a stateful algorithm that tracks and updates the counts of words. However, since it must assume potentially unbounded input data, it will periodically output its current state and results while continuing to process more data because it cannot know when it has processed "all" the input data.
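
The difference between bounded and unbounded WordCount is easiest to see without Kafka at all. The plain-Java sketch below keeps the word counts as state. For every incoming line, it immediately emits the updated count of each word it touches instead of waiting for a final result. `StreamingWordCount` is a hypothetical name. It mirrors the `toLowerCase().split("\\W+")` tokenization of the snippet above but emits on every record, whereas a real Streams application may forward updates less often.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;

// Plain-Java sketch (no Kafka) of stateful word counting over an unbounded input.
final class StreamingWordCount {
    private final Map<String, Long> counts = new HashMap<>(); // state: word -> count so far

    // Processes one input line and returns the (word, newCount) updates it causes.
    List<Map.Entry<String, Long>> process(String line) {
        List<Map.Entry<String, Long>> updates = new ArrayList<>();
        for (String word : line.toLowerCase(Locale.ROOT).split("\\W+")) {
            if (word.isEmpty()) {
                continue; // split yields an empty token if the line starts with a separator
            }
            long updated = counts.merge(word, 1L, Long::sum);
            updates.add(new SimpleEntry<>(word, updated));
        }
        return updates;
    }
}
```

Feeding it the three lines of file-input.txt yields eleven updates. The last update for "kafka" is 3 and the last for "streams" is 2, which matches the final counts the demo prints.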

We will now prepare input data to a Kafka topic, which will subsequently be processed by a Kafka Streams application.

> echo -e "all streams lead to kafka\nhello kafka streams\njoin kafka summit" > file-input.txt

Or on Windows:

> echo all streams lead to kafka> file-input.txt

> echo hello kafka streams>> file-input.txt

> echo|set /p=join kafka summit>> file-input.txt

Next, we send this input data to the input topic named streams-file-input using the console producer (in practice, stream data will likely be flowing continuously into Kafka where the application will be up and running):

> bin/kafka-topics.sh --create \
    --zookeeper localhost:2181 \
    --replication-factor 1 \
    --partitions 1 \
    --topic streams-file-input

> bin/kafka-console-producer.sh --broker-list localhost:9092 --topic streams-file-input < file-input.txt

We can now run the WordCount demo application to process the input data:

> bin/kafka-run-class.sh org.apache.kafka.streams.examples.wordcount.WordCountDemo

There won't be any STDOUT output except log entries as the results are continuously written back into another topic named streams-wordcount-output in Kafka. The demo will run for a few seconds and then, unlike typical stream processing applications, terminate automatically.

We can now inspect the output of the WordCount demo application by reading from its output topic:

> bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 \
    --topic streams-wordcount-output \
    --from-beginning \
    --formatter kafka.tools.DefaultMessageFormatter \
    --property print.key=true \
    --property print.value=true \
    --property key.deserializer=org.apache.kafka.common.serialization.StringDeserializer \
    --property value.deserializer=org.apache.kafka.common.serialization.LongDeserializer

with the following output data being printed to the console:

all 1

lead 1

to 1

hello 1

streams 2

join 1

kafka 3

summit 1

Here, the first column is the Kafka message key in java.lang.String format, and the second column is the message value in java.lang.Long format, matching the StringDeserializer and LongDeserializer passed to the console consumer above. Note that the output is actually a continuous stream of updates, where each data record (i.e. each line in the original output above) is an updated count of a single word, aka record key, such as "kafka". For multiple records with the same key, each later record is an update of the previous one.
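
Reading the output topic as a table therefore means keeping only the latest value per key. The sketch below folds such an update stream into a map. `LatestPerKey` is a hypothetical helper, not part of Kafka Streams.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper: collapses a stream of (word, count) updates into current counts.
final class LatestPerKey {
    private LatestPerKey() {}

    static Map<String, Long> latest(List<Map.Entry<String, Long>> records) {
        Map<String, Long> table = new LinkedHashMap<>();
        for (Map.Entry<String, Long> r : records) {
            table.put(r.getKey(), r.getValue()); // a later record overwrites the earlier one
        }
        return table;
    }
}
```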

Now you can write more input messages to the streams-file-input topic and observe additional messages added to the streams-wordcount-output topic, reflecting updated word counts (e.g., using the console producer and the console consumer, as described above).

You can stop the console consumer via Ctrl-C.
