1 Preparation

- zookeeper1
- zookeeper2
- zookeeper3
- namenode1
- namenode2

2 Configuration

2.1 core-site.xml

```xml
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://mycluster</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/tmp/hadoop2.0</value>
  </property>
  <property>
    <name>ha.zookeeper.quorum</name>
    <value>zookeeper1:2181,zookeeper2:2181,zookeeper3:2181</value>
  </property>
</configuration>
```
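The `ha.zookeeper.quorum` value is the list of ZooKeeper hosts from section 1, each followed by the client port and joined with commas. As a minimal sketch, the string could be assembled like this; the helper name `build_quorum` is hypothetical and not part of Hadoop.

```shell
# build_quorum PORT HOST...
# Joins the given hosts as host:port pairs separated by commas.
# (Hypothetical helper for illustration only.)
build_quorum() {
  port="$1"
  shift
  out=""
  for host in "$@"; do
    # Prepend a comma only when something has already been collected.
    out="${out:+$out,}${host}:${port}"
  done
  printf '%s\n' "$out"
}

build_quorum 2181 zookeeper1 zookeeper2 zookeeper3
```

Generating the value this way keeps the host list in one place if ZooKeeper nodes are added or renamed later.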

2.2 hdfs-site.xml

```xml
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>/home/dfs/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>/home/dfs/data</value>
  </property>
  <property>
    <name>dfs.permissions</name>
    <value>false</value>
  </property>
  <property>
    <name>dfs.nameservices</name>
    <value>mycluster</value>
  </property>
  <property>
    <name>dfs.ha.namenodes.mycluster</name>
    <value>nn1,nn2</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.mycluster.nn1</name>
    <value>namenode1:8020</value>
  </property>
  <property>
    <name>dfs.namenode.rpc-address.mycluster.nn2</name>
    <value>namenode2:8020</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.mycluster.nn1</name>
    <value>namenode1:50070</value>
  </property>
  <property>
    <name>dfs.namenode.http-address.mycluster.nn2</name>
    <value>namenode2:50070</value>
  </property>
  <property>
    <name>dfs.namenode.shared.edits.dir</name>
    <value>qjournal://journalnode1:8485;journalnode2:8485;journalnode3:8485/mycluster</value>
  </property>
  <property>
    <name>dfs.journalnode.edits.dir</name>
    <value>/home/dfs/journal</value>
  </property>
  <property>
    <name>dfs.client.failover.proxy.provider.mycluster</name>
    <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
  </property>
  <property>
    <name>dfs.ha.fencing.methods</name>
    <value>shell(/bin/true)</value>
  </property>
  <property>
    <name>dfs.ha.automatic-failover.enabled</name>
    <value>true</value>
  </property>
</configuration>
```
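Several keys in hdfs-site.xml embed the nameservice ID (mycluster) and the NameNode IDs (nn1, nn2), so a typo in either silently produces a key that Hadoop never reads. The sketch below prints the key names one would expect for a given nameservice, which can be compared against the file by eye; the function name `ha_keys` is hypothetical.

```shell
# ha_keys NAMESERVICE NN_ID...
# Prints the HA-related property names that depend on the nameservice
# and NameNode IDs. (Hypothetical helper for illustration only.)
ha_keys() {
  ns="$1"
  shift
  echo "dfs.ha.namenodes.${ns}"
  for nn in "$@"; do
    echo "dfs.namenode.rpc-address.${ns}.${nn}"
    echo "dfs.namenode.http-address.${ns}.${nn}"
  done
  echo "dfs.client.failover.proxy.provider.${ns}"
}

ha_keys mycluster nn1 nn2
```

The nameservice passed here should also match the authority in `fs.defaultFS` (hdfs://mycluster) and the suffix of the qjournal URI.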

- dfs.ha.automatic-failover.enabled
- dfs.ha.fencing.methods

3 Initialize in ZooKeeper

```shell
$HADOOP_HOME/bin/hdfs zkfc -formatZK
```

4 Start zkfc (ZooKeeper failover controller)

```shell
$HADOOP_HOME/sbin/hadoop-daemon.sh start zkfc
```

5 Start HDFS
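No command is given for this step. One plausible sequence, assuming a stock Hadoop 2.x layout where `sbin/start-dfs.sh` is available, is to start HDFS and then query the state of both NameNodes. The `run` wrapper, the `DRY_RUN` switch, and the fallback install path are assumptions added for illustration; by default the script only prints the commands.

```shell
HADOOP_HOME="${HADOOP_HOME:-/opt/hadoop}"  # assumed install path if unset
DRY_RUN="${DRY_RUN:-1}"                    # 1 = print commands only

# Print a command, and execute it unless DRY_RUN is 1.
run() {
  echo "+ $*"
  [ "$DRY_RUN" = "1" ] || "$@"
}

start_hdfs() {
  # Start the HDFS daemons configured for this cluster.
  run "$HADOOP_HOME/sbin/start-dfs.sh"
  # Ask each NameNode whether it is active or standby.
  run "$HADOOP_HOME/bin/hdfs" haadmin -getServiceState nn1
  run "$HADOOP_HOME/bin/hdfs" haadmin -getServiceState nn2
}

start_hdfs
```

Setting `DRY_RUN=0` before running the script would execute the commands instead of printing them.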

6 Test

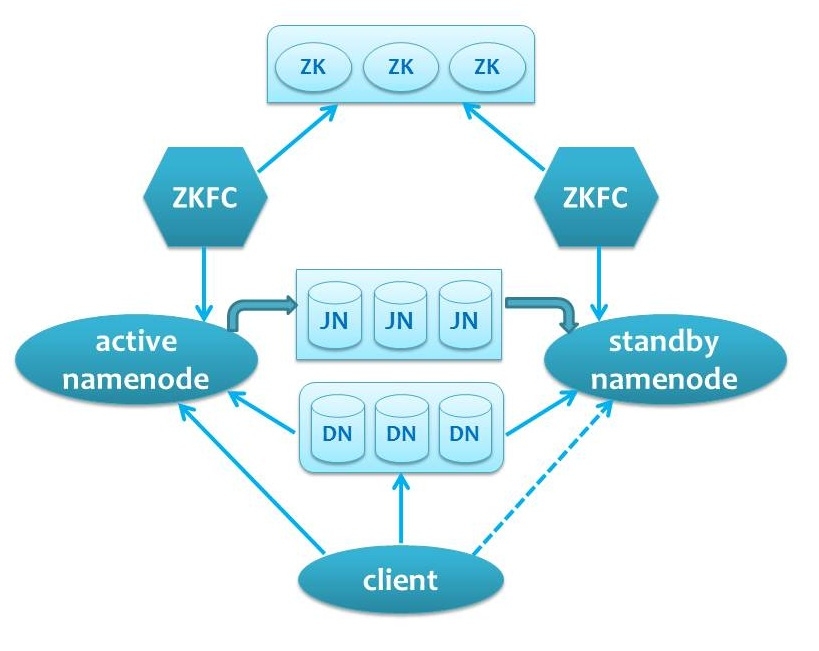
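A failover test usually amounts to noting which NameNode is active, stopping it, and confirming that the other one takes over. As a sketch, the active node can be found by asking each NameNode for its state with `hdfs haadmin -getServiceState`; the function name `find_active` and the `HDFS` override variable are hypothetical.

```shell
HDFS="${HDFS:-hdfs}"  # command used to reach the hdfs CLI (override is an assumption)

# find_active NN_ID...
# Prints the first NameNode ID that reports "active"; returns 1 if none does.
find_active() {
  for nn in "$@"; do
    state="$($HDFS haadmin -getServiceState "$nn" 2>/dev/null || true)"
    if [ "$state" = "active" ]; then
      echo "$nn"
      return 0
    fi
  done
  return 1
}

# Example: find_active nn1 nn2
```

Running `find_active nn1 nn2` before and after stopping the active NameNode should print different IDs if automatic failover worked.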

7 Architecture diagram of HA automatic failover with QJM

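8 Practical tips

- A few machines in the Hadoop cluster can double as ZooKeeper nodes, dedicated to HA.
- In practice, manual failover is recommended: it is more reliable and makes problems easier to track down.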
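For the manual approach, a switch from one NameNode to the other can be requested with `hdfs haadmin -failover`. The sketch below assumes the NameNode IDs nn1 and nn2 from hdfs-site.xml and that `dfs.ha.automatic-failover.enabled` has been set to false; the `manual_failover` name and the `DRY_RUN` switch are hypothetical, and by default the command is only printed.

```shell
DRY_RUN="${DRY_RUN:-1}"  # 1 = print the command only

# manual_failover FROM TO
# Requests a failover from NameNode FROM to NameNode TO.
manual_failover() {
  from="$1"
  to="$2"
  if [ "$DRY_RUN" = "1" ]; then
    echo "hdfs haadmin -failover $from $to"
  else
    hdfs haadmin -failover "$from" "$to"
  fi
}

manual_failover nn1 nn2
```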
