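As mentioned earlier, a DStream is internally a sequence of RDDs, one per point in time, so DStreams support most of the transformations available on RDDs. Some of these operations are explained in more detail below.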

| Transformation | Meaning |
|---|---|
| map(func) | Return a new DStream by passing each element of the source DStream through a function func. |
| flatMap(func) | Similar to map, but each input item can be mapped to 0 or more output items. |
| filter(func) | Return a new DStream by selecting only the records of the source DStream on which func returns true. |
| repartition(numPartitions) | Changes the level of parallelism in this DStream by creating more or fewer partitions. |
| union(otherStream) | Return a new DStream that contains the union of the elements in the source DStream and otherStream. |
| count() | Return a new DStream of single-element RDDs by counting the number of elements in each RDD of the source DStream. |
| reduce(func) | Return a new DStream of single-element RDDs by aggregating the elements in each RDD of the source DStream using a function func (which takes two arguments and returns one). The function should be associative and commutative so that it can be computed in parallel. |
| countByValue() | When called on a DStream of elements of type K, return a new DStream of (K, Long) pairs where the value of each key is its frequency in each RDD of the source DStream. |
| reduceByKey(func, [numTasks]) | When called on a DStream of (K, V) pairs, return a new DStream of (K, V) pairs where the values for each key are aggregated using the given reduce function. Note: By default, this uses Spark's default number of parallel tasks (2 for local mode, and in cluster mode the number is determined by the config property spark.default.parallelism) to do the grouping. You can pass an optional numTasks argument to set a different number of tasks. |
| join(otherStream, [numTasks]) | When called on two DStreams of (K, V) and (K, W) pairs, return a new DStream of (K, (V, W)) pairs with all pairs of elements for each key. |
| cogroup(otherStream, [numTasks]) | When called on a DStream of (K, V) and (K, W) pairs, return a new DStream of (K, Seq[V], Seq[W]) tuples. |
| transform(func) | Return a new DStream by applying an RDD-to-RDD function to every RDD of the source DStream. This can be used to do arbitrary RDD operations on the DStream. |
| updateStateByKey(func) | Return a new "state" DStream where the state for each key is updated by applying the given function on the previous state of the key and the new values for the key. This can be used to maintain arbitrary state data for each key. |
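
The phrase "each RDD of the source DStream" recurs throughout the table. As a rough illustration, here is a minimal pure-Python sketch (not PySpark) in which a DStream is modeled as a list of batches, each batch standing in for one RDD. The helpers `flat_map`, `map_stream` and `reduce_by_key` are hypothetical names made up for this sketch; they only mimic the per-batch behavior described above.

```python
# Model (an assumption for illustration): a DStream is a list of batches,
# and each batch is a plain list standing in for one RDD.
lines_stream = [
    ["a b a", "c"],   # batch at time t1
    ["b b"],          # batch at time t2
]

def flat_map(stream, func):
    """Apply func to every element of every batch and flatten the results."""
    return [[out for item in batch for out in func(item)] for batch in stream]

def map_stream(stream, func):
    """Apply func to every element of every batch."""
    return [[func(item) for item in batch] for batch in stream]

def reduce_by_key(stream, func):
    """Aggregate values per key, independently within each batch."""
    result = []
    for batch in stream:
        acc = {}
        for key, value in batch:
            acc[key] = func(acc[key], value) if key in acc else value
        result.append(sorted(acc.items()))
    return result

words = flat_map(lines_stream, lambda line: line.split(" "))
pairs = map_stream(words, lambda word: (word, 1))
word_counts = reduce_by_key(pairs, lambda x, y: x + y)
print(word_counts)
```

Note that the counts are not carried over from one batch to the next: "b" is counted once in the first batch and twice in the second, and the two results stay separate.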

UpdateStateByKey operation
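
To make the state-keeping behavior concrete, the following pure-Python sketch simulates what happens to the per-key state as batches arrive. It is not the Spark implementation: `update_state_by_key` is a hypothetical stand-in written for this example, and it leaves out details such as checkpointing and the removal of keys whose update function returns None.

```python
def update_function(new_values, running_count):
    # Same logic as updateFunction in this section: start from 0, then add.
    if running_count is None:
        running_count = 0
    return sum(new_values, running_count)

def update_state_by_key(batches, update_func):
    """Hypothetical stand-in: return a snapshot of the state after each batch."""
    state = {}
    snapshots = []
    for batch in batches:
        # Group this batch's values by key.
        grouped = {}
        for key, value in batch:
            grouped.setdefault(key, []).append(value)
        # Every key seen so far is updated, even if it has no new values.
        for key in set(state) | set(grouped):
            state[key] = update_func(grouped.get(key, []), state.get(key))
        snapshots.append(dict(state))
    return snapshots

pair_batches = [
    [("a", 1), ("b", 1), ("a", 1)],  # first batch
    [("b", 1)],                      # second batch: no new data for "a"
]
snapshots = update_state_by_key(pair_batches, update_function)
print(snapshots)
```

In the second batch the key "a" receives an empty list of new values, so its running count is simply carried forward.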

    def updateFunction(newValues, runningCount):
        if runningCount is None:
            runningCount = 0
        # add the new values with the previous running count to get the new count
        return sum(newValues, runningCount)

    runningCounts = pairs.updateStateByKey(updateFunction)

Transform operation
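
The idea behind transform is that an arbitrary batch-to-batch function is applied to every batch, which makes it possible to join the stream with a static dataset. The sketch below models this in pure Python. The contents of `spam_info` and the cleaning rule (drop words flagged as spam) are assumptions made for illustration, since the filter condition is elided in the PySpark snippet of this section.

```python
# Static lookup data (hypothetical): word -> is_spam flag.
spam_info = {"buy": True, "hello": False, "spark": False}

word_count_batches = [
    [("hello", 2), ("buy", 5)],
    [("spark", 1), ("unknown", 3)],
]

def join(batch, lookup):
    """Inner join of (key, value) pairs with a dict, shaped like RDD.join: (k, (v, w))."""
    return [(k, (v, lookup[k])) for k, v in batch if k in lookup]

def transform(stream, func):
    """Apply a batch-to-batch function to every batch of the stream."""
    return [func(batch) for batch in stream]

# Assumed cleaning rule: keep only the words that are not flagged as spam.
cleaned = transform(
    word_count_batches,
    lambda batch: [(k, vw) for k, vw in join(batch, spam_info) if not vw[1]],
)
print(cleaned)
```

Because the join is an inner join, a word that is missing from the lookup data ("unknown" here) disappears from the output as well.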

spamInfoRDD = sc.pickleFile(...) # RDD containing spam information

# join data stream with spam information to do data cleaning

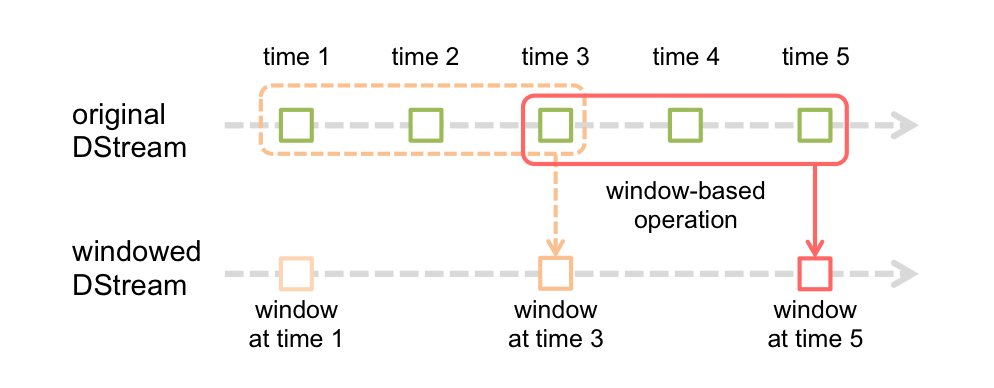

cleanedDStream = wordCounts.transform(lambda rdd: rdd.join(spamInfoRDD).filter(...))Window 操作
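
A windowed reduce combines the batches that fall inside a sliding window. The sketch below is a naive pure-Python model that recomputes every window from scratch. It assumes a 10-second batch interval, so "the last 30 seconds, every 10 seconds" becomes a window of 3 batches sliding by 1 batch; the function name and the batch-count parameters are inventions of this sketch, whereas Spark takes durations that must be multiples of the batch interval.

```python
def reduce_by_key_and_window(batches, func, window_len, slide):
    """Naive windowed reduce: recompute each window from scratch.

    window_len and slide are counted in batches (an assumption of this sketch).
    """
    results = []
    for end in range(slide, len(batches) + 1, slide):
        window = batches[max(0, end - window_len):end]
        acc = {}
        for batch in window:
            for key, value in batch:
                acc[key] = func(acc[key], value) if key in acc else value
        results.append(acc)
    return results

# Assumed 10-second batches, so 30 s / 10 s becomes window_len=3, slide=1.
pair_batches = [
    [("a", 1)],
    [("a", 1), ("b", 1)],
    [("b", 1)],
    [("c", 1)],
]
windowed = reduce_by_key_and_window(pair_batches, lambda x, y: x + y, 3, 1)
print(windowed)
```

The last window no longer contains the first batch, which is why the count for "a" drops from 2 to 1.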

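    # Reduce last 30 seconds of data, every 10 seconds.
    # In PySpark, invFunc is the second positional argument; passing None
    # recomputes every window from scratch.
    windowedWordCounts = pairs.reduceByKeyAndWindow(lambda x, y: x + y, None, 30, 10)

    # Reduce last 30 seconds of data, every 10 seconds, incrementally:
    # the second lambda is the inverse function (requires checkpointing).
    windowedWordCounts = pairs.reduceByKeyAndWindow(lambda x, y: x + y, lambda x, y: x - y, 30, 10)

The signature of this second form, as listed in the API documentation, is reduceByKeyAndWindow(func, invFunc, windowLength, slideInterval, [numTasks]).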
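
Why the invFunc variant is cheaper can be shown with a small pure-Python sketch: instead of re-reducing all batches in the window, the previous window's result is updated by adding the batch that enters and "subtracting" the batch that leaves. The function below is a hypothetical model that slides by one batch at a time; it is not Spark's implementation, and keys whose value drops to zero are not removed.

```python
def incremental_window(batches, func, inv_func, window_len):
    """Slide by one batch; update the previous window instead of recomputing."""
    results = []
    current = {}
    for i, batch in enumerate(batches):
        # Reduce in the data entering the window.
        for key, value in batch:
            current[key] = func(current[key], value) if key in current else value
        # "Inverse reduce" the batch that just left the window.
        if i >= window_len:
            for key, value in batches[i - window_len]:
                current[key] = inv_func(current[key], value)
        results.append(dict(current))
    return results

pair_batches = [
    [("a", 1)],
    [("a", 1), ("b", 1)],
    [("b", 1)],
    [("c", 1)],
]
incremental = incremental_window(
    pair_batches, lambda x, y: x + y, lambda x, y: x - y, 3
)
print(incremental)
```

Each step touches only two batches, the one entering and the one leaving, regardless of how long the window is. This only works when the reduce function has an inverse, as addition does with subtraction.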

| Transformation | Meaning |
|---|---|
| window(windowLength, slideInterval) | Return a new DStream which is computed based on windowed batches of the source DStream. |
| countByWindow(windowLength, slideInterval) | Return a sliding window count of elements in the stream. |
| reduceByWindow(func, windowLength, slideInterval) | Return a new single-element stream, created by aggregating elements in the stream over a sliding interval using func. The function should be associative and commutative so that it can be computed correctly in parallel. |
| reduceByKeyAndWindow(func, windowLength, slideInterval, [numTasks]) | When called on a DStream of (K, V) pairs, returns a new DStream of (K, V) pairs where the values for each key are aggregated using the given reduce function func over batches in a sliding window. Note: By default, this uses Spark's default number of parallel tasks (2 for local mode, and in cluster mode the number is determined by the config property spark.default.parallelism) to do the grouping. You can pass an optional numTasks argument to set a different number of tasks. |
| reduceByKeyAndWindow(func, invFunc, windowLength, slideInterval, [numTasks]) | A more efficient version of the above reduceByKeyAndWindow(), in which the reduce value of each window is calculated incrementally from the reduce value of the previous window: new data entering the window is reduced with func, and old data leaving the window is "inverse reduced" with invFunc. Checkpointing must be enabled to use this operation. |
| countByValueAndWindow(windowLength, slideInterval, [numTasks]) | When called on a DStream of (K, V) pairs, returns a new DStream of (K, Long) pairs where the value of each key is its frequency within a sliding window. Like in reduceByKeyAndWindow, the number of reduce tasks is configurable through an optional argument. |
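
The two counting operations in the table can be sketched in the same pure-Python model. The helper `window_slices` is hypothetical, and window sizes are counted in batches rather than seconds, which is an assumption of this example. With the window length equal to the slide interval, as here, the windows do not overlap.

```python
from collections import Counter

def window_slices(batches, window_len, slide):
    """Yield the batches covered by each window (sizes counted in batches)."""
    for end in range(slide, len(batches) + 1, slide):
        yield batches[max(0, end - window_len):end]

batches = [["a", "b"], ["a"], ["c", "a"], ["b", "b", "c"]]

# countByWindow: number of elements in each window.
count_by_window = [
    sum(len(batch) for batch in window)
    for window in window_slices(batches, 2, 2)
]

# countByValueAndWindow: frequency of each distinct value in each window.
count_by_value = [
    dict(Counter(item for batch in window for item in batch))
    for window in window_slices(batches, 2, 2)
]
print(count_by_window, count_by_value)
```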
