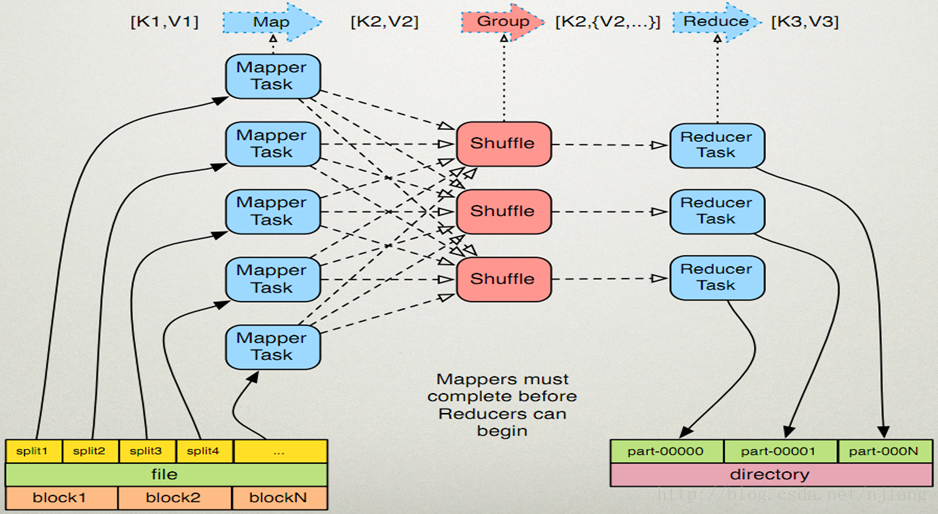

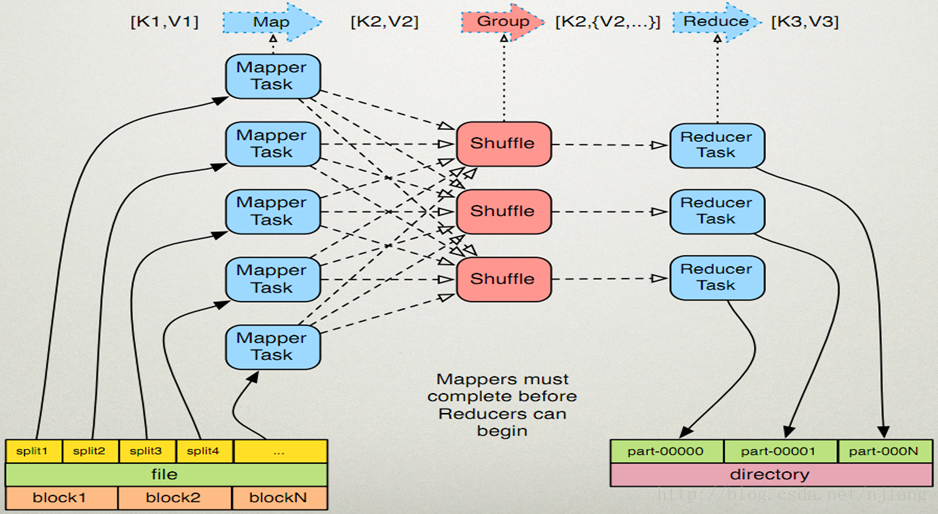

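1. How Hadoop determines the number of maps

(1) Start with a single file.

(2) In HDFS the file occupies 3 blocks. This is a physical split.

(3) When the job runs, the input is divided into split1, split2, split3, split4, ... according to the configuration. This is a logical split: a split only records a file path, an offset and a length, and no data is moved.

(4) Each split is processed by one map task.

(5) By default, one split corresponds to one block.

(6) If you tune the split size yourself, aim for one map per block. A split that spans several blocks forces its map to read part of the input from other nodes over the network, so matching splits to blocks reduces I/O and improves performance.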
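Points (2) and (5) rest on simple arithmetic: a file occupies its length divided by the block size, rounded up, in blocks. The sketch below computes that count. It assumes the Hadoop 2.x default block size of 128 MB, and `BlockMath` is a hypothetical helper, not a Hadoop class.

```java
/**
 * Hypothetical helper (not a Hadoop class): how many HDFS blocks a file
 * occupies, i.e. the physical split described in point (2).
 */
final class BlockMath {
    static final long MB = 1024L * 1024L;

    private BlockMath() {}

    /** Ceiling of fileLength / blockSize; an empty file occupies no blocks. */
    static long blockCount(long fileLength, long blockSize) {
        if (fileLength < 0 || blockSize <= 0) {
            throw new IllegalArgumentException("need fileLength >= 0 and blockSize > 0");
        }
        // Avoids (a + b - 1) / b so the expression cannot overflow for huge lengths.
        return fileLength / blockSize + (fileLength % blockSize == 0 ? 0 : 1);
    }
}
```

Take a hypothetical 300 MB file with 128 MB blocks. `blockCount` returns 3 (128 + 128 + 44 MB). With the default split settings analysed below, that file also yields three splits and therefore three maps.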

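Source-code analysis. The walkthrough follows the call chain from job submission down to the split-size calculation. The key calls are `waitForCompletion()`, `submit()`, `submitJobInternal()`, `writeSplits()`, `writeNewSplits()`, `getSplits()` and `computeSplitSize()`.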

1. Submitting the job

```java
package com.jiangning.mr.wordcount;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class WordCount {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // Important: lets Hadoop locate the jar that contains this class
        job.setJarByClass(WordCount.class);

        // Mapper settings (WCMapper is part of the same project, not shown in this post)
        job.setMapperClass(WCMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(LongWritable.class);
        FileInputFormat.setInputPaths(job, new Path(args[0]));

        // Reducer settings (WCReducer is part of the same project, not shown in this post)
        job.setReducerClass(WCReducer.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(LongWritable.class);
        FileOutputFormat.setOutputPath(job, new Path(args[1]));

        // Submit the job and wait for it; split computation starts inside this call
        job.waitForCompletion(true);
    }
}
```

2. `Job.java`, `waitForCompletion()` at line 1310 (in Eclipse, Ctrl+L jumps to a line number)

```java
/**
 * Submit the job to the cluster and wait for it to finish.
 * @param verbose print the progress to the user
 * @return true if the job succeeded
 * @throws IOException thrown if the communication with the
 *         <code>JobTracker</code> is lost
 */
public boolean waitForCompletion(boolean verbose
                                 ) throws IOException, InterruptedException,
                                          ClassNotFoundException {
  if (state == JobState.DEFINE) {
    submit();  // <- key call: submits the job
  }
  if (verbose) {
    monitorAndPrintJob();
  } else {
    // get the completion poll interval from the client.
    int completionPollIntervalMillis =
      Job.getCompletionPollInterval(cluster.getConf());
    while (!isComplete()) {
      try {
        Thread.sleep(completionPollIntervalMillis);
      } catch (InterruptedException ie) {
      }
    }
  }
  return isSuccessful();
}
```
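The non-verbose branch above is a plain polling loop. The sketch below shows the same pattern in minimal form. To make it runnable without a cluster, the job is replaced by two `BooleanSupplier` callbacks, and `CompletionPoller` is a hypothetical name. There is one deliberate difference from the Hadoop code: the Hadoop loop silently swallows `InterruptedException`, while this version remembers the interrupt and restores the thread's interrupt flag once polling ends.

```java
import java.util.function.BooleanSupplier;

/**
 * Hypothetical sketch (not Hadoop code) of the non-verbose branch of
 * Job.waitForCompletion(): poll isComplete at a fixed interval, then
 * report isSuccessful.
 */
final class CompletionPoller {
    private CompletionPoller() {}

    static boolean waitForCompletion(BooleanSupplier isComplete,
                                     BooleanSupplier isSuccessful,
                                     long pollIntervalMillis) {
        boolean interrupted = false;
        while (!isComplete.getAsBoolean()) {
            try {
                Thread.sleep(pollIntervalMillis);
            } catch (InterruptedException e) {
                // Keep polling after an interrupt, as Job.waitForCompletion does,
                // but remember it so the flag can be restored afterwards.
                interrupted = true;
            }
        }
        if (interrupted) {
            Thread.currentThread().interrupt();
        }
        return isSuccessful.getAsBoolean();
    }
}
```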

3. `Job.java`, `submit()` method, line 1286

```java
/**
 * Submit the job to the cluster and return immediately.
 * @throws IOException
 */
public void submit()
       throws IOException, InterruptedException, ClassNotFoundException {
  ensureState(JobState.DEFINE);
  setUseNewAPI();
  connect();
  final JobSubmitter submitter =
      getJobSubmitter(cluster.getFileSystem(), cluster.getClient());
  status = ugi.doAs(new PrivilegedExceptionAction<JobStatus>() {
    public JobStatus run() throws IOException, InterruptedException,
    ClassNotFoundException {
      return submitter.submitJobInternal(Job.this, cluster);  // <- key call
    }
  });
  state = JobState.RUNNING;
  LOG.info("The url to track the job: " + getTrackingURL());
}
```

4. `JobSubmitter.java`, `submitJobInternal()` method, line 428

```java
/**
 * Internal method for submitting jobs to the system.
 *
 * <p>The job submission process involves:
 * <ol>
 *   <li>
 *   Checking the input and output specifications of the job.
 *   </li>
 *   <li>
 *   Computing the {@link InputSplit}s for the job.
 *   </li>
 *   <li>
 *   Setup the requisite accounting information for the
 *   {@link DistributedCache} of the job, if necessary.
 *   </li>
 *   <li>
 *   Copying the job's jar and configuration to the map-reduce system
 *   directory on the distributed file-system.
 *   </li>
 *   <li>
 *   Submitting the job to the <code>JobTracker</code> and optionally
 *   monitoring it's status.
 *   </li>
 * </ol></p>
 * @param job the configuration to submit
 * @param cluster the handle to the Cluster
 * @throws ClassNotFoundException
 * @throws InterruptedException
 * @throws IOException
 */
JobStatus submitJobInternal(Job job, Cluster cluster)
throws ClassNotFoundException, InterruptedException, IOException {

  //validate the jobs output specs
  checkSpecs(job);

  Configuration conf = job.getConfiguration();
  addMRFrameworkToDistributedCache(conf);

  Path jobStagingArea = JobSubmissionFiles.getStagingDir(cluster, conf);
  //configure the command line options correctly on the submitting dfs
  InetAddress ip = InetAddress.getLocalHost();
  if (ip != null) {
    submitHostAddress = ip.getHostAddress();
    submitHostName = ip.getHostName();
    conf.set(MRJobConfig.JOB_SUBMITHOST, submitHostName);
    conf.set(MRJobConfig.JOB_SUBMITHOSTADDR, submitHostAddress);
  }
  JobID jobId = submitClient.getNewJobID();
  job.setJobID(jobId);
  Path submitJobDir = new Path(jobStagingArea, jobId.toString());
  JobStatus status = null;
  try {
    conf.set(MRJobConfig.USER_NAME,
        UserGroupInformation.getCurrentUser().getShortUserName());
    conf.set("hadoop.http.filter.initializers",
        "org.apache.hadoop.yarn.server.webproxy.amfilter.AmFilterInitializer");
    conf.set(MRJobConfig.MAPREDUCE_JOB_DIR, submitJobDir.toString());
    LOG.debug("Configuring job " + jobId + " with " + submitJobDir
        + " as the submit dir");
    // get delegation token for the dir
    TokenCache.obtainTokensForNamenodes(job.getCredentials(),
        new Path[] { submitJobDir }, conf);

    populateTokenCache(conf, job.getCredentials());

    // generate a secret to authenticate shuffle transfers
    if (TokenCache.getShuffleSecretKey(job.getCredentials()) == null) {
      KeyGenerator keyGen;
      try {
        int keyLen = CryptoUtils.isShuffleEncrypted(conf)
            ? conf.getInt(MRJobConfig.MR_ENCRYPTED_INTERMEDIATE_DATA_KEY_SIZE_BITS,
                MRJobConfig.DEFAULT_MR_ENCRYPTED_INTERMEDIATE_DATA_KEY_SIZE_BITS)
            : SHUFFLE_KEY_LENGTH;
        keyGen = KeyGenerator.getInstance(SHUFFLE_KEYGEN_ALGORITHM);
        keyGen.init(keyLen);
      } catch (NoSuchAlgorithmException e) {
        throw new IOException("Error generating shuffle secret key", e);
      }
      SecretKey shuffleKey = keyGen.generateKey();
      TokenCache.setShuffleSecretKey(shuffleKey.getEncoded(),
          job.getCredentials());
    }

    copyAndConfigureFiles(job, submitJobDir);

    Path submitJobFile = JobSubmissionFiles.getJobConfPath(submitJobDir);

    // Create the splits for the job
    LOG.debug("Creating splits at " + jtFs.makeQualified(submitJobDir));
    int maps = writeSplits(job, submitJobDir);  // <- key call: number of maps = number of splits
    conf.setInt(MRJobConfig.NUM_MAPS, maps);
    LOG.info("number of splits:" + maps);

    // write "queue admins of the queue to which job is being submitted"
    // to job file.
    String queue = conf.get(MRJobConfig.QUEUE_NAME,
        JobConf.DEFAULT_QUEUE_NAME);
    AccessControlList acl = submitClient.getQueueAdmins(queue);
    conf.set(toFullPropertyName(queue,
        QueueACL.ADMINISTER_JOBS.getAclName()), acl.getAclString());

    // removing jobtoken referrals before copying the jobconf to HDFS
    // as the tasks don't need this setting, actually they may break
    // because of it if present as the referral will point to a
    // different job.
    TokenCache.cleanUpTokenReferral(conf);

    if (conf.getBoolean(
        MRJobConfig.JOB_TOKEN_TRACKING_IDS_ENABLED,
        MRJobConfig.DEFAULT_JOB_TOKEN_TRACKING_IDS_ENABLED)) {
      // Add HDFS tracking ids
      ArrayList<String> trackingIds = new ArrayList<String>();
      for (Token<? extends TokenIdentifier> t :
          job.getCredentials().getAllTokens()) {
        trackingIds.add(t.decodeIdentifier().getTrackingId());
      }
      conf.setStrings(MRJobConfig.JOB_TOKEN_TRACKING_IDS,
          trackingIds.toArray(new String[trackingIds.size()]));
    }

    // Set reservation info if it exists
    ReservationId reservationId = job.getReservationId();
    if (reservationId != null) {
      conf.set(MRJobConfig.RESERVATION_ID, reservationId.toString());
    }

    // Write job file to submit dir
    writeConf(conf, submitJobFile);

    //
    // Now, actually submit the job (using the submit name)
    //
    printTokens(jobId, job.getCredentials());
    status = submitClient.submitJob(
        jobId, submitJobDir.toString(), job.getCredentials());
    if (status != null) {
      return status;
    } else {
      throw new IOException("Could not launch job");
    }
  } finally {
    if (status == null) {
      LOG.info("Cleaning up the staging area " + submitJobDir);
      if (jtFs != null && submitJobDir != null)
        jtFs.delete(submitJobDir, true);
    }
  }
}
```

5. `JobSubmitter.java`, `writeSplits()` method, line 608

```java
private int writeSplits(org.apache.hadoop.mapreduce.JobContext job,
    Path jobSubmitDir) throws IOException,
    InterruptedException, ClassNotFoundException {
  JobConf jConf = (JobConf)job.getConfiguration();
  int maps;
  if (jConf.getUseNewMapper()) {
    maps = writeNewSplits(job, jobSubmitDir);  // <- key call (new mapreduce API)
  } else {
    maps = writeOldSplits(jConf, jobSubmitDir);
  }
  return maps;
}
```

6. `JobSubmitter.java`, `writeNewSplits()` method, line 590

```java
private <T extends InputSplit>
int writeNewSplits(JobContext job, Path jobSubmitDir) throws IOException,
    InterruptedException, ClassNotFoundException {
  Configuration conf = job.getConfiguration();
  InputFormat<?, ?> input =
    ReflectionUtils.newInstance(job.getInputFormatClass(), conf);

  List<InputSplit> splits = input.getSplits(job);  // <- key call: the InputFormat computes the splits
  T[] array = (T[]) splits.toArray(new InputSplit[splits.size()]);

  // sort the splits into order based on size, so that the biggest
  // go first
  Arrays.sort(array, new SplitComparator());
  JobSplitWriter.createSplitFiles(jobSubmitDir, conf,
      jobSubmitDir.getFileSystem(conf), array);
  return array.length;
}
```
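`writeNewSplits()` returns `array.length`, so the number of maps is exactly the number of splits that `getSplits()` produced. Before writing the splits out, the method sorts them so that the biggest come first. The sketch below reproduces only that ordering, using a hypothetical `SimpleSplit` class (not a Hadoop type) that keeps just a path, start and length. It follows the descending-length direction stated in the source comment. One plausible motivation for this order is that the longest-running map tasks get started earliest.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** Hypothetical stand-in for an InputSplit, keeping only what the sort needs. */
final class SimpleSplit {
    final String path;
    final long start;
    final long length;

    SimpleSplit(String path, long start, long length) {
        this.path = path;
        this.start = start;
        this.length = length;
    }
}

/** Largest-first ordering, the order the "biggest go first" sort aims for. */
final class SplitOrdering {
    private SplitOrdering() {}

    /** Returns a new list sorted by length, biggest first; the input list is left unchanged. */
    static List<SimpleSplit> largestFirst(List<SimpleSplit> splits) {
        List<SimpleSplit> sorted = new ArrayList<>(splits);
        // List.sort is stable, so equal-length splits keep their original relative order.
        sorted.sort(Comparator.comparingLong((SimpleSplit s) -> s.length).reversed());
        return sorted;
    }
}
```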

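7. `org.apache.hadoop.mapreduce.lib.input.FileInputFormat.java`, `getSplits()` method, line 378. The default input format, `TextInputFormat`, inherits this method from `FileInputFormat`.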

```java
/**
 * Generate the list of files and make them into FileSplits.
 * @param job the job context
 * @throws IOException
 */
public List<InputSplit> getSplits(JobContext job) throws IOException {
  Stopwatch sw = new Stopwatch().start();
  long minSize = Math.max(getFormatMinSplitSize(), getMinSplitSize(job));  // <- key line
  long maxSize = getMaxSplitSize(job);  // <- key line

  // generate splits
  List<InputSplit> splits = new ArrayList<InputSplit>();
  List<FileStatus> files = listStatus(job);
  for (FileStatus file: files) {
    Path path = file.getPath();
    long length = file.getLen();
    if (length != 0) {
      BlockLocation[] blkLocations;
      if (file instanceof LocatedFileStatus) {
        blkLocations = ((LocatedFileStatus) file).getBlockLocations();
      } else {
        FileSystem fs = path.getFileSystem(job.getConfiguration());
        blkLocations = fs.getFileBlockLocations(file, 0, length);
      }
      if (isSplitable(job, path)) {
        long blockSize = file.getBlockSize();
        long splitSize = computeSplitSize(blockSize, minSize, maxSize);  // <- key line

        long bytesRemaining = length;
        while (((double) bytesRemaining)/splitSize > SPLIT_SLOP) {
          int blkIndex = getBlockIndex(blkLocations, length-bytesRemaining);
          splits.add(makeSplit(path, length-bytesRemaining, splitSize,
                      blkLocations[blkIndex].getHosts(),
                      blkLocations[blkIndex].getCachedHosts()));
          bytesRemaining -= splitSize;
        }

        if (bytesRemaining != 0) {
          int blkIndex = getBlockIndex(blkLocations, length-bytesRemaining);
          splits.add(makeSplit(path, length-bytesRemaining, bytesRemaining,
                     blkLocations[blkIndex].getHosts(),
                     blkLocations[blkIndex].getCachedHosts()));
        }
      } else { // not splitable
        splits.add(makeSplit(path, 0, length, blkLocations[0].getHosts(),
                    blkLocations[0].getCachedHosts()));
      }
    } else {
      //Create empty hosts array for zero length files
      splits.add(makeSplit(path, 0, length, new String[0]));
    }
  }
  // Save the number of input files for metrics/loadgen
  job.getConfiguration().setLong(NUM_INPUT_FILES, files.size());
  sw.stop();
  if (LOG.isDebugEnabled()) {
    LOG.debug("Total # of splits generated by getSplits: " + splits.size()
        + ", TimeTaken: " + sw.elapsedMillis());
  }
  return splits;
}
```
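For a splittable file, the `while` loop keeps cutting full `splitSize` chunks as long as the remaining bytes exceed `SPLIT_SLOP` (1.1) times `splitSize`. Whatever is left becomes the last split, so the last split can be up to 10% larger than `splitSize`. The sketch below is a plain-Java re-enactment of that loop. `SplitPlanner` is a hypothetical name. It leaves out the host lookup (`getBlockIndex`), non-splittable files and zero-length files, and returns `{offset, length}` pairs instead of `FileSplit` objects.

```java
import java.util.ArrayList;
import java.util.List;

/** Plain-Java re-enactment of the per-file loop in FileInputFormat.getSplits (no Hadoop classes). */
final class SplitPlanner {
    /** Same value as FileInputFormat's SPLIT_SLOP: the tail may be up to 10% larger than splitSize. */
    static final double SPLIT_SLOP = 1.1;

    private SplitPlanner() {}

    /** Returns {offset, length} pairs for a splittable file of the given length. */
    static List<long[]> plan(long length, long splitSize) {
        if (splitSize <= 0) {
            throw new IllegalArgumentException("splitSize must be positive");
        }
        List<long[]> splits = new ArrayList<>();
        long bytesRemaining = length;
        // Cut full-size splits while more than 1.1 split sizes remain.
        while (((double) bytesRemaining) / splitSize > SPLIT_SLOP) {
            splits.add(new long[] {length - bytesRemaining, splitSize});
            bytesRemaining -= splitSize;
        }
        // The remainder (at most 1.1 * splitSize) becomes the final split.
        if (bytesRemaining != 0) {
            splits.add(new long[] {length - bytesRemaining, bytesRemaining});
        }
        return splits;
    }
}
```

With 128 MB splits, a 300 MB file gives three splits (128, 128 and 44 MB). A 130 MB file gives only one 130 MB split even though it occupies two blocks, because 130/128 does not exceed 1.1.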

Analysis:

(1) `getFormatMinSplitSize()` returns 1.

(2) `getMinSplitSize(job)`:

```java
/**
 * Get the minimum split size
 * @param job the job
 * @return the minimum number of bytes that can be in a split
 */
public static long getMinSplitSize(JobContext job) {
  return job.getConfiguration().getLong(SPLIT_MINSIZE, 1L);
}
```

`SPLIT_MINSIZE` refers to the property `mapreduce.input.fileinputformat.split.minsize`. Its default value is defined in `mapred-default.xml`, which ships inside `hadoop-mapreduce-client-core-2.6.0.jar`:

```xml
<property>
  <name>mapreduce.input.fileinputformat.split.minsize</name>
  <value>0</value>
  <description>The minimum size chunk that map input should be split
  into. Note that some file formats may have minimum split sizes that
  take priority over this setting.</description>
</property>
```


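(3) `getMaxSplitSize(job)`

`SPLIT_MAXSIZE` refers to the property `mapreduce.input.fileinputformat.split.maxsize`. This property is not set in `mapred-default.xml` inside `hadoop-mapreduce-client-core-2.6.0.jar`, so `getMaxSplitSize` falls back to `Long.MAX_VALUE` (2^63 - 1). The maximum split size is therefore 2^63 - 1 bytes: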

```java
/**
 * Get the maximum split size.
 * @param context the job to look at.
 * @return the maximum number of bytes a split can include
 */
public static long getMaxSplitSize(JobContext context) {
  return context.getConfiguration().getLong(SPLIT_MAXSIZE,
                                            Long.MAX_VALUE);
}
```

(4) Combining the two:

```java
long minSize = Math.max(getFormatMinSplitSize(), getMinSplitSize(job));
```

With the defaults this evaluates to `Math.max(1, 0) = 1`, so `minSize = 1`.

8. `FileInputFormat.java`, `computeSplitSize()` method, line 435

```java
protected long computeSplitSize(long blockSize, long minSize,
                                long maxSize) {
  return Math.max(minSize, Math.min(maxSize, blockSize));
}
```

Analysis:

minSize = 1

maxSize = 2^63 - 1

blockSize = the size of one block, 128 MB by default (128 * 1024 * 1024 = 134,217,728 bytes)

Math.min(maxSize, blockSize) = 128 MB

Math.max(1, 128 MB) = 128 MB

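So by default one block becomes one split, and therefore one map. The advantage is data locality: the scheduler can run each map on a node that already stores that block, so the map reads its input locally and does not have to copy it over the network. If a split spanned several blocks, part of its data would usually sit on other nodes and would have to be copied to the node running the map.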
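The formula also shows how to change the number of maps: you adjust the two properties that feed it, not the formula itself. The sketch below copies the formula into a hypothetical `SplitSizeMath` class so you can try different settings. Raising `minSize` above the block size produces fewer, larger splits that span several blocks, which costs locality as described in point (6). Lowering `maxSize` below the block size produces more, smaller splits and therefore more maps.

```java
/** Plain-Java copy of the formula in FileInputFormat.computeSplitSize, for experimenting with settings. */
final class SplitSizeMath {
    static final long MB = 1024L * 1024L;

    private SplitSizeMath() {}

    /** splitSize = max(minSize, min(maxSize, blockSize)) */
    static long computeSplitSize(long blockSize, long minSize, long maxSize) {
        return Math.max(minSize, Math.min(maxSize, blockSize));
    }
}
```

In a real job, `minSize` and `maxSize` come from `mapreduce.input.fileinputformat.split.minsize` and `mapreduce.input.fileinputformat.split.maxsize`. For the new API, `FileInputFormat` also provides the static setters `setMinInputSplitSize(Job, long)` and `setMaxInputSplitSize(Job, long)`.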
