Machine list

192.168.88.109 node1 (mon, ceph-deploy)

192.168.88.107 node2 (osd)

192.168.88.108 node3 (osd)

Disable SELinux

Edit /etc/selinux/config, set SELINUX=disabled, then reboot.

Create a user and grant it root privileges (on every node)

The user created here is cent, with the password cent.

sudo useradd -d /home/cent -m cent

sudo passwd cent

echo "cent ALL = (root) NOPASSWD:ALL" | sudo tee /etc/sudoers.d/cent

sudo chmod 0440 /etc/sudoers.d/cent

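su cent (switch to the cent user; ceph-deploy commands must not be run as root or with sudo)

sudo visudo (change `Defaults requiretty` to `Defaults:cent !requiretty`)

sudo hostname node1 (set each node's hostname to its own name; on el7, `sudo hostnamectl set-hostname node1` also keeps the change across reboots)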

sudo yum install ntp ntpdate ntp-doc

sudo yum install openssh-server

Edit the hosts file on the primary node, node1

Add the node entries to the hosts file on node1.

sudo vim /etc/hosts

192.168.88.109 node1

192.168.88.107 node2

192.168.88.108 node3

Configure the yum repository on node1

Create a ceph.repo file under /etc/yum.repos.d/ with the following contents:

[Ceph]

name=Ceph packages for $basearch

baseurl=http://mirrors.163.com/ceph/rpm-jewel/el7/$basearch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.163.com/ceph/keys/release.asc

priority=1

[Ceph-noarch]

name=Ceph noarch packages

baseurl=http://mirrors.163.com/ceph/rpm-jewel/el7/noarch

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.163.com/ceph/keys/release.asc

priority=1

[ceph-source]

name=Ceph source packages

baseurl=http://mirrors.163.com/ceph/rpm-jewel/el7/SRPMS

enabled=1

gpgcheck=0

type=rpm-md

gpgkey=https://mirrors.163.com/ceph/keys/release.asc

priority=1

Install ceph-deploy on node1

sudo yum install yum-plugin-priorities

sudo yum install ceph-deploy

Configure SSH on node1

ssh-keygen (press Enter at every prompt to accept the defaults)

ssh-copy-id cent@node1

ssh-copy-id cent@node2

ssh-copy-id cent@node3

vim ~/.ssh/config (create the config file with the following contents)

Host node1

Hostname node1

User cent

Host node2

Hostname node2

User cent

Host node3

Hostname node3

User cent
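The same entries can also be generated rather than typed by hand. The following is a minimal sketch; `gen_ssh_config` is a hypothetical helper name (not part of OpenSSH), and it assumes every node uses the same login user and that each hostname resolves through /etc/hosts.

```shell
# gen_ssh_config USER NODE...
# Hypothetical helper: prints one ssh client "Host" entry per node.
# Assumes the Host alias and the real hostname are identical.
gen_ssh_config() {
    user="$1"
    shift
    for node in "$@"; do
        printf 'Host %s\n    Hostname %s\n    User %s\n' "$node" "$node" "$user"
    done
}

# Example usage (overwrites any existing config, so back it up first):
#   gen_ssh_config cent node1 node2 node3 > ~/.ssh/config
```

The indentation under each Host line is optional for ssh but makes the entries easier to read.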

chmod 600 ~/.ssh/config (restrict the config file's permissions)

Create the cluster on node1

mkdir my-cluster

cd my-cluster

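ceph-deploy new node1 (on success, a ceph.conf file is created in the current directory)

vim ceph.conf (append the following to the end of the [global] section; with only two OSDs, the default replica count of 3 cannot be satisfied)

osd pool default size = 2

Install Ceph

On node1, run: ceph-deploy install node1 node2 node3

When the installation finishes, run on node1: ceph-deploy mon create-initial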
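Before moving on, it can help to confirm that `ceph-deploy mon create-initial` actually gathered the keyrings into the working directory. The sketch below uses a hypothetical helper, `missing_keyrings`; the list of file names is an assumption based on the Jewel-era quick start and may differ on other releases.

```shell
# missing_keyrings [DIR]
# Hypothetical helper: prints the path of every expected keyring that is
# absent from DIR (default: current directory). Prints nothing if all exist.
# The keyring names below are an assumption for the Jewel release.
missing_keyrings() {
    dir="${1:-.}"
    for name in client.admin bootstrap-osd bootstrap-mds bootstrap-rgw; do
        file="$dir/ceph.$name.keyring"
        [ -f "$file" ] || printf '%s\n' "$file"
    done
}

# Example usage, run from the my-cluster directory:
#   missing_keyrings .
```

Empty output suggests the monitor came up and the keys were collected; any path that is printed points at a keyring worth investigating before the OSD steps.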

Add and activate the OSDs

ssh node2

sudo mkdir /var/local/osd0

sudo chmod -R 777 /var/local/osd0/

exit

ssh node3

sudo mkdir /var/local/osd1

sudo chmod -R 777 /var/local/osd1/

exit

ceph-deploy osd prepare node2:/var/local/osd0 node3:/var/local/osd1

ceph-deploy osd activate node2:/var/local/osd0 node3:/var/local/osd1
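The prepare and activate commands take the same host:path arguments, so with more OSD nodes it may be convenient to build that list once. This is only a sketch: `osd_targets` is a hypothetical helper, and it assumes the directory naming used here (/var/local/osdN, numbered from 0 in the order the hosts are given).

```shell
# osd_targets HOST...
# Hypothetical helper: prints "host:/var/local/osdN" pairs separated by
# spaces, numbering the hosts from 0 in the order given.
osd_targets() {
    i=0
    out=""
    for host in "$@"; do
        out="${out:+$out }${host}:/var/local/osd${i}"
        i=$((i + 1))
    done
    printf '%s\n' "$out"
}

# Example usage (the variable is left unquoted on purpose so it splits
# into separate arguments):
#   targets="$(osd_targets node2 node3)"
#   ceph-deploy osd prepare $targets
#   ceph-deploy osd activate $targets
```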

ceph-deploy admin node1 node2 node3

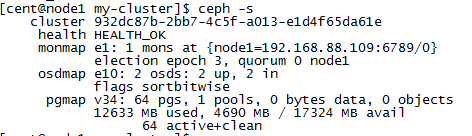

sudo chmod +r /etc/ceph/ceph.client.admin.keyring

Check the Ceph cluster status

ceph -s
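For scripted checks, the one-line `ceph health` output is easier to test than the full `ceph -s` report. The following sketch wraps that test in a hypothetical helper, `health_ok`, which reads the health line on stdin; it assumes a healthy cluster reports a line beginning with HEALTH_OK.

```shell
# health_ok
# Hypothetical helper: reads the first line of `ceph health` output from
# stdin and succeeds only if that line starts with HEALTH_OK.
health_ok() {
    line=""
    IFS= read -r line || true
    case "$line" in
        HEALTH_OK*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example usage:
#   ceph health | health_ok && echo "cluster is healthy"
```

Right after the OSDs are activated the cluster may briefly report a warning state while placement groups settle, so a failed check at that point is not necessarily an error.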
