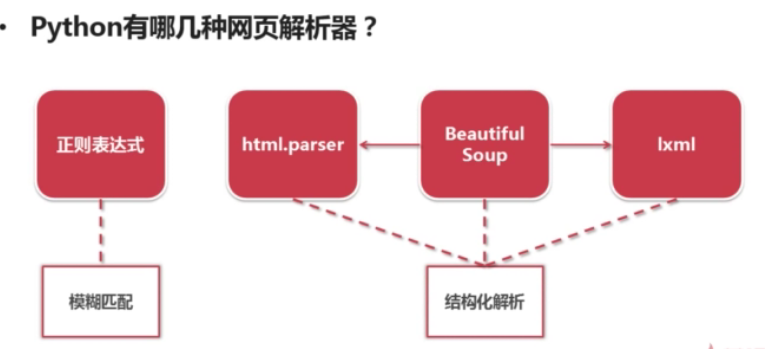

网页解析器:从网页中提取有价值数据的工具

二、Beautiful Soup

Beautiful Soup :是Python第三方库,用于从HTML或XML中提取数据,官网:https://www.crummy.com/software/BeautifulSoup/bs4/doc/

安装BeautifulSoup,先安装pip

sudo apt-get install python-pip

sudo pip install beautifulsoup4

import bs4

print bs4| <module 'bs4' from '/usr/lib/python2.7/dist-packages/bs4/__init__.pyc'> |

举个例子如下

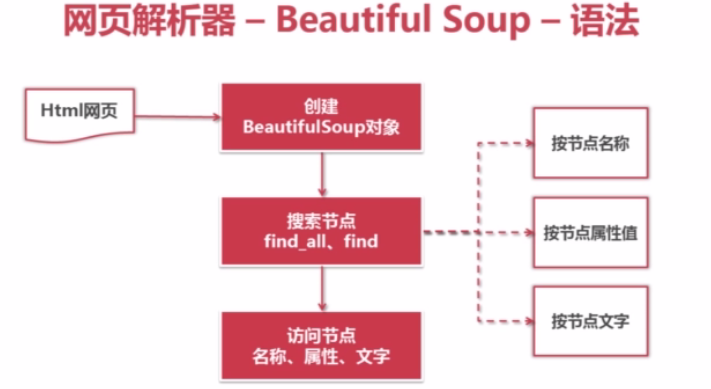

2.1.创建BeautifulSoup对象

from bs4 import BeautifulSoup

#根据HTML网页字符串创建BeautifulSoup对象

soup = BeautifulSoup(

html_doc, # HTML文档字符串

'html.parser' # HTML解析器

from_encoding='utf8' # HTML文档的编码

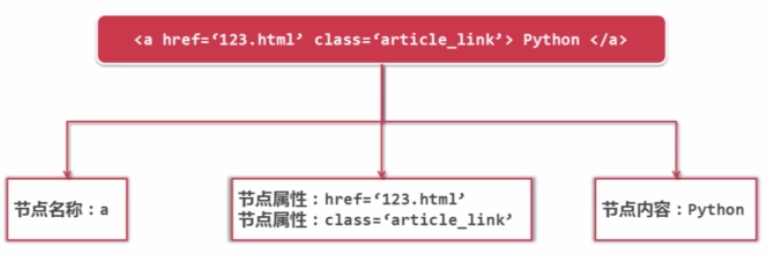

)#方法:find_all(name, attrs, string)

# 查找所有标签为a的节点

soup.find_all('a')

# 查找所有标签为a, 链接符合/view/123.html形式的节点

soup.find_all('a', href='/view/123.htm')

# 传入正则表达式来匹配对应的内容

soup.find_all('a', href=re.compile(r'/view/\d+\.htm'))

# 查找所有标签为idv, class(下面的代码有加下划线,是因为python的关键字有class,防冲突)为 abc, 文字为Python的节点

soup.find_all('div', class_='abc', string='Python')# 得到节点:<a href='1.html'>Python</a>

# 获取查找到的节点的标签名称

node.name

# 获取查找到的a节点的href属性

node['href']

# 获取查找到的a节点的链接文字

node.get_text()

三、实例测试

# coding:utf8

from bs4 import BeautifulSoup

import re

html_doc = """

<html><head><title>The Dormouse's story</title></head>

<body>

<p class="title"><b>The Dormouse's story</b></p>

<p class="story">Once upon a time there were three little sisters; and their names were

<a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,

<a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and

<a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;

and they lived at the bottom of a well.</p>

<p class="story">...</p>

"""

soup = BeautifulSoup(html_doc, 'html.parser', from_encoding='utf8')

print '测试1:获取所有的链接'

links = soup.find_all('a')

for link in links:

print link.name, link['href'], link.get_text()

print '\n测试2:获取Lacie的链接'

link_node = soup.find('a', href='http://example.com/lacie')

print link_node.name, link_node['href'], link_node.get_text()

print '\n测试3:正则匹配'

# 要匹配的内容前加’r',这样如果要转义,只用加一个/就行了

link_node = soup.find('a', href=re.compile(r"ill") )

print link_node.name, link_node['href'], link_node.get_text()

print '\n测试4:获取P段落文字'

p_node = soup.find('p', class_='title' )

print p_node.name, p_node.get_text()| 测试1:获取所有的链接 a http://example.com/elsie Elsie a http://example.com/lacie Lacie a http://example.com/tillie Tillie 测试2:获取Lacie的链接 a http://example.com/lacie Lacie 测试3:正则匹配 a http://example.com/tillie Tillie 测试4:获取P段落文字 p The Dormouse's story |

1164

1164

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?