Overview

Kafka version: kafka_2.10-0.8.2.1 (Kafka 0.9.x provides a new consumer API)

IDE: IntelliJ IDEA 14 + Maven 3.3

Language: Java

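Developing a consumer with the low-level API

When to use the low-level API

The biggest difference between the low-level and the high-level API is that you decide for yourself which partitions of a topic are consumed and from which offsets. It fits when: 1) you want to read a message more than once, 2) you want to consume only some of a topic's partitions, 3) you want stricter control over partition offsets.

More control also means more work: 1) your program must track offsets itself, 2) you must work out which Kafka broker is the leader for each partition and handle leader changes yourself.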
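To make the offset bookkeeping concrete, here is a toy in-memory model of one partition and a reader that tracks its own position. It is only a sketch: it does not use the Kafka API, and `ManualOffsetSketch`, `PartitionLog`, and `fetch` are hypothetical names chosen for illustration.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

public class ManualOffsetSketch {

    /** Toy stand-in for one partition: an append-only list indexed by offset. */
    public static class PartitionLog {
        private final List<String> messages = new ArrayList<>();

        public void append(String message) {
            messages.add(message);
        }

        /** Returns up to maxMessages messages starting at offset; empty if offset is out of range. */
        public List<String> fetch(long offset, int maxMessages) {
            if (offset < 0 || offset >= messages.size()) {
                return Collections.emptyList();
            }
            int from = (int) offset;
            int to = Math.min(messages.size(), from + maxMessages);
            return new ArrayList<>(messages.subList(from, to));
        }
    }

    public static void main(String[] args) {
        PartitionLog log = new PartitionLog();
        for (int i = 0; i < 5; i++) {
            log.append("msg-" + i);
        }

        // The reader, not the log, owns the offset and advances it after each batch.
        long offset = 0;
        int batchSize = 3;
        List<String> batch;
        while (!(batch = log.fetch(offset, batchSize)).isEmpty()) {
            offset += batch.size();
            System.out.println(batch + " -> next offset " + offset);
        }

        // Because the offset is just a number we hold, re-reading a message is a matter of asking again.
        System.out.println("replay: " + log.fetch(1, 1));
    }
}
```

The point of the model is that nothing advances unless the reader advances it, which is both the flexibility and the extra work described above.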

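Example program

This program implements a Kafka consumer with the low-level API. It saves each offset to a local file and, on the next start, reads the offset file to resume from the saved position.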
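The save-and-resume idea can be sketched with the JDK alone. This is a minimal illustration under assumptions, not the article's actual implementation: the class name `OffsetCheckpointSketch`, the one-file-per-partition layout, and the `partition-N.offset` file naming are all hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

public class OffsetCheckpointSketch {

    /** Assumed layout: one small text file per partition inside the checkpoint directory. */
    static Path fileFor(Path dir, int partition) {
        return dir.resolve("partition-" + partition + ".offset");
    }

    /** Writes the offset to a temp file first, then renames it over the previous checkpoint. */
    public static void save(Path dir, int partition, long offset) {
        try {
            Files.createDirectories(dir);
            Path target = fileFor(dir, partition);
            Path tmp = dir.resolve(target.getFileName() + ".tmp");
            Files.write(tmp, Long.toString(offset).getBytes(StandardCharsets.UTF_8));
            Files.move(tmp, target, StandardCopyOption.REPLACE_EXISTING);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Returns the saved offset, or defaultOffset when no checkpoint exists yet. */
    public static long load(Path dir, int partition, long defaultOffset) {
        Path file = fileFor(dir, partition);
        if (!Files.exists(file)) {
            return defaultOffset;
        }
        try {
            String text = new String(Files.readAllBytes(file), StandardCharsets.UTF_8).trim();
            return text.isEmpty() ? defaultOffset : Long.parseLong(text);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("checkpoint");
        System.out.println(load(dir, 0, -1L)); // first start: no file yet, prints -1
        save(dir, 0, 42L);
        System.out.println(load(dir, 0, -1L)); // next start: prints 42
    }
}
```

When `load` returns the default, a consumer would fall back to its configured start point; when it returns a saved value, fetching resumes from there.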

maven依赖

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka_2.10</artifactId>

<version>0.8.2.1</version>

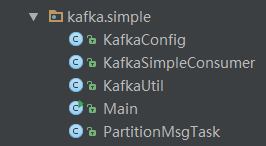

</dependency>程序包组织

Configuration file consumer.properties

brokerList=xxxx,xxxx,xxxx,xxxx
port=9092
topic=myTopic
partitionNum=8
# offset file path
checkpoint=./checkpoint
# maximum number of messages per fetch
patchSize=10
# latest or earliest
subscribeStartPoint=earliest

KafkaConfig.java

package kafka.simple;

import java.util.List;

public class KafkaConfig {
    public String topic = null;               // topic name
    public int partitionNum = 0;              // number of partitions
    public int port = 0;                      // Kafka broker port
    public List<String> replicaBrokers = null; // list of Kafka broker IPs
    public String checkpoint;                 // checkpoint directory, where partition offsets are saved
    public int patchSize = 10;                // maximum number of messages read from a partition per fetch
    public String subscribeStartPoint = null; // default starting point: latest or earliest

    public KafkaConfig() { }

    @Override
    public String toString() {
        // Plain concatenation prints "null" instead of throwing if replicaBrokers is unset.
        return "[brokers:" + replicaBrokers
                + "] [port:" + port
                + "] [topic:" + topic
                + "] [partition num:" + partitionNum
                + "] [patch size:" + patchSize
                + "] [start point:" + subscribeStartPoint
                + "]";
    }
}

KafkaUtil.java

package kafka.simple;

import kafka.api.PartitionOffsetRequestInfo;
import kafka.common.TopicAndPartition;
import kafka.javaapi.OffsetResponse;
import kafka.javaapi.PartitionMetadata;
import kafka.javaapi.TopicMetadata;
import kafka.javaapi.TopicMetadataRequest;
import kafka.javaapi.consumer.SimpleConsumer;
import org.apache.log4j.LogManager;
import org.apache.log4j.Logger;

import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class KafkaUtil {
    private static final Logger logger = LogManager.getLogger(KafkaUtil.class);

    /**
     * Find the leader broker for a partition.
     * @param seedBrokers configured broker list
     * @param port broker port
     * @param topic topic name
     * @param partition partition id
     * @return the partition's metadata
     */
    public static PartitionMetadata findLeader(List<String> seedBrokers, int port, String topic, int partition) {
        PartitionMetadata returnMetaData = null;
        logger.info("find leader begin. brokers:[" + seedBrokers.toString() + "]");
        loop:
        for (String seed : seedBrokers) {
            SimpleConsumer consumer = null;
            try {
                // Ask each seed broker in turn for the topic's metadata.
                consumer = new SimpleConsumer(seed, port, 100000, 64 * 1024, "leaderLookup");
                List<String> topics = Collections.singletonList(topic);
                TopicMetadataRequest req = new TopicMetadataRequest(topics);
                kafka.javaapi.TopicMetadataResponse res = consumer.send(req);
                List<TopicMetadata> metadatas = res.topicsMetadata();
                for (TopicMetadata item : metad

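This article introduces the low-level consumer API of Kafka 0.8.2.1, which suits cases that need fine-grained control over partition consumption and offsets. An example program shows how to implement the consumer in Java, covering configuration, dependencies, and code organization, and storing offsets in a local file.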
