- Create a new project and select the Gradle project type

- build.gradle

    group 'org.fashj'
    version '1.2'

    apply plugin: 'idea'
    apply plugin: 'scala'

    sourceCompatibility = 1.8

    repositories {
        // Aliyun mirror first, Maven Central as fallback
        maven {
            url 'http://maven.aliyun.com/nexus/content/groups/public/'
        }
        mavenCentral()
    }

    dependencies {
        testCompile group: 'junit', name: 'junit', version: '4.11'
        // The Scala version must match the _2.10 suffix of the Spark artifact.
        // testCompile inherits this entry, so the separate scala-library 2.11.8
        // test dependency is dropped: it would mix Scala 2.10 and 2.11 binaries.
        compile group: 'org.scala-lang', name: 'scala-library', version: '2.10.4'
        compile group: 'org.apache.spark', name: 'spark-core_2.10', version: '2.0.1'
    }

- Write the code

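    package org.fashj.spark

    import org.apache.spark.{SparkConf, SparkContext}

    /**
     * @author zhengsd
     */
    object SparkPi {

      def main(args: Array[String]): Unit = {
        // Empty conf: the master and app name come from spark-submit
        val conf = new SparkConf()
        val sc = new SparkContext(conf)

        // Despite the object's name, this job is a word count over README.md
        val text = sc.textFile("file:///usr/local/spark/README.md")
        val result = text.flatMap(_.split(' ')).map((_, 1)).reduceByKey(_ + _).collect()
        result.foreach(println)

        // Release the context once the results are printed
        sc.stop()
      }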

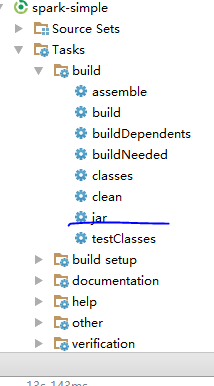

}- 生成Jar

- Run on a Linux server

./spark-submit --class "org.fashj.spark.SparkPi" /home/hadoop/spark-simple-1.2.jar
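
The job prints one `(word, count)` tuple per distinct token in the README. To see what that output should look like without a cluster, the same transformation chain can be sketched on plain Scala collections. This is only an illustration: `LocalWordCount`, `countWords`, and the sample lines are hypothetical names and data, not part of the project above, and `groupBy` stands in for the shuffle that `reduceByKey` performs in Spark.

```scala
// Plain-collections sketch of the word count in SparkPi; needs no Spark dependency.
object LocalWordCount {

  // Mirrors text.flatMap(_.split(' ')).map((_, 1)).reduceByKey(_ + _)
  def countWords(lines: Seq[String]): Map[String, Int] =
    lines
      .flatMap(_.split(' '))      // split each line on single spaces
      .map(word => (word, 1))     // pair every token with a count of 1
      .groupBy(_._1)              // local stand-in for the reduceByKey shuffle
      .map { case (word, pairs) => (word, pairs.map(_._2).sum) }

  def main(args: Array[String]): Unit = {
    // Hypothetical sample input standing in for README.md
    val sample = Seq("spark is fast", "spark is simple")
    countWords(sample).foreach(println)
  }
}
```

For the sample input, `spark` and `is` are each counted twice and `fast` and `simple` once; the order in which the tuples print is not guaranteed. Because the split is on a single space character, runs of consecutive spaces produce empty-string tokens, which are counted like any other word.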
