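I started out working on a nearest-feature query, and then it occurred to me that I could combine a front end and a back end to build a dynamic display of hospital information.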

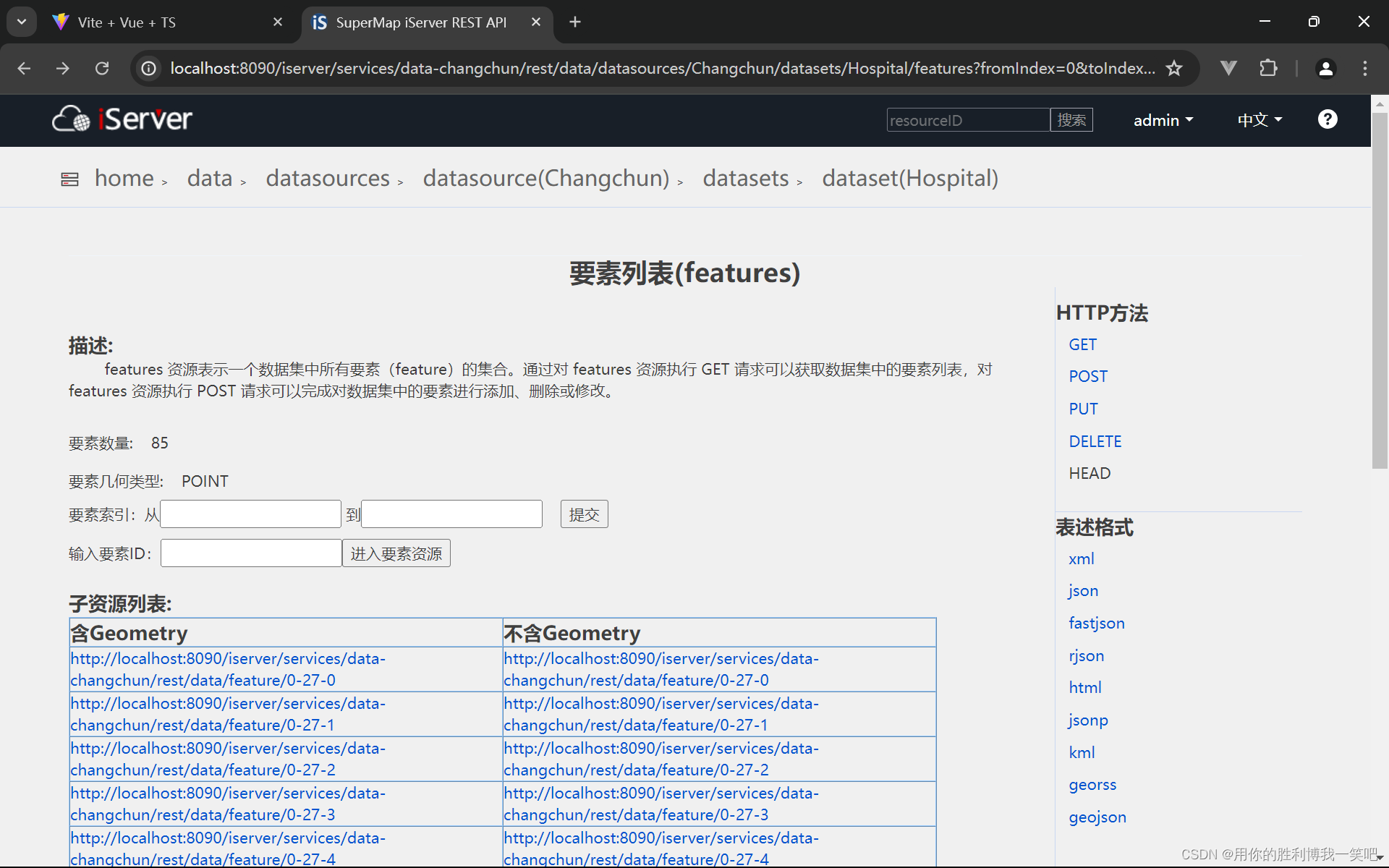

First, open iServer and look at the list of hospitals.

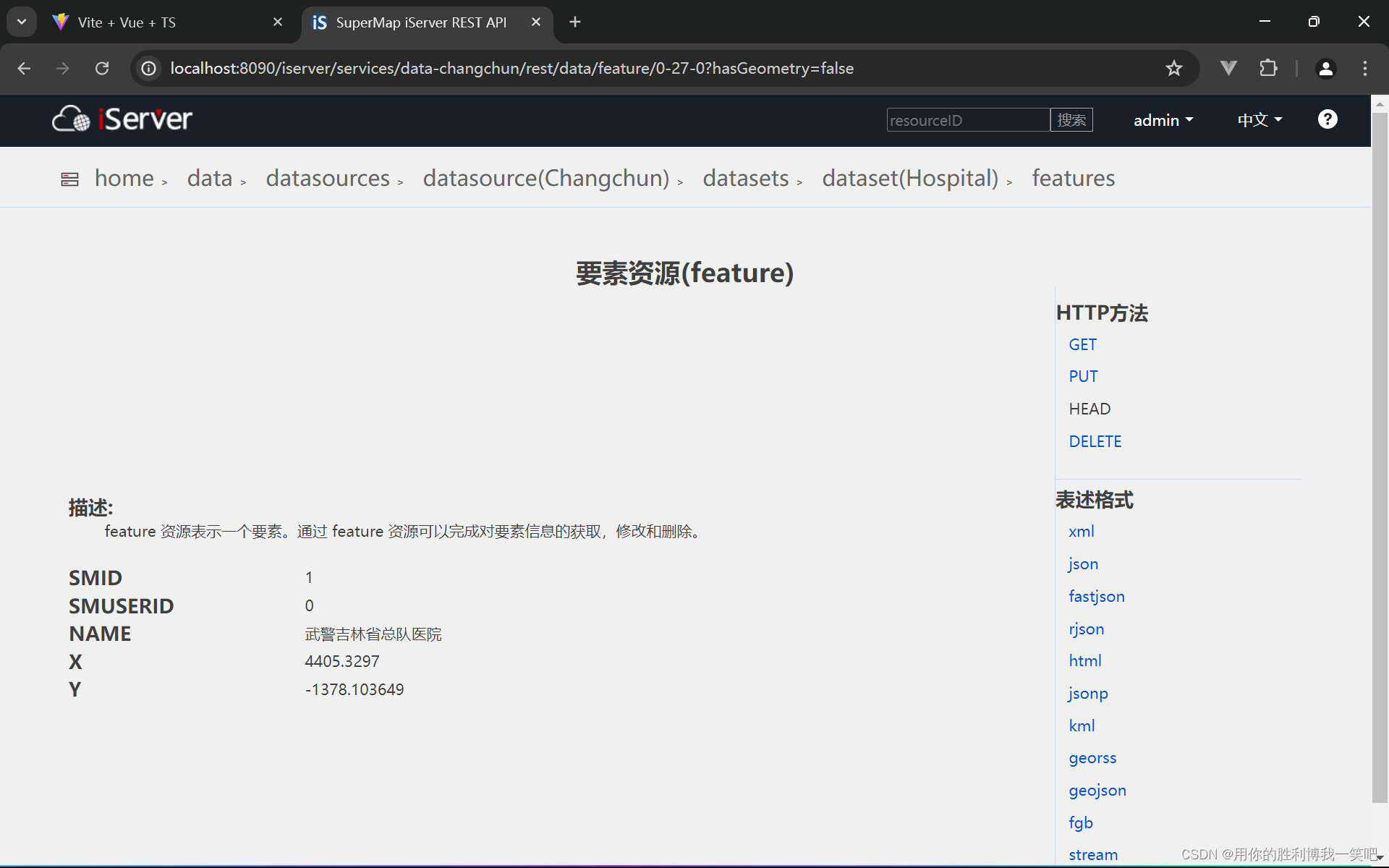

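Then open any one of them. Each one holds the hospital's id and name. With the name we can look up more about the hospital (its grade, founding date, and so on).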

http://localhost:8090/iserver/services/data-changchun/rest/data/feature/0-27-0

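Comparing URLs: for the list pages, everything before the query string stays the same and only fromIndex and toIndex change. For the detail pages, like the one above, only the last number in 0-27-? changes. OK, open PyCharm and start step one: extracting the hospital names and ids.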
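To make the two URL patterns concrete, here is a small sketch that only builds the URLs and makes no requests. The prefixes are copied from the iServer URLs in this post, the step of 17 and the bound of 70 mirror the loop in the scraping script, and the helper names `list_page_urls` and `feature_url` are my own, hypothetical ones.

```python
# Sketch: build the iServer URLs described above (no network access).
LIST_URL = ('http://localhost:8090/iserver/services/data-changchun/rest/data/'
            'datasources/Changchun/datasets/Hospital/features'
            '?fromIndex={start}&toIndex={end}')
FEATURE_URL = 'http://localhost:8090/iserver/services/data-changchun/rest/data/feature/0-27-{i}'


def list_page_urls(total=70, step=17):
    """List pages: only fromIndex/toIndex change (same paging as the scraping script)."""
    return [LIST_URL.format(start=s, end=s + step) for s in range(0, total, step)]


def feature_url(i):
    """Detail page: only the last number of 0-27-? changes."""
    return FEATURE_URL.format(i=i)


if __name__ == '__main__':
    for url in list_page_urls():
        print(url)
    print(feature_url(0))
```

`feature_url(0)` reproduces the detail URL shown earlier, which is a quick way to check the pattern before scraping anything.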

import pymssql #引入对数据库操作模块

import requests #等会获得html

from bs4 import BeautifulSoup #

import re #

serverName = '127.0.0.1'

userName = 'sa'

passWord = 'xxxx' #密码

database='hosipital'

conn = pymssql.connect(server=serverName,user=userName,password=passWord,database=database)

cursor = conn.cursor()

creTab='CREATE TABLE inf ( id int, name varchar(100));' #创建一张表

cursor.execute(creTab)

startindex=0

count=0

for startindex in range(0,70,17):

endindex=startindex+17

baseUrl = f'http://localhost:8090/iserver/services/data-changchun/rest/data/datasources/Changchun/datasets/Hospital/features?fromIndex={startindex}&toIndex={endindex}'

r=requests.get(baseUrl)

r.encoding='utf-8'

html=r.text

soup = BeautifulSoup(html, 'html.parser')

tds=soup.find_all('td')

for td in tds:

a=td.find('a')

if a:

txt=a.get('href')

if re.match('^http.*false$',txt):

r2=requests.get(txt)

html2=r2.text

soup2=BeautifulSoup(html2,'html.parser')

div=soup2.find('div',class_='bodyDIv').find('div',id="resourceContent")

restBody=div.find('div',class_='restBody')

table=restBody.find('table')

td=table.find_all('tr')[2].find_all('td')[1]

count=count+1

print(count)

insert = f"insert into inf (id,name) values ({count},'{td.text}')"

cursor.execute(insert) #写入数据库

conn.commit()

cursor.close()

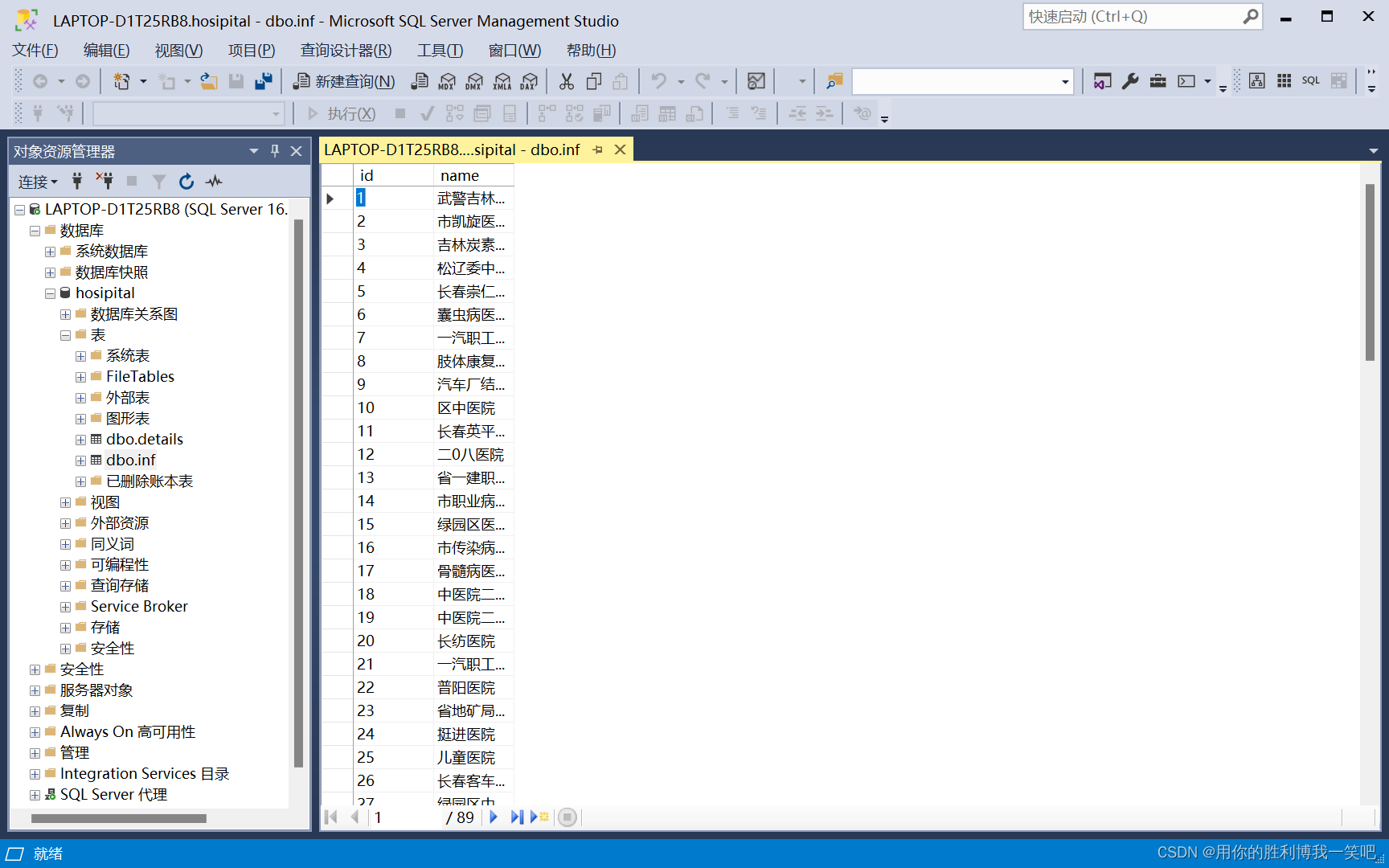

conn.close()得到的结果差不多长这样

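There's a site for looking up hospital data, 'https://y.dxy.cn/hospital'. Go in, search for a few hospitals, and watch how the URL changes; the pattern is easy to spot.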
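As a sketch of that pattern, the function below builds the search URL with the same three query parameters the scripts use (`page`, `name`, `location=220000`; 220000 is the administrative code for Jilin Province). The function name `search_url` and the sample hospital name are hypothetical. `urlencode` percent-encodes the Chinese name; passing `params=` to `requests.get` does the same encoding for you.

```python
# Sketch: build the y.dxy.cn search URL for one hospital name (no network access).
from urllib.parse import parse_qs, urlencode, urlparse

SEARCH_BASE = 'https://y.dxy.cn/hospital/'


def search_url(name, page=1, location='220000'):
    """Same query parameters as the scripts; the name is percent-encoded as UTF-8."""
    return SEARCH_BASE + '?' + urlencode({'page': page, 'name': name, 'location': location})


if __name__ == '__main__':
    url = search_url('长春市中心医院')  # hypothetical example name
    print(url)
    # decoding the query string gives the original parameters back
    print(parse_qs(urlparse(url).query))
```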

The second script reads the names back from `inf` and searches for each one:

```python
import pymssql
import requests
from bs4 import BeautifulSoup

serverName = '127.0.0.1'
userName = 'sa'
passWord = 'xxxxx'
database = 'hosipital'

conn = pymssql.connect(server=serverName, user=userName, password=passWord, database=database, charset='GBK')
cursor = conn.cursor()

# cursor.execute('drop table details')
cursor.execute('CREATE TABLE details (id int, name varchar(100), location varchar(100), type varchar(20), edge varchar(30), level varchar(20), time varchar(40))')
conn.commit()

cursor.execute('SELECT * FROM inf')
ls = cursor.fetchall()

count = 0
for l in ls:
    count = count + 1
    print(f'Processing #{count}')
    name = l[1]
    print(name)
    baseurl = f'https://y.dxy.cn/hospital/?page=1&name={name}&location=220000'
    r = requests.get(baseurl)
    r.encoding = 'utf-8'
    soup = BeautifulSoup(r.text, 'html.parser')
    # number of search hits
    num = soup.find('div', class_='data').find('span').text
    if num == '0':
        # no match: store only the id and name
        cursor.execute('insert into details (id, name) values (%d, %s)', (l[0], name))
        conn.commit()
    else:
        print(f'Found {num} result(s)')
        div = soup.find('div', class_='main-listsbox')
        table = div.find('div', class_='table')
        tbody = table.find('div', class_='tbody')
        for tr in tbody.find_all('div', class_='tr'):
            tds = tr.find_all('div', class_='td')
            # keep only hospitals located in Changchun
            if '长春市' in tds[1].text:
                title = tds[0].find('div', class_='hospital-title').text
                insert = 'insert into details (id, name, location, type, edge, level, time) values (%d, %s, %s, %s, %s, %s, %s)'
                da = (l[0], title.strip(), tds[1].text, tds[2].text, tds[3].text, tds[4].text, tds[6].text)
                cursor.execute(insert, da)
                conn.commit()

cursor.close()
conn.close()
```
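The Changchun filter and the column mapping are the only real logic in that loop, so here they are pulled out as a pure function that can be checked without hitting the site or the database. The name `to_row` is hypothetical; the cell positions (0 title, 1 location, 2 type, 3 edge, 4 level, 6 time, 5 skipped) are taken from the script.

```python
# Sketch: the Changchun filter and column mapping from the script above, as a pure function.
from typing import Optional, Sequence, Tuple

Row = Tuple[int, str, str, str, str, str, str]


def to_row(hospital_id: int, title: str, cells: Sequence[str]) -> Optional[Row]:
    """Map one result row's cell texts to the details columns, or None if not in Changchun."""
    if '长春市' not in cells[1]:
        return None
    # (id, name, location, type, edge, level, time); cell 5 is not stored
    return (hospital_id, title.strip(), cells[1], cells[2], cells[3], cells[4], cells[6])
```

In the script, `cells` would be `[td.text for td in tds]` and `title` the `hospital-title` text; a non-None result can go straight into `cursor.execute(insert, row)`.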

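For some reason, though, the data in the database ended up garbled. When scraping the hospital names, the database connection used UTF-8 and the names were written correctly. This time the pages were also decoded as UTF-8, yet the result was garbled, and switching to GBK was garbled too. I don't know why, but it doesn't affect the steps below. First, here's the garbled table.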
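I never found the actual cause, so this is only a guess at the mechanism: garbled Chinese usually means bytes were encoded with one charset and decoded with another, and the two pymssql connections here use different charsets (the default, UTF-8, in the first script and `charset='GBK'` in the second). The snippet below just reproduces that kind of mismatch in plain Python; it does not touch SQL Server.

```python
# Sketch: how an encode/decode charset mismatch produces garbled Chinese text.
original = '长春市'

utf8_bytes = original.encode('utf-8')  # 3 bytes per character here
gbk_bytes = original.encode('gbk')     # 2 bytes per character here

# Decoding with the same charset that was used for encoding round-trips cleanly
assert utf8_bytes.decode('utf-8') == original
assert gbk_bytes.decode('gbk') == original

# Decoding UTF-8 bytes as GBK gives different characters; errors='replace'
# turns undecodable bytes into U+FFFD instead of raising UnicodeDecodeError
garbled = utf8_bytes.decode('gbk', errors='replace')
print(len(utf8_bytes), len(gbk_bytes))  # 9 6
print(garbled)
```

If you want to dig further, storing Chinese text in `nvarchar` columns instead of `varchar` is one thing worth trying, since `nvarchar` holds Unicode regardless of the column's code page.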

I spent ages on it and couldn't fix it, so I just merged the two scripts into one, and that worked:

```python
import re

import pymssql
import requests
from bs4 import BeautifulSoup

serverName = '127.0.0.1'
userName = 'sa'
passWord = 'xxxxx'
database = 'hosipital'

conn = pymssql.connect(server=serverName, user=userName, password=passWord, database=database)
cursor = conn.cursor()

cursor.execute('CREATE TABLE details (id int, name varchar(100), location varchar(100), type varchar(20), edge varchar(30), level varchar(20), time varchar(40))')
conn.commit()

count = 0
for startindex in range(0, 70, 17):
    endindex = startindex + 17
    baseUrl = f'http://localhost:8090/iserver/services/data-changchun/rest/data/datasources/Changchun/datasets/Hospital/features?fromIndex={startindex}&toIndex={endindex}'
    r = requests.get(baseUrl)
    r.encoding = 'utf-8'
    soup = BeautifulSoup(r.text, 'html.parser')
    for td in soup.find_all('td'):
        a = td.find('a')
        if not a:
            continue
        txt = a.get('href')
        if not (txt and re.match('^http.*false$', txt)):
            continue
        # 1) read the hospital name from the iServer feature page
        r2 = requests.get(txt)
        soup2 = BeautifulSoup(r2.text, 'html.parser')
        div = soup2.find('div', class_='bodyDIv').find('div', id='resourceContent')
        table = div.find('div', class_='restBody').find('table')
        name = table.find_all('tr')[2].find_all('td')[1].text
        count = count + 1
        print(f'Processing #{count}')

        # 2) search for that name on y.dxy.cn; the name goes straight from the
        #    iServer page into the search instead of being read back from inf
        baseurl = f'https://y.dxy.cn/hospital/?page=1&name={name}&location=220000'
        r = requests.get(baseurl)
        r.encoding = 'utf-8'
        soup_dxy = BeautifulSoup(r.text, 'html.parser')
        num = soup_dxy.find('div', class_='data').find('span').text
        if num == '0':
            cursor.execute('insert into details (id, name) values (%d, %s)', (count, name))
            conn.commit()
        else:
            print(f'Found {num} result(s)')
            listbox = soup_dxy.find('div', class_='main-listsbox')
            tbody = listbox.find('div', class_='table').find('div', class_='tbody')
            for tr in tbody.find_all('div', class_='tr'):
                tds = tr.find_all('div', class_='td')
                # keep only hospitals located in Changchun
                if '长春市' in tds[1].text:
                    title = tds[0].find('div', class_='hospital-title').text
                    insert = 'insert into details (id, name, location, type, edge, level, time) values (%d, %s, %s, %s, %s, %s, %s)'
                    da = (count, title.strip(), tds[1].text, tds[2].text, tds[3].text, tds[4].text, tds[6].text)
                    cursor.execute(insert, da)
                    conn.commit()

cursor.close()
conn.close()
```

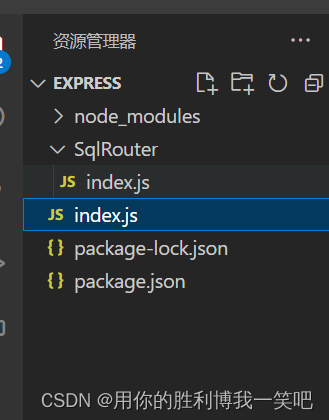

Next up: Node.js.

index.js looks like this. It uses Express with a router and no extra middleware:

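```javascript
const express = require('express')
const app = express()
const SqlRouter = require('./SqlRouter')

// every path under /hosipital is handled by SqlRouter
app.use('/hosipital', SqlRouter)

// fallback for any other path
app.get('*', (req, res) => {
  res.send('Wrong URL, please check it')
})

app.listen(3090, () => {
  console.log('begin listen')
})
```

SqlRouter.js looks like this: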

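```javascript
const express = require('express')
const sql = require('mssql')

const router = express.Router()

const config = {
  user: 'sa',
  password: 'xxxx',
  server: 'localhost',
  database: 'hosipital',
  port: 1433,
  options: {
    encrypt: false // this one is important: the connection kept failing without it
  }
}

// wait for the connection, then look up one row of inf by id
const query = async (num) => {
  await sql.connect(config)
  const request = new sql.Request()
  // pass the id as a typed parameter instead of splicing it into the SQL string
  request.input('id', sql.Int, Number(num))
  const { recordset } = await request.query('select * from inf where id = @id')
  return recordset
}

router.get('/inf/:id', async (req, res) => {
  try {
    res.send(await query(req.params.id))
  } catch (err) {
    res.status(500).send(err.message)
  }
})

router.get('*', (req, res) => {
  res.send('<h1>Hospital information home page</h1>')
})

module.exports = router
```

I used the mssql package. Its config has one very important parameter, encrypt: at first the connection kept failing, and setting it fixed that.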

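The query function is async: it waits until the database connection is up, then looks up the row whose id was passed in the URL. So the Ajax request we send later has to include the id. That's all there is to the back end.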
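Before wiring up the front end, it can help to hit the route directly. This is a minimal sketch using only the standard library; it assumes index.js is running on port 3090 as above, and the function names are hypothetical. `res.send` with an array replies with JSON, so the body is the recordset as a JSON array of rows.

```python
# Sketch: call the Express route /hosipital/inf/:id directly to check the back end.
import json
from urllib.request import urlopen

BACKEND = 'http://localhost:3090'  # the port index.js listens on


def inf_url(hospital_id):
    """URL of the route defined in SqlRouter."""
    return f'{BACKEND}/hosipital/inf/{int(hospital_id)}'


def first_name(body):
    """The body is a JSON array of rows; return the first row's name, or None if empty."""
    rows = json.loads(body)
    return rows[0]['name'] if rows else None


def fetch_name(hospital_id):
    # needs the Express server to be running
    with urlopen(inf_url(hospital_id), timeout=5) as resp:
        return first_name(resp.read().decode('utf-8'))
```

With the server running, `fetch_name(1)` should return the name stored in `inf` for id 1; an empty array means there is no row with that id.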

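Now for the Leaflet front end. First up is the proxy that gets around cross-origin restrictions, configured in vite.config.ts:

```typescript
import { defineConfig } from 'vite'
import commonjs from 'vite-plugin-commonjs'
import vue from '@vitejs/plugin-vue'
import path from 'path'

// https://vitejs.dev/config/
export default defineConfig({
  plugins: [commonjs(), vue()],
  resolve: {
    alias: {
      '@': path.resolve(__dirname, 'src')
    }
  },
  server: {
    proxy: {
      '/api': {                                        // requests whose path starts with /api are proxied
        target: 'http://localhost:3090',               // the back-end address
        changeOrigin: true,                            // set the Host header to the target's host
        rewrite: (path) => path.replace(/^\/api/, '')  // strip the prefix (skip this if the back end itself uses /api)
      }
    }
  }
})
```

Here's how I understand it: any request sent to the Vite dev server's proxy must start with /api, and when the proxy forwards it to the back end, rewrite strips the /api prefix. That's why the axios wrapper has to set baseURL to /api.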
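Vite does this forwarding internally, so nothing here needs to run in your project; the sketch below just mirrors the `rewrite` regex in Python to trace one request end to end. The example path uses the `/hosipital/inf/:id` route from SqlRouter with an arbitrary id, and the function names are hypothetical.

```python
# Sketch: trace one request through the axios baseURL and the Vite proxy rewrite.
import re

BASE_URL = '/api'                 # axios baseURL
TARGET = 'http://localhost:3090'  # proxy target (the Express server)


def browser_path(path):
    """What axios actually requests from the Vite dev server."""
    return BASE_URL + path


def forwarded_url(path):
    """What the proxy sends to Express: the same regex as rewrite, then the target prefix."""
    return TARGET + re.sub(r'^/api', '', path)


if __name__ == '__main__':
    p = browser_path('/hosipital/inf/3')
    print(p)                 # /api/hosipital/inf/3
    print(forwarded_url(p))  # http://localhost:3090/hosipital/inf/3
```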

import axios from 'axios'

const request=axios.create({

baseURL:'/api',

timeout:5000

})

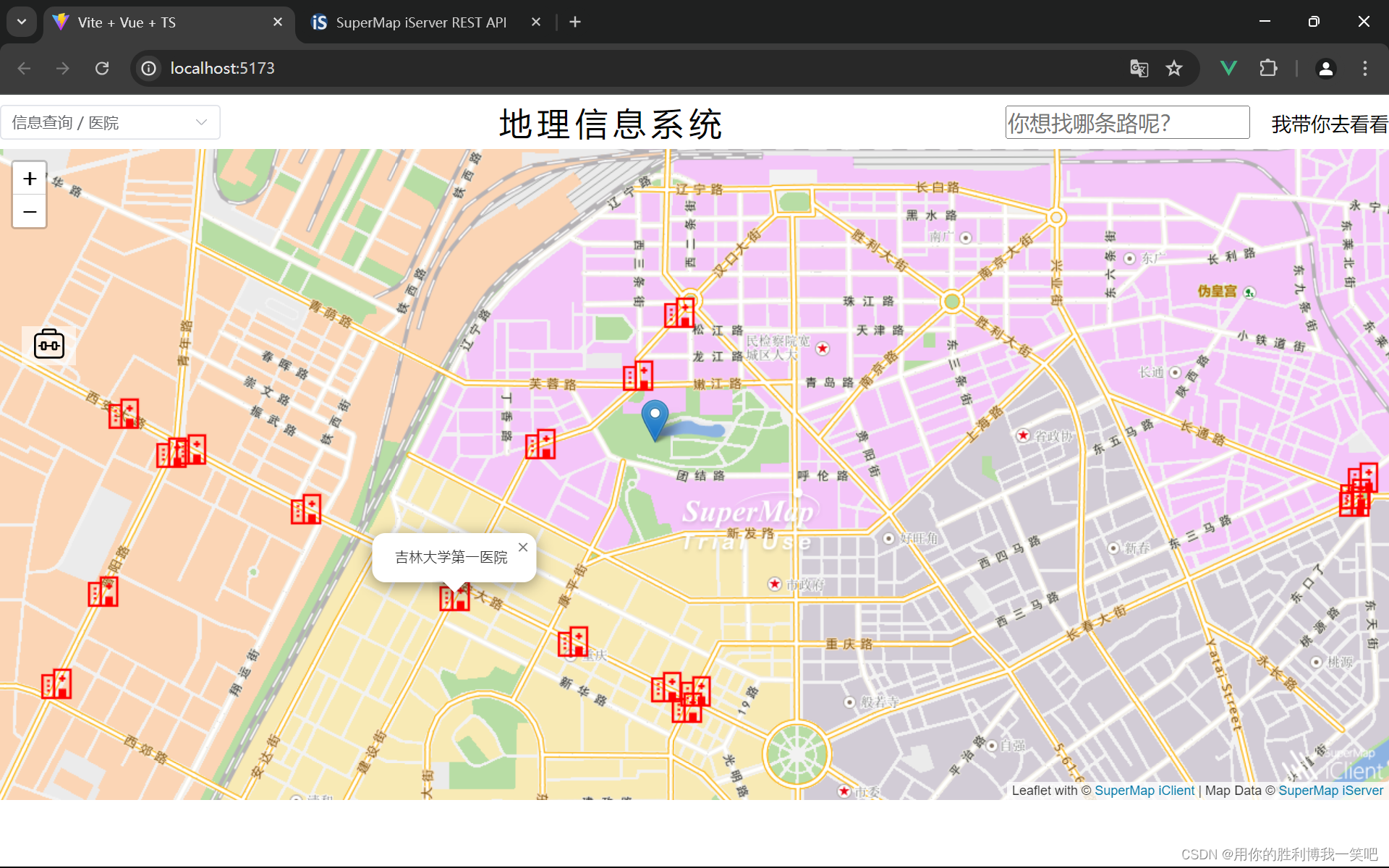

export default requestok封装结束,接下来就剩最后一步了,发送我是在components/Top/index.vue里面实现,下面只展示,点击marker然后弹出框展示一下名字

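```typescript
// L (Leaflet), request (the axios wrapper), re (the feature query result),
// myIcon and map (a ref holding the Leaflet map) come from the surrounding component
L.geoJSON(re.result.features, {
  pointToLayer: function (geoJsonPoint: any, latlng: any) {
    const marker = L.marker(latlng, { icon: myIcon })
    marker.on('click', async function () {
      // ask the back end (through the /api proxy) for this feature's row in inf
      const content: any = await request.get(`/hosipital/inf/${geoJsonPoint.id}`)
      marker.bindPopup(`${content.data[0].name}`).openPopup()
    })
    map.value.off('click')
    return marker
  }
}).addTo(map.value)
```

The final result is shown in the figure.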

OK, that's it. If you have any questions, feel free to ask.
