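Lab Deployment

In production, scrape targets are discovered automatically through the Kubernetes API server (kube-apiserver).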

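1. Create a service account and bind it to a cluster role

    # Create the namespace first if it does not exist yet
    kubectl create namespace monitor-sa

    # Create the service account
    kubectl create serviceaccount monitor -n monitor-sa

    # Bind the account to the cluster-admin role (a ClusterRoleBinding is cluster-scoped, so no -n is needed)
    kubectl create clusterrolebinding monitor-clusterrolebinding --clusterrole=cluster-admin --serviceaccount=monitor-sa:monitor

2. Deploy node-exporter to discover the nodes

First deploy node-exporter so that every node can be discovered: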

    apiVersion: apps/v1
    kind: DaemonSet
    metadata:
      name: node-exporter
      namespace: monitor-sa
      labels:
        name: node-exporter
    spec:
      selector:
        matchLabels:
          name: node-exporter
      template:
        metadata:
          labels:
            name: node-exporter
        spec:
          # Share the node's PID, IPC, and network namespaces
          hostPID: true
          hostIPC: true
          hostNetwork: true
          containers:
          - name: node-exporter
            image: prom/node-exporter
            ports:
            - containerPort: 9100
            resources:
              limits:
                cpu: "0.5"
            # Give the container full privileges on the node
            securityContext:
              privileged: true
            # Collect the node's system configuration, network configuration,
            # hardware devices, and host information
            args:
            - --path.procfs
            - /host/proc
            - --path.sysfs
            - /host/sys
            - --collector.filesystem.ignored-mount-points
            # Single quotes only; nested double quotes would become part of the regex
            - '^/(sys|proc|dev|host|etc)($|/)'
            volumeMounts:
            - name: dev
              mountPath: /host/dev
            - name: proc
              mountPath: /host/proc
            - name: sys
              mountPath: /host/sys
            - name: rootfs
              mountPath: /rootfs
          volumes:
          - name: proc
            hostPath:
              path: /proc
          - name: dev
            hostPath:
              path: /dev
          - name: sys
            hostPath:
              path: /sys
          - name: rootfs
            hostPath:
              path: /

3. Create a ConfigMap to deliver the configuration file

    apiVersion: v1
    kind: ConfigMap
    metadata:
      labels:
        app: prometheus
      name: prometheus-config
      namespace: monitor-sa
    data:
      prometheus.yml: |
        global:
          scrape_interval: 15s
          scrape_timeout: 10s
          evaluation_interval: 1m
        scrape_configs:
        - job_name: 'kubernetes-node'
          kubernetes_sd_configs:
          - role: node
          relabel_configs:
          - source_labels: [__address__]
            regex: '(.*):10250'
            replacement: '${1}:9100'
            target_label: __address__
            action: replace
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
        - job_name: 'kubernetes-node-cadvisor'
          kubernetes_sd_configs:
          - role: node
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - target_label: __address__
            replacement: kubernetes.default.svc:443
          - source_labels: [__meta_kubernetes_node_name]
            regex: (.+)
            target_label: __metrics_path__
            replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
        - job_name: 'kubernetes-apiserver'
          kubernetes_sd_configs:
          - role: endpoints
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          relabel_configs:
          - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
            action: keep
            regex: default;kubernetes;https
        - job_name: 'kubernetes-service-endpoints'
          kubernetes_sd_configs:
          - role: endpoints
          relabel_configs:
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
            action: replace
            target_label: __scheme__
            regex: (https?)
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
            action: replace
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
            action: replace
            target_label: __address__
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: kubernetes_name
        - job_name: 'kubernetes-ingresses'
          kubernetes_sd_configs:
          - role: ingress
          relabel_configs:
          - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path]
            regex: (.+);(.+);(.+)
            replacement: ${1}://${2}${3}
            target_label: __param_target
          - target_label: __address__
            replacement: blackbox-exporter.example.com:9115
          - source_labels: [__param_target]
            target_label: instance
          - action: labelmap
            regex: __meta_kubernetes_ingress_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_ingress_name]
            target_label: kubernetes_name
        - job_name: 'kubernetes-pods'
          kubernetes_sd_configs:
          - role: pod
          relabel_configs:
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
            action: keep
            regex: true
          - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
            action: replace
            target_label: __metrics_path__
            regex: (.+)
          - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
            action: replace
            regex: ([^:]+)(?::\d+)?;(\d+)
            replacement: $1:$2
            target_label: __address__
          - action: labelmap
            regex: __meta_kubernetes_pod_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: kubernetes_pod_name
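
To make the `keep` and address-rewrite rules of the `kubernetes-pods` job more concrete, here is a minimal Python sketch that imitates them. It is an illustration under assumptions, not how Prometheus is implemented: the helper names (`joined`, `keep`, `rewrite_address`) and the two sample pods are hypothetical, while the regexes are the ones from the configuration. It relies on two documented behaviours: source label values are joined with `;`, and the regex must match the whole joined string.

```python
import re

PORT_LABEL = "__meta_kubernetes_pod_annotation_prometheus_io_port"
SCRAPE_LABEL = "__meta_kubernetes_pod_annotation_prometheus_io_scrape"


def joined(labels, source_labels, separator=";"):
    """Join source label values with the separator; missing labels count as empty."""
    return separator.join(labels.get(name, "") for name in source_labels)


def keep(labels, source_labels, regex):
    """`keep` action: the target survives only if the anchored regex matches."""
    return re.fullmatch(regex, joined(labels, source_labels)) is not None


def rewrite_address(labels):
    """`replace` action: swap the port in __address__ for the annotated port."""
    value = joined(labels, ["__address__", PORT_LABEL])
    match = re.fullmatch(r"([^:]+)(?::\d+)?;(\d+)", value)
    if match is None:
        return labels["__address__"]  # no port annotation: keep the discovered address
    return f"{match.group(1)}:{match.group(2)}"


# Hypothetical discovered pods (addresses and ports are made up for the example).
pods = [
    {"__address__": "10.244.1.12:8080", SCRAPE_LABEL: "true", PORT_LABEL: "9102"},
    {"__address__": "10.244.2.11:8080"},  # no prometheus.io/scrape annotation
]

targets = [rewrite_address(p) for p in pods if keep(p, [SCRAPE_LABEL], "true")]
print(targets)  # ['10.244.1.12:9102']
```

Only the annotated pod remains, and it is scraped on the port named in its `prometheus.io/port` annotation rather than the port that discovery reported.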

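Annotated version

    apiVersion: v1
    kind: ConfigMap
    metadata:
      labels:
        app: prometheus
      name: prometheus-config
      namespace: monitor-sa
    data:
      prometheus.yml: |
        global:
          scrape_interval: 15s
          scrape_timeout: 10s
          evaluation_interval: 1m
        scrape_configs:
        - job_name: 'kubernetes-node'
          # *_sd_configs is Prometheus configuration syntax for service discovery
          # (not PromQL); this one uses Kubernetes service discovery
          kubernetes_sd_configs:
          # The node role discovers every node in the cluster; the target
          # address defaults to the kubelet's HTTP port
          - role: node
          # Relabeling rules
          relabel_configs:
          # Read the original label
          - source_labels: [__address__]
            # Match addresses that end in port 10250
            regex: '(.*):10250'
            # ${1} is the captured IP, so the new address is for example
            # 192.168.233.81:9100, which is written back to __address__
            replacement: '${1}:9100'
            target_label: __address__
            # Replace action
            action: replace
          # Copy every label matching the regex below so that the node's labels
          # are kept; otherwise only instance would be shown. These rules exist
          # mainly to fetch and match information about the nodes.
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
        - job_name: 'kubernetes-node-cadvisor'
          kubernetes_sd_configs:
          - role: node
          scheme: https
          tls_config:
            ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
          bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
          relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          # The remaining rules are the same as in the full configuration above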
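
The two rules annotated for the `kubernetes-node` job can be traced with a small Python sketch. This is only an approximation under stated assumptions: the function names are hypothetical, the label set is a made-up example built from the node IP and hostname that appear in this post, and Python's `re.fullmatch` stands in for Prometheus's fully anchored regex matching.

```python
import re


def replace_address(address):
    """Mimic the `replace` rule: rewrite kubelet port 10250 to node-exporter port 9100."""
    match = re.fullmatch(r"(.*):10250", address)
    return f"{match.group(1)}:9100" if match else address


def labelmap(labels, regex):
    """Mimic `labelmap`: copy each matching label to a new label named by group 1."""
    mapped = dict(labels)
    for name, value in labels.items():
        match = re.fullmatch(regex, name)
        if match:
            mapped[match.group(1)] = value
    return mapped


# Example label set as node discovery might present it (values are illustrative).
discovered = {
    "__address__": "192.168.233.81:10250",
    "__meta_kubernetes_node_label_kubernetes_io_hostname": "master01",
}

result = labelmap(discovered, r"__meta_kubernetes_node_label_(.+)")
result["__address__"] = replace_address(result["__address__"])

print(result["__address__"])             # 192.168.233.81:9100
print(result["kubernetes_io_hostname"])  # master01
```

Labels that start with `__` are dropped after relabeling, which is why `labelmap` has to copy the node labels to plain names if they should show up on the scraped series.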

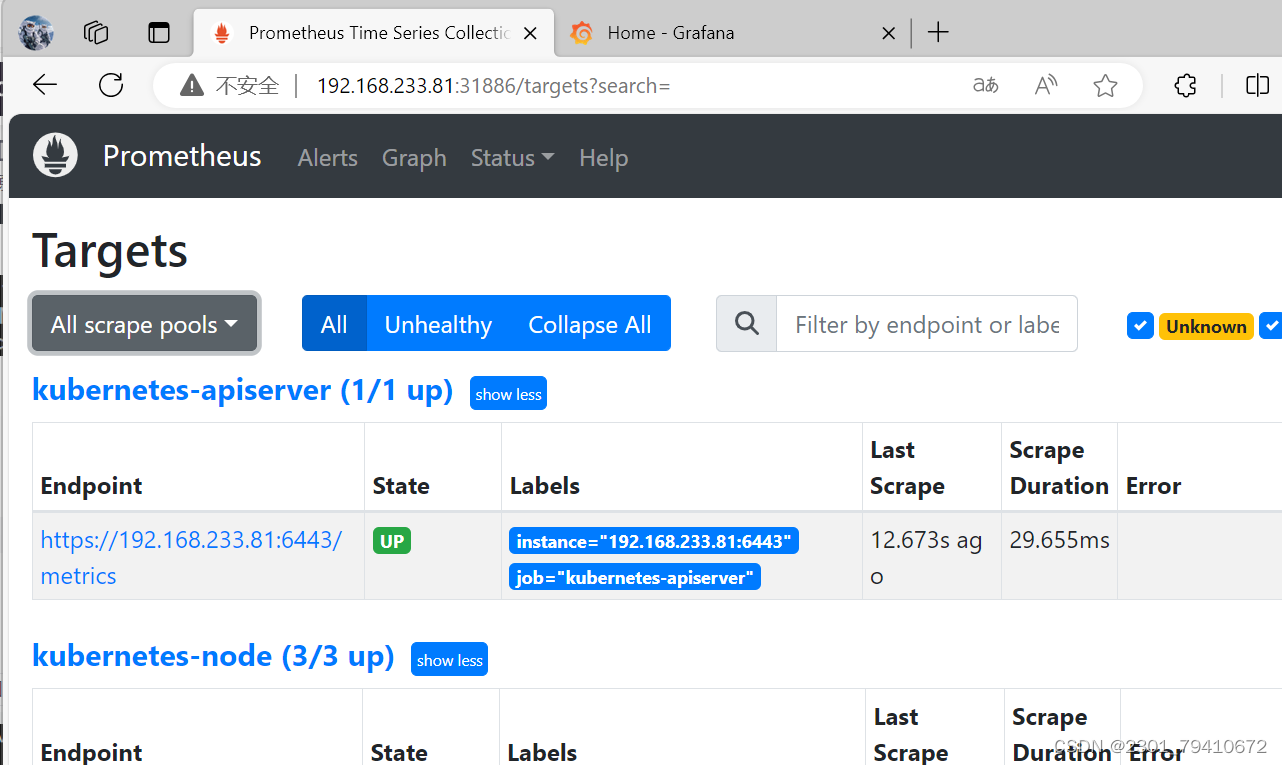

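3.1. Hot-reloading after modifying the ConfigMap

    curl -X POST -Ls http://10.244.1.45:9090/-/reload

This is the hot-reload method: Prometheus re-reads its configuration without restarting. Do not delete the Pod to apply a change; in production, always hot-reload. The endpoint is only enabled when Prometheus runs with --web.enable-lifecycle, as in the Deployment in step 4.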
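
The same reload call can be scripted. The following is a minimal sketch using only Python's standard library; the function names are hypothetical, and the address is the Pod IP from the curl example, which will differ in any other cluster.

```python
import urllib.request


def build_reload_request(base_url: str) -> urllib.request.Request:
    """Build the POST request that asks Prometheus to reload its configuration."""
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/-/reload", data=b"", method="POST"
    )


def reload_prometheus(base_url: str, timeout: float = 5.0) -> int:
    """Send the reload request and return the HTTP status code.

    urlopen raises urllib.error.HTTPError if Prometheus rejects the request,
    for example when the lifecycle API is not enabled.
    """
    with urllib.request.urlopen(build_reload_request(base_url), timeout=timeout) as resp:
        return resp.status


# Building the request does not touch the network; call reload_prometheus()
# from a machine that can reach the Pod IP to actually trigger the reload.
request = build_reload_request("http://10.244.1.45:9090")
print(request.get_method(), request.full_url)
```

Separating request construction from sending keeps the sketch easy to check without a running cluster.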

When restarting in production, never restart by deleting the Pod, because data can be lost.

4. Deploy Prometheus:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: prometheus-server
      namespace: monitor-sa
      labels:
        app: prometheus
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: prometheus
          component: server
      template:
        metadata:
          labels:
            app: prometheus
            component: server
          annotations:
            # 'false' keeps this Pod out of the annotation-based kubernetes-pods job
            prometheus.io/scrape: 'false'
        spec:
          # Run as the monitor service account so Prometheus can read cluster information
          serviceAccountName: monitor
          initContainers:
          - name: init-chmod
            # busybox is a minimal image that bundles common Unix utilities
            image: busybox:latest
            command: ['sh','-c','chmod -R 777 /prometheus;chmod -R 777 /etc']
            volumeMounts:
            - mountPath: /prometheus
              name: prometheus-storage-volume
            - mountPath: /etc/localtime
              name: timezone
          containers:
          - name: prometheus
            image: prom/prometheus:v2.45.0
            command:
            - prometheus
            - --config.file=/etc/prometheus/prometheus.yml
            - --storage.tsdb.path=/prometheus
            # --storage.tsdb.retention is deprecated; retention.time is its replacement
            - --storage.tsdb.retention.time=720h
            # Enables the /-/reload endpoint used for hot reloads
            - --web.enable-lifecycle
            ports:
            - containerPort: 9090
            volumeMounts:
            - name: prometheus-config
              mountPath: /etc/prometheus/prometheus.yml
              # Note: files mounted via subPath are not refreshed when the ConfigMap changes
              subPath: prometheus.yml
            - mountPath: /prometheus/
              name: prometheus-storage-volume
            - name: timezone
              mountPath: /etc/localtime
          volumes:
          - name: prometheus-config
            configMap:
              name: prometheus-config
              defaultMode: 0777
          - name: prometheus-storage-volume
            hostPath:
              # The directory must already exist on the node
              path: /data
              type: Directory
          - name: timezone
            hostPath:
              path: /usr/share/zoneinfo/Asia/Shanghai

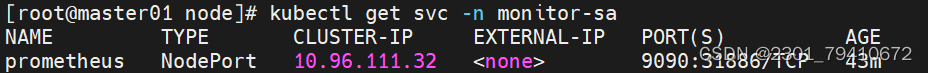

5. Deploy a Service to provide external access:

    apiVersion: v1
    kind: Service
    metadata:
      name: prometheus
      namespace: monitor-sa
      labels:
        app: prometheus
    spec:
      type: NodePort
      ports:
      - port: 9090
        targetPort: 9090
        protocol: TCP
      selector:
        app: prometheus
        component: server

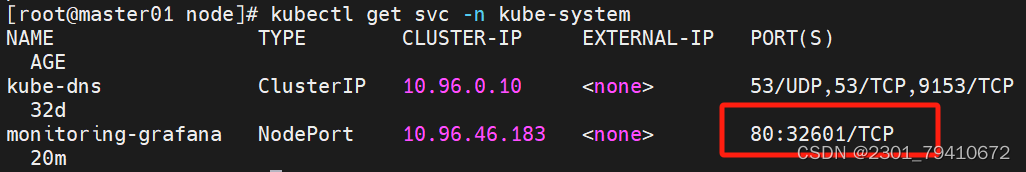

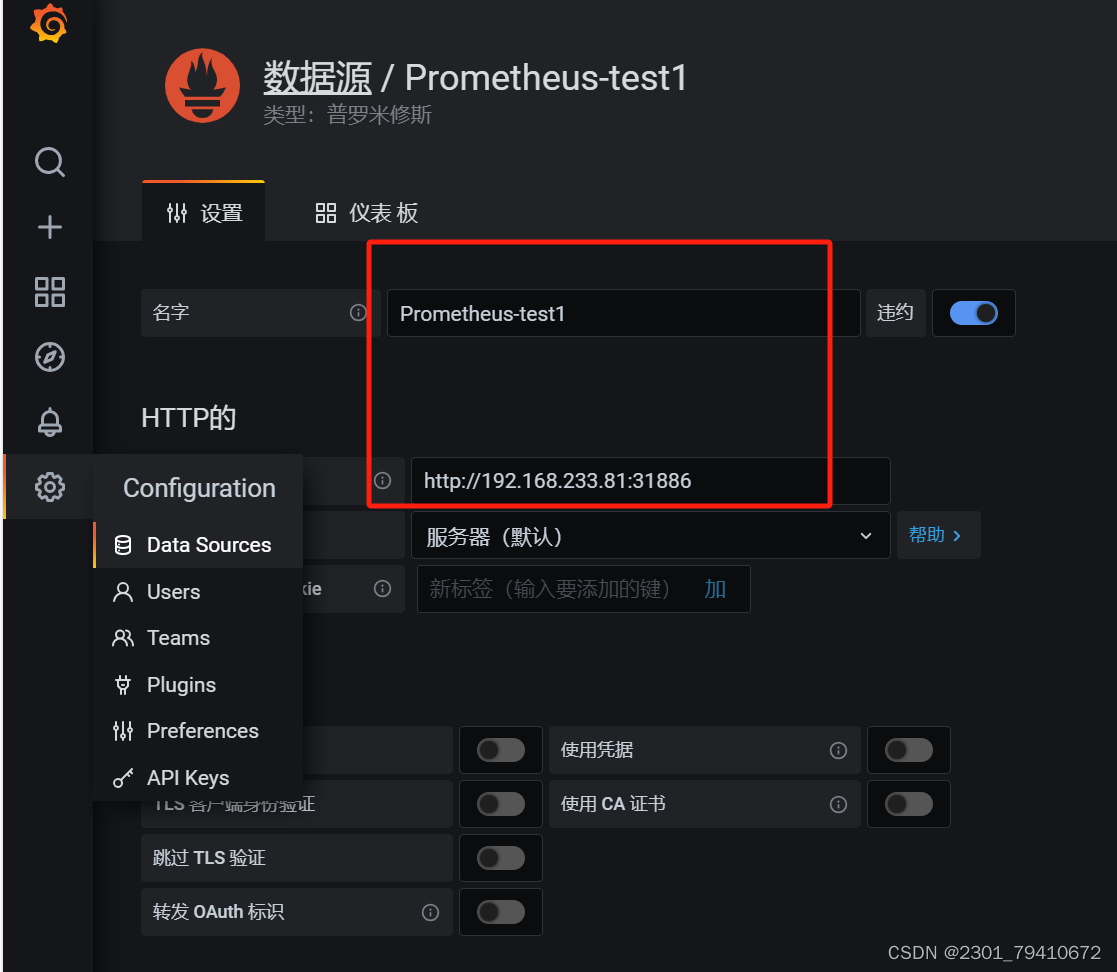

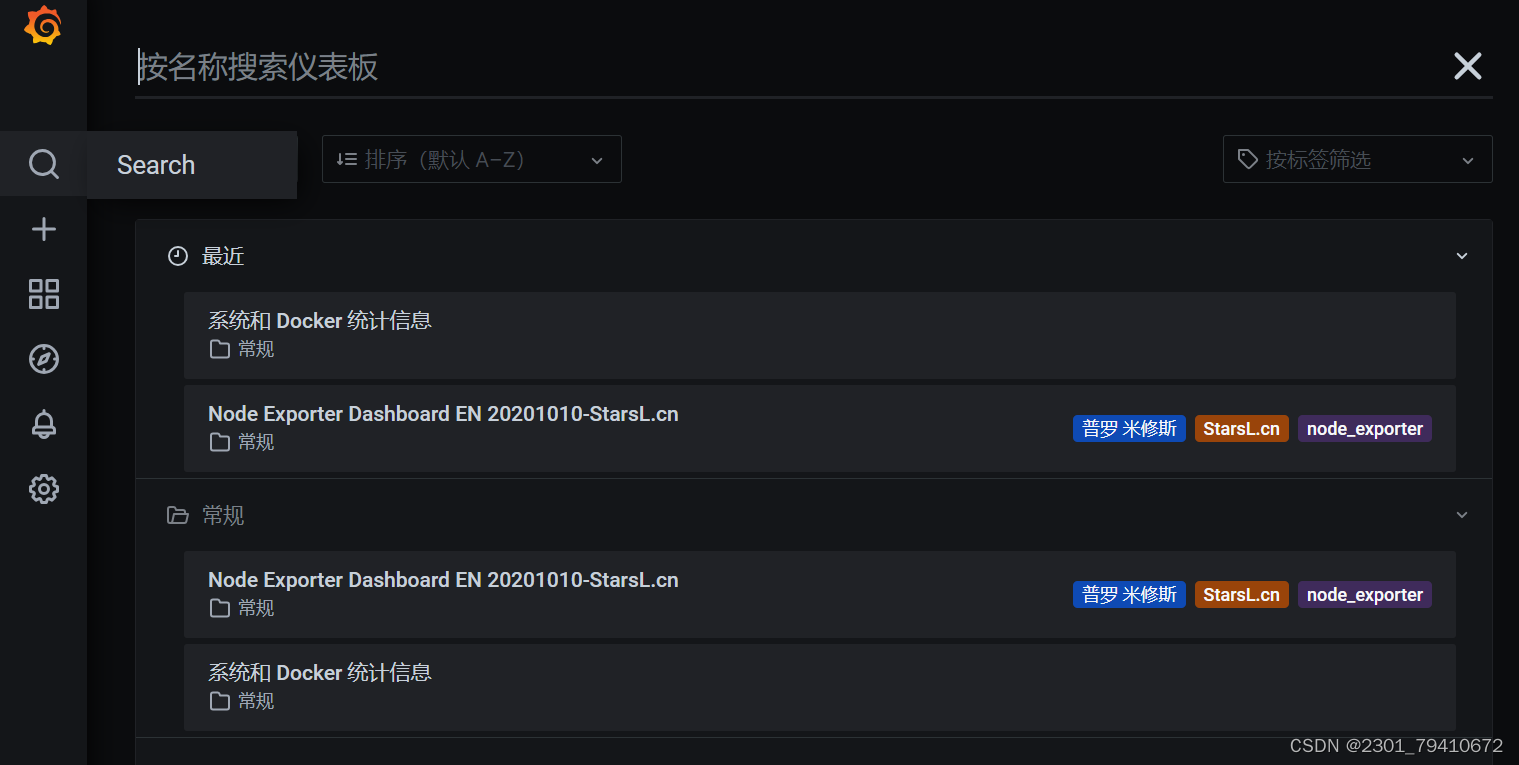

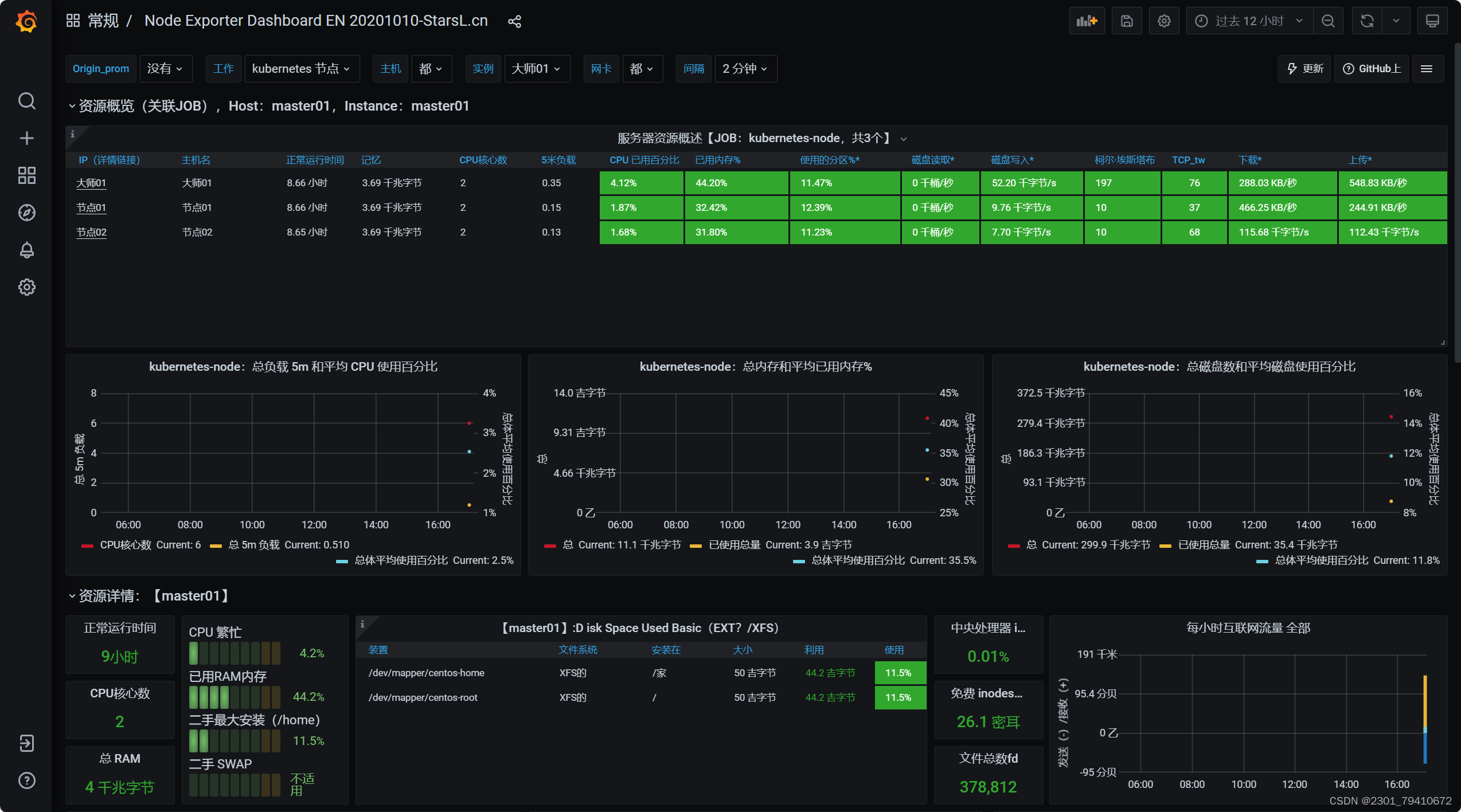

6. Install the Grafana visualization tool

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: grafana
      namespace: kube-system
    spec:
      accessModes:
      - ReadWriteMany
      storageClassName: nfs-client-storageclass
      resources:
        requests:
          storage: 2Gi
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: monitoring-grafana
      namespace: kube-system
    spec:
      replicas: 1
      selector:
        matchLabels:
          task: monitoring
          k8s-app: grafana
      template:
        metadata:
          labels:
            task: monitoring
            k8s-app: grafana
        spec:
          containers:
          - name: grafana
            image: grafana/grafana:7.5.11
            securityContext:
              runAsUser: 104
              runAsGroup: 107
            ports:
            - containerPort: 3000
              protocol: TCP
            volumeMounts:
            - mountPath: /etc/ssl/certs
              name: ca-certificates
              readOnly: false
            - mountPath: /var
              name: grafana-storage
            - mountPath: /var/lib/grafana
              name: graf-test
            env:
            - name: INFLUXDB_HOST
              value: monitoring-influxdb
            - name: GF_SERVER_HTTP_PORT
              value: "3000"
            - name: GF_AUTH_BASIC_ENABLED
              value: "false"
            - name: GF_AUTH_ANONYMOUS_ENABLED
              value: "true"
            - name: GF_AUTH_ANONYMOUS_ORG_ROLE
              value: Admin
            - name: GF_SERVER_ROOT_URL
              value: /
          volumes:
          - name: ca-certificates
            hostPath:
              path: /etc/ssl/certs
          - name: grafana-storage
            emptyDir: {}
          - name: graf-test
            persistentVolumeClaim:
              claimName: grafana
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: monitoring-grafana
      namespace: kube-system
    spec:
      ports:
      - port: 80
        targetPort: 3000
      selector:
        k8s-app: grafana
      type: NodePort

Create the /data directory on the node; the Prometheus hostPath volume (type: Directory) requires it to exist:

    [root@node01 ~]# mkdir -p /data

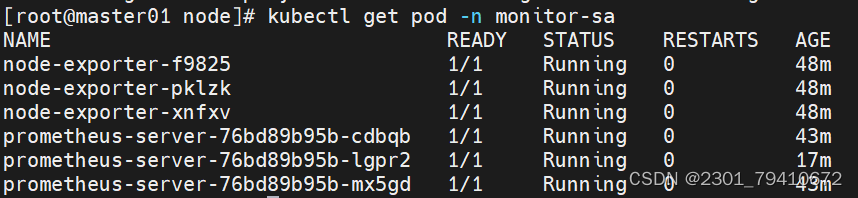

    [root@master01 node]# kubectl get pod -n monitor-sa -o wide
    NAME                                 READY   STATUS    RESTARTS   AGE     IP               NODE       NOMINATED NODE   READINESS GATES
    node-exporter-f9825                  1/1     Running   0          34m     192.168.233.83   node02     <none>           <none>
    node-exporter-pklzk                  1/1     Running   0          34m     192.168.233.81   master01   <none>           <none>
    node-exporter-xnfxv                  1/1     Running   0          34m     192.168.233.82   node01     <none>           <none>
    prometheus-server-76bd89b95b-cdbqb   1/1     Running   0          29m     10.244.2.11      node02     <none>           <none>
    prometheus-server-76bd89b95b-lgpr2   1/1     Running   0          3m22s   10.244.0.3       master01   <none>           <none>
    prometheus-server-76bd89b95b-mx5gd   1/1     Running   0          29m     10.244.1.12      node01     <none>           <none>

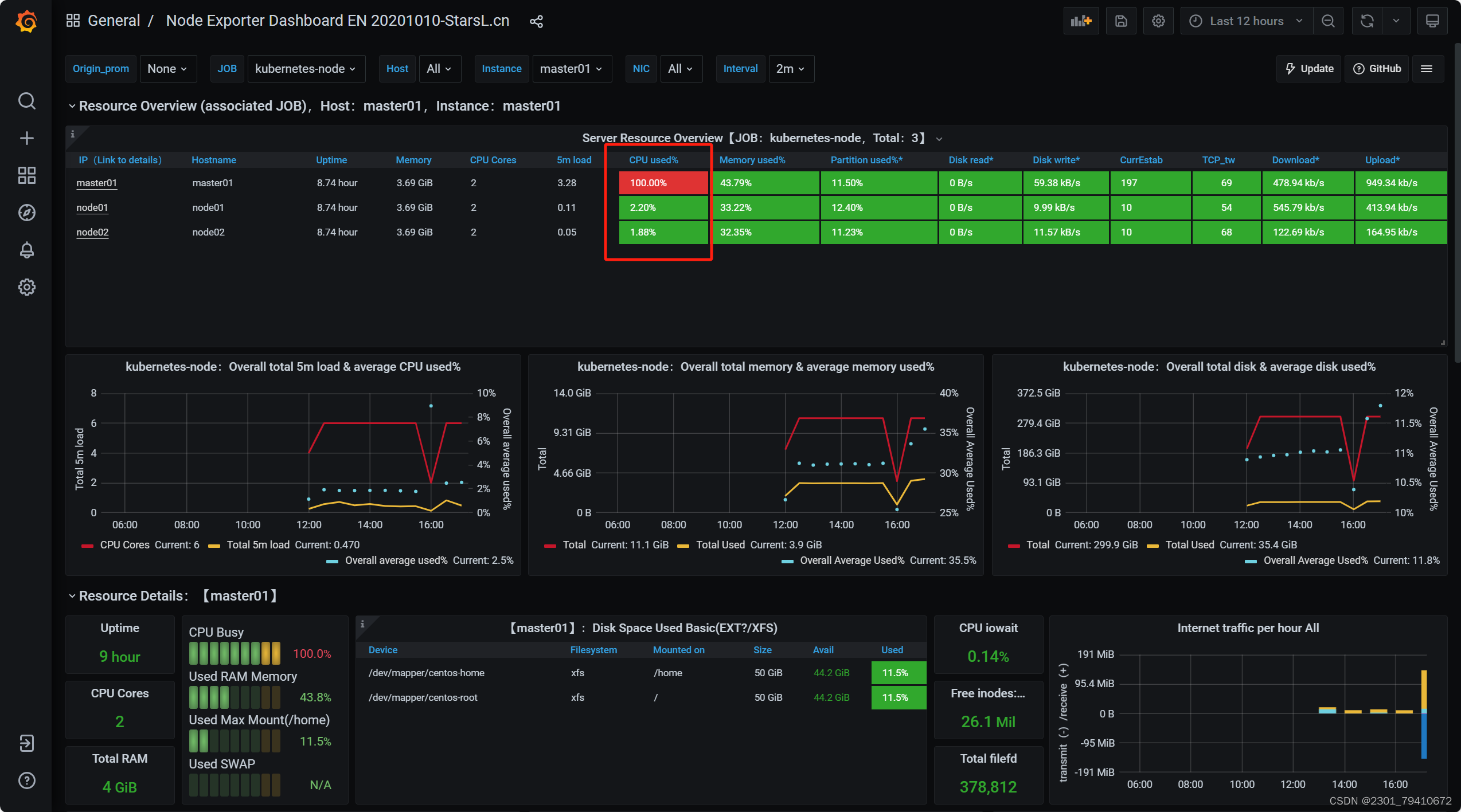

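Stress test: install stress and load two CPU cores so that the rise in CPU usage shows up in the monitoring.

    [root@master01 node]# yum -y install stress
    [root@master01 node]# stress --cpu 2
    stress: info: [72419] dispatching hogs: 2 cpu, 0 io, 0 vm, 0 hdd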
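
One way to confirm that the stress test is visible in Prometheus is to query per-node CPU usage through the HTTP API. The sketch below only builds the query URL. It makes several assumptions: the PromQL expression is a common way to derive usage from node-exporter's `node_cpu_seconds_total` counter and is not taken from this post, the function name is hypothetical, and the port 30090 is a placeholder for whatever NodePort the cluster assigned to the prometheus Service.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# CPU usage in percent per node: 100 minus the average idle share over one minute.
CPU_USAGE_QUERY = (
    "100 - avg by (instance) "
    '(rate(node_cpu_seconds_total{mode="idle"}[1m])) * 100'
)


def build_query_url(base_url: str, promql: str) -> str:
    """Return the instant-query URL of the Prometheus HTTP API for `promql`."""
    return f"{base_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"


# Placeholder address: a node IP from this post plus an assumed NodePort.
url = build_query_url("http://192.168.233.81:30090", CPU_USAGE_QUERY)
print(url)

# Round-trip check: the encoded URL decodes back to the original expression.
decoded = parse_qs(urlsplit(url).query)["query"][0]
print(decoded == CPU_USAGE_QUERY)  # True
```

Opening the printed URL, or pasting the expression into the Prometheus web UI, should show the value for master01 climbing while `stress --cpu 2` is running.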
