文末有福利领取哦~

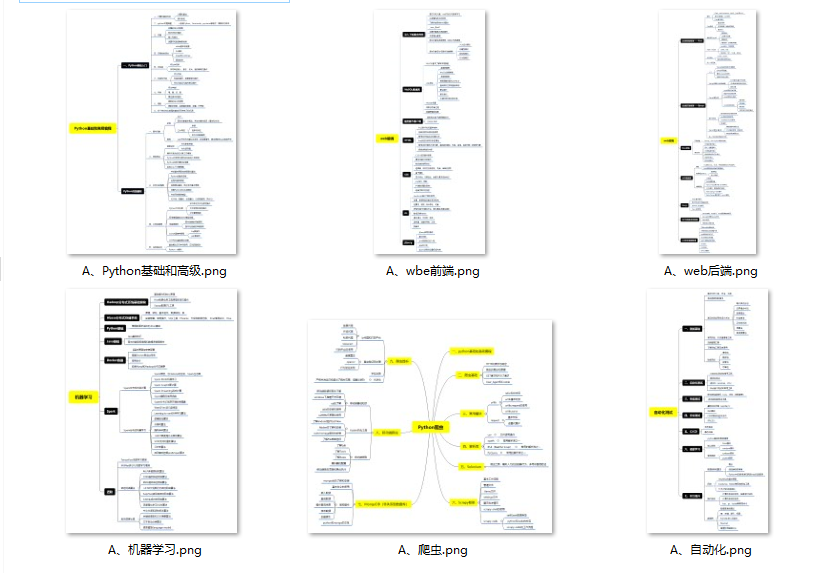

👉一、Python所有方向的学习路线

Python所有方向的技术点做的整理,形成各个领域的知识点汇总,它的用处就在于,你可以按照上面的知识点去找对应的学习资源,保证自己学得较为全面。

👉二、Python必备开发工具

👉三、Python视频合集

观看零基础学习视频,看视频学习是最快捷也是最有效果的方式,跟着视频中老师的思路,从基础到深入,还是很容易入门的。

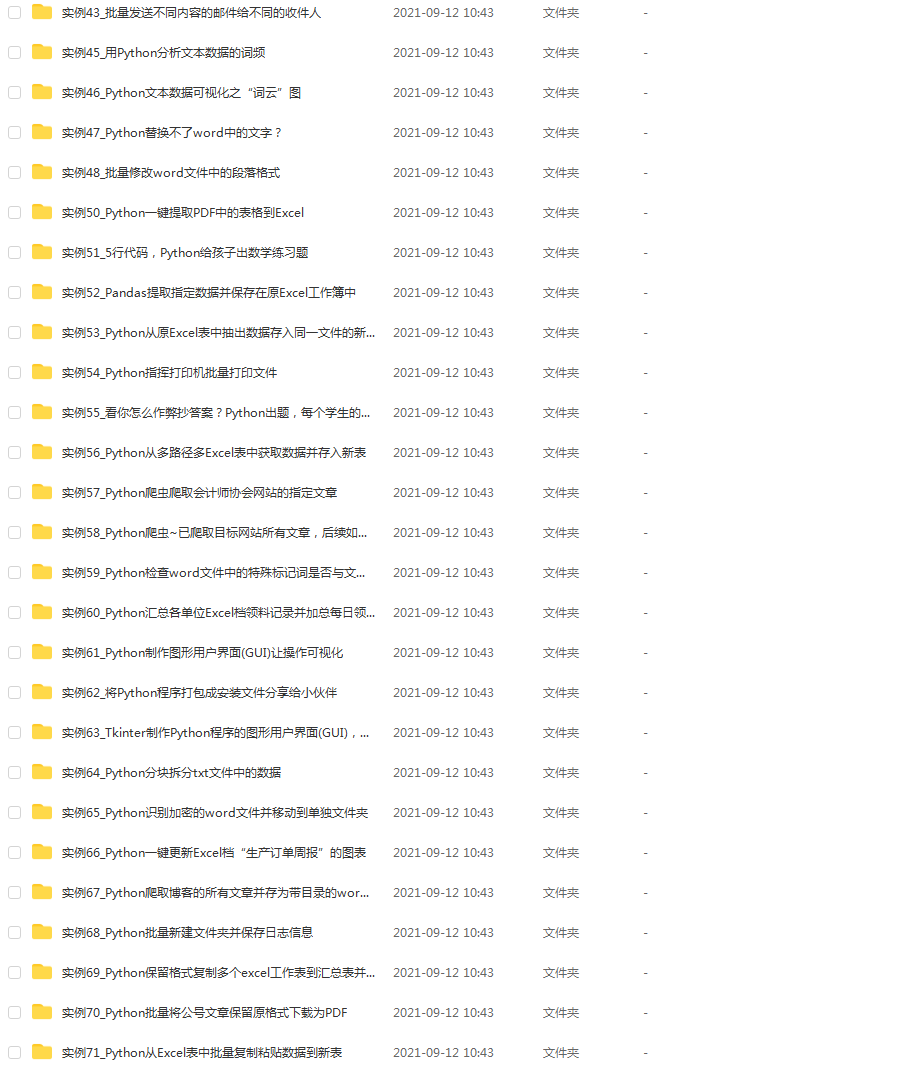

👉 四、实战案例

光学理论是没用的,要学会跟着一起敲,要动手实操,才能将自己的所学运用到实际当中去,这时候可以搞点实战案例来学习。(文末领读者福利)

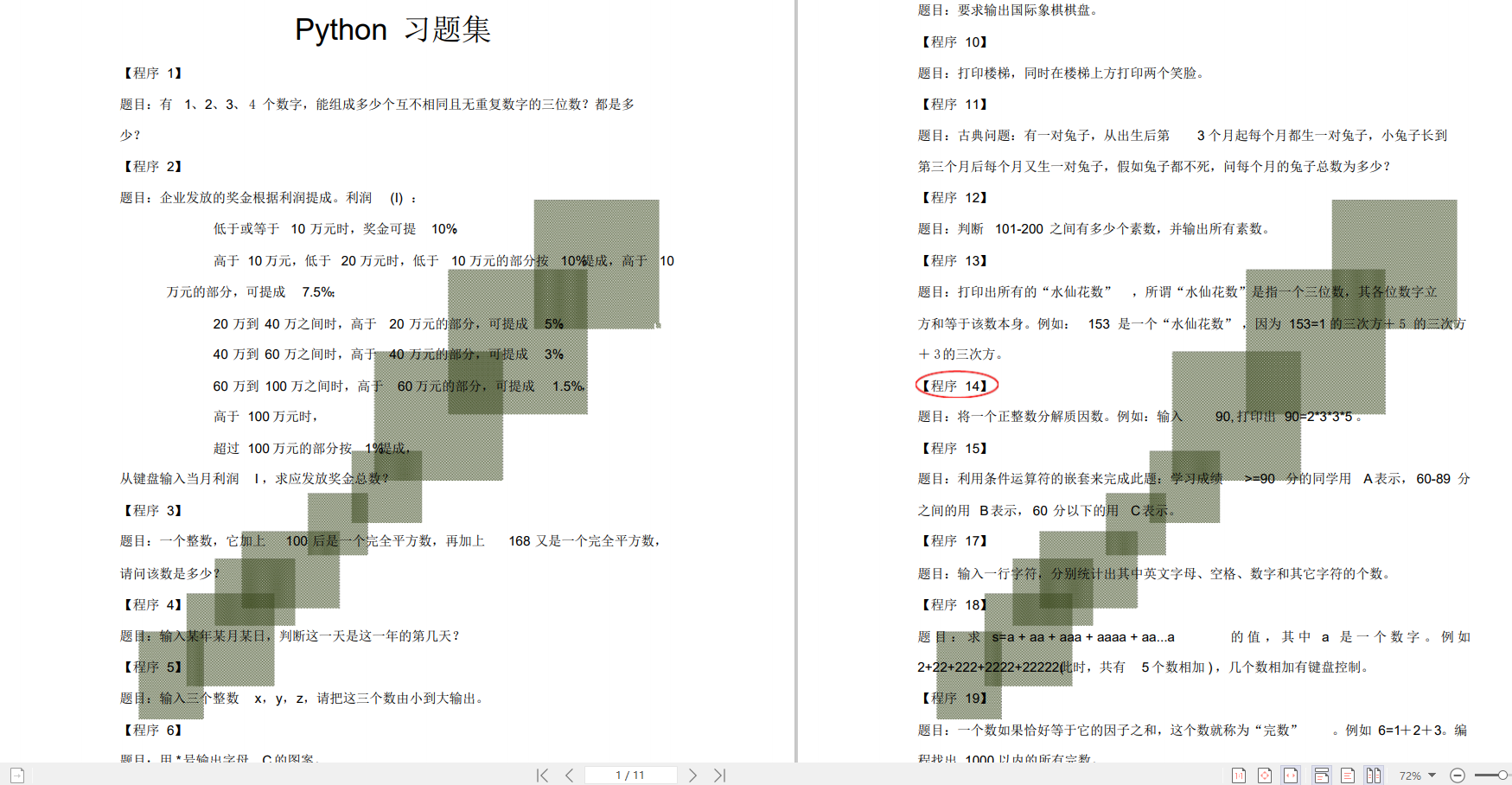

👉五、Python练习题

检查学习结果。

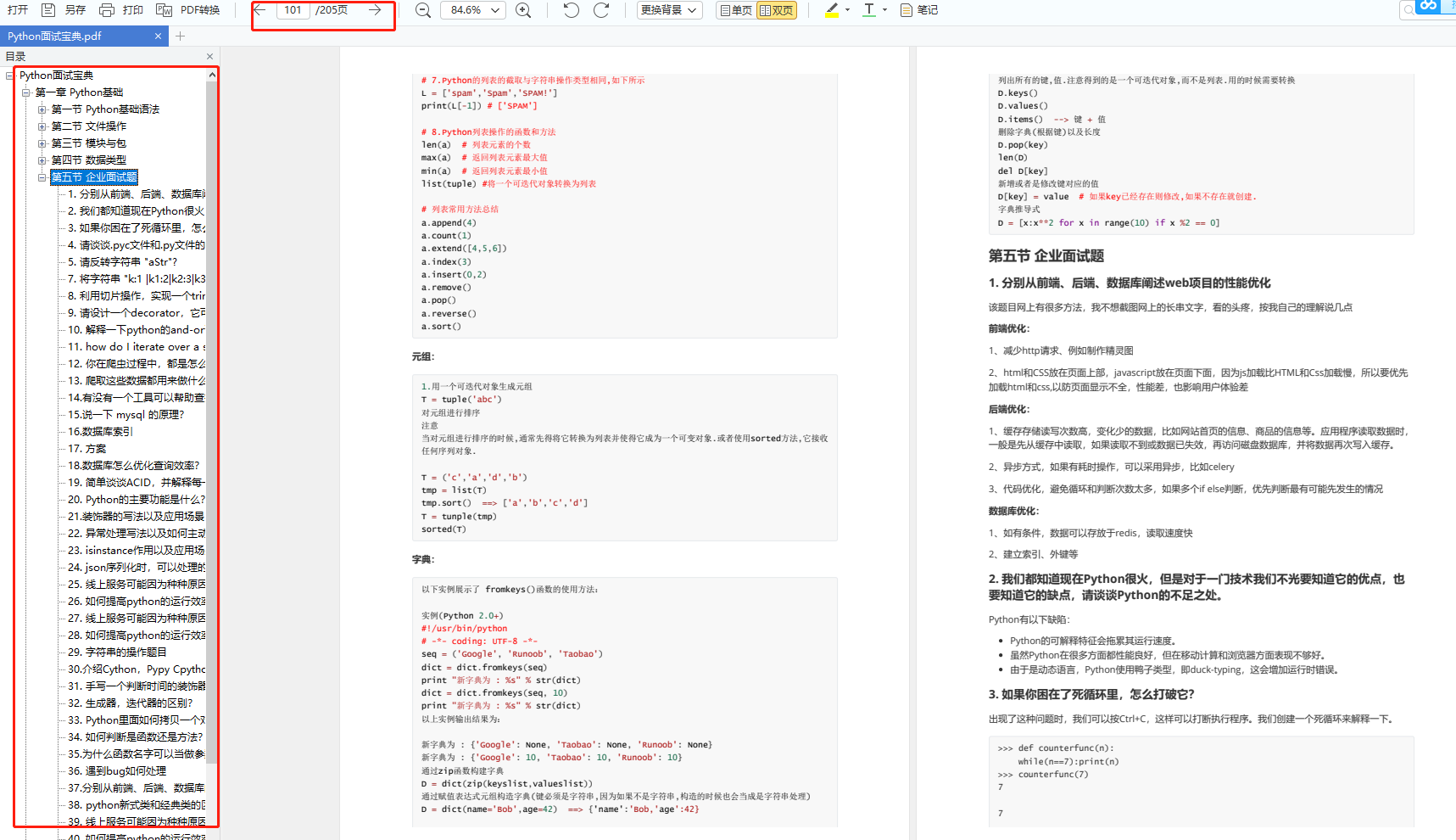

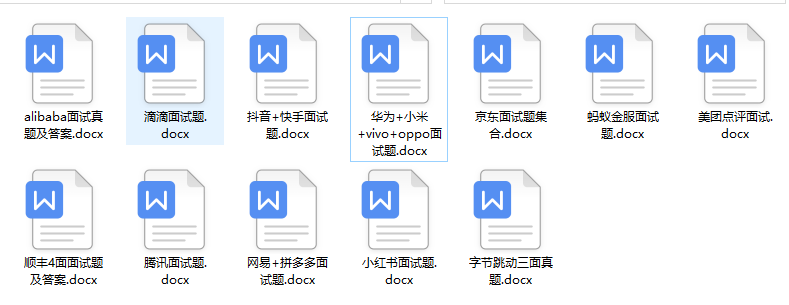

👉六、面试资料

我们学习Python必然是为了找到高薪的工作,下面这些面试题是来自阿里、腾讯、字节等一线互联网大厂最新的面试资料,并且有阿里大佬给出了权威的解答,刷完这一套面试资料相信大家都能找到满意的工作。

👉因篇幅有限,仅展示部分资料,这份完整版的Python全套学习资料已经上传

网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。

一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

```python
# Article data: tail of wenzhang(). The listing picks up inside the
# arguments of the first_url format string.
        url.split('/')[-2], max_behot_time, get_as_cp()['as'], get_as_cp()['cp'],
        get_signature(url.split('/')[-2], max_behot_time))
    while n < max_qingqiu and not break_flag:
        try:
            print(url)
            r = requests.get(first_url, headers=headers_a, cookies=cookies)
            data = json.loads(r.text)
            print(data)
            max_behot_time = data['next']['max_behot_time']
            if max_behot_time:
                article_list = data['data']
                for i in article_list:
                    article_url = 'https://www.toutiao.com/i' + str(i.get('group_id', ''))
                    try:
                        if i['article_genre'] == 'article':
                            res = requests.get(article_url, headers=headers(), cookies=cookies)
                            time.sleep(1)
                            article_title = re.findall("title: '(.*?)'", res.text)
                            article_content = re.findall("content: '(.*?)'", res.text, re.S)[0]
                            # Stripping ASCII letters, digits and punctuation (as in
                            # get_wenzhang_detail) is skipped here: it would also
                            # remove the <img> tags that the XPath query needs.
                            # Undo the JavaScript/HTML escaping so lxml can parse the markup.
                            article_content = (article_content
                                               .replace('&quot;', '')
                                               .replace('u003C', '<')
                                               .replace('u003E', '>')
                                               .replace('&#x3D;', '=')
                                               .replace('u002F', '/')
                                               .replace('\\', ''))
                            article_images = etree.HTML(article_content)
                            article_image = article_images.xpath('//img/@src')
                            article_time = re.findall("time: '(.*?)'", res.text)
                            article_source = re.findall("source: '(.*?)'", res.text, re.S)
                            # 'YYYY-MM-DD HH:MM:SS' -> ['YYYY', 'MM', 'DD']
                            result_time = str(article_time[0]).split(' ')[0].split('-')
                            print(result_time)
                            # Age of the article in whole days
                            cha = (datetime.now() - datetime(int(result_time[0]), int(result_time[1]),
                                                             int(result_time[2]))).days
                            print(cha)
                            if 30 < cha <= 32:
                                # Just past the 30-day window: flag completion, skip this item
                                print('Done')
                                break_flag.append(1)
                                continue
                            if cha > 32:
                                # Clearly past the window: stop processing this page
                                print('Done')
                                break_flag.append(1)
                                break
                            # Keys are the CSV columns: publish time, title, source,
                            # all images, article content
                            row = {'发表时间': article_time[0], '标题': article_title[0].strip('"'),
                                   '来源': article_source[0], '所有图片': article_image,
                                   '文章内容': article_content.strip()}
                            # main() has already written the header row, so only append data
                            with open('/toutiao/' + str(csv_name) + '文章.csv', 'a', newline='',
                                      encoding='gb18030') as f:
                                f_csv = csv.DictWriter(f, headers1)
                                f_csv.writerow(row)
                            print('Crawling article:', article_title[0].strip('"'), article_time[0],
                                  article_url)
                            time.sleep(1)
                    except Exception as e:
                        print(e, article_url)
                # Fetch the next page, using the cursor returned by this one
                wenzhang(url=url, max_behot_time=max_behot_time, csv_name=csv_name, n=n)
            else:
                # Empty cursor: count it as a failed attempt so the loop stays bounded
                n += 1
        except KeyError:
            n += 1
            print('Request attempt %d' % n, first_url)
            time.sleep(1)
            if n == max_qingqiu:
                print('Maximum number of requests exceeded')
                break_flag.append(1)
        except Exception as e:
            print(e)
    print(max_behot_time)
```
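The age check in the article crawler splits the timestamp string by hand. A minimal sketch of the same check with `datetime.strptime` follows. It assumes the page's time string looks like `2019-05-01 12:30:00`, which is inferred from the splitting logic rather than confirmed. `days_since` and `classify_age` are hypothetical helper names, not part of the original script.

```python
from datetime import datetime


def days_since(timestamp, now=None):
    """Whole days between the date part of 'YYYY-MM-DD HH:MM:SS' and `now`."""
    now = now or datetime.now()
    published = datetime.strptime(timestamp.split(' ')[0], '%Y-%m-%d')
    return (now - published).days


def classify_age(days, soft_limit=30, hard_limit=32):
    """Mirror the crawler's three outcomes: keep the item, skip it, or stop."""
    if days > hard_limit:
        return 'stop'
    if days > soft_limit:
        return 'skip'
    return 'keep'
```

Passing `now` explicitly makes the cutoff easy to test without depending on the current date.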

```python
# Article detail page data (already merged into the article crawler)
def get_wenzhang_detail(url, csv_name=0):
    # CSV columns: publish time, title, source, article content
    headers1 = ['发表时间', '标题', '来源', '文章内容']
    res = requests.get(url, headers=headers_a, cookies=cookies)
    time.sleep(1)
    article_title = re.findall("title: '(.*?)'", res.text)
    article_content = re.findall("content: '(.*?)'", res.text, re.S)
    # Strip ASCII letters, digits and punctuation, leaving the Chinese text.
    # The hyphen is escaped so that "~-_" is not read as an (invalid) range.
    pattern = re.compile(r"[(a-zA-Z~\-_!@#$%^+*&\/?|:.<>{}()';=)*|\d]")
    article_content = re.sub(pattern, '', article_content[0])
    article_time = re.findall("time: '(.*?)'", res.text)
    article_source = re.findall("source: '(.*?)'", res.text, re.S)
    # 'YYYY-MM-DD HH:MM:SS' -> ['YYYY', 'MM', 'DD']
    result_time = str(article_time[0]).split(' ')[0].split('-')
    print(result_time)
    # Age of the article in whole days
    cha = (datetime.now() - datetime(int(result_time[0]), int(result_time[1]), int(result_time[2]))).days
    print(cha)
    if cha > 8:
        return None
    row = {'发表时间': article_time[0], '标题': article_title[0].strip('"'), '来源': article_source[0],
           '文章内容': article_content.strip()}
    with open('/toutiao/' + str(csv_name) + '文章.csv', 'a', newline='', encoding='gb18030') as f:
        f_csv = csv.DictWriter(f, headers1)
        if f.tell() == 0:  # write the header only while the file is still empty
            f_csv.writeheader()
        f_csv.writerow(row)
    print('Crawling article:', article_title[0].strip('"'), article_time[0], url)
    time.sleep(0.5)
    return 'ok'
```
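To see what the character class in `get_wenzhang_detail` actually removes, here is a small self-contained sketch. The pattern below is a tidied equivalent written for illustration (duplicates dropped, hyphen escaped), and `strip_ascii_noise` is a hypothetical name. Note that an unescaped `~-_` inside a character class is rejected by Python's `re` module as a bad range, because `~` sorts after `_`.

```python
import re

# ASCII letters, digits and common punctuation; the hyphen is escaped so it
# is treated as a literal character rather than as a range operator.
ASCII_NOISE = re.compile(r"[a-zA-Z\d~\-_!@#$%^+*&/?|:.<>{}()';=]")


def strip_ascii_noise(text):
    """Remove markup-like ASCII characters, keeping CJK text and whitespace."""
    return ASCII_NOISE.sub('', text)


if __name__ == '__main__':
    print(strip_ascii_noise("<p>今日头条 2019 news!</p>").strip())
```

Because the class also removes `<`, `>` and `/`, it should only be applied after any HTML parsing is finished.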

```python
# Video data
break_flag_video = []


def shipin(url, max_behot_time=0, csv_name=0, n=0):
    max_qingqiu = 20
    # CSV columns: video publish time, title, source, video URL
    headers2 = ['视频发表时间', '标题', '来源', '视频链接']
    first_url = 'https://www.toutiao.com/c/user/article/?page_type=0&user_id=%s&max_behot_time=%s&count=20&as=%s&cp=%s&_signature=%s' % (
        url.split('/')[-2], max_behot_time, get_as_cp()['as'], get_as_cp()['cp'],
        get_signature(url.split('/')[-2], max_behot_time))
    while n < max_qingqiu and not break_flag_video:
        try:
            res = requests.get(first_url, headers=headers_a, cookies=cookies)
            data = json.loads(res.text)
            print(data)
            max_behot_time = data['next']['max_behot_time']
            if max_behot_time:
                video_list = data['data']
                for i in video_list:
                    detail_url = 'https://www.ixigua.com/i' + str(i.get('item_id', ''))
                    try:
                        start_time = i['behot_time']
                        video_title = i['title']
                        video_source = i['source']
                        resp = requests.get(detail_url, headers=headers())
                        # Build the video API path; its CRC32 is sent as the `s` parameter
                        r = str(random.random())[2:]
                        url_part = "/video/urls/v/1/toutiao/mp4/{}?r={}".format(
                            re.findall('"video_id":"(.*?)"', resp.text)[0], r)
                        s = crc32(url_part.encode())
                        api_url = "https://ib.365yg.com{}&s={}".format(url_part, s)
                        resp = requests.get(api_url, headers=headers())
                        j_resp = resp.json()
                        # main_url is Base64-encoded
                        video_url = j_resp['data']['video_list']['video_1']['main_url']
                        video_url = b64decode(video_url.encode()).decode()
                        # Age of the video in days
                        age_days = (int(time.time()) - start_time) / 86400
                        print(age_days)
                        if 30 < age_days <= 32:
                            print('Done')
                            break_flag_video.append(1)
                            continue
                        if age_days > 32:
                            print('Done')
                            break_flag_video.append(1)
                            break
                        row = {'视频发表时间': time.strftime('%Y-%m-%d %H:%M:%S', time.localtime(start_time)),
                               '标题': video_title, '来源': video_source,
                               '视频链接': video_url}
                        # main() has already written the header row, so only append data
                        with open('/toutiao/' + str(csv_name) + '视频.csv', 'a', newline='',
                                  encoding='gb18030') as f:
                            f_csv = csv.DictWriter(f, headers2)
                            f_csv.writerow(row)
                        print('Crawling video:', video_title, detail_url, video_url)
                        time.sleep(3)
                    except Exception as e:
                        print(e, detail_url)
                # Fetch the next page, using the cursor returned by this one
                shipin(url=url, max_behot_time=max_behot_time, csv_name=csv_name, n=n)
            else:
                # Empty cursor: count it as a failed attempt so the loop stays bounded
                n += 1
        except KeyError:
            n += 1
            print('Request attempt %d' % n, first_url)
            time.sleep(3)
            if n == max_qingqiu:
                print('Maximum number of requests exceeded')
                break_flag_video.append(1)
        except Exception as e:
            print(e)
```
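The video branch does two small transformations that are easy to isolate: it appends the CRC32 of the request path as the `s` parameter, and it Base64-decodes the returned `main_url`. The sketch below pulls both into pure functions so they can be checked offline. The function names are hypothetical, and whether the `ib.365yg.com` endpoint still accepts such requests is not verified here.

```python
import random
from base64 import b64decode
from zlib import crc32


def build_video_api_url(video_id, host='https://ib.365yg.com'):
    """Return the API URL with a random `r` and the CRC32 of the path as `s`."""
    r = str(random.random())[2:]
    url_part = '/video/urls/v/1/toutiao/mp4/{}?r={}'.format(video_id, r)
    checksum = crc32(url_part.encode())
    return '{}{}&s={}'.format(host, url_part, checksum)


def decode_main_url(main_url):
    """Decode the Base64-encoded `main_url` field into a plain URL string."""
    return b64decode(main_url.encode()).decode()
```

Keeping these steps free of network calls means a change in the signing rule shows up as a failing unit check rather than as an opaque HTTP error.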

```python
# Weitoutiao (micro-headline posts)
break_flag_weitoutiao = []


def weitoutiao(url, max_behot_time=0, n=0, csv_name=0):
    max_qingqiu = 20
    # CSV columns: publish time, source, title, image in post, post content
    headers3 = ['微头条发表时间', '来源', '标题', '文章内图片', '微头条内容']
    while n < max_qingqiu and not break_flag_weitoutiao:
        try:
            first_url = 'https://www.toutiao.com/api/pc/feed/?category=pc_profile_ugc&utm_source=toutiao&visit_user_id=%s&max_behot_time=%s' % (
                url.split('/')[-2], max_behot_time)
            print(first_url)
            res = requests.get(first_url, headers=headers_a, cookies=cookies)
            data = json.loads(res.text)
            print(data)
            max_behot_time = data['next']['max_behot_time']
            weitoutiao_list = data['data']
            for i in weitoutiao_list:
                detail_url = None
                try:
                    detail_url = 'https://www.toutiao.com/a' + str(i['concern_talk_cell']['id'])
                    print(detail_url)
                    resp = requests.get(detail_url, headers=headers(), cookies=cookies)
                    start_time = re.findall("time: '(.*?)'", resp.text, re.S)
                    weitoutiao_name = re.findall("name: '(.*?)'", resp.text, re.S)
                    weitoutiao_title = re.findall("title: '(.*?)'", resp.text, re.S)
                    # The square brackets are literal, so they must be escaped
                    weitoutiao_images = re.findall(r'images: \["(.*?)"\]', resp.text, re.S)
                    print(weitoutiao_images)
                    if weitoutiao_images:
                        # Undo the "\u002F" escaping of "/" in the image URL
                        weitoutiao_image = 'http:' + weitoutiao_images[0].replace('u002F', '/').replace('\\', '')
                        print(weitoutiao_image)
                    else:
                        # Placeholder value: "no image attached to this post"
                        weitoutiao_image = '此头条内无附件图片'
                    weitoutiao_content = re.findall("content: '(.*?)'", resp.text, re.S)
                    # 'YYYY-MM-DD HH:MM:SS' -> ['YYYY', 'MM', 'DD']
                    result_time = str(start_time[0]).split(' ')[0].split('-')
                    print(result_time)
                    # Age of the post in whole days
                    cha = (datetime.now() - datetime(int(result_time[0]), int(result_time[1]),
                                                     int(result_time[2]))).days
                    print(cha)
                    if cha > 30:
                        break_flag_weitoutiao.append(1)
                        print('Done')
                        break
                    row = {'微头条发表时间': start_time[0], '来源': weitoutiao_name[0],
                           '标题': weitoutiao_title[0].strip('"'), '文章内图片': weitoutiao_image,
                           '微头条内容': weitoutiao_content[0].strip('"')}
                    # main() has already written the header row, so only append data
                    with open('/toutiao/' + str(csv_name) + '微头条.csv', 'a', newline='',
                              encoding='gb18030') as f:
                        f_csv = csv.DictWriter(f, headers3)
                        f_csv.writerow(row)
                    time.sleep(1)
                    print('Crawling weitoutiao post:', weitoutiao_name[0], start_time[0], detail_url)
                except Exception as e:
                    print(e, detail_url)
            # Fetch the next page, using the cursor returned by this one
            weitoutiao(url=url, max_behot_time=max_behot_time, csv_name=csv_name, n=n)
        except KeyError:
            n += 1
            print('Request attempt %d' % n)
            time.sleep(2)
            if n == max_qingqiu:
                print('Maximum number of requests exceeded')
                break_flag_weitoutiao.append(1)
        except Exception as e:
            print(e)
```
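All three crawlers page through the feed by calling themselves recursively from inside a `while` loop, with a module-level list acting as the stop flag. One possible alternative, sketched here under the assumption that every page has the `{'data': [...], 'next': {'max_behot_time': ...}}` shape seen in the code, is a single iterative loop with a bounded retry counter. `crawl_pages`, `fetch_page` and `handle_item` are hypothetical names, and this is an illustration rather than the original author's design.

```python
def crawl_pages(fetch_page, handle_item, max_retries=20, start_cursor=0):
    """Walk a cursor-paginated feed without recursion.

    fetch_page(cursor) returns the decoded JSON for one page.
    handle_item(item) returns False to stop the whole crawl.
    """
    cursor = start_cursor
    retries = 0
    while retries < max_retries:
        try:
            data = fetch_page(cursor)
            next_cursor = data['next']['max_behot_time']
            items = data['data']
        except KeyError:
            # Malformed or blocked response: retry the same cursor
            # (a real crawler would sleep here before retrying).
            retries += 1
            continue
        for item in items:
            if handle_item(item) is False:
                return 'stopped'
        if not next_cursor or next_cursor == cursor:
            return 'exhausted'
        cursor = next_cursor
    return 'gave up'
```

The return value replaces the shared `break_flag` lists, so the caller can tell whether the crawl hit the age cutoff, ran out of pages, or exhausted its retries.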

```python
# Load the list of accounts to crawl
def csv_read(path):
    with open(path, 'r', encoding='gb18030', newline='') as f:
        return list(csv.reader(f, dialect='excel'))
```

```python
# Entry point
def main():
    for j, i in enumerate(csv_read('toutiao-suoyou.csv')):
        # i[0]: output file prefix, i[2]: profile URL, i[3]: content types to crawl
        if '文章' in i[3]:  # articles
            # Start the article crawler
            print('Crawling articles, account', j, i[2])
            headers1 = ['发表时间', '标题', '来源', '所有图片', '文章内容']
            with open('/toutiao/' + i[0] + '文章.csv', 'a', newline='', encoding='gb18030') as f:
                f_csv = csv.DictWriter(f, headers1)
                f_csv.writeheader()
            break_flag.clear()
            wenzhang(url=i[2], csv_name=i[0])
        if '视频' in i[3]:  # videos
            # Start the video crawler
            print('Crawling videos, account', j, i[2])
            headers2 = ['视频发表时间', '标题', '来源', '视频链接']
            with open('/toutiao/' + i[0] + '视频.csv', 'a', newline='', encoding='gb18030') as f:
                f_csv = csv.DictWriter(f, headers2)
                f_csv.writeheader()
            break_flag_video.clear()
            shipin(url=i[2], csv_name=i[0])
        if '微头条' in i[3]:  # weitoutiao posts
            # Start the weitoutiao crawler
            headers3 = ['微头条发表时间', '来源', '标题', '文章内图片', '微头条内容']
            print('Crawling weitoutiao posts, account', j, i[2])
            with open('/toutiao/' + i[0] + '微头条.csv', 'a', newline='', encoding='gb18030') as f:
                f_csv = csv.DictWriter(f, headers3)
                f_csv.writeheader()
            break_flag_weitoutiao.clear()
            weitoutiao(url=i[2], csv_name=i[0])
```
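Because `main()` opens each CSV in append mode and writes the header unconditionally, running the script twice leaves a second header row in the middle of the file. A minimal sketch of one way around that, using a hypothetical `append_row` helper that checks whether the file is new or empty before writing the header:

```python
import csv
import os


def append_row(path, fieldnames, row, encoding='gb18030'):
    """Append one row, writing the header only if the file is new or empty."""
    is_new = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, 'a', newline='', encoding=encoding) as f:
        writer = csv.DictWriter(f, fieldnames)
        if is_new:
            writer.writeheader()
        writer.writerow(row)
```

With a helper like this, the crawler functions would not need a separate header-writing step before they start.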

```python
# Worker for the multithreaded run
def get_all(urlQueue):
    while True:
        try:
            # Non-blocking read from the queue
            data_url = urlQueue.get_nowait()
        except Exception:  # queue.Empty once the queue is drained
            break
        print(data_url)
        # data_url[0]: output file prefix, data_url[2]: profile URL,
        # data_url[3]: content types to crawl
        if '文章' in data_url[3]:  # articles
            # Start the article crawler
            print('Crawling articles', data_url[2])
            headers1 = ['发表时间', '标题', '来源', '所有图片', '文章内容']
            with open('/toutiao/' + data_url[0] + '文章.csv', 'a', newline='', encoding='gb18030') as f:
                f_csv = csv.DictWriter(f, headers1)
                f_csv.writeheader()
            break_flag.clear()
            wenzhang(url=data_url[2], csv_name=data_url[0])
        if '视频' in data_url[3]:  # videos
            # Start the video crawler
            print('Crawling videos', data_url[2])
            headers2 = ['视频发表时间', '标题', '来源', '视频链接']
            with open('/toutiao/' + data_url[0] + '视频.csv', 'a', newline='', encoding='gb18030') as f:
                f_csv = csv.DictWriter(f, headers2)
                f_csv.writeheader()
            break_flag_video.clear()
            shipin(url=data_url[2], csv_name=data_url[0])
```
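The listing shows the worker `get_all(urlQueue)` but not how the queue is filled or how the threads are started. The following is a generic sketch of that pattern with the standard `queue` and `threading` modules. `run_workers` and `handle` are hypothetical names, and the thread count is an arbitrary choice.

```python
import queue
import threading


def run_workers(tasks, handle, thread_count=4):
    """Process every task with `handle`, using a fixed pool of threads."""
    task_queue = queue.Queue()
    for task in tasks:
        task_queue.put(task)

    def worker():
        while True:
            try:
                task = task_queue.get_nowait()  # non-blocking read
            except queue.Empty:
                return  # queue drained, let the thread finish
            handle(task)

    threads = [threading.Thread(target=worker) for _ in range(thread_count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
```

One caveat when applying this to the crawler: `break_flag` and `break_flag_video` are module-level lists shared by all threads, so one worker calling `clear()` can reset a stop flag that another worker has just set. Giving each task its own stop flag would avoid that.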
