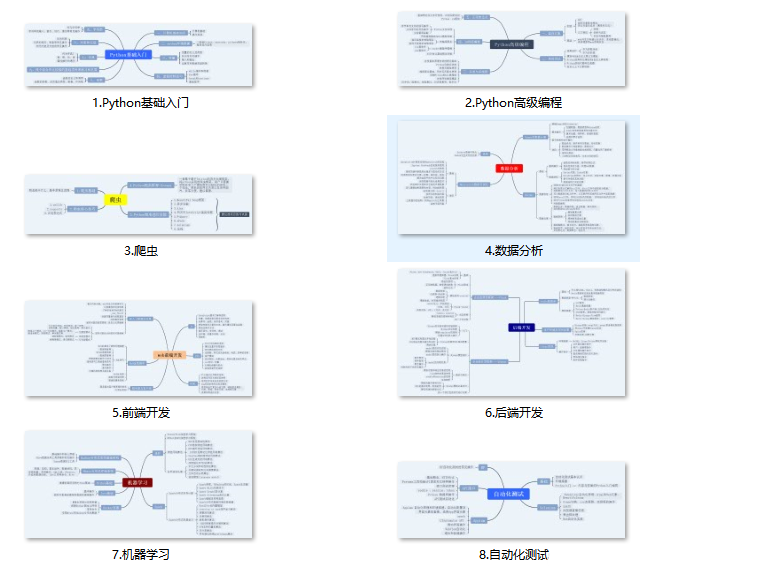

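I. Learning roadmap for every Python direction

The roadmap organizes the commonly used Python techniques into a summary of knowledge points for each field. Its value is that you can look up learning resources for each knowledge point in turn, so that what you learn is reasonably complete.

II. Learning software

A craftsman who wants to do good work must first sharpen his tools. The development software commonly used for learning Python is collected here, which saves a lot of time.

III. Introductory learning videos

When learning from videos, do not just watch and think without getting hands-on. A more effective approach is to apply what you have understood, and practice projects are a good fit for that.

There is a mass of learning material online, but if what you learn is not systematic, and you only scratch the surface of problems without digging deeper, real technical improvement is hard to achieve.

One person can go fast, but a group can go farther. Whether you are a veteran of the IT industry or a newcomer interested in it, you are welcome to join our community (technical exchange, learning resources, workplace venting, referrals to big companies, interview coaching) so that we can learn and grow together.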

6

7 Lacie

8 Lacie

9

and

10 Tillie

11 Tillie

12

and they lived at the bottom of a well.

3.7 Parent and ancestor nodes

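html = """
<p class="story">
Once upon a time there were three little sisters; and their names were
<a class="sister" href="http://example.com/elsie" id="link1"><span>Elsie</span></a>
<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>
and
<a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>
and they lived at the bottom of a well.
</p>
<p class="story">...</p>
"""
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# .parent is the element that directly contains the first <a> tag
print(soup.a.parent)

Output:

<p class="story">
Once upon a time there were three little sisters; and their names were
<a class="sister" href="http://example.com/elsie" id="link1"><span>Elsie</span></a>
<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>
and
<a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>
and they lived at the bottom of a well.
</p>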

html = """
<p class="story">
Once upon a time there were three little sisters; and their names were
<a class="sister" href="http://example.com/elsie" id="link1"><span>Elsie</span></a>
<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>
and
<a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>
and they lived at the bottom of a well.
</p>
<p class="story">...</p>
"""
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# .parents is a generator over every ancestor, from nearest to farthest
print(list(enumerate(soup.a.parents)))

Output (abridged): a list of four (index, node) pairs, each node printed with its full markup. Index 0 is the enclosing p element, index 1 is the body element, index 2 is the html element, and index 3 is the BeautifulSoup object itself, which prints the whole document.

3.8 Sibling nodes

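html = """
<p class="story">
Once upon a time there were three little sisters; and their names were
<a class="sister" href="http://example.com/elsie" id="link1"><span>Elsie</span></a>
<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>
and
<a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>
and they lived at the bottom of a well.
</p>
<p class="story">...</p>
"""
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# siblings include text nodes as well as tags
print(list(enumerate(soup.a.next_siblings)))
print(list(enumerate(soup.a.previous_siblings)))

Output:

[(0, '\n'), (1, <a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>), (2, '\nand\n'), (3, <a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>), (4, '\nand they lived at the bottom of a well.\n')]
[(0, '\nOnce upon a time there were three little sisters; and their names were\n')]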
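Because next_siblings yields whitespace text nodes alongside tags, a common follow-up is to keep only the tags. The following is a minimal sketch, not part of the original tutorial: the markup is a hypothetical sample, and it uses the built-in html.parser rather than lxml so that it runs without extra dependencies.

```python
from bs4 import BeautifulSoup
from bs4.element import Tag

# Hypothetical sample markup, shortened from the three-sisters example.
doc = '<p>intro <a id="link1">Elsie</a>\n<a id="link2">Lacie</a> and <a id="link3">Tillie</a></p>'
soup = BeautifulSoup(doc, 'html.parser')

# Keep only Tag objects and drop the NavigableString text nodes.
tag_siblings = [s for s in soup.a.next_siblings if isinstance(s, Tag)]
ids = [t['id'] for t in tag_siblings]
print(ids)  # ['link2', 'link3']
```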

4.1 find_all(name, attrs, recursive, text, **kwargs)

Searches the document by tag name, attributes, or text content.
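As a quick overview before the individual parameters, the sketch below exercises the main filters on one small document. The markup is a hypothetical sample and html.parser is assumed in place of lxml; limit and recursive are standard find_all parameters.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup, kept small for illustration.
doc = '<div><ul id="a"><li>Foo</li><li>Bar</li></ul><p>Foo</p></div>'
soup = BeautifulSoup(doc, 'html.parser')

by_name = soup.find_all('li')                # filter by tag name
by_attr = soup.find_all(attrs={'id': 'a'})   # filter by attribute value
first_only = soup.find_all('li', limit=1)    # stop after the first match
# recursive=False searches only the direct children of the node.
direct = soup.div.find_all('li', recursive=False)

print(len(by_name), len(by_attr), len(first_only), len(direct))  # 2 1 1 0
```

The last count is 0 because the li tags are grandchildren of the div, not direct children.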

4.1.1 name

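html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# find_all returns a list-like result of Tag objects
print(soup.find_all('ul'))
print(type(soup.find_all('ul')[0]))

Output:

[<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>, <ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>]
<class 'bs4.element.Tag'>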

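html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# each result is a Tag, so find_all can be called on it again
for ul in soup.find_all('ul'):
    print(ul.find_all('li'))

Output:

[<li class="element">Foo</li>, <li class="element">Bar</li>, <li class="element">Jay</li>]
[<li class="element">Foo</li>, <li class="element">Bar</li>]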

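4.1.2 attrs

html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1" name="elements">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# attrs takes a dict of attribute names and required values
print(soup.find_all(attrs={'id': 'list-1'}))
print(soup.find_all(attrs={'name': 'elements'}))

Output:

[<ul id="list-1" name="elements">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>]
[<ul id="list-1" name="elements">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>]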

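html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# common attributes can be passed as keyword arguments;
# class is a Python keyword, so the argument is spelled class_
print(soup.find_all(id='list-1'))
print(soup.find_all(class_='element'))

Output:

[<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>]
[<li class="element">Foo</li>, <li class="element">Bar</li>, <li class="element">Jay</li>, <li class="element">Foo</li>, <li class="element">Bar</li>]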

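4.1.3 text

html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# text matches the text nodes themselves, not the enclosing tags
print(soup.find_all(text='Foo'))

Output:

['Foo', 'Foo']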
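In Beautiful Soup 4.4.0 and later the same filter is also available under the name string, and it accepts a compiled regular expression as well as an exact value. The sketch below assumes that newer spelling, a hypothetical sample document, and html.parser in place of lxml.

```python
import re
from bs4 import BeautifulSoup

# Hypothetical sample markup.
doc = '<ul><li>Foo</li><li>Bar</li><li>Foobar</li></ul>'
soup = BeautifulSoup(doc, 'html.parser')

exact = soup.find_all(string='Foo')                 # exact match only
pattern = soup.find_all(string=re.compile('^Foo'))  # text starting with "Foo"
print(exact, pattern)  # ['Foo'] ['Foo', 'Foobar']
```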

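4.2 find(name, attrs, recursive, text, **kwargs)

find returns a single element (the first match, or None if nothing matches), while find_all returns all matching elements.

html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
print(soup.find('ul'))
print(type(soup.find('ul')))
# there is no <page> tag, so find returns None
print(soup.find('page'))

Output:

<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<class 'bs4.element.Tag'>
None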
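Since find returns None when nothing matches, chaining an attribute access directly onto it raises AttributeError. One way to guard against that is sketched below; first_text is a hypothetical helper written for this illustration, and the markup and the use of html.parser are assumptions.

```python
from bs4 import BeautifulSoup

soup = BeautifulSoup('<ul id="list-1"><li>Foo</li></ul>', 'html.parser')

def first_text(node, name):
    """Return the text of the first <name> tag under node, or None if absent."""
    tag = node.find(name)
    return tag.get_text() if tag is not None else None

found = first_text(soup, 'li')
missing = first_text(soup, 'page')
print(found, missing)  # Foo None
```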

4.3 find_parents() and find_parent()

find_parents() returns all ancestor nodes, and find_parent() returns the direct parent node.
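A minimal sketch of both calls, using a hypothetical document and html.parser. Passing a tag name narrows the search to ancestors with that name.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup.
doc = '<div id="outer"><ul id="list-1"><li>Foo</li></ul></div>'
soup = BeautifulSoup(doc, 'html.parser')
li = soup.find('li')

parent = li.find_parent()                        # direct parent: the <ul>
ancestors = [t.name for t in li.find_parents()]  # nearest ancestor first
outer = li.find_parent('div')                    # nearest ancestor named div
print(parent.name, ancestors, outer['id'])
```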

4.4 find_next_siblings() and find_next_sibling()

find_next_siblings() returns all following sibling nodes, and find_next_sibling() returns the first following sibling node.
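For illustration, the sketch below starts from the first li of a hypothetical list (parsed with html.parser). Passing 'li' as the name skips the whitespace text nodes between the tags.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup with newlines between the items.
doc = '<ul><li>Foo</li>\n<li>Bar</li>\n<li>Jay</li></ul>'
soup = BeautifulSoup(doc, 'html.parser')
first = soup.find('li')

following = [t.get_text() for t in first.find_next_siblings('li')]
nearest = first.find_next_sibling('li').get_text()
print(following, nearest)  # ['Bar', 'Jay'] Bar
```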

4.5 find_previous_siblings() and find_previous_sibling()

find_previous_siblings() returns all preceding sibling nodes, and find_previous_sibling() returns the first preceding sibling node.
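The mirror-image case can be sketched the same way, starting from the last li of a hypothetical list (html.parser assumed). Note that the results come back nearest first, that is, in reverse document order.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup.
doc = '<ul><li>Foo</li>\n<li>Bar</li>\n<li>Jay</li></ul>'
soup = BeautifulSoup(doc, 'html.parser')
last = soup.find_all('li')[-1]

preceding = [t.get_text() for t in last.find_previous_siblings('li')]
nearest = last.find_previous_sibling('li').get_text()
print(preceding, nearest)  # ['Bar', 'Foo'] Bar
```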

4.6 find_all_next() and find_next()

find_all_next() returns all matching nodes that come after the current node, and find_next() returns the first matching node after it.
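Unlike the sibling methods, these two walk everything that follows in document order, whatever its nesting level. The sketch below contrasts them with find_next_sibling on a hypothetical document parsed with html.parser.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup.
doc = '<div><h4>Hello</h4><ul><li>Foo</li><li>Bar</li></ul></div><p>End</p>'
soup = BeautifulSoup(doc, 'html.parser')
h4 = soup.find('h4')

later_items = [t.get_text() for t in h4.find_all_next('li')]
next_p = h4.find_next('p').get_text()   # found even though it is outside the div
sibling_li = h4.find_next_sibling('li')  # None: the li tags are not siblings of h4
print(later_items, next_p, sibling_li)  # ['Foo', 'Bar'] End None
```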

4.7 find_all_previous() and find_previous()

find_all_previous() returns all matching nodes that come before the current node, and find_previous() returns the first matching node before it.
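A short sketch of the backward search, again on a hypothetical document with html.parser. Results are ordered from the nearest match backwards.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup.
doc = '<div><h4>Hello</h4><ul><li>Foo</li><li>Bar</li></ul></div><p>End</p>'
soup = BeautifulSoup(doc, 'html.parser')
p = soup.find('p')

earlier_items = [t.get_text() for t in p.find_all_previous('li')]
heading = p.find_previous('h4').get_text()
print(earlier_items, heading)  # ['Bar', 'Foo'] Hello
```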

Pass a CSS selector directly to select() to select the matching elements.

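html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
print(soup.select('.panel .panel-heading'))  # class selectors
print(soup.select('ul li'))                  # tag selectors
print(soup.select('#list-2 .element'))       # id selector plus class selector
print(type(soup.select('ul')[0]))

Output:

[<div class="panel-heading">Hello</div>]
[<li class="element">Foo</li>, <li class="element">Bar</li>, <li class="element">Jay</li>, <li class="element">Foo</li>, <li class="element">Bar</li>]
[<li class="element">Foo</li>, <li class="element">Bar</li>]
<class 'bs4.element.Tag'>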

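html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
# select can be called again on each selected Tag
for ul in soup.select('ul'):
    print(ul.select('li'))

Output:

[<li class="element">Foo</li>, <li class="element">Bar</li>, <li class="element">Jay</li>]
[<li class="element">Foo</li>, <li class="element">Bar</li>]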
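When only the first match is needed, select_one() plays the role that find() plays for find_all(). The sketch below is an addition to the tutorial: it assumes a hypothetical document, html.parser, and a Beautiful Soup version recent enough to ship CSS selector support for the child combinator.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup.
doc = ('<ul id="list-1"><li class="element">Foo</li></ul>'
       '<ul id="list-2"><li class="element">Bar</li><li>Jay</li></ul>')
soup = BeautifulSoup(doc, 'html.parser')

first = soup.select_one('#list-2 .element').get_text()  # first match only
children = soup.select('ul > li')                       # direct li children of any ul
with_class = soup.select('li.element')                  # li tags carrying the class
missing = soup.select_one('#list-3')                    # None when nothing matches
print(first, len(children), len(with_class), missing)   # Bar 3 2 None
```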

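5.1 Getting attributes

html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
for ul in soup.select('ul'):
    print(ul['id'])        # index the tag directly
    print(ul.attrs['id'])  # or go through the attrs dict

Output:

list-1
list-1
list-2
list-2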
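Indexing with square brackets raises KeyError when the attribute is absent, so get() is a safer choice for optional attributes. The sketch below also shows that class is returned as a list, because it is a multi-valued attribute. The markup is a hypothetical sample and html.parser is assumed.

```python
from bs4 import BeautifulSoup

soup = BeautifulSoup('<ul class="list list-small" id="list-2"><li>Foo</li></ul>',
                     'html.parser')
ul = soup.ul

ul_id = ul['id']              # plain string
ul_classes = ul['class']      # multi-valued attribute: a list of class names
ul_name = ul.get('name')      # missing attribute: None instead of KeyError
print(ul_id, ul_classes, ul_name)  # list-2 ['list', 'list-small'] None
```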

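5.2 Getting text content

html = '''
<div class="panel">
<div class="panel-heading">Hello</div>
<ul id="list-1">
<li class="element">Foo</li>
<li class="element">Bar</li>
<li class="element">Jay</li>
</ul>
<ul id="list-2">
<li class="element">Foo</li>
<li class="element">Bar</li>
</ul>
</div>
'''
from bs4 import BeautifulSoup
soup = BeautifulSoup(html, 'lxml')
for li in soup.select('li'):
    print(li.get_text())

Output:

Foo
Bar
Jay
Foo
Bar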
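On a container tag, get_text() concatenates every nested text node, including the whitespace between tags. The separator and strip parameters help clean that up, as in this sketch on a hypothetical list (html.parser assumed).

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup with stray whitespace.
soup = BeautifulSoup('<ul>\n<li> Foo </li>\n<li>Bar</li>\n</ul>', 'html.parser')

raw = soup.ul.get_text()                               # whitespace preserved
joined = soup.ul.get_text(separator='|', strip=True)   # stripped pieces joined by |
pieces = list(soup.ul.stripped_strings)                # the same pieces as a list
print(repr(raw), joined, pieces)  # '\n Foo \nBar\n' Foo|Bar ['Foo', 'Bar']
```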

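(1) Learning roadmap for every Python direction (new edition)

I spent several days organizing the techniques for every Python direction into a summary of knowledge points for each field. Its value is that you can look up learning resources for each knowledge point in turn, so that what you learn is reasonably complete.

I recently updated these roadmaps, and the knowledge system is now more complete.

(2) Python learning videos

These cover Python basics, web scraping, data analysis, and web development, more than 100 videos in total. They are not exhaustive, but they are enough for getting started. After finishing them, you can follow the roadmap above to find further resources online and move on to more advanced topics.

(3) More than 100 practice projects

When learning from videos, do not just watch and think without getting hands-on. A more effective approach is to apply what you have understood, and practice projects are a good fit for that. There are many projects here and their quality is uneven, so pick the ones you can manage and practice with those.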
