
import org.springframework.boot.context.properties.ConfigurationProperties;
import org.springframework.stereotype.Component;

import javax.annotation.PostConstruct;
import java.util.Arrays;

import static com.google.common.base.Charsets.UTF_8;

/**
 * @author will (zq2599@gmail.com)
 * @version 1.0
 * @description: Wraps a SimpleBlockingStub instance, which is needed to issue gRPC requests
 * @date 2021/5/8 19:34
 */
@Component("stubWrapper")
@Data
@Slf4j
@ConfigurationProperties(prefix = "grpc")
public class StubWrapper {

    /**
     * A key in etcd whose value is the address of the gRPC server
     */
    private static final String GRPC_SERVER_INFO_KEY = "/grpc/local-server";

    /**
     * etcd endpoints, as written in the configuration file
     */
    private String etcdendpoints;

    private SimpleGrpc.SimpleBlockingStub simpleBlockingStub;

    /**
     * Looks up the gRPC server address in etcd
     * @return the address split on ":" (host first, then port), or null if the lookup fails
     */
    public String[] getGrpcServerInfo() {
        // Create the etcd KV client
        KV kvClient = Client.builder().endpoints(etcdendpoints.split(",")).build().getKVClient();

        GetResponse response = null;

        // Query etcd for the value of the key /grpc/local-server
        try {
            response = kvClient.get(ByteSequence.from(GRPC_SERVER_INFO_KEY, UTF_8)).get();
        } catch (Exception exception) {
            log.error("get grpc key from etcd error", exception);
        }

        if (null==response || response.getKvs().isEmpty()) {
            log.error("empty value of key [{}]", GRPC_SERVER_INFO_KEY);
            return null;
        }

        // Extract the value from the response
        String rawAddrInfo = response.getKvs().get(0).getValue().toString(UTF_8);

        // rawAddrInfo is a string such as "192.169.0.1:8080": an IP and a port separated by ":".
        // Split it on ":" and return the resulting array
        return null==rawAddrInfo ? null : rawAddrInfo.split(":");
    }

    /**
     * Runs every time the bean is registered.
     * Fetches the gRPC server address from etcd and uses it
     * to instantiate the SimpleBlockingStub member variable
     */
    @PostConstruct
    public void simpleBlockingStub() {
        // Fetch the address information from etcd
        String[] array = getGrpcServerInfo();

        log.info("create stub bean, array info from etcd {}", Arrays.toString(array));

        // The array must hold at least two elements: the IP address and the port
        if (null==array || array.length<2) {
            log.error("can not get valid grpc address from etcd");
            return;
        }

        // The first element is the IP address of the gRPC server
        String addr = array[0];
        // The second element is the port
        int port = Integer.parseInt(array[1]);

        // Create a channel from the address and port just obtained
        Channel channel = ManagedChannelBuilder
                .forAddress(addr, port)
                .usePlaintext()
                .build();

        // Create the stub from the channel
        simpleBlockingStub = SimpleGrpc.newBlockingStub(channel);
    }
}
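The `@PostConstruct` method above calls `Integer.parseInt(array[1])` directly, so a malformed value under `/grpc/local-server` (for example a non-numeric port) raises `NumberFormatException` and bean creation fails. Below is a minimal sketch of a more defensive parser that uses only the JDK. The class name `GrpcAddress` and its `parse` method are hypothetical and not part of the original project; the 1-65535 port range check is likewise an assumption about what counts as valid.

```java
import java.util.Optional;

/** Hypothetical value type for a parsed "host:port" string; not part of the original project. */
final class GrpcAddress {
    final String host;
    final int port;

    private GrpcAddress(String host, int port) {
        this.host = host;
        this.port = port;
    }

    /**
     * Parses "host:port". Returns Optional.empty() instead of throwing when the
     * value is null, has no ":" separator, has an empty host or port, or has a
     * port that is not an integer in the range 1-65535.
     */
    static Optional<GrpcAddress> parse(String raw) {
        if (raw == null) {
            return Optional.empty();
        }
        String trimmed = raw.trim();
        int idx = trimmed.lastIndexOf(':');
        // idx <= 0 covers "no separator" and "empty host"; the second test covers "empty port"
        if (idx <= 0 || idx == trimmed.length() - 1) {
            return Optional.empty();
        }
        String host = trimmed.substring(0, idx);
        try {
            int port = Integer.parseInt(trimmed.substring(idx + 1));
            if (port < 1 || port > 65535) {
                return Optional.empty();
            }
            return Optional.of(new GrpcAddress(host, port));
        } catch (NumberFormatException e) {
            return Optional.empty();
        }
    }
}
```

With a helper like this, `simpleBlockingStub()` could log an error and return when `parse` yields an empty `Optional`, just as it already does when the key is missing.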

- GrpcClientService is a service class that wraps StubWrapper:

package com.bolingcavalry.dynamicrpcaddr;

import com.bolingcavalry.grpctutorials.lib.HelloReply;
import com.bolingcavalry.grpctutorials.lib.HelloRequest;
import com.bolingcavalry.grpctutorials.lib.SimpleGrpc;
import io.grpc.StatusRuntimeException;
import lombok.Setter;
import net.devh.boot.grpc.client.inject.GrpcClient;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.stereotype.Service;

@Service
public class GrpcClientService {

    @Autowired(required = false)
    @Setter
    private StubWrapper stubWrapper;

    public String sendMessage(final String name) {
        // The wrapper, or the stub inside it, may well be null
        // (the stub stays null when the etcd lookup in StubWrapper fails)
        if (null==stubWrapper || null==stubWrapper.getSimpleBlockingStub()) {
            return "invalid SimpleBlockingStub, please check etcd configuration";
        }

        try {
            final HelloReply response = stubWrapper.getSimpleBlockingStub().sayHello(HelloRequest.newBuilder().setName(name).build());
            return response.getMessage();
        } catch (final StatusRuntimeException e) {
            return "FAILED with " + e.getStatus().getCode().name();
        }
    }
}

- Add a controller class, GrpcClientController, that exposes an HTTP endpoint. The endpoint calls GrpcClientService, which ultimately performs the remote gRPC call:

package com.bolingcavalry.dynamicrpcaddr;

import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.bind.annotation.RestController;

@RestController
public class GrpcClientController {

    @Autowired
    private GrpcClientService grpcClientService;

    @RequestMapping("/")
    public String printMessage(@RequestParam(defaultValue = "will") String name) {
        return grpcClientService.sendMessage(name);
    }
}

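- Next, add a controller class, RefreshStubInstanceController, that exposes an HTTP endpoint named refreshstub. It removes the stubWrapper bean and registers it again, so each call to refreshstub fetches the server information from etcd and re-instantiates the SimpleBlockingStub member variable. This is how the client obtains the server address dynamically: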

package com.bolingcavalry.dynamicrpcaddr;

import com.bolingcavalry.grpctutorials.lib.SimpleGrpc;
import org.springframework.beans.BeansException;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.beans.factory.support.AbstractBeanDefinition;
import org.springframework.beans.factory.support.BeanDefinitionBuilder;
import org.springframework.beans.factory.support.BeanDefinitionRegistry;
import org.springframework.beans.factory.support.DefaultListableBeanFactory;
import org.springframework.context.ApplicationContext;
import org.springframework.context.ApplicationContextAware;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.bind.annotation.RestController;

@RestController
public class RefreshStubInstanceController implements ApplicationContextAware {

    private ApplicationContext applicationContext;

    @Autowired
    private GrpcClientService grpcClientService;

    @Override
    public void setApplicationContext(ApplicationContext applicationContext) throws BeansException {
        this.applicationContext = applicationContext;
    }

    @RequestMapping("/refreshstub")
    public String refreshstub() {
        String beanName = "stubWrapper";

        // Obtain the BeanFactory
        DefaultListableBeanFactory defaultListableBeanFactory = (DefaultListableBeanFactory) applicationContext.getAutowireCapableBeanFactory();

        // Remove the existing bean
        defaultListableBeanFactory.removeBeanDefinition(beanName);

        // Build the bean definition
        BeanDefinitionBuilder beanDefinitionBuilder = BeanDefinitionBuilder.genericBeanDefinition(StubWrapper.class);

        // Register the bean dynamically
        defaultListableBeanFactory.registerBeanDefinition(beanName, beanDefinitionBuilder.getBeanDefinition());

        // Update the reference (note: the applicationContext.getBean call matters, because it triggers instantiation of StubWrapper)
        grpcClientService.setStubWrapper(applicationContext.getBean(StubWrapper.class));

        return "Refresh success";
    }
}
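The remove, re-register, and `getBean` sequence can be easier to follow in a framework-free model. The sketch below is a toy registry, not Spring's implementation: `ToyBeanRegistry`, `RefreshDemo`, and the string that stands in for the stub are all hypothetical names invented for illustration. It shows why a cached singleton keeps the old address until its definition is removed, and why the instance is rebuilt only when `getBean` is called.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Supplier;

/** Toy stand-in for a bean factory: definitions say how to build a bean, singletons cache built instances. */
final class ToyBeanRegistry {
    private final Map<String, Supplier<Object>> definitions = new HashMap<>();
    private final Map<String, Object> singletons = new HashMap<>();

    void registerBeanDefinition(String name, Supplier<Object> definition) {
        definitions.put(name, definition);
    }

    /** Removing a definition also discards the cached instance. */
    void removeBeanDefinition(String name) {
        definitions.remove(name);
        singletons.remove(name);
    }

    /** Builds the bean on first access; the supplier plays the role of constructor plus @PostConstruct. */
    Object getBean(String name) {
        Supplier<Object> definition = definitions.get(name);
        if (definition == null) {
            throw new IllegalStateException("No bean named " + name);
        }
        return singletons.computeIfAbsent(name, key -> definition.get());
    }
}

/** Hypothetical demo: addressSource stands in for the value stored in etcd. */
final class RefreshDemo {
    static String[] run() {
        String[] addressSource = {"192.168.50.5:9898"};
        ToyBeanRegistry registry = new ToyBeanRegistry();
        registry.registerBeanDefinition("stubWrapper", () -> "stub -> " + addressSource[0]);

        String before = (String) registry.getBean("stubWrapper");

        // The server moves to a new port and the stored address is updated
        addressSource[0] = "192.168.50.5:9899";
        // Still the cached instance, so still the old address
        String stale = (String) registry.getBean("stubWrapper");

        // Refresh: remove, re-register, then call getBean to force a rebuild
        registry.removeBeanDefinition("stubWrapper");
        registry.registerBeanDefinition("stubWrapper", () -> "stub -> " + addressSource[0]);
        String after = (String) registry.getBean("stubWrapper");

        return new String[] {before, stale, after};
    }
}
```

`RefreshDemo.run()` returns the stub description before the port change, after the change but before the refresh (still the old address), and after the refresh (the new address). In the real controller, the extra `setStubWrapper` call is needed because `GrpcClientService` holds its own reference to the old instance.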

- Coding is complete; time to verify.

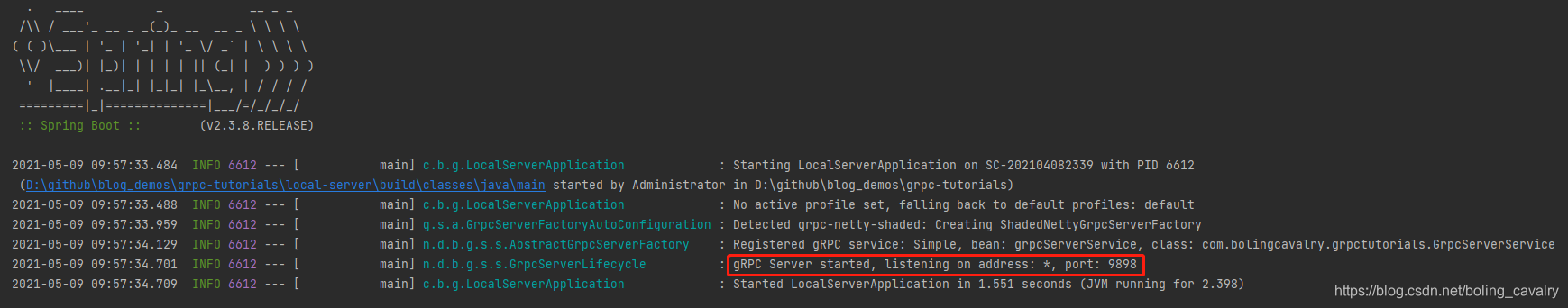

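Deploy the gRPC server application

Deploying the gRPC server application is simple: just start the local-server application.

Deploy etcd

- To keep things simple, my etcd cluster is deployed with docker. The corresponding docker-compose.yml file is as follows: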

version: '3'
services:
  etcd1:
    image: "quay.io/coreos/etcd:v3.4.7"
    entrypoint: /usr/local/bin/etcd
    command:
      - '--name=etcd1'
      - '--data-dir=/etcd_data'
      - '--initial-advertise-peer-urls=http://etcd1:2380'
      - '--listen-peer-urls=http://0.0.0.0:2380'
      - '--listen-client-urls=http://0.0.0.0:2379'
      - '--advertise-client-urls=http://etcd1:2379'
      - '--initial-cluster-token=etcd-cluster'
      - '--heartbeat-interval=250'
      - '--election-timeout=1250'
      - '--initial-cluster=etcd1=http://etcd1:2380,etcd2=http://etcd2:2380,etcd3=http://etcd3:2380'
      - '--initial-cluster-state=new'
    ports:
      - 2379:2379
    volumes:
      - ./store/etcd1/data:/etcd_data
  etcd2:
    image: "quay.io/coreos/etcd:v3.4.7"
    entrypoint: /usr/local/bin/etcd
    command:
      - '--name=etcd2'
      - '--data-dir=/etcd_data'
      - '--initial-advertise-peer-urls=http://etcd2:2380'
      - '--listen-peer-urls=http://0.0.0.0:2380'
      - '--listen-client-urls=http://0.0.0.0:2379'
      - '--advertise-client-urls=http://etcd2:2379'
      - '--initial-cluster-token=etcd-cluster'
      - '--heartbeat-interval=250'
      - '--election-timeout=1250'
      - '--initial-cluster=etcd1=http://etcd1:2380,etcd2=http://etcd2:2380,etcd3=http://etcd3:2380'
      - '--initial-cluster-state=new'
    ports:
      - 2380:2379
    volumes:
      - ./store/etcd2/data:/etcd_data
  etcd3:
    image: "quay.io/coreos/etcd:v3.4.7"
    entrypoint: /usr/local/bin/etcd
    command:
      - '--name=etcd3'
      - '--data-dir=/etcd_data'
      - '--initial-advertise-peer-urls=http://etcd3:2380'
      - '--listen-peer-urls=http://0.0.0.0:2380'
      - '--listen-client-urls=http://0.0.0.0:2379'
      - '--advertise-client-urls=http://etcd3:2379'
      - '--initial-cluster-token=etcd-cluster'
      - '--heartbeat-interval=250'
      - '--election-timeout=1250'
      - '--initial-cluster=etcd1=http://etcd1:2380,etcd2=http://etcd2:2380,etcd3=http://etcd3:2380'
      - '--initial-cluster-state=new'
    ports:
      - 2381:2379
    volumes:
      - ./store/etcd3/data:/etcd_data

- With the file above in place, run docker-compose up -d to create the cluster.
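The compose file publishes the client port (2379) of the three members on host ports 2379, 2380, and 2381, and `StubWrapper` splits the `grpc.etcdendpoints` property on commas before handing it to the etcd client. Below is a minimal sketch of validating such a list up front, assuming each endpoint is written as `http://host:port`. The class `EtcdEndpoints` and the `127.0.0.1` addresses used with it are hypothetical and chosen only for illustration.

```java
import java.net.URI;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper; not part of the original project. */
final class EtcdEndpoints {

    /**
     * Splits a comma-separated endpoint list and rejects any entry that lacks
     * a scheme, a host, or an explicit port. Blank entries are skipped.
     */
    static List<URI> parse(String commaSeparated) {
        List<URI> result = new ArrayList<>();
        for (String part : commaSeparated.split(",")) {
            String trimmed = part.trim();
            if (trimmed.isEmpty()) {
                continue;
            }
            // URI.create throws IllegalArgumentException for syntactically invalid input
            URI uri = URI.create(trimmed);
            if (uri.getScheme() == null || uri.getHost() == null || uri.getPort() == -1) {
                throw new IllegalArgumentException("Invalid etcd endpoint: " + trimmed);
            }
            result.add(uri);
        }
        return result;
    }
}
```

For example, `EtcdEndpoints.parse("http://127.0.0.1:2379,http://127.0.0.1:2380,http://127.0.0.1:2381")` yields three URIs, one per published port, while an entry without a scheme is rejected with an `IllegalArgumentException`.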

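Write the server application's IP address and port into etcd

- In my environment, local-server runs on a server with IP 192.168.50.5 and port 9898, so the following command writes the local-server information into etcd: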

docker exec 08_etcd2_1 /usr/local/bin/etcdctl put /grpc/local-server 192.168.50.5:9898

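Start the client application

- Open DynamicServerAddressDemoApplication.java and click the position marked by the red box in the figure below to start the client application:

- Note the log in the red box in the figure below; it confirms that the client application fetched the server information from etcd successfully: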

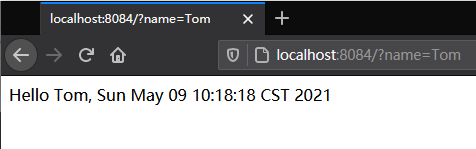

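- Access the HTTP endpoint of the get-service-addr-from-etcd application in a browser. A response comes back, which shows that the gRPC call succeeded:

- Check the local-server console; as the red box in the figure below shows, the remote call really was executed:

Restart the server with a different port

- To verify that dynamically fetching the server information works, first change the port of the local-server application to 9899, as shown in the red box below:

- After the change, restart local-server. As the red box below shows, the gRPC port is now 9899: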

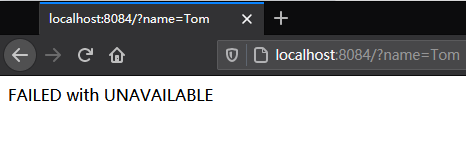

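- Now access the HTTP endpoint of get-service-addr-from-etcd again. Because get-service-addr-from-etcd does not know that local-server's listening port has changed, it still calls port 9898 and, unsurprisingly, the request fails:

Update the server's port information in etcd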

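Summary: a mind map of the Kafka architecture (xmind)

There are far too many questions one could ask about Kafka. After several days of digging, I settled on 44: 17 basic, 15 intermediate, and 12 advanced, each aimed at a real pain point. If you hold off on reading the answers, how many can you answer?

If your Kafka knowledge is rusty, start with my hand-drawn summary mind map (the xmind file cannot be uploaded, so the article uses an image version) to review the overall architecture.

Once you have reviewed the material and worked through the interview questions, if you want to study Kafka and its source code in more depth, the following 《手写“kafka”》 ("Hand-written Kafka") material is a good choice.

- Getting started with Kafka
- Why choose Kafka
- Installing, managing, and configuring Kafka
- Kafka clusters
- A first Kafka program
- Kafka producers
- Kafka consumers
- Understanding Kafka in depth
- Reliable data delivery
- Integrating Spring with Kafka
- Integrating Spring Boot with Kafka
- Kafka in practice: smoothing traffic peaks
- Data pipelines and stream processing (overview only)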
