      targetPort: 9092
      nodePort: 30093
      name: client
  type: NodePort
  selector:
    statefulset.kubernetes.io/pod-name: kafka-1
---
apiVersion: v1
kind: Service
metadata:
  name: kafka-cs-2
  labels:
    app: kafka
spec:
  ports:
    - port: 9092
      targetPort: 9092
      nodePort: 30094
      name: client
  type: NodePort
  selector:
    statefulset.kubernetes.io/pod-name: kafka-2
---
# policy/v1beta1 is no longer served as of Kubernetes 1.25; newer clusters need policy/v1.
apiVersion: policy/v1beta1
kind: PodDisruptionBudget
metadata:
  name: kafka-pdb
spec:
  selector:
    matchLabels:
      app: kafka
  minAvailable: 2
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: kafka
spec:
  serviceName: kafka-hs
  replicas: 3
  selector:
    matchLabels:
      app: kafka
  template:
    metadata:
      labels:
        app: kafka
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: "app"
                    operator: In
                    values:
                      - kafka
              topologyKey: "kubernetes.io/hostname"
        podAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
            - weight: 1
              podAffinityTerm:
                labelSelector:
                  matchExpressions:
                    - key: "app"
                      operator: In
                      values:
                        - zk
                topologyKey: "kubernetes.io/hostname"
      terminationGracePeriodSeconds: 300
      containers:
        - name: kafka
          imagePullPolicy: IfNotPresent
          image: zhaoguanghui6/kubernetes-kafka:v1
          resources:
            requests:
              memory: "1Gi"
              cpu: 1000m
          ports:
            - containerPort: 9092
              name: server
          command:
            - sh
            - -c
            - "exec kafka-server-start.sh /opt/kafka/config/server.properties --override broker.id=${HOSTNAME##*-} … $((${HOSTNAME##*-}+30092))
              --override zookeeper.connect=zk-0.zk-hs.default.svc.cluster.local:2181,zk-0.zk-hs.default.svc.cluster.local:2181,zk-0.zk-hs.default.svc.cluster.local:2181
              --override log.dir=/var/lib/kafka
              --override auto.create.topics.enable=true
              --override auto.leader.rebalance.enable=true
              --override background.threads=10
              --override compression.type=producer
              --override delete.topic.enable=true
              --override leader.imbalance.check.interval.seconds=300
              --override leader.imbalance.per.broker.percentage=10
              --override log.flush.interval.messages=9223372036854775807
              --override log.flush.offset.checkpoint.interval.ms=60000
              --override log.flush.scheduler.interval.ms=9223372036854775807
              --override log.retention.bytes=-1
              --override log.retention.hours=168
              --override log.roll.hours=168
              --override log.roll.jitter.hours=0
              --override log.segment.bytes=1073741824
              --override log.segment.delete.delay.ms=60000
              --override message.max.bytes=1000012
              --override min.insync.replicas=1
              --override num.io.threads=8
              --override num.network.threads=3
              --override num.recovery.threads.per.data.dir=1
              --override num.replica.fetchers=1
              --override offset.metadata.max.bytes=4096
              --override offsets.commit.required.acks=-1
              --override offsets.commit.timeout.ms=5000
              --override offsets.load.buffer.size=5242880
              --override offsets.retention.check.interval.ms=600000
              --override offsets.retention.minutes=1440
              --override offsets.topic.compression.codec=0
              --override offsets.topic.num.partitions=50
              --override offsets.topic.replication.factor=3
              --override offsets.topic.segment.bytes=104857600
              --override queued.max.requests=500
              --override quota.consumer.default=9223372036854775807
              --override quota.producer.default=9223372036854775807
              --override replica.fetch.min.bytes=1
              --override replica.fetch.wait.max.ms=500
              --override replica.high.watermark.checkpoint.interval.ms=5000
              --override replica.lag.time.max.ms=10000
              --override replica.socket.receive.buffer.bytes=65536
              --override replica.socket.timeout.ms=30000
              --override request.timeout.ms=30000
              --override socket.receive.buffer.bytes=102400
              --override socket.request.max.bytes=104857600
              --override socket.send.buffer.bytes=102400
              --override unclean.leader.election.enable=true
              --override zookeeper.session.timeout.ms=6000
              --override zookeeper.set.acl=false
              --override broker.id.generation.enable=true
              --override connections.max.idle.ms=600000
              --override controlled.shutdown.enable=true
              --override controlled.shutdown.max.retries=3
              --override controlled.shutdown.retry.backoff.ms=5000
              --override controller.socket.timeout.ms=30000
              --override default.replication.factor=1
              --override fetch.purgatory.purge.interval.requests=1000
              --override group.max.session.timeout.ms=300000
              --override group.min.session.timeout.ms=6000
              --override inter.broker.protocol.version=0.10.2-IV0
              --override log.cleaner.backoff.ms=15000
              --override log.cleaner.dedupe.buffer.size=134217728
              --override log.cleaner.delete.retention.ms=86400000
              --override log.cleaner.enable=true
              --override log.cleaner.io.buffer.load.factor=0.9
              --override log.cleaner.io.buffer.size=524288
              --override log.cleaner.io.max.bytes.per.second=1.7976931348623157E308
              --override log.cleaner.min.cleanable.ratio=0.5
              --override log.cleaner.min.compaction.lag.ms=0
              --override log.cleaner.threads=1
              --override log.cleanup.policy=delete
              --override log.index.interval.bytes=4096
              --override log.index.size.max.bytes=10485760
              --override log.message.timestamp.difference.max.ms=9223372036854775807"
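
The start command above relies on two shell expansions: `${HOSTNAME##*-}` strips everything up to the last `-` in the pod name, leaving the StatefulSet ordinal, and `$((ordinal + 30092))` turns that ordinal into a port number. The following is a minimal sketch of that arithmetic, not part of the manifest. The function name `derive` is hypothetical, and it assumes pod names of the form `kafka-<ordinal>` with NodePorts starting at 30092, which matches the `kafka-cs-1` (30093) and `kafka-cs-2` (30094) Services shown earlier.

```shell
#!/bin/sh
# Sketch only: mirrors the expansions used in the container command.
# Assumption: pod names end in "-<ordinal>" and NodePorts start at 30092.

derive() {
  pod_name="$1"
  # Remove the longest prefix ending in "-", leaving the ordinal (kafka-2 -> 2).
  ordinal="${pod_name##*-}"
  # Offset the ordinal by the base NodePort used in this article.
  node_port=$((ordinal + 30092))
  echo "broker.id=${ordinal} nodePort=${node_port}"
}

for pod in kafka-0 kafka-1 kafka-2; do
  derive "$pod"
done
```

Inside the pod, `HOSTNAME` is the pod name, so each broker gets a distinct `broker.id` and a port that lines up with its own NodePort Service without any per-pod configuration.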

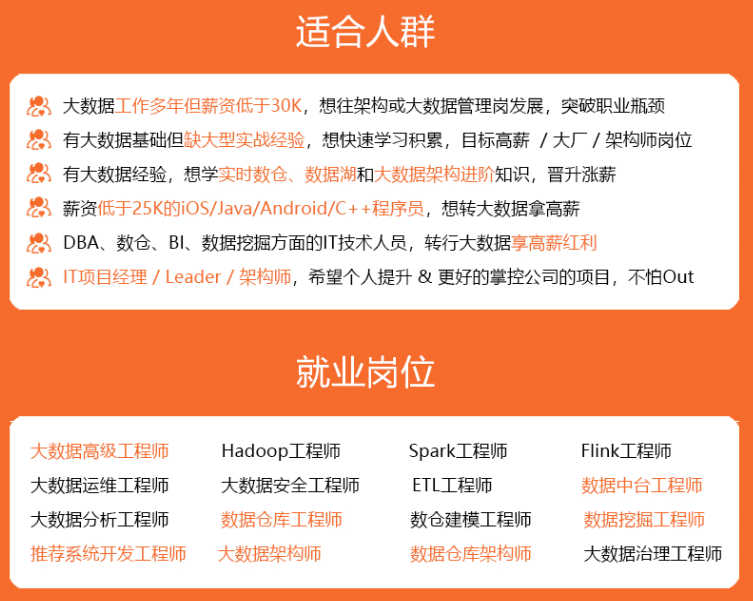

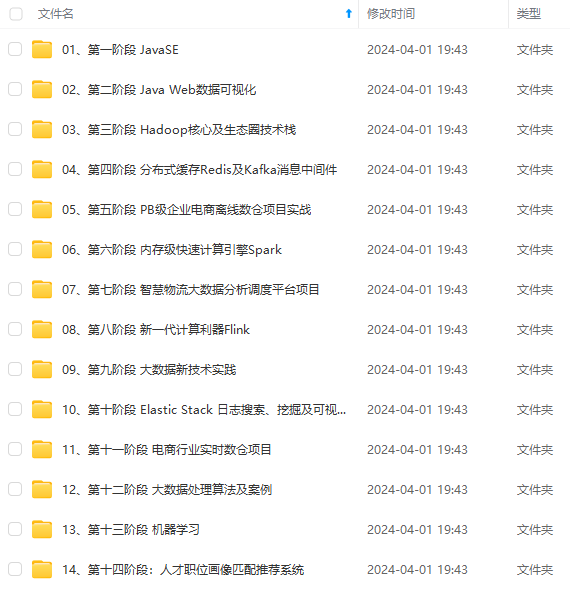

自我介绍一下,小编13年上海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。

深知大多数大数据工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但对于培训机构动则几千的学费,着实压力不小。自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

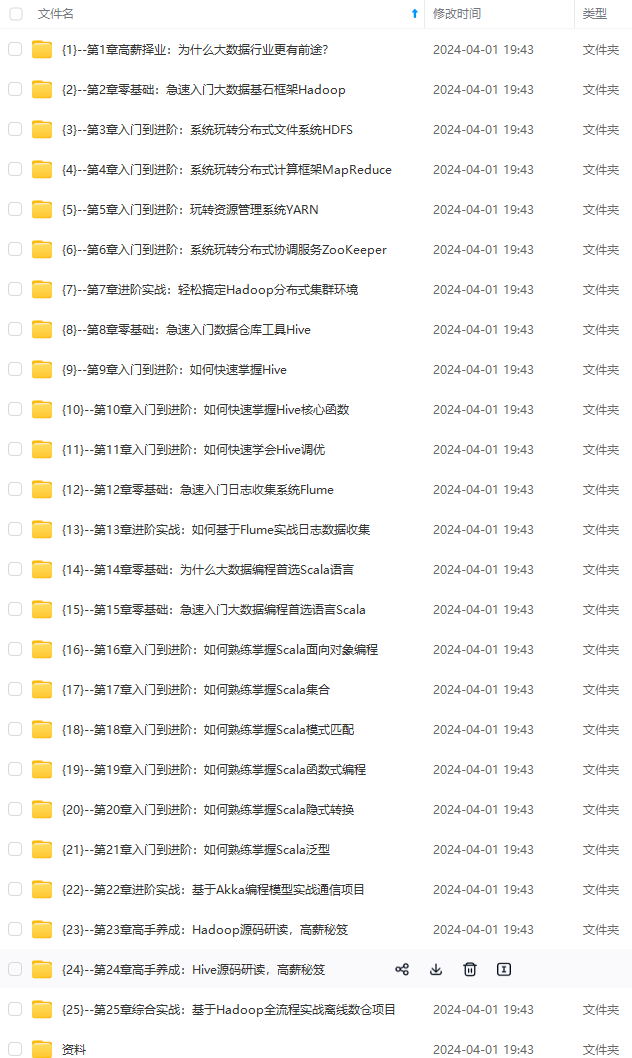

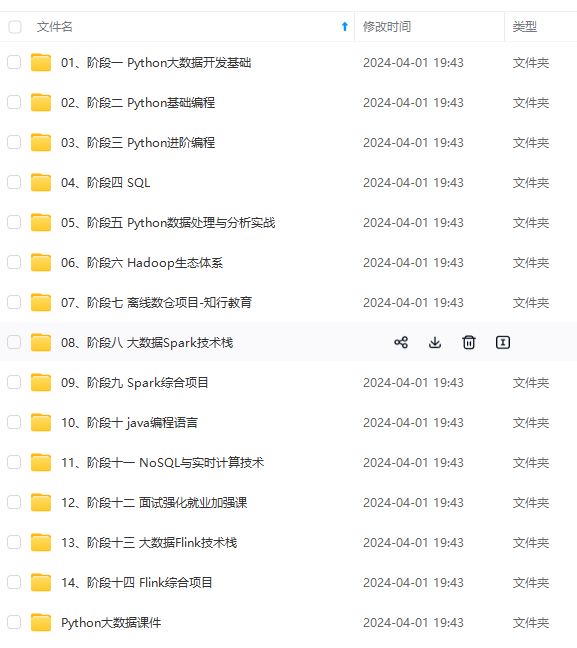

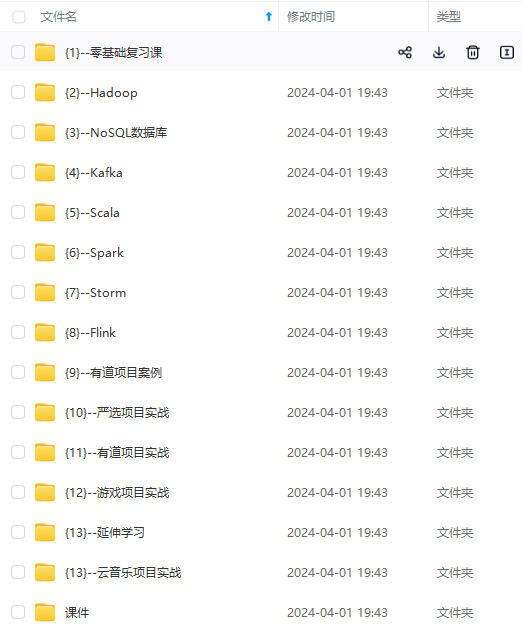

因此收集整理了一份《2024年大数据全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友。

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,基本涵盖了95%以上大数据开发知识点,真正体系化!

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加VX:vip204888 (备注大数据获取)

一个人可以走的很快,但一群人才能走的更远。不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎扫码加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

有帮助,可以添加VX:vip204888 (备注大数据获取)**

[外链图片转存中…(img-z9LhLPlz-1712979954338)]

一个人可以走的很快,但一群人才能走的更远。不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎扫码加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

498

498

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?