hdfs/b2-test-datanode-22-151.sh@hadoop.com

hdfs/b2-test-datanode-22-63.sh@hadoop.com

(1) On a server in each of the two clusters, verify that Kerberos authentication works and that user lookups resolve correctly

SSD1 cluster

#id risk_user1

uid=90002(risk_user1) gid=30002(pt_group) groups=30002(pt_group)

[bx-11:02:41root@a2-prod-buffer-165-105 /home/admin]

#/usr/bin/kinit -k -t /home/admin/hadoop.keytab hadoop/admin

[bx-11:03:28root@a2-prod-buffer-165-105 /home/admin]

#klist

Ticket cache: FILE:/home/admin/cache_file/krb5cc_0

Default principal: hadoop/admin@hadoop.com

Valid starting Expires Service principal

03/22/2024 11:03:28 03/23/2024 11:03:28 krbtgt/hadoop.com@hadoop.com

renew until 03/29/2024 11:03:28

[bx-11:03:30root@a2-prod-buffer-165-105 /home/admin]

#hadoop fs -ls /

Found 3 items

drwxr-xr-x - hadoop supergroup 0 2024-03-19 16:30 /system

drwxrwxrwt - hdfs supergroup 0 2024-03-14 10:07 /tmp

drwxr-xr-x - hdfs supergroup 0 2024-03-14 15:40 /user

[bx-11:03:37root@a2-prod-buffer-165-105 /home/admin]

#hive

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=512M; support was removed in 8.0

2024-03-22 11:04:55,315 WARN [main] mapreduce.TableMapReduceUtil: The hbase-prefix-tree module jar containing PrefixTreeCodec is not present. Continuing without it.

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=512M; support was removed in 8.0

Logging initialized using configuration in jar:file:/opt/cloudera/parcels/CDH-5.8.0-1.cdh5.8.0.p0.42/jars/hive-common-1.1.0-cdh5.8.0.jar!/hive-log4j.properties

WARNING: Hive CLI is deprecated and migration to Beeline is recommended.

hive> show databases;

OK

default

ssb

SSD2 cluster

#hadoop fs -ls /

Found 3 items

drwxrwxrwt - hdfs supergroup 0 2024-03-21 16:51 /tmp

drwxr-xr-x - hdfs supergroup 0 2024-03-21 16:51 /user

drwxr-xr-x - hadoop supergroup 0 2024-03-22 11:04 /zw02

[bx-11:04:15root@a2-prod-datanode-160-46 /root]

#id risk_user1

uid=90002(risk_user1) gid=30002(pt_group) groups=30002(pt_group)

[bx-11:09:05root@a2-prod-datanode-160-46 /root]

#hadoop fs -ls /

Found 3 items

drwxrwxrwt - hdfs supergroup 0 2024-03-21 16:51 /tmp

drwxr-xr-x - hdfs supergroup 0 2024-03-21 16:51 /user

drwxr-xr-x - hadoop supergroup 0 2024-03-22 11:04 /zw02

[bx-11:09:09root@a2-prod-datanode-160-46 /root]

#hive

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=512M; support was removed in 8.0

2024-03-22 11:09:13,213 WARN [main] mapreduce.TableMapReduceUtil: The hbase-prefix-tree module jar containing PrefixTreeCodec is not present. Continuing without it.

Java HotSpot(TM) 64-Bit Server VM warning: ignoring option MaxPermSize=512M; support was removed in 8.0

Logging initialized using configuration in jar:file:/opt/cloudera/parcels/CDH-5.8.0-1.cdh5.8.0.p0.42/jars/hive-common-1.1.0-cdh5.8.0.jar!/hive-log4j.properties

WARNING: Hive CLI is deprecated and migration to Beeline is recommended.

hive> show databases;

OK

default

zw02
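The manual checks in step (1) can be collected into one small function so that the same sequence runs on each cluster. This is only a sketch: the function name `check_cluster_access` is hypothetical, and the user, keytab path, and principal passed to it are simply the values that appear in the transcript above.

```shell
# Sketch only: check_cluster_access is a hypothetical helper, not part of
# Hadoop or Kerberos. It repeats the manual checks from step (1).
check_cluster_access() {
  local user="$1" keytab="$2" principal="$3"

  # 1. The operating system must be able to resolve the user.
  id "$user" >/dev/null || { echo "FAIL: user lookup for $user"; return 1; }

  # 2. Obtain a ticket from the keytab, then confirm the cache holds a
  #    readable, unexpired ticket (klist -s prints nothing, only sets status).
  kinit -k -t "$keytab" "$principal" || { echo "FAIL: kinit"; return 1; }
  klist -s || { echo "FAIL: no valid ticket"; return 1; }

  # 3. A root listing shows that HDFS accepts the ticket.
  hadoop fs -ls / >/dev/null || { echo "FAIL: hdfs listing"; return 1; }

  echo "OK: $user"
}
```

On either cluster it could be called as `check_cluster_access risk_user1 /home/admin/hadoop.keytab hadoop/admin`. The Hive check from the transcript is left out because it is interactive.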

(2) Test access to the HDFS clusters from the Hadoop client

SSD2 cluster

[bx-11:02:47root@a2-prod-datanode-160-24 /etc/ansible/roles/ldap-client]

#hadoop fs -ls hdfs://a2-prod-buffer-165-105.sh:8020/

Found 3 items

drwxr-xr-x - hadoop supergroup 0 2024-03-19 16:30 hdfs://a2-prod-buffer-165-105.sh:8020/system

drwxrwxrwt - hdfs supergroup 0 2024-03-14 10:07 hdfs://a2-prod-buffer-165-105.sh:8020/tmp

drwxr-xr-x - hdfs supergroup 0 2024-03-14 15:40 hdfs://a2-prod-buffer-165-105.sh:8020/user

[bx-11:16:43root@a2-prod-datanode-160-24 /etc/ansible/roles/ldap-client]

#hadoop fs -ls hdfs://test2nameservice/

Found 3 items

drwxrwxrwt - hdfs supergroup 0 2024-03-21 16:51 hdfs://test2nameservice/tmp

drwxr-xr-x - hdfs supergroup 0 2024-03-21 16:51 hdfs://test2nameservice/user

drwxr-xr-x - hadoop supergroup 0 2024-03-22 11:04 hdfs://test2nameservice/zw02

SSD1 cluster

[bx-11:15:54root@a2-prod-buffer-165-105 /home/admin]

#hadoop fs -ls hdfs://a2-prod-buffer-165-105.sh:8020/

Found 3 items

drwxr-xr-x - hadoop supergroup 0 2024-03-19 16:30 hdfs://a2-prod-buffer-165-105.sh:8020/system

drwxrwxrwt - hdfs supergroup 0 2024-03-14 10:07 hdfs://a2-prod-buffer-165-105.sh:8020/tmp

drwxr-xr-x - hdfs supergroup 0 2024-03-14 15:40 hdfs://a2-prod-buffer-165-105.sh:8020/user

[bx-11:16:00root@a2-prod-buffer-165-105 /home/admin]

#hadoop fs -ls hdfs://test2nameservice/

-ls: java.net.UnknownHostException: test2nameservice

Usage: hadoop fs [generic options] -ls [-d] [-h] [-R] [<path> ...]

[bx-11:16:13root@a2-prod-buffer-165-105 /home/admin]

#hadoop fs -ls hdfs://a2-prod-datanode-160-24.sh:8020/

Found 3 items

drwxrwxrwt - hdfs supergroup 0 2024-03-21 16:51 hdfs://a2-prod-datanode-160-24.sh:8020/tmp

drwxr-xr-x - hdfs supergroup 0 2024-03-21 16:51 hdfs://a2-prod-datanode-160-24.sh:8020/user

drwxr-xr-x - hadoop supergroup 0 2024-03-22 11:04 hdfs://a2-prod-datanode-160-24.sh:8020/zw02

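A cluster in another data center can be accessed, but the URI must name a specific NameNode address (in hostname:port form). Access through the nameservice name does not work across data centers: in the test above, the SSD1 client fails with java.net.UnknownHostException: test2nameservice, presumably because that logical nameservice is defined only in the SSD2 client configuration.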
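To make the explicit hostname:port form less error-prone, the URI can be built by a small helper. This is a minimal sketch: `namenode_uri` is a hypothetical function name, and the default port 8020 is just the one used in the transcript above.

```shell
# Sketch only: namenode_uri is a hypothetical helper that builds a fully
# qualified HDFS URI from an explicit NameNode host and RPC port.
namenode_uri() {
  local host="$1" port="${2:-8020}" path="${3:-/}"

  # Make sure the path starts with a slash.
  path="/${path#/}"

  printf 'hdfs://%s:%s%s\n' "$host" "$port" "$path"
}
```

From the SSD1 side, the SSD2 listing would then be `hadoop fs -ls "$(namenode_uri a2-prod-datanode-160-24.sh 8020 /)"`. One caveat to keep in mind: an explicit address pins the client to a single NameNode, so if the remote cluster runs NameNode HA and that node becomes standby, the request is likely to fail until the URI is changed to point at the active node.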

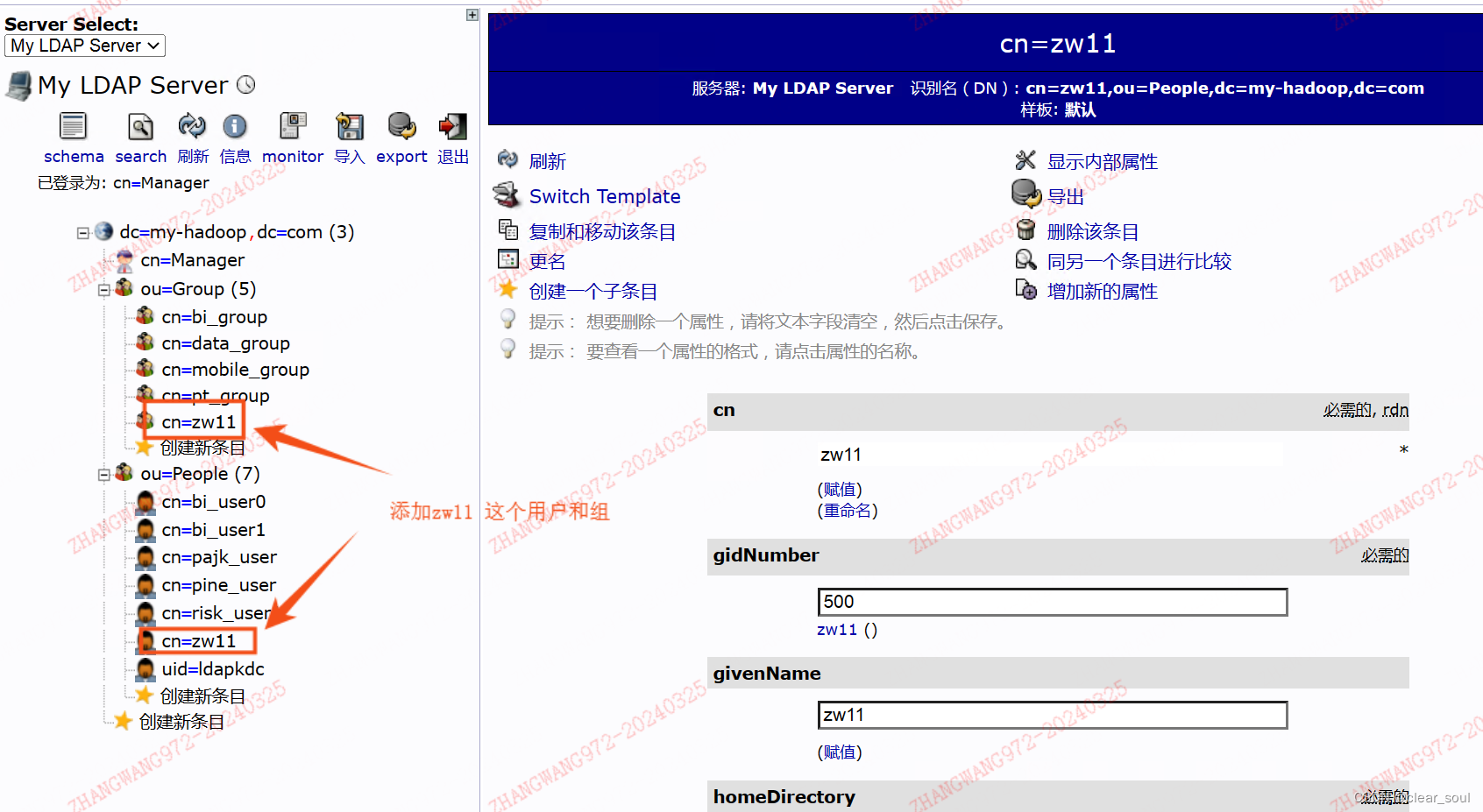

(3) Create a new user in LDAP and verify that it can use the clusters

Check that the user resolves correctly

####SSD2

[bx-17:36:41root@a2-prod-datanode-160-46 /root]

#id zw11

uid=1000(zw11) gid=500(zw11) groups=500(zw11)

####SSD1

[bx-17:39:43root@a2-buffer-server-168-156 /root]

#id zw11

uid=1000(zw11) gid=500(zw11) groups=500(zw11)

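Add a principal for the user in Kerberos, then authenticate on the client machine with the user name and password.

SSD2 cluster test

###Create a principal for the LDAP-authenticated user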

[bx-17:36:45root@a2-prod-datanode-160-46 /root]

#kadmin.local -q "addprinc -pw zw11 zw11/zw11@hadoop.com"

Authenticating as principal hadoop/admin@hadoop.com with password.

WARNING: no policy specified for zw11/zw11@hadoop.com; defaulting to no policy

Principal "zw11/zw11@hadoop.com" created.

[bx-17:42:50root@a2-prod-datanode-160-46 /root]

#klist
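The transcript stops at this point. As a rough sketch of the client-side step described above (authenticating with the user name and password, then using the cluster), the sequence might look like the following. The function name `login_with_password` is hypothetical, and no output is shown because none is available in the source.

```shell
# Sketch only: login_with_password is a hypothetical wrapper around the
# client-side login for step (3).
login_with_password() {
  local principal="$1"

  # Without a keytab, kinit asks for the principal's password.
  kinit "$principal" || { echo "FAIL: kinit for $principal"; return 1; }

  # Show the ticket cache, then confirm HDFS accepts the new ticket.
  klist || return 1
  hadoop fs -ls / || { echo "FAIL: hdfs listing as $principal"; return 1; }
}
```

For the principal created above, the call would be `login_with_password zw11/zw11@hadoop.com`, entering the password that was set with `addprinc -pw`.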
