--conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer'

Before running any job, it is recommended to import the following:

import org.apache.hudi.QuickstartUtils._
import scala.collection.JavaConversions._
import org.apache.spark.sql.SaveMode._
import org.apache.hudi.DataSourceReadOptions._
import org.apache.hudi.DataSourceWriteOptions._
import org.apache.hudi.config.HoodieWriteConfig._

Insert data

import org.apache.spark.sql._
import org.apache.spark.sql.types._

// Schema of the sample table: id is the record key, ts is the precombine field.
val fields = Array(
  StructField("id", IntegerType, true),
  StructField("name", StringType, true),
  StructField("price", DoubleType, true),
  StructField("ts", LongType, true)
)
val simpleSchema = StructType(fields)
val data = Seq(Row(2, "a2", 200.0, 100L))
// Seq[Row] is converted to java.util.List[Row] by the JavaConversions import.
val df = spark.createDataFrame(data, simpleSchema)

df.write.format("hudi").
  option(PRECOMBINE_FIELD_OPT_KEY, "ts").
  option(RECORDKEY_FIELD_OPT_KEY, "id").
  option(TABLE_NAME, "hudi_mor_tbl_shell").
  option(TABLE_TYPE_OPT_KEY, "MERGE_ON_READ").
  mode(Append).
  save("hdfs:///hudi/hudi_mor_tbl_shell")

Verify:

val df = spark.
  read.
  format("hudi").
  load("hdfs:///hudi/hudi_mor_tbl_shell")
df.createOrReplaceTempView("hudi_mor_tbl_shell")
spark.sql("select * from hudi_mor_tbl_shell").show()

Regular query

val df = spark.
  read.
  format("hudi").
  load("hdfs:///hudi/hudi_mor_tbl_shell")
df.createOrReplaceTempView("hudi_mor_tbl_shell")
spark.sql("select * from hudi_mor_tbl_shell").show()

Incremental query

First insert or modify one more record (see the Insert data and Modify data sections). Then run:

spark.
  read.
  format("hudi").
  load("hdfs:///hudi/hudi_mor_tbl_shell").
  createOrReplaceTempView("hudi_mor_tbl_shell")

// Collect the distinct commit times, newest first.
val commits = spark.sql("select distinct(_hoodie_commit_time) as commitTime from hudi_mor_tbl_shell order by commitTime desc").
  map(k => k.getString(0)).take(50)
// The list is in descending order, so the last element is the oldest commit.
val beginTime = commits(commits.length - 1)

// Read only the records committed after beginTime.
val idf = spark.read.format("hudi").
  option(QUERY_TYPE_OPT_KEY, QUERY_TYPE_INCREMENTAL_OPT_VAL).
  option(BEGIN_INSTANTTIME_OPT_KEY, beginTime).
  load("hdfs:///hudi/hudi_mor_tbl_shell")
idf.createOrReplaceTempView("hudi_mor_tbl_shell_incremental")
spark.sql("select _hoodie_commit_time, id, name, price, ts from hudi_mor_tbl_shell_incremental").show()

Only the most recently inserted or modified data is returned.
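Why only the newest data comes back can be illustrated without a cluster. The following is a minimal sketch in plain Scala, not Hudi's implementation. It assumes the begin instant is exclusive, which matches the result observed above, and the two commit times are hypothetical values in Hudi's timestamp-string style.

```scala
// Simplified model of how the begin instant filters commits; not Hudi code.
// Hypothetical commit times, newest first, as the query above returns them.
val commitTimes = Seq("20240408101500", "20240408100000")

// Same selection as in the walkthrough: the last element is the oldest commit.
val beginTime = commitTimes(commitTimes.length - 1)

// Assumption: the begin instant is exclusive, so only commits strictly
// after it are visible to the incremental query.
val visible = commitTimes.filter(_.compareTo(beginTime) > 0)

println(visible) // List(20240408101500)
```

With only one commit in the table, the same selection would leave nothing visible, which is why an extra insert or update is needed before trying the incremental query.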

Modify data

import org.apache.spark.sql._
import org.apache.spark.sql.types._

val fields = Array(
  StructField("id", IntegerType, true),
  StructField("name", StringType, true),
  StructField("price", DoubleType, true),
  StructField("ts", LongType, true)
)
val simpleSchema = StructType(fields)
// Same record key (id = 2) as the inserted row, with a new price and a larger ts.
val data = Seq(Row(2, "a2", 400.0, 2222L))
val df = spark.createDataFrame(data, simpleSchema)

df.write.format("hudi").
  option(PRECOMBINE_FIELD_OPT_KEY, "ts").
  option(RECORDKEY_FIELD_OPT_KEY, "id").
  option(TABLE_NAME, "hudi_mor_tbl_shell").
  option(TABLE_TYPE_OPT_KEY, "MERGE_ON_READ").
  mode(Append).
  save("hdfs:///hudi/hudi_mor_tbl_shell")

To verify, use the regular query.
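The write above uses Append mode yet changes an existing row, because Hudi's default write operation is upsert and the row is matched by its record key (id). Here is a rough in-memory sketch of that behavior in plain Scala. The `Item` case class and the `upsert` helper are hypothetical names introduced for illustration only; this is a simplified model, not how Hudi stores or merges data.

```scala
// Simplified in-memory model of upsert-by-record-key; not Hudi's implementation.
case class Item(id: Int, name: String, price: Double, ts: Long)

// The table is modeled as a map from record key (id) to the stored row.
// An incoming row with an existing key replaces the stored row;
// a row with a new key is added.
def upsert(table: Map[Int, Item], incoming: Seq[Item]): Map[Int, Item] =
  incoming.foldLeft(table)((acc, rec) => acc + (rec.id -> rec))

// Replay the two writes from the walkthrough.
val afterInsert = upsert(Map.empty, Seq(Item(2, "a2", 200.0, 100L)))
val afterUpdate = upsert(afterInsert, Seq(Item(2, "a2", 400.0, 2222L)))

println(afterUpdate.values.toList) // List(Item(2,a2,400.0,2222))
```

The table still holds a single row for id 2, now with the new price, which is what the regular query should show after the second write.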

Insert overwrite

import org.apache.spark.sql._
import org.apache.spark.sql.types._

val fields = Array(
  StructField("id", IntegerType, true),
  StructField("name", StringType, true),
  StructField("price", DoubleType, true),
  StructField("ts", LongType, true)
)
val simpleSchema = StructType(fields)
val data = Seq(Row(99, "a99", 20.0, 900L))
val df = spark.createDataFrame(data, simpleSchema)

// insert_overwrite replaces the existing data with the incoming records.
df.write.format("hudi").
  option(OPERATION.key(), "insert_overwrite").
  option(PRECOMBINE_FIELD.key(), "ts").
  option(RECORDKEY_FIELD.key(), "id").
  option(TBL_NAME.key(), "hudi_mor_tbl_shell").
  option(TABLE_TYPE_OPT_KEY, "MERGE_ON_READ").
  mode(Append).
  save("hdfs:///hudi/hudi_mor_tbl_shell")

To verify, use the regular query. Only the newly written record remains.

Delete data

import org.apache.spark.sql._
import org.apache.spark.sql.types._

val fields = Array(
  StructField("id", IntegerType, true),
  StructField("name", StringType, true),
  StructField("price", DoubleType, true),
  StructField("ts", LongType, true)
)
val simpleSchema = StructType(fields)
// The row to delete is identified by its record key (id = 2).
val data = Seq(Row(2, "a2", 400.0, 2222L))
val df = spark.createDataFrame(data, simpleSchema)

df.write.format("hudi").
  option(OPERATION_OPT_KEY, "delete").
  option(PRECOMBINE_FIELD_OPT_KEY, "ts").
  option(RECORDKEY_FIELD_OPT_KEY, "id").
  option(TABLE_NAME, "hudi_mor_tbl_shell").
  mode(Append).
  save("hdfs:///hudi/hudi_mor_tbl_shell")

To verify, use the regular query.

Using Spark SQL

To start Spark SQL with Hudi:

./spark-sql \
  --master yarn \
  --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer' \
  --conf 'spark.sql.extensions=org.apache.spark.sql.hudi.HoodieSparkSessionExtension' \
  --conf 'spark.sql.catalog.spark_catalog=org.apache.spark.sql.hudi.catalog.HoodieCatalog'

If you are using Hudi 0.11.x, run this instead:

./spark-sql \
  --master yarn \
  --conf 'spark.serializer=org.apache.spark.serializer.KryoSerializer' \
  --conf 'spark.sql.extensions=org.apache.spark.sql.hudi.HoodieSparkSessionExtension'

Create a table:

create table hudi_mor_tbl (
  id int,
  name string,
  price double,
  ts bigint
) using hudi
tblproperties (
  type = 'mor',
  primaryKey = 'id',
  preCombineField = 'ts'
)
location 'hdfs:///hudi/hudi_mor_tbl';

Verify:

show tables;

Insert data

Using SQL:

insert into hudi_mor_tbl select 1, 'a1', 20, 1000;

Verify:

select * from hudi_mor_tbl;

Regular query

Using SQL:

select * from hudi_mor_tbl;

Modify data

Using SQL:

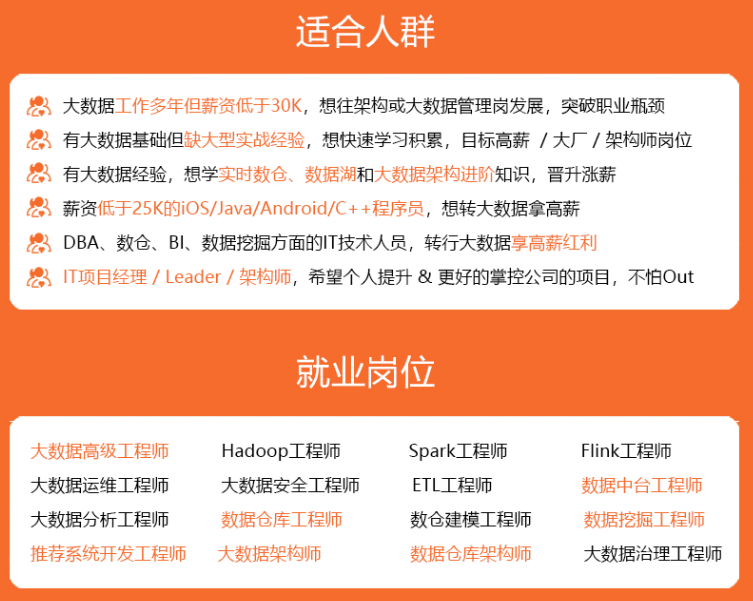
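The update statement for this step is not reproduced in the text. As a hedged sketch only, an update could be issued from spark-shell as shown here. It assumes the shell was started with the same Hudi `--conf` options as `spark-sql` above, that it shares the catalog in which `hudi_mor_tbl` was created, and that the row with id 1 from the insert step exists. The new price and ts values are arbitrary examples.

```scala
// Sketch under the assumptions stated above; `spark` is the session that
// spark-shell provides, and the values are illustrative only.

// Update the row whose primary key (id) is 1.
spark.sql("update hudi_mor_tbl set price = price * 2, ts = 1111 where id = 1")

// Check the result with a regular query.
spark.sql("select id, name, price, ts from hudi_mor_tbl").show()
```

The same `update ... where id = 1` statement can also be typed directly at the `spark-sql` prompt, terminated with a semicolon.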

自我介绍一下,小编13年上海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。

深知大多数大数据工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但对于培训机构动则几千的学费,着实压力不小。自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

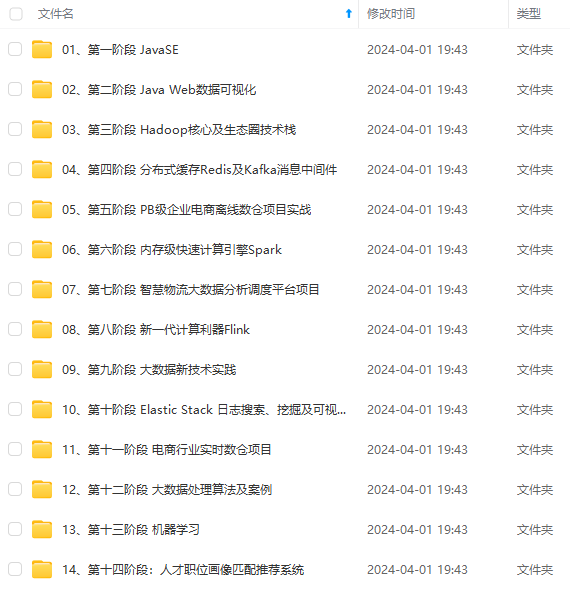

因此收集整理了一份《2024年大数据全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友。

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,基本涵盖了95%以上大数据开发知识点,真正体系化!

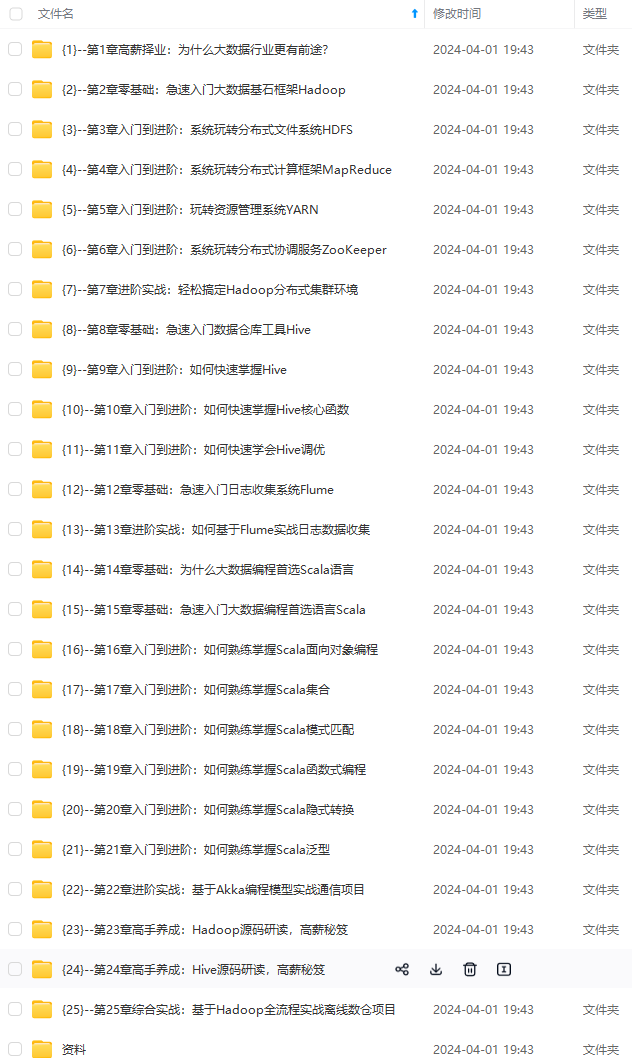

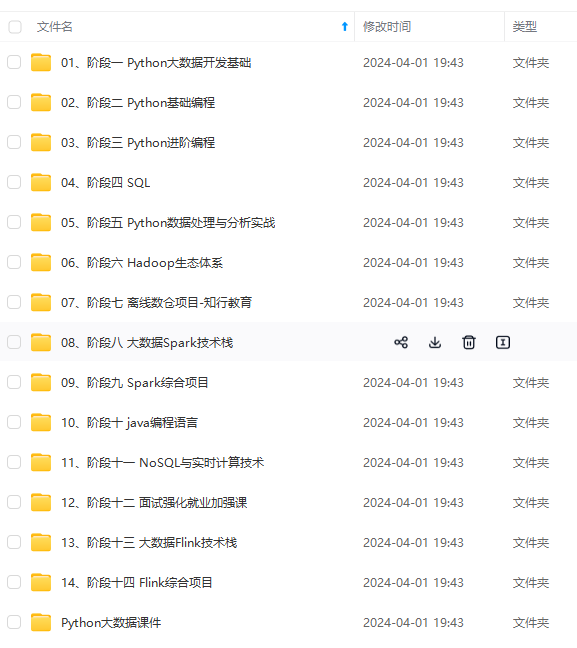

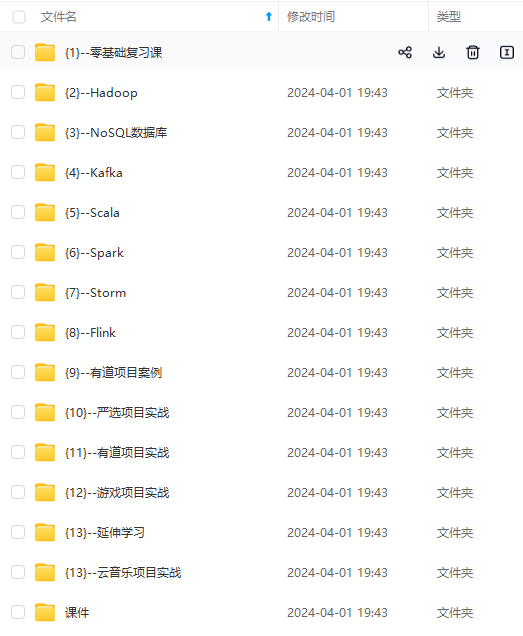

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加VX:vip204888 (备注大数据获取)

g-58m9roh3-1712553319563)]

[外链图片转存中…(img-k7OFziOT-1712553319564)]

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,基本涵盖了95%以上大数据开发知识点,真正体系化!

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加VX:vip204888 (备注大数据获取)

[外链图片转存中…(img-WTqfUHJh-1712553319564)]

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?