Speech Emotion Recognition in Python: Building a System with CNN, LSTM, CNN-LSTM, and Attention-Based Models


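This article covers four model variants: CNN, LSTM, CNN-LSTM, and CNN-LSTM with an attention mechanism.

The dataset and code are included.

Dataset: an English-language dataset.

The CASIA speech emotion dataset is provided as files of pre-extracted features.

You can also adapt the model input to your own dataset.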

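To build the speech emotion recognition system, you can use a CNN, an LSTM, a CNN-LSTM, or a model with an attention mechanism. The code and explanations below cover data loading, model construction, training, and evaluation.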

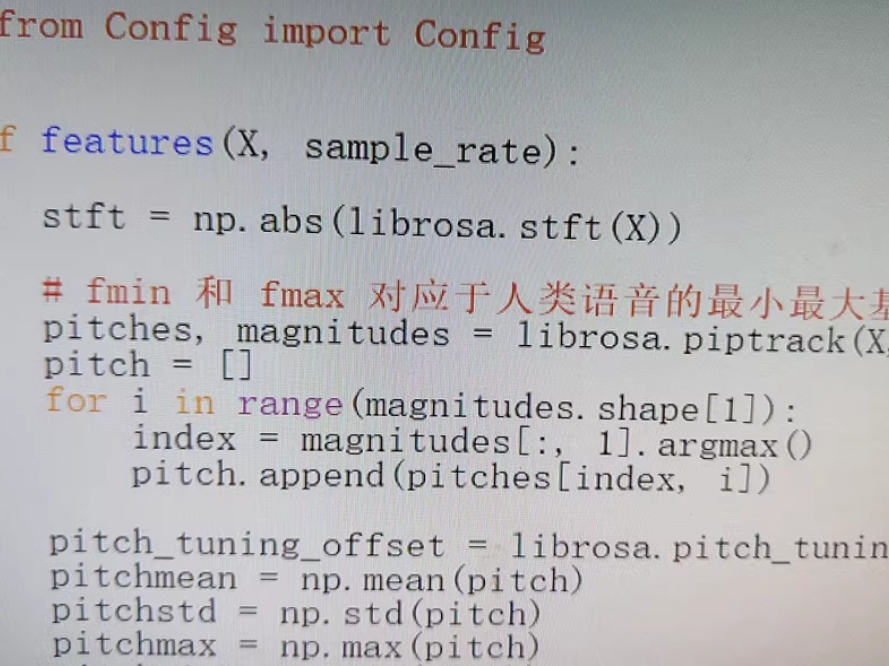

1. Data Preparation

First, load the CASIA speech emotion dataset and preprocess it.

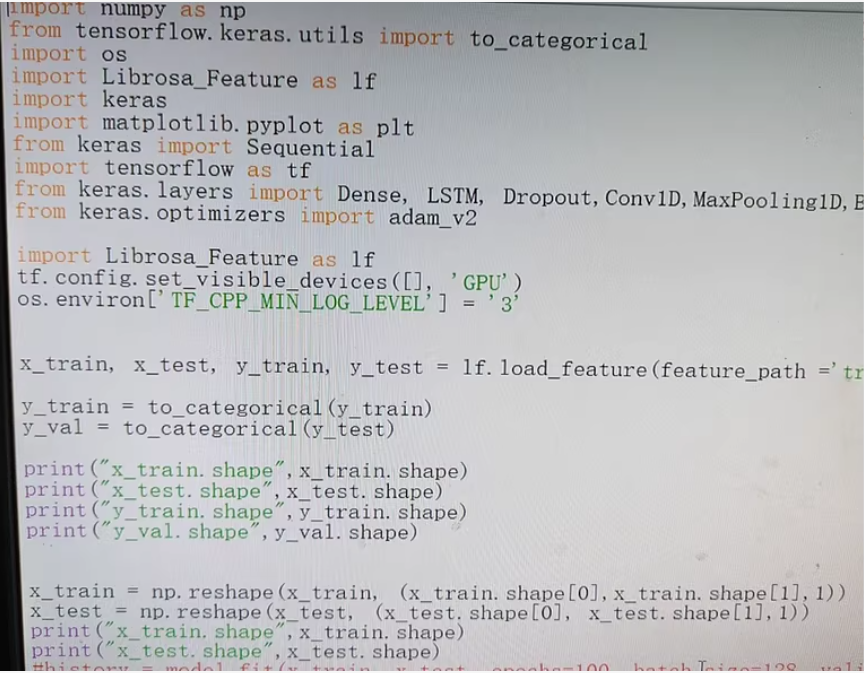

import os

import numpy as np
import matplotlib.pyplot as plt
from keras.models import Sequential
from keras.layers import Dense, LSTM, Dropout, Conv1D, MaxPooling1D, Flatten, TimeDistributed, Bidirectional
from keras.optimizers import Adam
from keras.utils import to_categorical

from Librosa_Feature import load_feature  # assumed to be your own feature-loading module

# Load the features and labels
x_train, x_test, y_train, y_test = load_feature(feature_path='path/to/feature')

# Convert the integer labels to one-hot vectors; both splits share the same width
y_train = to_categorical(y_train)
y_val = to_categorical(y_test, num_classes=y_train.shape[1])

print("x_train.shape", x_train.shape)
print("x_test.shape", x_test.shape)
print("y_train.shape", y_train.shape)
print("y_val.shape", y_val.shape)

# Add a trailing channel axis so the models receive (samples, features, 1)
x_train = np.reshape(x_train, (x_train.shape[0], x_train.shape[1], 1))
x_test = np.reshape(x_test, (x_test.shape[0], x_test.shape[1], 1))

print("x_train.shape", x_train.shape)
print("x_test.shape", x_test.shape)
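`load_feature` comes from the custom `Librosa_Feature` module, which is not shown here. As a rough idea of what such a loader could look like, the sketch below assumes the features were saved to a `.npz` file holding two arrays named `features` and `labels`. The function name `load_feature_npz`, the file layout, and the split parameters are all hypothetical and are not taken from the original module.

```python
import numpy as np


def load_feature_npz(feature_path, test_size=0.2, seed=0):
    """Hypothetical stand-in for load_feature.

    Assumes `feature_path` is a .npz file with
      features: (n_samples, n_features) array
      labels:   (n_samples,) array of integer class ids
    Returns x_train, x_test, y_train, y_test.
    """
    with np.load(feature_path) as data:
        features = data["features"].astype("float32")
        labels = data["labels"].astype("int64")

    # Shuffle the sample indices once, then cut off the test portion
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(features))
    n_test = int(round(len(features) * test_size))
    test_idx, train_idx = order[:n_test], order[n_test:]

    return (features[train_idx], features[test_idx],
            labels[train_idx], labels[test_idx])
```

A fixed `seed` keeps the split reproducible between runs, which makes the four models easier to compare on the same held-out samples.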

2. Model Construction

2.1 CNN Model

def build_cnn_model(input_shape, num_classes):
    model = Sequential()
    # Two Conv1D / MaxPooling1D / Dropout stages extract local patterns
    model.add(Conv1D(64, kernel_size=3, activation='relu', input_shape=input_shape))
    model.add(MaxPooling1D(pool_size=2))
    model.add(Dropout(0.5))
    model.add(Conv1D(128, kernel_size=3, activation='relu'))
    model.add(MaxPooling1D(pool_size=2))
    model.add(Dropout(0.5))
    # Flatten the feature maps and classify with a dense head
    model.add(Flatten())
    model.add(Dense(128, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(num_classes, activation='softmax'))
    model.compile(loss='categorical_crossentropy', optimizer=Adam(), metrics=['accuracy'])
    return model

input_shape = (x_train.shape[1], 1)
num_classes = y_train.shape[1]
cnn_model = build_cnn_model(input_shape, num_classes)
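Because both `Conv1D` layers use the default `'valid'` padding, every stage shortens the sequence, so the feature vector has to be long enough to survive two convolutions and two poolings. The sketch below traces the length through the CNN above in plain Python. The helper names are made up for illustration, and it assumes the layer settings shown (kernel size 3, pool size 2, default strides).

```python
def conv1d_valid_length(length, kernel_size, stride=1):
    """Output length of a Conv1D layer with padding='valid'."""
    return (length - kernel_size) // stride + 1


def max_pool_length(length, pool_size):
    """Output length of MaxPooling1D with its default stride (equal to pool_size)."""
    return (length - pool_size) // pool_size + 1


def cnn_flatten_size(n_features):
    """Number of values reaching Flatten() for an input of shape (n_features, 1)."""
    length = n_features
    for _ in range(2):  # two Conv1D + MaxPooling1D stages
        length = conv1d_valid_length(length, kernel_size=3)
        length = max_pool_length(length, pool_size=2)
        if length < 1:
            raise ValueError(f"{n_features} features is too short for this CNN")
    return length * 128  # the second Conv1D has 128 filters


print(cnn_flatten_size(40))  # 40 -> 38 -> 19 -> 17 -> 8 steps, times 128 filters = 1024
```

Under these settings the shortest usable input is 10 features; anything shorter makes Keras fail while building the model.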

2.2 LSTM Model

def build_lstm_model(input_shape, num_classes):
    model = Sequential()
    # The first LSTM returns the full sequence so the second LSTM can read it
    model.add(LSTM(64, return_sequences=True, input_shape=input_shape))
    model.add(Dropout(0.5))
    # The second LSTM returns only its final state
    model.add(LSTM(64))
    model.add(Dropout(0.5))
    model.add(Dense(128, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(num_classes, activation='softmax'))
    model.compile(loss='categorical_crossentropy', optimizer=Adam(), metrics=['accuracy'])
    return model

input_shape = (x_train.shape[1], 1)
lstm_model = build_lstm_model(input_shape, num_classes)
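A quick way to sanity-check what `lstm_model.summary()` reports is to count the LSTM weights by hand. Each LSTM layer has four gates, and each gate owns an input weight matrix, a recurrent weight matrix, and a bias vector. The helper below is only an illustrative sketch (the function name is made up), assuming the standard Keras LSTM with bias enabled.

```python
def lstm_param_count(input_dim, units):
    """Trainable parameters of one standard LSTM layer with bias.

    Four gates, each with input weights (input_dim x units),
    recurrent weights (units x units), and a bias (units).
    """
    return 4 * (units * (input_dim + units) + units)


# First LSTM(64): each time step carries 1 feature
first = lstm_param_count(input_dim=1, units=64)
# Second LSTM(64): each time step carries the 64 outputs of the first layer
second = lstm_param_count(input_dim=64, units=64)

print(first, second)  # 16896 33024
```

The count does not depend on the number of time steps, because the same weights are reused at every step.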

2.3 CNN-LSTM Model

def build_cnn_lstm_model(input_shape, num_classes):
    model = Sequential()
    # Conv1D slides along the feature axis of the (features, 1) input,
    # so no TimeDistributed wrapper is needed
    model.add(Conv1D(64, kernel_size=3, activation='relu', input_shape=input_shape))
    model.add(MaxPooling1D(pool_size=2))
    model.add(Dropout(0.5))
    model.add(Conv1D(128, kernel_size=3, activation='relu'))
    model.add(MaxPooling1D(pool_size=2))
    model.add(Dropout(0.5))
    # The pooled feature maps form the sequence that the LSTM layers read
    model.add(LSTM(64, return_sequences=True))
    model.add(Dropout(0.5))
    model.add(LSTM(64))
    model.add(Dropout(0.5))
    model.add(Dense(128, activation='relu'))
    model.add(Dropout(0.5))
    model.add(Dense(num_classes, activation='softmax'))
    model.compile(loss='categorical_crossentropy', optimizer=Adam(), metrics=['accuracy'])
    return model

# Same input as the CNN and LSTM models: (features, 1)
input_shape = (x_train.shape[1], 1)
cnn_lstm_model = build_cnn_lstm_model(input_shape, num_classes)

2.4 CNN-LSTM Model with Attention

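from keras.layers import Attention, GlobalAveragePooling1D, Input
from keras.models import Model

def build_cnn_lstm_attention_model(input_shape, num_classes):
    # Attention takes a [query, value] list, so this model uses the functional API
    inputs = Input(shape=input_shape)
    x = Conv1D(64, kernel_size=3, activation='relu')(inputs)
    x = MaxPooling1D(pool_size=2)(x)
    x = Dropout(0.5)(x)
    x = Conv1D(128, kernel_size=3, activation='relu')(x)
    x = MaxPooling1D(pool_size=2)(x)
    x = Dropout(0.5)(x)
    x = Bidirectional(LSTM(64, return_sequences=True))(x)
    x = Attention()([x, x])  # self-attention over the BiLSTM outputs
    # Assumption: the source listing ends at the attention layer; the pooling and
    # dense head below mirror the other models rather than the author's code
    x = GlobalAveragePooling1D()(x)
    x = Dense(128, activation='relu')(x)
    x = Dropout(0.5)(x)
    outputs = Dense(num_classes, activation='softmax')(x)
    model = Model(inputs, outputs)
    model.compile(loss='categorical_crossentropy', optimizer=Adam(), metrics=['accuracy'])
    return model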
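With its default settings, the Keras `Attention` layer computes dot-product attention between a query sequence and a value sequence. To make that step less of a black box, here is a minimal NumPy sketch of the same idea for a single sample, using one sequence as both query and value (self-attention). The function names are illustrative only, and the sketch ignores masking, dropout, and the optional learned scale.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def dot_product_self_attention(seq):
    """Dot-product self-attention for one sample.

    seq: array of shape (timesteps, features), e.g. BiLSTM outputs.
    Returns (context, weights) with shapes
    (timesteps, features) and (timesteps, timesteps).
    """
    scores = seq @ seq.T                  # similarity of every step with every other step
    weights = softmax(scores, axis=-1)    # each row sums to 1
    context = weights @ seq               # weighted mix of all steps, per query step
    return context, weights


# Toy input: 5 time steps with 8 features each
rng = np.random.default_rng(0)
toy_sequence = rng.normal(size=(5, 8))
context, weights = dot_product_self_attention(toy_sequence)
print(context.shape, weights.shape)  # (5, 8) (5, 5)
```

The output keeps the time axis, so a pooling or flattening step is still needed before a dense classifier can consume it.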
