Artificial Intelligence – Python 3 web scraping: finding tags and extracting information with BeautifulSoup

Preface

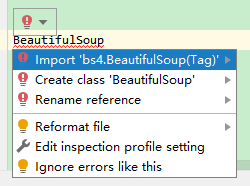

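Because of changes between the old and new versions, BeautifulSoup now lives in the bs4 package and has to be imported from there. Note that BeautifulSoup is a class inside bs4, not a submodule, so it is imported with a from-import. By convention: from bs4 import BeautifulSoup as bs

Tip: when you run into something like this, type the function name in the PyCharm editor and Ctrl+click it; PyCharm then shows the hint info for that name, including where it is defined.

Using BeautifulSoup

Take the following code as an example: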

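import re  # used by the re.compile(...) filters below

from urllib import request

import bs4  # used by the bs4.element.Tag check below

from bs4 import BeautifulSoup as bs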

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/77.0.3865.90 Safari/537.36'}

url = "https://..."  # set this to your own URL

resp = request.Request(url, headers=headers)

html_data = request.urlopen(resp).read().decode('utf-8', 'ignore')

soup = bs(html_data, 'html.parser')
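The results printed in the rest of this post match a small sample page with three "sister" links rather than an arbitrary URL, so an offline sketch may be handy for following along. The html_doc string here is an assumption, pieced together from the output shown in this post; it is not fetched by the code above.

```python
from bs4 import BeautifulSoup

# Assumed sample document, reconstructed from the output printed in this post.
html_doc = """<html><body>
<p class="title"><b>The Dormouse's story</b></p>
<p class="story">Once upon a time there were three little sisters; and their names were
<a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>,
<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a> and
<a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>;
and they lived at the bottom of a well.</p>
</body></html>"""

# Same parser as above, but fed from a string instead of a network response.
soup = BeautifulSoup(html_doc, "html.parser")

print(soup.find("b").string)
print(len(soup.find_all("a")))
```

Parsing from a string keeps the examples reproducible without a network connection; swapping html_doc for the downloaded html_data should behave the same way.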

With the soup object in hand, we can get to work:

1. Finding a tags

(1) Find all a tags

for x in soup.find_all('a'):

    print(x)

Output:

<a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>

<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>

<a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>

(2) Find all a tags whose href attribute value contains the keyword "lacie"

for x in soup.find_all('a', href=re.compile('lacie')):

    print(x)

Output:

<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>
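The href filter does not have to be a regular expression. As a rough sketch, a plain function can do the same job. The sample HTML and the helper name ends_with_lacie are made up for illustration.

```python
import re
from bs4 import BeautifulSoup

# Hypothetical sample markup; the last tag has no href on purpose.
html_doc = """
<a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>
<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>
<a id="top">no href here</a>
"""
soup = BeautifulSoup(html_doc, "html.parser")

# A compiled pattern is applied with search(), so it matches anywhere in href.
by_regex = soup.find_all("a", href=re.compile("lacie"))

# A function receives the attribute value (None when the attribute is missing).
def ends_with_lacie(value):
    return value is not None and value.endswith("/lacie")

by_func = soup.find_all("a", href=ends_with_lacie)

print([a["id"] for a in by_regex])
print([a["id"] for a in by_func])
```

The function form is worth considering when the condition is easier to state in Python than as a pattern, for example a check on the URL's ending.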

(3) Find all a tags whose string content contains the keyword "Elsie"

for x in soup.find_all('a', string=re.compile('Elsie')):

    print(x)

Output:

<a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>
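The string argument also accepts a plain string for an exact match, and the regular expression can be made case-insensitive. A small sketch, with sample markup assumed for illustration:

```python
import re
from bs4 import BeautifulSoup

# Hypothetical sample markup.
html_doc = """
<a href="http://example.com/elsie" id="link1">Elsie</a>
<a href="http://example.com/lacie" id="link2">Lacie</a>
"""
soup = BeautifulSoup(html_doc, "html.parser")

# Exact match: the tag's string must equal "Elsie".
exact = soup.find_all("a", string="Elsie")

# Pattern match, ignoring case, anywhere in the tag's string.
loose = soup.find_all("a", string=re.compile("elsie", re.IGNORECASE))

print([a["id"] for a in exact])
print([a["id"] for a in loose])
```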

(4) Find all child tags of the body tag and print them in a loop

for x in soup.find('body').children:

    if isinstance(x, bs4.element.Tag):  # isinstance skips the whitespace-only text nodes between tags

        print(x)

Output:

<p class="title"><b>The Dormouse's story</b></p>

<p class="story">Once upon a time there were three little sisters; and their names were

<a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>,

<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a> and

<a class="sister" href="http://example.com/tillie" id="link3">Tillie</a>;

and they lived at the bottom of a well.</p>
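The isinstance check is needed because .children also yields the newline text between tags. As an alternative sketch, find_all(True, recursive=False) returns only the direct child tags. The sample markup below is assumed for illustration.

```python
from bs4 import BeautifulSoup
from bs4.element import Tag

# Hypothetical sample markup with newlines between the child tags.
html_doc = """<body>
<p class="title"><b>The Dormouse's story</b></p>
<p class="story">Once upon a time...</p>
</body>"""
soup = BeautifulSoup(html_doc, "html.parser")
body = soup.find("body")

# Approach from the post: iterate over .children and keep only Tag objects.
via_children = [x for x in body.children if isinstance(x, Tag)]

# Alternative: True matches every tag, recursive=False stops at direct children.
via_find_all = body.find_all(True, recursive=False)

print([t.name for t in via_children])
print([t.name for t in via_find_all])
```

Both lists hold the two p tags; the nested b tag is left out because it is a grandchild of body.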

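2. Information extraction (link extraction)

(1) Parse the tag structure, find all a tags, extract the value of each tag's href attribute (that is, the link), and store it in an initially empty list

linklist = []

for x in soup.find_all('a'):

    link = x.get('href')

    if link:

        linklist.append(link)

for x in linklist:  # check: loop over linklist and print each link

    print(x)

Output: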

http://example.com/elsie

http://example.com/lacie

http://example.com/tillie

Summary: link extraction <-> attribute value extraction <-> x.get('href')
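The "if link:" guard matters because x.get('href') returns None for a tag that has no href, whereas x['href'] would raise KeyError. A minimal sketch of the difference, using made-up sample markup:

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup: the second a tag has no href attribute.
html_doc = '<a href="http://example.com/elsie">Elsie</a><a id="top">Top</a>'
soup = BeautifulSoup(html_doc, "html.parser")
with_href, without_href = soup.find_all("a")

print(with_href.get("href"))
print(without_href.get("href"))  # None, no exception

try:
    without_href["href"]  # dictionary-style access fails on a missing attribute
except KeyError:
    print("no href attribute")

# href=True keeps only tags that actually carry the attribute.
links = [a["href"] for a in soup.find_all("a", href=True)]
print(links)
```

Filtering with href=True is one way to drop the None check from the loop body; whether it reads better is a matter of taste.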

(2) Parse the tag structure, find all a tags whose href contains the keyword "elsie", and store the links in an initially empty list

linklst = []

for x in soup.find_all('a', href=re.compile('elsie')):

    link = x.get('href')

    if link:

        linklst.append(link)

for x in linklst:  # check: loop over linklst and print each link

    print(x)

Output:

http://example.com/elsie

Summary: the a tag search now also applies a regular expression to the href attribute value <-> href=re.compile('elsie')
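For a plain substring test like this one, a CSS attribute selector is another option. This is a different technique from the regex filter used in this post, sketched here with assumed sample markup; select() relies on the soupsieve package, which is normally installed together with bs4.

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup.
html_doc = """
<a class="sister" href="http://example.com/elsie" id="link1">Elsie</a>
<a class="sister" href="http://example.com/lacie" id="link2">Lacie</a>
"""
soup = BeautifulSoup(html_doc, "html.parser")

# [href*="elsie"] selects a tags whose href contains the substring "elsie".
links = [a["href"] for a in soup.select('a[href*="elsie"]')]
print(links)
```

The selector only does substring matching; anything that needs a real pattern still calls for re.compile.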

(3) Parse the tag structure, find all a tags, and print the string content of each tag

for x in soup.find_all('a'):

    string = x.get_text()

    print(string)

Output:

Elsie

Lacie

Tillie
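get_text() and the .string attribute give the same result for simple tags like these, but they differ once a tag contains nested markup. A short sketch with made-up sample markup:

```python
from bs4 import BeautifulSoup

# Hypothetical sample markup: the first link nests a b tag, the second has padding.
html_doc = (
    '<a href="http://example.com/elsie"><b>El</b>sie</a>'
    '<a href="http://example.com/lacie"> Lacie </a>'
)
soup = BeautifulSoup(html_doc, "html.parser")
first, second = soup.find_all("a")

print(first.string)                 # None: the tag has more than one child
print(first.get_text())             # text of all descendants joined together
print(second.get_text(strip=True))  # surrounding whitespace removed
```

For scraped pages, where link text is often wrapped in extra tags or padded with whitespace, get_text(strip=True) tends to be the safer choice.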

Appendix:

I also came across a well-written summary of tag extraction for scrapers that is worth reading:

https://www.cnblogs.com/simple-li/p/11253312.html
