二元交叉熵损失函数主要做的是关于二分类问题

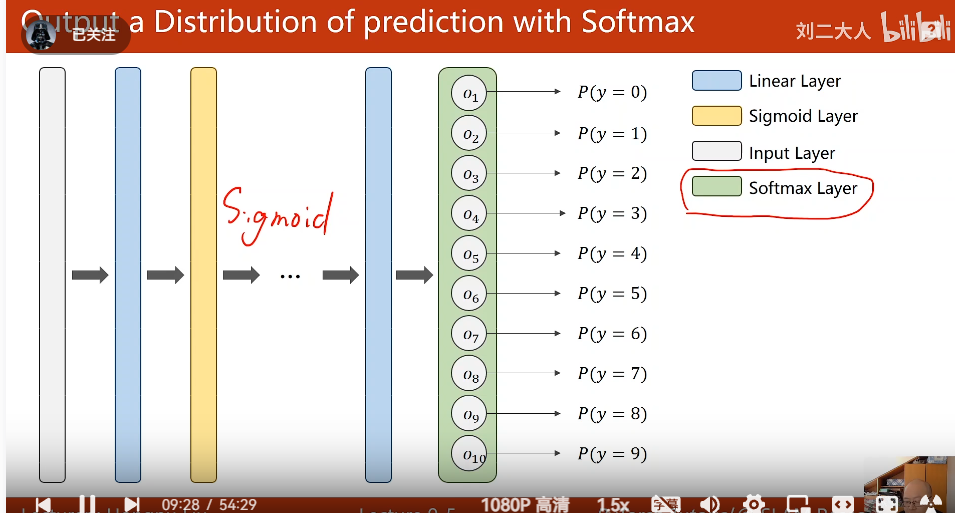

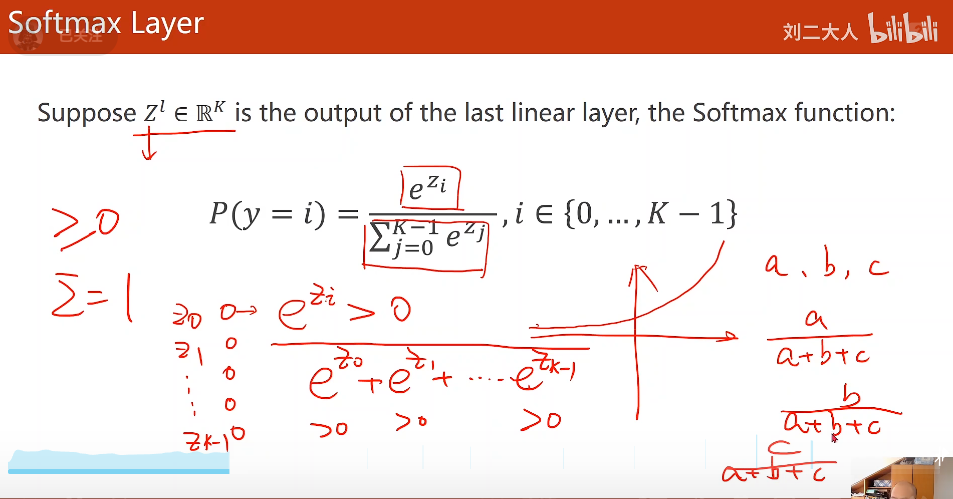

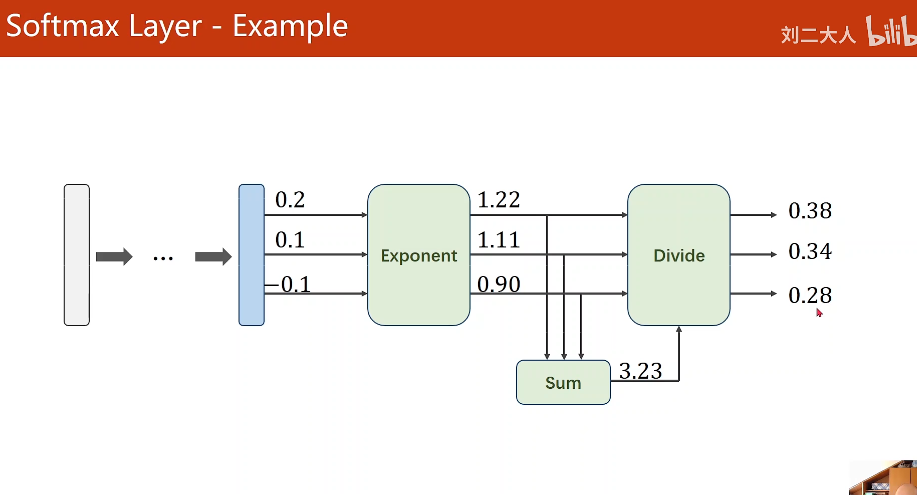

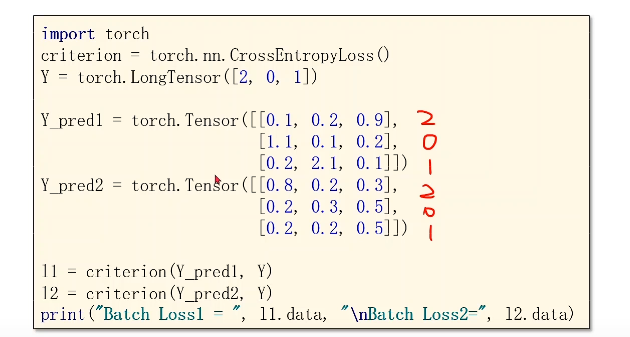

softmax主要来做多分类问题

前面的激活函数采用Sigmoid,最后一层采用softmax激活函数。这是因为softmax激活函数的可完成多分类,所有类别概率之和为1

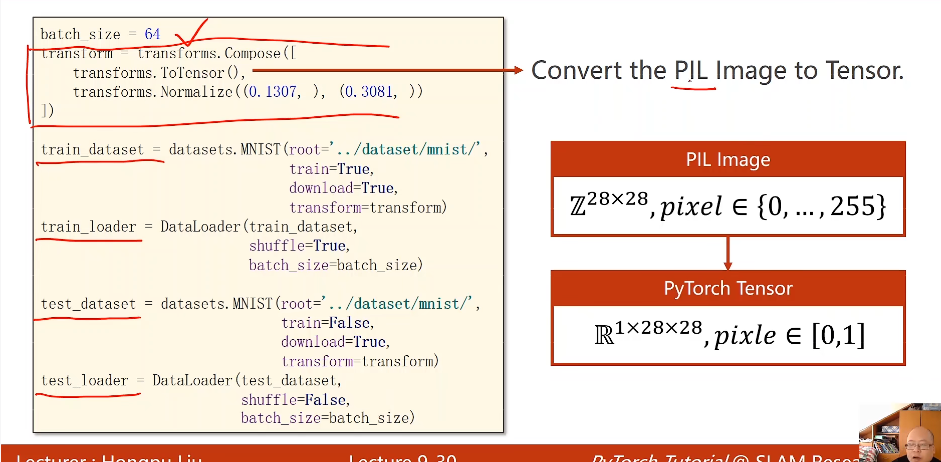

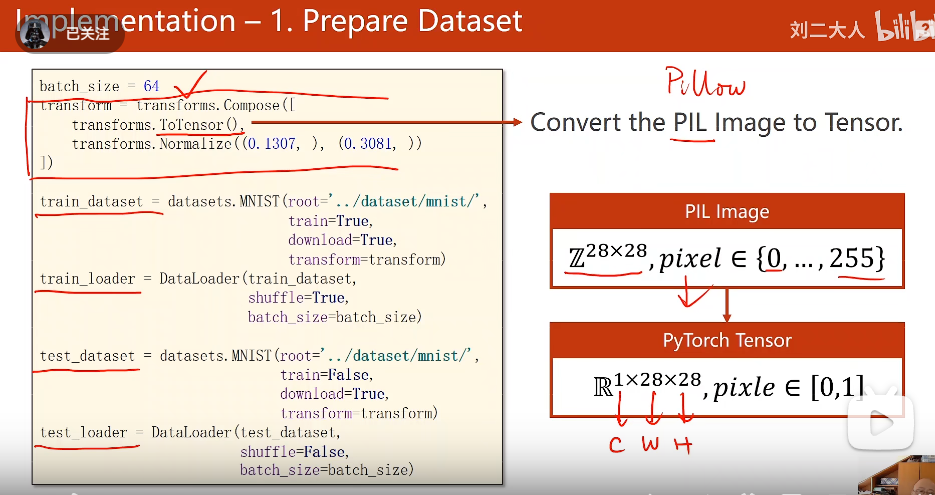

其中,ToTensor()是将其转化成张量形式,以便能够进行维度提升

Mormalize主要是进行归一化的操作。让计算更加方便,转在0--1之间是训练效果最好的

先转成一维度的向量形式。,如果有三个标签,那么列向量就是三个。

##

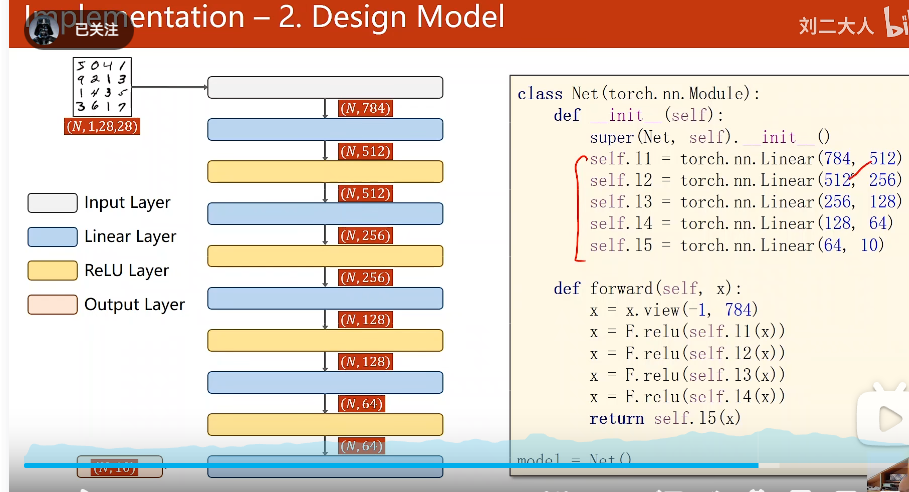

训练过程是一个数字转成一个784向量维度

batch-size目的是为了获取一共取了几个784维度的向量

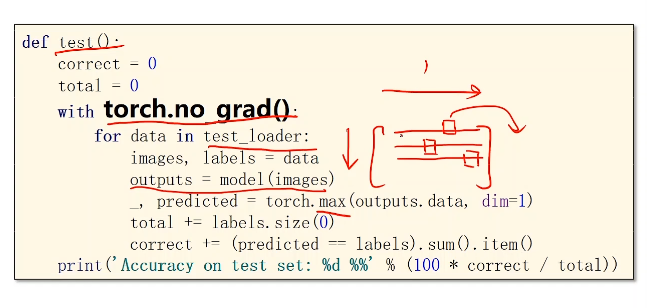

这里的torch.max(outputs.data, dim=1)中,dim=1指的是,在一行中选择最大值的概率

labels.size(0)的个数指的是标签个数

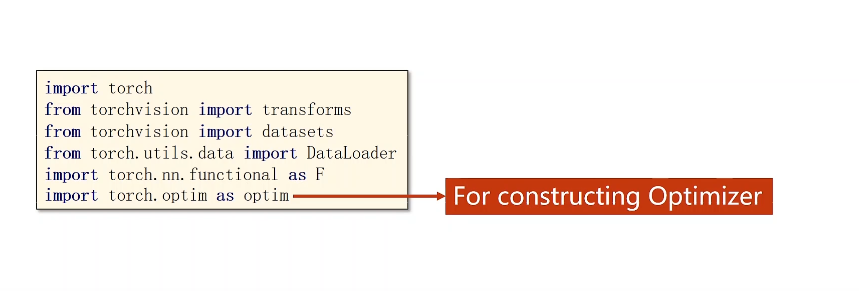

import torch

from torchvision import transforms

from torchvision import datasets

from torch.utils.data import DataLoader

import torch.nn.functional as F

import torch.optim as optim

##准备数据集

batch_size = 64

transform = transforms.Compose([

transforms.ToTensor(),

transforms.Normalize((0.1307, ), (0.3081, ))

])

train_dataset = datasets.MNIST(root='./dataset/mnist/',

train = True,

download = True,

transform = transform)

train_loader = DataLoader(train_dataset,

shuffle=True,

batch_size=batch_size,

num_workers=4)

test_dataset = datasets.MNIST(root='./dataset/mnist/',

train = False,

download = True,

transform=transform)

test_loader = DataLoader(test_dataset,

shuffle=False,

batch_size = batch_size,

num_workers=4)

##构建神经网络模型

class Net(torch.nn.Module):

def __init__(self):

super(Net, self).__init__()

self.l1 = torch.nn.Linear(784, 512)

self.l2 = torch.nn.Linear(512, 256)

self.l3 = torch.nn.Linear(256, 128)

self.l4 = torch.nn.Linear(128, 64)

self.l5 = torch.nn.Linear(64,10)

def forward(self, x):

x = x.view(-1, 784) ##将获取的batch-size,即一次运行几张,即,列是784,行是几张手写数字图像

x = F.relu(self.l1(x))

x = F.relu(self.l2(x))

x = F.relu(self.l3(x))

x = F.relu(self.l4(x))

return self.l5(x)

model = Net()

##设置损失函数和优化器

criterion = torch.nn.CrossEntropyLoss()

optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

##训练模型

def train(epoch):

running_loss = 0.0

for batch_idex,data in enumerate(train_loader, 0):

inputs, target = data

optimizer.zero_grad() ##梯度归零

outputs = model(inputs)

loss = criterion(outputs, target) ##计算损失

loss.backward() ##根据损失反向传播获得梯度

optimizer.step()

running_loss += loss.item()

if batch_idex % 300 ==299:

print("[%d, %5d] loss: %.3f" % (epoch + 1,batch_idex + 1,running_loss/300))

running_loss = 0.0

def te():

correct = 0

total = 0

with torch.no_grad():

for data in test_loader:

images, labels = data

outputs = model(images)

_, predicted = torch.max(outputs.data, dim=1)

total += labels.size(0)

correct += (predicted == labels).sum().item()

print("Accurary on test set: %d %%" % (100*correct / total))

if __name__ =="__main__":

for epoch in range(10):

train(epoch)

te()

345

345

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?