第4天没有链接,只有一张图片:

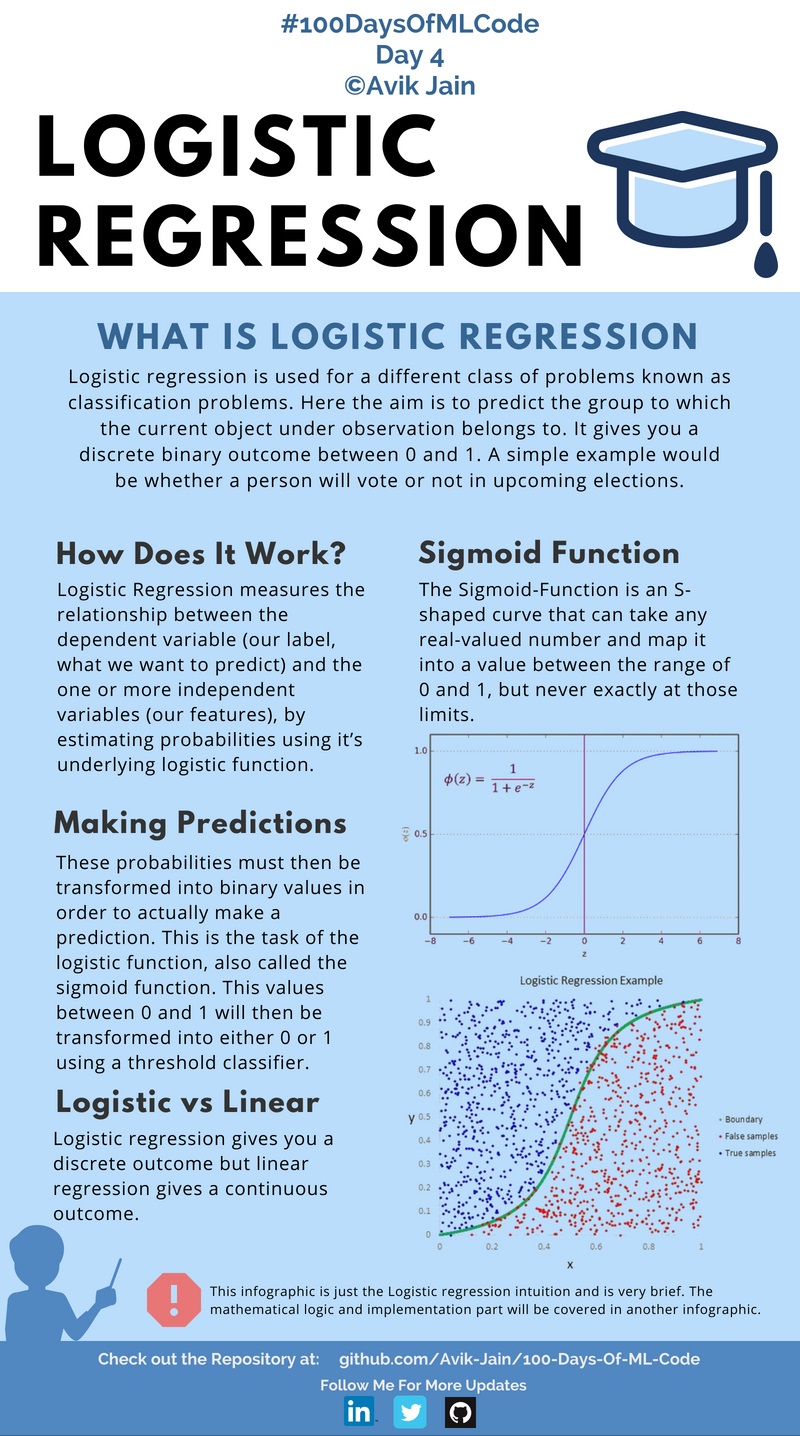

逻辑回归

什么是逻辑回归:

逻辑回归(Logistic Regression)是用于处理因变量为分类变量的回归问题,常见的是二分类或二项分布问题,也可以处理多分类问题,它实际上是属于一种分类方法。

不同于线性回归模型与多元线性回归模型中处理的因变量都是数值型区间变量,对于逻辑回归,举个例子:分析年龄、性别、体制指数、平均血压、疾病指数等指标得以判断一个是是否患糖尿病,Y=0表示没有患病,Y=1表示患病。

线性回归模型通常是处理因变量是连续变量的问题,如果因变量是定性变量,线性回归模型就不再适用了,需采用逻辑回归模型解决。

推荐博客1:https://blog.csdn.net/t46414704152abc/article/details/79574003

推荐博客2:https://www.cnblogs.com/sxron/p/5489214.html

推荐博客3:https://blog.csdn.net/u012343179/article/details/77886224

Day5的代码:

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import confusion_matrix

'''数据预处理部分'''

#importing the dataset

dataset=pd.read_csv('Social_Network_Ads.csv')

X=dataset.iloc[:,[2,3]].values

y=dataset.iloc[:,4].values

#Splitting the dataset into the Training set and Test set

X_train,X_test,y_train,y_test=train_test_split(X,y,test_size=0.25,random_state=0)

#feature Scaling

sc=StandardScaler()

X_train=sc.fit_transform(X_train)

X_test=sc.transform(X_test)

'''逻辑回归模型'''

#Fitting Logistic Regression to Training set

classifier=LogisticRegression()

classifier.fit(X_train,y_train)

'''预测'''

#predicting the test set results

y_pred=classifier.predict(X_test)

'''评估预测结果'''

#making the confusion matrix

cm=confusion_matrix(y_test,y_pred)

代码2

import numpy as np

import pandas as pd

from sklearn import preprocessing

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler,PolynomialFeatures

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import accuracy_score,confusion_matrix

from sklearn.pipeline import Pipeline

import matplotlib as mpl

import matplotlib.pyplot as plt

import matplotlib.patches as mpatches

#1 导入数据 只提取age和salary特征

dataset = pd.read_csv('Social_Network_Ads.csv')

# X = dataset.iloc[:,1:4].values

# Y = dataset.iloc[:,-1].values

# le = preprocessing.LabelEncoder()

# X[:,0] = le.fit_transform(X[:,0])

X = dataset.iloc[:,2:4].values

Y = dataset.iloc[:,-1].values

print(X)

X_train,X_test,Y_train,Y_test = train_test_split(X,Y,test_size=0.25,random_state=0)

# 特征降维

# sc = StandardScaler()

# X_train = sc.fit_transform(X_train)

# X_test = sc.fit_transform(X_test)

#2 Pipeline

lr = Pipeline([('sc', StandardScaler()),

('poly', PolynomialFeatures(degree=2)),

('clf', LogisticRegression()) ])

lr.fit(X_train,Y_train)

y_test_hat = lr.predict(X_test)

#3 精度与confusion_matrix

acc = accuracy_score(Y_test,y_test_hat)

print(acc)

cm = confusion_matrix(Y_test,y_test_hat)

print(cm)

#4 可视化

mpl.rcParams['font.sans-serif'] = ['simHei']#字体显示设置

mpl.rcParams['axes.unicode_minus'] = False

cm_light = mpl.colors.ListedColormap(['#77E0A0', '#FF8080'])#设置不同标签的颜色深浅

cm_dark = mpl.colors.ListedColormap(['g', 'r'])

N, M = 500, 500 # 横纵各采样多少个值

x1_min, x1_max = X[:, 0].min(), X[:, 0].max() # 第0列的范围

x2_min, x2_max = X[:, 1].min(), X[:, 1].max() # 第1列的范围

t1 = np.linspace(x1_min, x1_max, N)

t2 = np.linspace(x2_min, x2_max, M)

x1, x2 = np.meshgrid(t1, t2) # 生成网格采样点

x_test = np.stack((x1.flat, x2.flat), axis=1) # 测试点

y_hat = lr.predict(x_test) # 预测值

y_hat = y_hat.reshape(x1.shape) # 使之与输入的形状相同

plt.figure(facecolor='w')

plt.pcolormesh(x1, x2, y_hat, cmap=cm_light) # 预测值的显示

plt.scatter(X_train[:, 0], X_train[:, 1], s=50,c=Y_train, edgecolors='k', cmap=cm_dark)

plt.scatter(X_test[:, 0], X_test[:, 1], s=50,c=Y_test, edgecolors='k', cmap=cm_dark)

plt.xlabel('age')

plt.ylabel('salary')

plt.title('SUV购买')

patchs = [mpatches.Patch(color='#77E0A0', label='0'),

mpatches.Patch(color='#FF8080', label='1')]

plt.legend(handles=patchs, fancybox=True, framealpha=0.8,loc ='upper right')

plt.show()

可视化结果:

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?