Custom Functions

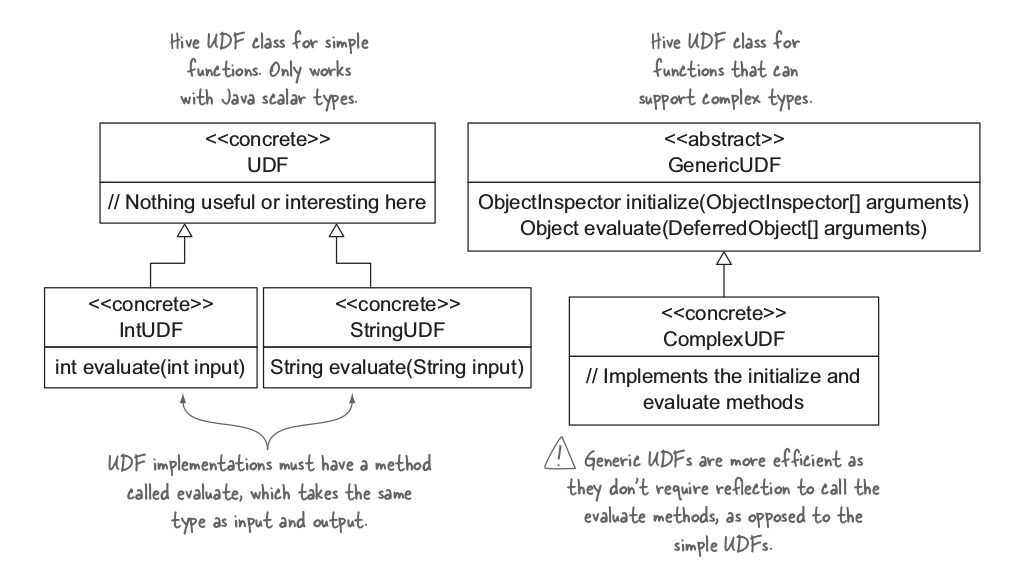

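When writing a Hive UDF you have two options: extend the UDF class, or extend the abstract class GenericUDF. They differ in two ways. GenericUDF can handle complex-type arguments. It is also more efficient, because for a UDF subclass Hive has to find and invoke the evaluate() method through reflection.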
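The sketch below makes the reflection point concrete. It is a simplified, JDK-only illustration, not Hive's actual DefaultUDFMethodResolver, and the class and method names are hypothetical. It shows how a framework can locate an overloaded evaluate method by argument types and call it through Method.invoke. Next to that is a GenericUDF-style contract, where the framework calls one fixed method directly.

```java
import java.lang.reflect.InvocationTargetException;
import java.lang.reflect.Method;

// Simplified illustration, NOT Hive's DefaultUDFMethodResolver: calling an
// old-style UDF whose only contract is "has a public method named evaluate",
// versus a GenericUDF-style fixed method.
public class ReflectiveEvaluateDemo {

    // Old-style UDF shape: overloaded evaluate methods, no shared interface.
    public static class Concat {
        public String evaluate(String a) { return a; }
        public String evaluate(String a, String b) { return a + b; }
    }

    // Step 1 (done once, up front): find the evaluate overload whose
    // parameter types exactly match the given argument types.
    public static Method resolve(Class<?> udfClass, Class<?>... argTypes) {
        try {
            return udfClass.getMethod("evaluate", argTypes);
        } catch (NoSuchMethodException e) {
            throw new IllegalArgumentException("no evaluate method for these argument types", e);
        }
    }

    // Step 2 (done for every row): each call goes through Method.invoke,
    // which packs the arguments into an Object[] and checks them at run time.
    public static Object invoke(Method evaluate, Object udf, Object... args) {
        try {
            return evaluate.invoke(udf, args);
        } catch (IllegalAccessException | InvocationTargetException e) {
            throw new IllegalStateException(e);
        }
    }

    // GenericUDF-style contract: one fixed method the framework calls directly.
    public interface GenericStyle {
        Object evaluate(Object[] args);
    }

    public static final GenericStyle CONCAT_GENERIC = a -> (String) a[0] + (String) a[1];

    public static void main(String[] args) {
        Method m = resolve(Concat.class, String.class, String.class);
        Concat udf = new Concat();
        System.out.println(invoke(m, udf, "hive", "-udf"));                          // hive-udf
        System.out.println(CONCAT_GENERIC.evaluate(new Object[] {"hive", "-udf"})); // hive-udf
    }
}
```

Hive's real resolver is more lenient than getMethod: it can match through implicit type conversions. The point here is only that the old-style path ends in a reflective call for every row.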

UDF

A UDF must satisfy two conditions:

1. It must extend the class org.apache.hadoop.hive.ql.exec.UDF.

2. It must implement at least one evaluate() method.

The UDF base class itself looks like this:

```java
package org.apache.hadoop.hive.ql.exec;

import org.apache.hadoop.hive.ql.udf.UDFType;

/**
 * A User-defined function (UDF) for use with Hive.
 *
 * New UDF classes need to inherit from this UDF class.
 *
 * Required for all UDF classes: implement one or more methods named
 * "evaluate", which will be called by Hive. For example:
 *   public int evaluate();
 *   public int evaluate(int a);
 *   public double evaluate(int a, double b);
 *   public String evaluate(String a, int b, String c);
 *
 * "evaluate" should never be a void method. However, it can return null if
 * needed.
 */
@UDFType(deterministic = true)
public class UDF {

  /**
   * The resolver to use for method resolution.
   */
  private UDFMethodResolver rslv;

  /**
   * The constructor.
   */
  public UDF() {
    rslv = new DefaultUDFMethodResolver(this.getClass());
  }

  /**
   * The constructor with user-provided UDFMethodResolver.
   */
  protected UDF(UDFMethodResolver rslv) {
    this.rslv = rslv;
  }

  /**
   * Sets the resolver.
   *
   * @param rslv
   *          The method resolver to use for method resolution.
   */
  public void setResolver(UDFMethodResolver rslv) {
    this.rslv = rslv;
  }

  /**
   * Get the method resolver.
   */
  public UDFMethodResolver getResolver() {
    return rslv;
  }

  /**
   * These can be overridden to provide the same functionality as the
   * correspondingly named methods in GenericUDF.
   */
  public String[] getRequiredJars() {
    return null;
  }

  public String[] getRequiredFiles() {
    return null;
  }
}
```

The UDF class is meant for simple scalar types such as int, double and String, or their Hadoop Writable counterparts such as Text and IntWritable. For complex-type arguments, use GenericUDF.
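The sketch below is a minimal class that follows both rules and the javadoc's conventions: overloaded evaluate methods, never void, and null allowed as a result. StrLen and its behavior are hypothetical. A real Hive UDF would also declare extends org.apache.hadoop.hive.ql.exec.UDF. That clause is left out here only so the sketch compiles without the hive-exec JAR on the classpath.

```java
// Hypothetical example. In Hive this would be declared as:
//   public class StrLen extends org.apache.hadoop.hive.ql.exec.UDF
public class StrLen {

    // One-argument overload: length of the string.
    public Integer evaluate(String s) {
        if (s == null) {
            return null; // returning null is allowed; a void evaluate is not
        }
        return s.length();
    }

    // Two-argument overload: when trim is true, ignore leading and
    // trailing whitespace before measuring.
    public Integer evaluate(String s, Boolean trim) {
        if (s == null || trim == null) {
            return null;
        }
        return trim ? s.trim().length() : s.length();
    }
}
```

Boxed Integer and Boolean are used so that a SQL NULL can arrive as null and be passed back as null. Once such a class extends UDF and is packaged in a JAR, it is registered the same way as the GenericUDF example, with ADD JAR and CREATE TEMPORARY FUNCTION.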

GenericUDF

The following GenericUDF uses GeoIP to look up the country for an IP address.
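The per-row logic in GeoLocUDF.lookup() follows a common pattern. It builds an expensive lookup structure lazily, once per UDF instance rather than once per row, and turns the library's "unknown" code "--" into SQL NULL. Here is a JDK-only sketch of that pattern. A hypothetical in-memory table with made-up entries (documentation IP ranges) stands in for MaxMind's LookupService and its data file.

```java
import java.util.HashMap;
import java.util.Map;

// Sketch of the lazy-init + sentinel pattern used by GeoLocUDF.lookup().
// The table and its entries are hypothetical stand-ins for a GeoIP database.
public class LazyCountryLookup {

    private Map<String, String> table; // null until the first lookup
    private int loads;                 // how many times load() has run

    // Hypothetical loader: the real UDF opens the GeoIP data file instead.
    private Map<String, String> load() {
        loads++;
        Map<String, String> m = new HashMap<>();
        m.put("192.0.2.10", "DE");  // made-up entry
        m.put("203.0.113.5", "--"); // made-up entry: country unknown
        return m;
    }

    public String lookup(String ip) {
        if (ip == null) {
            return null;            // NULL in, NULL out, as in evaluate()
        }
        if (table == null) {
            table = load();         // pay the loading cost only once
        }
        String code = table.getOrDefault(ip, "--");
        return "--".equals(code) ? null : code; // sentinel becomes SQL NULL
    }

    public int loadCount() {
        return loads;
    }
}
```

As in GeoLocUDF, an address missing from the table and an explicit "--" both come back as NULL.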

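```java
package com.hadoop2.hive;

import java.io.IOException;
import java.net.URL;

import com.maxmind.geoip.LookupService;
import org.apache.hadoop.hive.ql.exec.UDFArgumentException;
import org.apache.hadoop.hive.ql.exec.UDFArgumentLengthException;
import org.apache.hadoop.hive.ql.metadata.HiveException;
import org.apache.hadoop.hive.ql.udf.generic.GenericUDF;
import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspector;
import org.apache.hadoop.hive.serde2.objectinspector.ObjectInspectorConverters;
import org.apache.hadoop.hive.serde2.objectinspector.primitive.PrimitiveObjectInspectorFactory;

public class GeoLocUDF extends GenericUDF {

    // MaxMind (legacy GeoIP API) lookup service, created lazily on first use
    private LookupService service;

    // Converts each input argument to the target type (Java String)
    private ObjectInspectorConverters.Converter[] converters;

    /**
     * Initialization: validates the arguments and declares the return type.
     *
     * @param arguments object inspectors describing the argument types
     * @return object inspector for the return type (Java String)
     * @throws UDFArgumentException if the number of arguments is wrong
     */
    @Override
    public ObjectInspector initialize(ObjectInspector[] arguments) throws UDFArgumentException {
        if (arguments.length != 2) {
            throw new UDFArgumentLengthException(
                    "The function COUNTRY(ip, geolocfile) takes exactly 2 arguments.");
        }
        converters = new ObjectInspectorConverters.Converter[arguments.length];
        for (int i = 0; i < arguments.length; i++) {
            // Create a Converter that evaluate() uses to turn each argument,
            // whatever its original primitive type, into a Java String.
            converters[i] = ObjectInspectorConverters.getConverter(arguments[i],
                    PrimitiveObjectInspectorFactory.javaStringObjectInspector);
        }
        // The UDF (i.e. evaluate()) returns a Java String
        return PrimitiveObjectInspectorFactory.javaStringObjectInspector;
    }

    @Override
    public Object evaluate(DeferredObject[] arguments) throws HiveException {
        Object ipArg = arguments[0].get();
        Object fileArg = arguments[1].get();
        if (ipArg == null || fileArg == null) {
            return null; // NULL in, NULL out
        }
        String ip = (String) converters[0].convert(ipArg);
        String filename = (String) converters[1].convert(fileArg);
        return lookup(ip, filename);
    }

    // Text Hive uses to display this call, e.g. in EXPLAIN output and error messages
    @Override
    public String getDisplayString(String[] children) {
        return "country(" + children[0] + ", " + children[1] + ")";
    }

    protected String lookup(String ip, String filename) throws HiveException {
        try {
            if (service == null) {
                // Resolve the data file through the class loader (i.e. the classpath)
                URL u = getClass().getClassLoader().getResource(filename);
                if (u == null) {
                    throw new HiveException("Couldn't find geolocation file '" + filename + "'");
                }
                service = new LookupService(u.getFile(), LookupService.GEOIP_MEMORY_CACHE);
            }
            String countryCode = service.getCountry(ip).getCode();
            // "--" is the GeoIP code for an unknown country; return NULL instead
            if ("--".equals(countryCode)) {
                return null;
            }
            return countryCode;
        } catch (IOException e) {
            throw new HiveException("Caught IO exception", e);
        }
    }
}
```

Calling the UDF in Hive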

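```sql
ADD JAR /path/hive-test.jar;

CREATE TEMPORARY FUNCTION geoIp AS 'com.hadoop2.hive.GeoLocUDF';
```

The TEMPORARY keyword means the function is defined only for the current Hive session. lookup() resolves the data file name through the class loader. The GeoIP data file passed as the second argument must therefore be on the classpath wherever the UDF runs.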

Further reading (writing generic UDAFs): https://cwiki.apache.org/confluence/display/Hive/GenericUDAFCaseStudy
