介绍

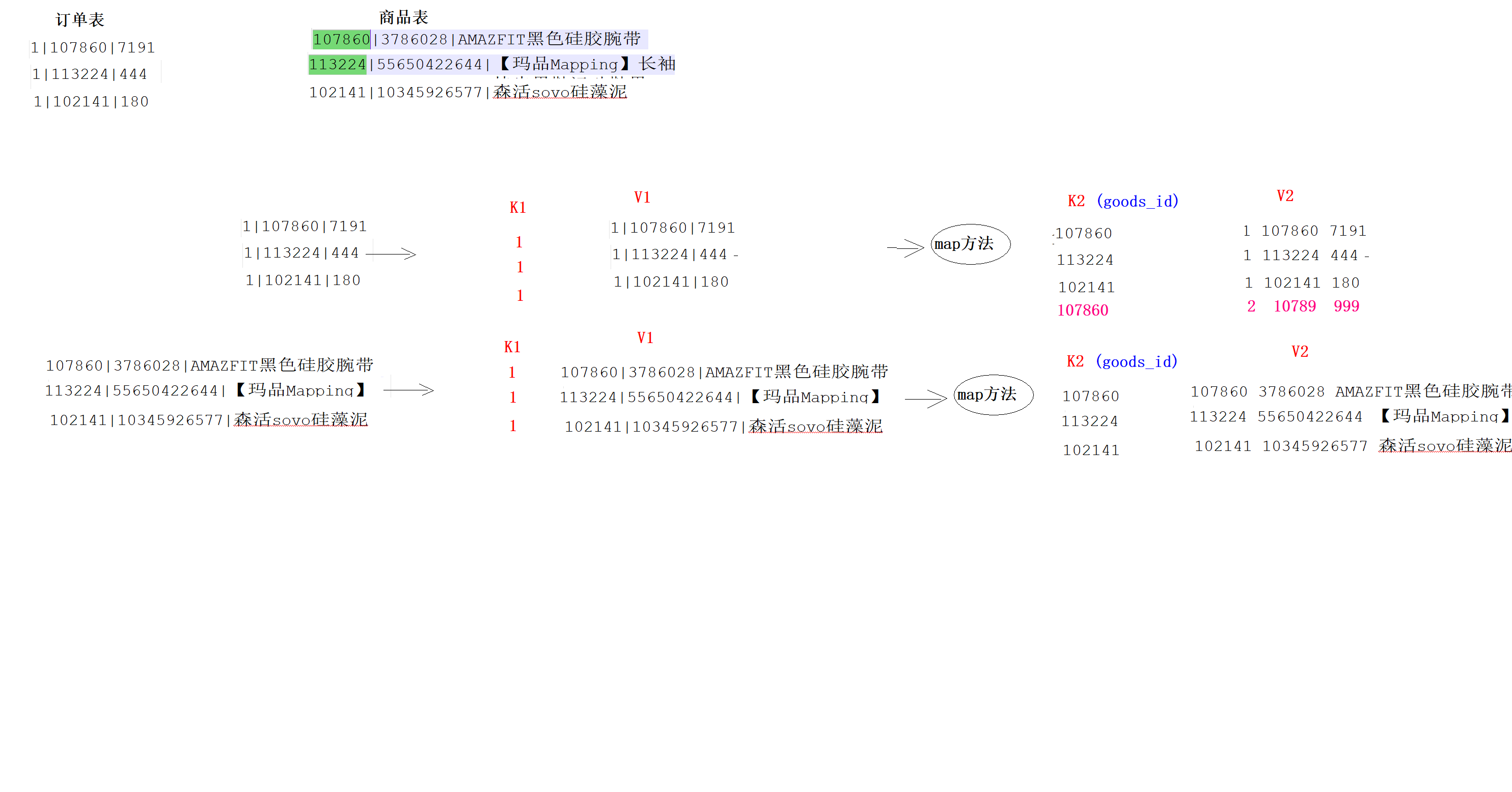

1、Reduce Join是在Reduce完成Join操作

2、Reduce端Join,Join的文件在Map阶段K2就是Join字段

3、Reduce会存在数据倾斜的风险,如果存在该文件,则可以使用MapJoin来解决

4、Reduce端Join的代码必须放在集群运行,不能在本地运行

Reduce端Join

public class ReduceJoinMapper extends Mapper<LongWritable, Text, Text, Text> {

@Override

protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {

//1:确定读取的是哪个源数据文件

FileSplit fileSplit = (FileSplit) context.getInputSplit(); //获取文件切片

String fileName = fileSplit.getPath().getName(); //获取源文件的名字

String[] array = value.toString().split("\\|");

//2:处理订单文件

if ("itheima_order_goods.txt".equals(fileName)) { //订单文件

//2.1:获取K2

String k2 = array[1];

//2.2:获取v2

String v2 = "o_"+array[0] + "\t" + array[2];

//2.3:将k2和v2写入上下文中

context.write(new Text(k2), new Text(v2));

}

//3:处理商品文件

if ("itheima_goods.txt".equals(fileName)) { //商品文件

//3.1 获取K2

String k2 = array[0];

String v2 = "g_"+array[0] + "\t" + array[2];

//3.2:将k2和v2写入上下文中

context.write(new Text(k2), new Text(v2));

}

}

}public class ReduceJoinDriver {

public static void main(String[] args) throws Exception {

//1:创建Job任务对象

Configuration configuration = new Configuration();

//configuration.set("参数名字","参数值");

Job job = Job.getInstance(configuration, "reduce_join_demo");

//2、设置置作业驱动类

job.setJarByClass(ReduceJoinDriver.class);

//3、设置文件读取输入类的名字和文件的读取路径

FileInputFormat.addInputPath(job, new Path(args[0]));

//4:设置你自定义的Mapper类信息、设置K2类型、设置V2类型

job.setMapperClass(ReduceJoinMapper.class);

job.setMapOutputKeyClass(Text.class); //设置K2类型

job.setMapOutputValueClass(Text.class); //设置V2类型

//5:设置分区、[排序],规约、分组(保留)

//6:设置你自定义的Reducer类信息、设置K3类型、设置V3类型

job.setReducerClass(ReduceJoinReducer.class);

job.setOutputKeyClass(Text.class); //设置K3类型

job.setOutputValueClass(NullWritable.class); //设置V3类型

//7、设置文件读取输出类的名字和文件的写入路径

//7.1 如果目标目录存在,则删除

String fsType = "file:///";

//String outputPath = "file:///D:\\output\\wordcount";

//String fsType = "hdfs://node1:8020";

//String outputPath = "hdfs://node1:8020/mapreduce/output/wordcount";

String outputPath = args[1];

URI uri = new URI(fsType);

FileSystem fileSystem =

FileSystem.get(uri, configuration);

boolean flag = fileSystem.exists(new Path(outputPath));

if(flag == true){

fileSystem.delete(new Path(outputPath),true);

}

FileOutputFormat.setOutputPath(job, new Path(outputPath));

//8、将设置好的job交给Yarn集群去执行

// 提交作业并等待执行完成

boolean resultFlag = job.waitForCompletion(true);

//程序退出

System.exit(resultFlag ? 0 :1);

}

}Map端Join

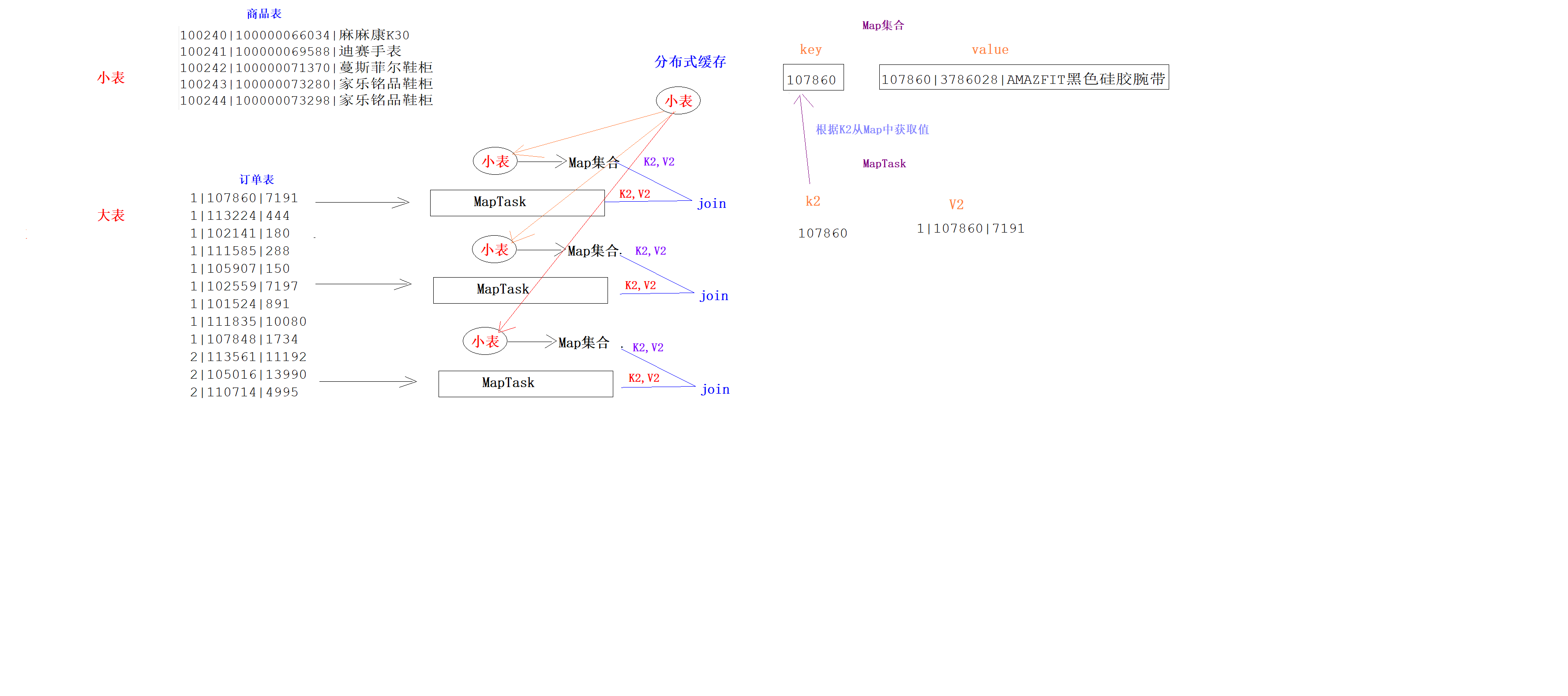

1、Map端join就是在Map端将Join操作完成

2、Map端join的前提是小表Join大表,小表的大小默认是20M

3、Map端Join需要将小表存在在分布式缓存中,然后读取到每一个MapTask的本地内存的Map集合中

4、Map端Join一般不会数据倾斜问题,因为Map的数量是由数据量大小自动决定的

5、Map端Join代码不需要Reduce

public class MapJoinMapper extends Mapper<LongWritable, Text,Text,NullWritable> {

HashMap<String, String> goodsMap = new HashMap<>();

/**

* setup方法会在map方法执行之前先执行,而且只会执行一次,主要用来做初始化工作

* @param context

* @throws IOException

* @throws InterruptedException

*/

//将小表从分布式缓存中读取,存入Map集合

@Override

protected void setup(Context context) throws IOException, InterruptedException {

//1:获取分布式缓存中文件的输入流

BufferedReader bufferedReader = new BufferedReader(new InputStreamReader(new FileInputStream("itheima_goods.txt")));

String line = null;

while ((line = bufferedReader.readLine()) != null){

String[] array = line.split("\\|");

goodsMap.put(array[0], array[2]);

}

/*

{100101,四川果冻橙6个约180g/个}

{100102,鲜丰水果秭归脐橙中华红}

*/

}

@Override

protected void map(LongWritable key, Text value,Context context) throws IOException, InterruptedException {

//1:得到K2

String[] array = value.toString().split("\\|");

String k2 = array[1];

String v2 = array[0] + "\t" + array[2];

//2:将K2和Map集合进行Join

String mapValue = goodsMap.get(k2);

context.write(new Text(v2 + "\t" + mapValue), NullWritable.get());

}

}public class MapJoinDriver {

public static void main(String[] args) throws Exception{

Configuration configuration = new Configuration();

//1:创建一个Job对象

Job job = Job.getInstance(configuration, "map_join");

//2:对Job进行设置

//2.1 设置当前的主类的名字

job.setJarByClass(MapJoinDriver.class);

//2.2 设置数据读取的路径(大表路径)

FileInputFormat.addInputPath(job,new Path("hdfs://node1:8020/mapreduce/input/map_join/big_file"));

//2.3 指定你自定义的Mapper是哪个类及K2和V2的类型

job.setMapperClass(MapJoinMapper.class);

job.setMapOutputKeyClass(Text.class);

job.setMapOutputValueClass(NullWritable.class);

//2.3 指定你自定义的Reducer是哪个类及K3和V3的类型

//将小表存入分布式缓存

job.addCacheFile(new URI("hdfs://node1:8020/mapreduce/input/map_join/small_file/itheima_goods.txt"));

//2.4 设置数据输出的路径--该目录要求不能存在,否则报错

Path outputPath = new Path("hdfs://node1:8020/output/map_join");

FileOutputFormat.setOutputPath(job,outputPath);

//2.5 设置Shuffle的分组类

FileSystem fileSystem = FileSystem.get(new URI("hdfs://node1:8020"), new Configuration());

boolean is_exists = fileSystem.exists(outputPath);

if(is_exists == true){

//如果目标文件存在,则删除

fileSystem.delete(outputPath,true);

}

//3:将Job提交为Yarn执行

boolean bl = job.waitForCompletion(true);

//4:退出任务进程,释放资源

System.exit(bl ? 0 : 1);

}

}

文章详细介绍了在HadoopMapReduce中ReduceJoin和MapJoin的实现原理。ReduceJoin在Reduce阶段完成Join,适合处理大数据,但可能面临数据倾斜问题;而MapJoin则在Map阶段完成Join,适用于小表Join大表的情况,利用分布式缓存减少数据传输。文章提供了相应的Mapper和Reducer代码示例。

文章详细介绍了在HadoopMapReduce中ReduceJoin和MapJoin的实现原理。ReduceJoin在Reduce阶段完成Join,适合处理大数据,但可能面临数据倾斜问题;而MapJoin则在Map阶段完成Join,适用于小表Join大表的情况,利用分布式缓存减少数据传输。文章提供了相应的Mapper和Reducer代码示例。

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?