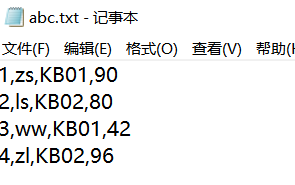

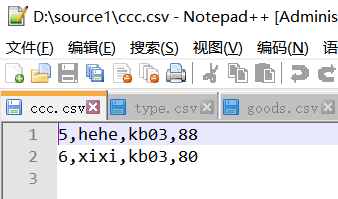

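Goal: read two tables from the same folder. Create the two tables on drive D. For example, I created a table abc.txt and a table ccc.csv and put both in the folder source1. Fields in a CSV file are separated by "," by default. The contents of the tables are shown in the screenshots.

Joining the two tables and displaying the sorted result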

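WritableComparable extends both Writable and java.lang.Comparable, so a WritableComparable is a Writable and a Comparable at the same time: it can be serialized, and it can be compared!

Looking at its implementing classes, BooleanWritable, BytesWritable, ByteWritable, DoubleWritable, FloatWritable, IntWritable, LongWritable, MD5Hash, NullWritable, Record, RecordTypeInfo, Text, VIntWritable and VLongWritable all implement the WritableComparable interface!

Instances of a WritableComparable implementation can be compared with one another. Because MapReduce sorts keys between the map and reduce phases, any class used as a key in Map/Reduce should implement the WritableComparable interface!

Such a key class therefore implements the compareTo method (ordering) together with the write and readFields methods (serialization and deserialization).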
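As an illustration (not code from this post), a hypothetical composite key `GoodsKey` holding a category id and a goods id might implement the three methods as below. To keep the sketch runnable without Hadoop on the classpath, it declares plain `Comparable<GoodsKey>` and uses `java.io.DataInput`/`DataOutput`, the same types Hadoop's `write`/`readFields` take; in a real job the class would declare `implements WritableComparable<GoodsKey>` instead.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical composite key: category id first, then goods id.
// In a Hadoop job this would be "implements WritableComparable<GoodsKey>";
// plain Comparable is used so the sketch runs without Hadoop.
class GoodsKey implements Comparable<GoodsKey> {
    private String typeId;
    private int goodsId;

    // Hadoop instantiates keys reflectively, so a no-arg constructor is needed
    public GoodsKey() {}

    public GoodsKey(String typeId, int goodsId) {
        this.typeId = typeId;
        this.goodsId = goodsId;
    }

    // Serialize the fields in a fixed order
    public void write(DataOutput out) throws IOException {
        out.writeUTF(typeId);
        out.writeInt(goodsId);
    }

    // Deserialize in exactly the same order as write()
    public void readFields(DataInput in) throws IOException {
        typeId = in.readUTF();
        goodsId = in.readInt();
    }

    // Sort by category id, then by goods id
    @Override
    public int compareTo(GoodsKey o) {
        int c = typeId.compareTo(o.typeId);
        return c != 0 ? c : Integer.compare(goodsId, o.goodsId);
    }

    @Override
    public boolean equals(Object o) {
        return o instanceof GoodsKey && compareTo((GoodsKey) o) == 0;
    }

    // Hadoop's default HashPartitioner routes keys by hashCode()
    @Override
    public int hashCode() {
        return typeId.hashCode() * 31 + goodsId;
    }

    @Override
    public String toString() {
        return typeId + ":" + goodsId;
    }

    // Example helper: serialize a key to bytes and read it back
    static GoodsKey roundTrip(GoodsKey k) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            k.write(new DataOutputStream(bytes));
            GoodsKey copy = new GoodsKey();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

The key point is symmetry: `readFields` must read the fields in the same order `write` wrote them, and `compareTo` defines the order in which the reducer sees the keys.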

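```java
import java.io.File;

public class Tools {

    private static final Tools tools = new Tools();

    private Tools() {}

    public static Tools getInstance() {
        return tools;
    }

    // Delete the output directory if it already exists.
    // Hadoop creates the output directory itself when the job runs and
    // refuses to start if it already exists, so it is removed beforehand.
    public void checkpoint(String path) {
        deleteRecursively(new File(path));
    }

    private void deleteRecursively(File file) {
        if (!file.exists()) {
            return;
        }
        File[] children = file.listFiles(); // null when file is not a directory
        if (children != null) {
            for (File child : children) {
                deleteRecursively(child);
            }
        }
        file.delete();
    }
}
```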

MapInner

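```java
import java.io.BufferedReader;
import java.io.FileReader;
import java.io.IOException;
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

/**
 * MapInner performs the join on the map side: the small table is loaded into
 * memory first, then the large table is read line by line and each line is
 * joined against the in-memory small table.
 */
public class MyMapInner {

    public static void main(String[] args) throws Exception {
        // Remove the output directory left over from a previous run
        Tools.getInstance().checkpoint("d://ff");

        Job job = Job.getInstance(new Configuration());
        job.setJarByClass(MyMapInner.class);

        // Input: the large table
        FileInputFormat.addInputPath(job, new Path("d://source2//goods.csv"));

        job.setMapperClass(MyMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(NullWritable.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // On a cluster the small table can be shipped from HDFS instead:
        // job.addCacheFile(new URI("hdfs://192.168.56.100:9000/mydemo/xuxu/type.csv"));

        // Ship the small table with the job; addFileToClassPath also registers it
        // as a cache file, which setup() reads back through getCacheFiles()
        job.addFileToClassPath(new Path("d://type.csv"));

        FileOutputFormat.setOutputPath(job, new Path("d://ff"));
        job.waitForCompletion(true);
    }

    public static class MyMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

        // Small table: category id -> category name
        private final Map<String, String> myType = new HashMap<>();

        @Override
        protected void setup(Context context) throws IOException, InterruptedException {
            // Locate the cached small table type.csv
            // String fileName = context.getLocalCacheFiles()[0].getName();
            String fileName = context.getCacheFiles()[0].getPath();
            try (BufferedReader read = new BufferedReader(new FileReader(fileName))) {
                String str;
                while ((str = read.readLine()) != null) {
                    String[] sps = str.split(",");
                    // Column 0 is the key, column 1 is the value
                    myType.put(sps[0], sps[1]);
                }
            }
        }

        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            String[] goodsinfos = value.toString().split(",");
            // Look up the category through the foreign key in column 1
            String type = myType.get(goodsinfos[1]);
            if (type == null) {
                return; // inner join: drop goods with no matching category
            }
            // Replace the foreign key in the goods row with the category name
            goodsinfos[1] = type;
            // Emit the joined row as a string
            context.write(new Text(Arrays.toString(goodsinfos)), NullWritable.get());
        }
    }
}
```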
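Stripped of the Hadoop plumbing, the map-side join above boils down to a hash lookup. The sketch below is plain Java with hypothetical names (`MapSideJoinSketch`, `loadSmallTable`, `joinLine`) and assumes the same layouts the code uses: type rows are `id,name`, and goods rows carry the category id in column 1.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Plain-Java sketch of the map-side (hash) join; no Hadoop classes involved.
class MapSideJoinSketch {

    // Counterpart of setup(): build an in-memory map from the small table
    static Map<String, String> loadSmallTable(List<String> typeLines) {
        Map<String, String> types = new HashMap<>();
        for (String line : typeLines) {
            String[] sps = line.split(",");
            types.put(sps[0], sps[1]); // column 0: category id, column 1: category name
        }
        return types;
    }

    // Counterpart of map(): join one goods line, or return null if no category matches
    static String joinLine(String goodsLine, Map<String, String> types) {
        String[] goodsinfos = goodsLine.split(",");
        String type = types.get(goodsinfos[1]);
        if (type == null) {
            return null; // inner join: unmatched rows are dropped
        }
        goodsinfos[1] = type;
        return Arrays.toString(goodsinfos);
    }

    // Stream the large table through the lookup, one line at a time
    static List<String> join(List<String> typeLines, List<String> goodsLines) {
        Map<String, String> types = loadSmallTable(typeLines);
        List<String> out = new ArrayList<>();
        for (String line : goodsLines) {
            String joined = joinLine(line, types);
            if (joined != null) {
                out.add(joined);
            }
        }
        return out;
    }
}
```

Because every map task holds the whole small table in a `HashMap`, this approach only suits a small table that fits comfortably in a task's memory; in exchange, the join itself needs no shuffle.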

ReduceInner

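```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

/**
 * ReduceInner performs the join on the reduce side: both tables are passed to
 * the job, and the reducer joins the records that share a category id.
 */
public class MyReduceInner {

    public static void main(String[] args) throws Exception {
        // Remove the output directory left over from a previous run
        Tools.getInstance().checkpoint("d://ddd");

        Job job = Job.getInstance(new Configuration());
        job.setJarByClass(MyReduceInner.class);

        // Input: the folder that contains both tables
        FileInputFormat.addInputPath(job, new Path("d://source3"));

        job.setMapperClass(MyMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(Text.class);
        job.setReducerClass(MyReduce.class);
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);

        // job.addCacheFile(new URI("hdfs://192.168.56.100:9000/mydemo/xuxu/type.csv"));

        FileOutputFormat.setOutputPath(job, new Path("d://ddd"));
        job.waitForCompletion(true);
    }

    public static class MyMapper extends Mapper<LongWritable, Text, Text, Text> {
        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            String[] words = value.toString().split(",");
            // Name of the file the current line comes from
            String path = ((FileSplit) context.getInputSplit()).getPath().toString();
            // Key both tables by category id and tag each value with its source table
            if (path.contains("type")) {
                // Type table: category id in column 0, name in column 1
                context.write(new Text(words[0]), new Text("type:" + words[1]));
            } else {
                // Goods table: category id in column 1
                context.write(new Text(words[1]), new Text("context:" + words[0] + ":" + words[2] + ":" + words[3]));
            }
        }
    }

    public static class MyReduce extends Reducer<Text, Text, Text, NullWritable> {
        @Override
        protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
            // Values are already grouped by category id, so both kinds of record for
            // this id arrive here. Copy them out as Strings first, because Hadoop
            // reuses the same Text object while iterating.
            String typeInfo = null;
            List<String> goods = new ArrayList<>();
            for (Text tx : values) {
                String val = tx.toString();
                if (val.startsWith("type:")) {
                    // The category record supplies the name for this group
                    typeInfo = val.substring("type:".length());
                } else {
                    goods.add(val);
                }
            }
            if (typeInfo == null) {
                return; // inner join: no matching category for these goods
            }
            // Replace the tag in each goods record with the category name
            for (String g : goods) {
                String[] infos = g.split(":");
                infos[0] = typeInfo;
                context.write(new Text(Arrays.toString(infos)), NullWritable.get());
            }
        }
    }
}
```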
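The reduce-side join can be simulated the same way. In this plain-Java sketch (hypothetical class `ReduceSideJoinSketch`), a `TreeMap` stands in for the shuffle: it groups the tagged values by category id and keeps the keys sorted, as the framework does before calling `reduce()`. The `type:`/`context:` tags and the column layout follow the `MyMapper` code above.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java simulation of the reduce-side join: "map" tags each record,
// a TreeMap plays the shuffle (group by key, sorted keys), "reduce" joins.
class ReduceSideJoinSketch {

    static List<String> join(List<String> typeLines, List<String> goodsLines) {
        // Map + shuffle: group tagged values by category id
        Map<String, List<String>> groups = new TreeMap<>();
        for (String line : typeLines) {
            String[] w = line.split(",");
            groups.computeIfAbsent(w[0], k -> new ArrayList<>()).add("type:" + w[1]);
        }
        for (String line : goodsLines) {
            String[] w = line.split(",");
            groups.computeIfAbsent(w[1], k -> new ArrayList<>())
                  .add("context:" + w[0] + ":" + w[2] + ":" + w[3]);
        }

        // Reduce: one pass per category id, in sorted key order
        List<String> out = new ArrayList<>();
        for (List<String> values : groups.values()) {
            String typeInfo = null;
            List<String> goods = new ArrayList<>();
            for (String v : values) {
                if (v.startsWith("type:")) {
                    typeInfo = v.substring("type:".length());
                } else {
                    goods.add(v);
                }
            }
            if (typeInfo == null) {
                continue; // inner join: goods without a category are dropped
            }
            for (String g : goods) {
                String[] infos = g.split(":");
                infos[0] = typeInfo;
                out.add(Arrays.toString(infos));
            }
        }
        return out;
    }
}
```

`Text` keys are compared byte by byte, so category ids sort lexicographically ("10" before "9"); `String.compareTo` in the `TreeMap` gives the same order for ASCII ids. Unlike the map-side version, neither table has to fit in memory, at the cost of shuffling both tables across the network.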
