I. Task Statement

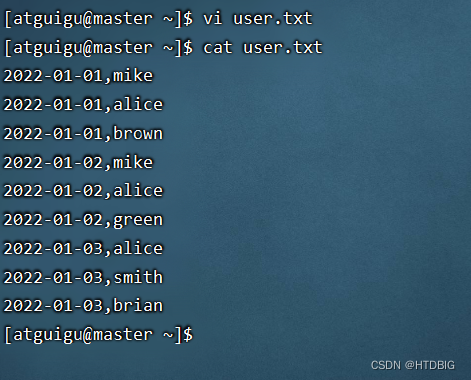

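- The following user visit history is given. The first column is the date on which the user visited the site, and the second column is the user name: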

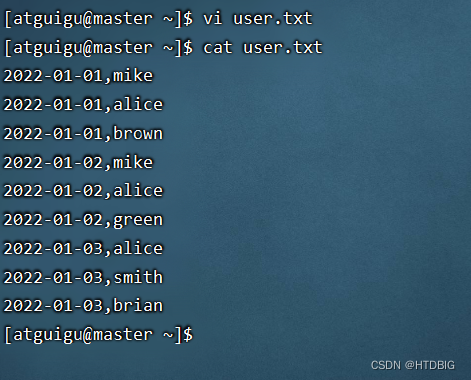

    2022-01-01,mike
    2022-01-01,alice
    2022-01-01,brown
    2022-01-02,mike
    2022-01-02,alice
    2022-01-02,green
    2022-01-03,alice
    2022-01-03,smith
    2022-01-03,brian

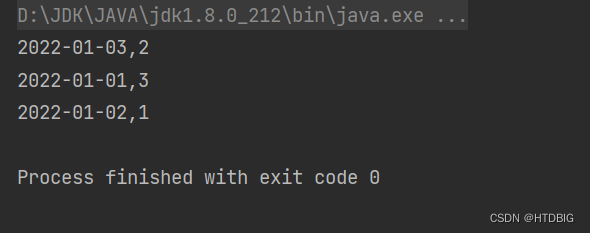

- The task is to count the number of new users per day from the data above. The expected result is:

      2022-01-01,3
      2022-01-02,1
      2022-01-03,2

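- That is, 3 new users appeared on 2022-01-01 (mike, alice, and brown), 1 on 2022-01-02 (green), and 2 on 2022-01-03 (smith and brian). A user counts as new only on the date of their first visit; later visits by the same user are ignored.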
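The counting rule can be sketched on plain Scala collections, without Spark, to check the expected result by hand. This is only an illustration under stated assumptions: the object name `NewUsersSketch` and the function `dailyNewUsers` are hypothetical and do not appear in the original project.

```scala
object NewUsersSketch {
  // Sample records from the task statement, as (date, user) pairs.
  val visits: Seq[(String, String)] = Seq(
    ("2022-01-01", "mike"), ("2022-01-01", "alice"), ("2022-01-01", "brown"),
    ("2022-01-02", "mike"), ("2022-01-02", "alice"), ("2022-01-02", "green"),
    ("2022-01-03", "alice"), ("2022-01-03", "smith"), ("2022-01-03", "brian")
  )

  // Returns (date, number of users first seen on that date), sorted by date.
  def dailyNewUsers(records: Seq[(String, String)]): Seq[(String, Int)] = {
    // Earliest date per user; yyyy-MM-dd strings sort chronologically.
    val firstVisit: Map[String, String] =
      records.groupBy(_._2).map { case (user, rs) => user -> rs.map(_._1).min }

    // Count how many users share each first-visit date.
    firstVisit.values.toSeq
      .groupBy(identity)
      .map { case (date, users) => date -> users.size }
      .toSeq
      .sortBy(_._1)
  }

  def main(args: Array[String]): Unit =
    dailyNewUsers(visits).foreach { case (date, n) => println(s"$date,$n") }
}
```

The two steps here (earliest date per user, then a count per date) are the same two steps the Spark program performs on an RDD.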

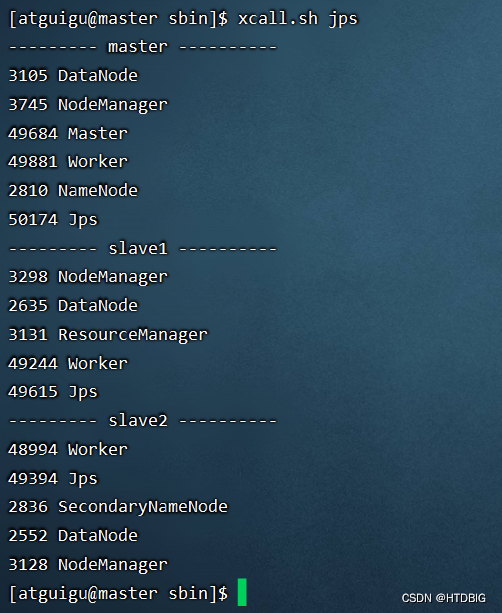

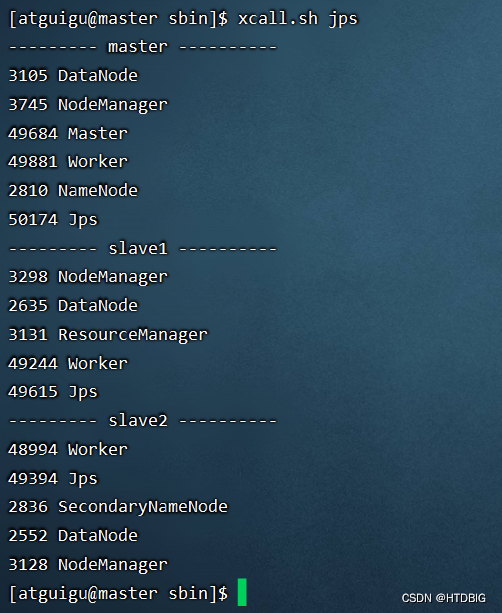

- Preparation: start HDFS and Spark on the cluster

- Create the file `user.txt` on the virtual machine

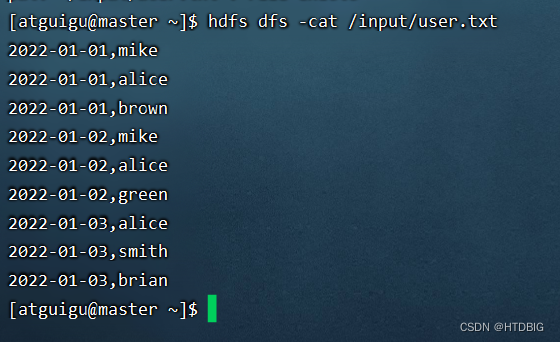

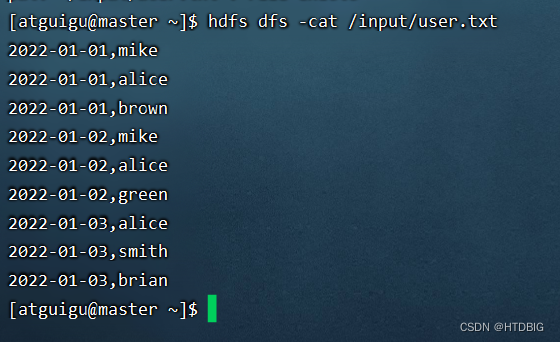

- Upload `user.txt` to the `/input` directory on HDFS
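As an alternative to doing the last two steps by hand, the file could also be created and uploaded from Scala through the Hadoop `FileSystem` API. This is a rough sketch, not the procedure used in this post. It assumes the Hadoop client classes are on the classpath (they normally arrive transitively with `spark-core`), that the NameNode address is `hdfs://master:9000` as used later in the program, and that the current user may write to `/input`. The names `PrepareInput`, `writeLocal`, and `upload` are hypothetical.

```scala
import java.net.URI
import java.nio.charset.StandardCharsets
import java.nio.file.{Files, Paths}

import org.apache.hadoop.conf.Configuration
import org.apache.hadoop.fs.{FileSystem, Path}

object PrepareInput {
  // The sample records from the task statement, one "date,name" per line.
  val records: Seq[String] = Seq(
    "2022-01-01,mike", "2022-01-01,alice", "2022-01-01,brown",
    "2022-01-02,mike", "2022-01-02,alice", "2022-01-02,green",
    "2022-01-03,alice", "2022-01-03,smith", "2022-01-03,brian"
  )

  // Write the records to a local file (overwrites an existing file).
  def writeLocal(localPath: String): Unit =
    Files.write(Paths.get(localPath),
      records.mkString("\n").getBytes(StandardCharsets.UTF_8))

  // Copy the local file into an HDFS directory, creating the directory if needed.
  def upload(localPath: String, hdfsUri: String, hdfsDir: String): Unit = {
    val fs = FileSystem.get(new URI(hdfsUri), new Configuration())
    try {
      fs.mkdirs(new Path(hdfsDir))
      fs.copyFromLocalFile(new Path(localPath), new Path(hdfsDir))
    } finally {
      fs.close()
    }
  }

  def main(args: Array[String]): Unit = {
    writeLocal("user.txt")
    // Assumed NameNode address and target directory; adjust to the actual cluster.
    upload("user.txt", "hdfs://master:9000", "/input")
  }
}
```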

II. Completing the Task

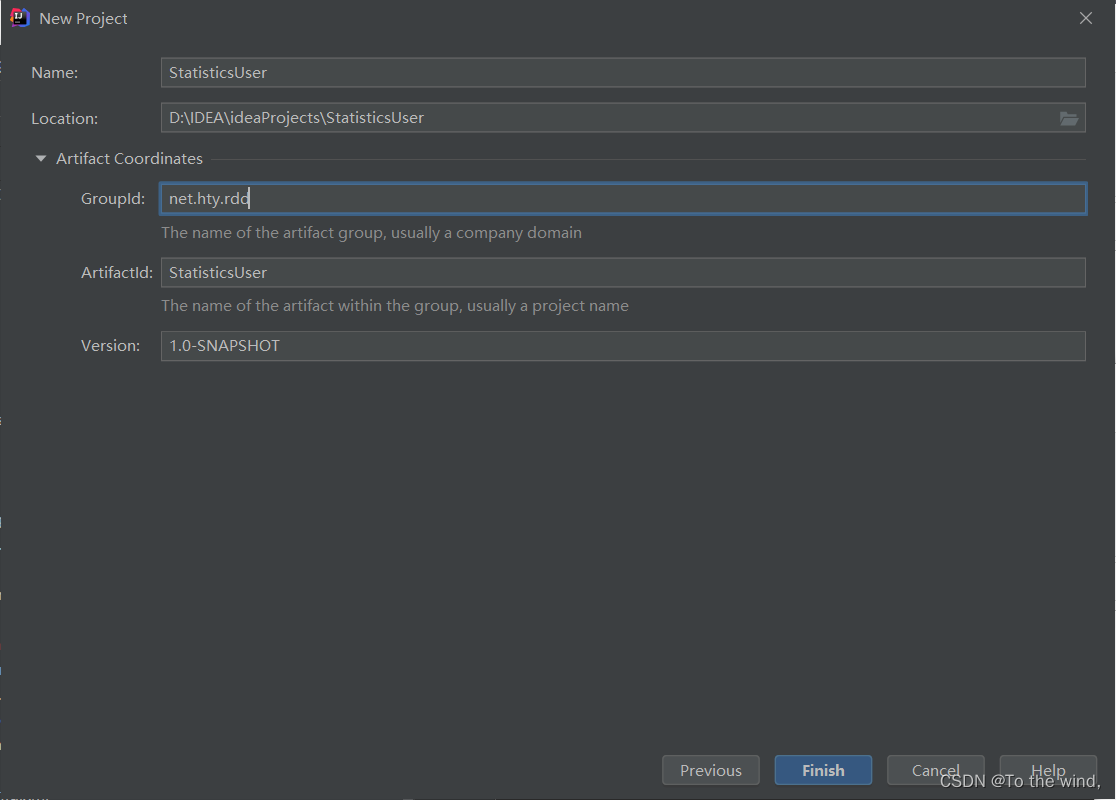

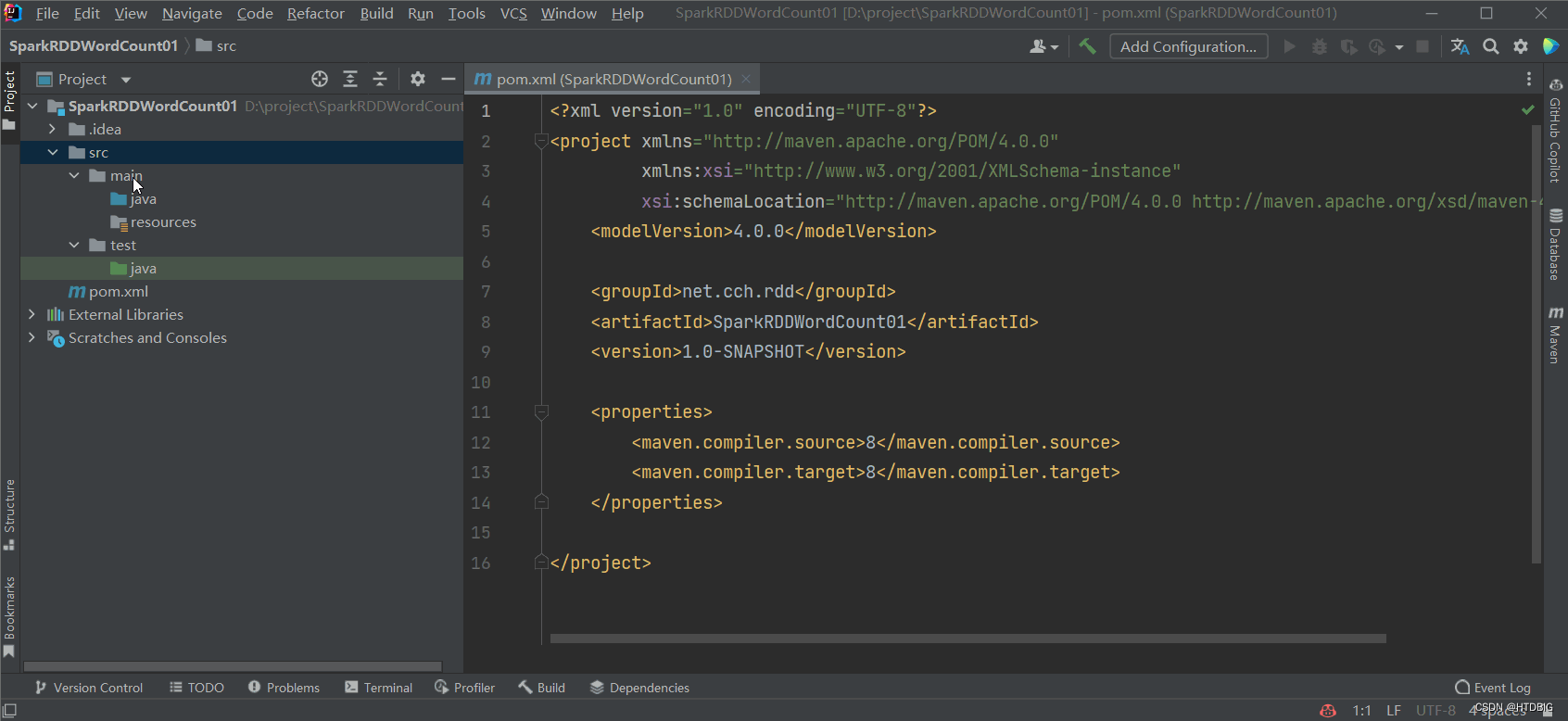

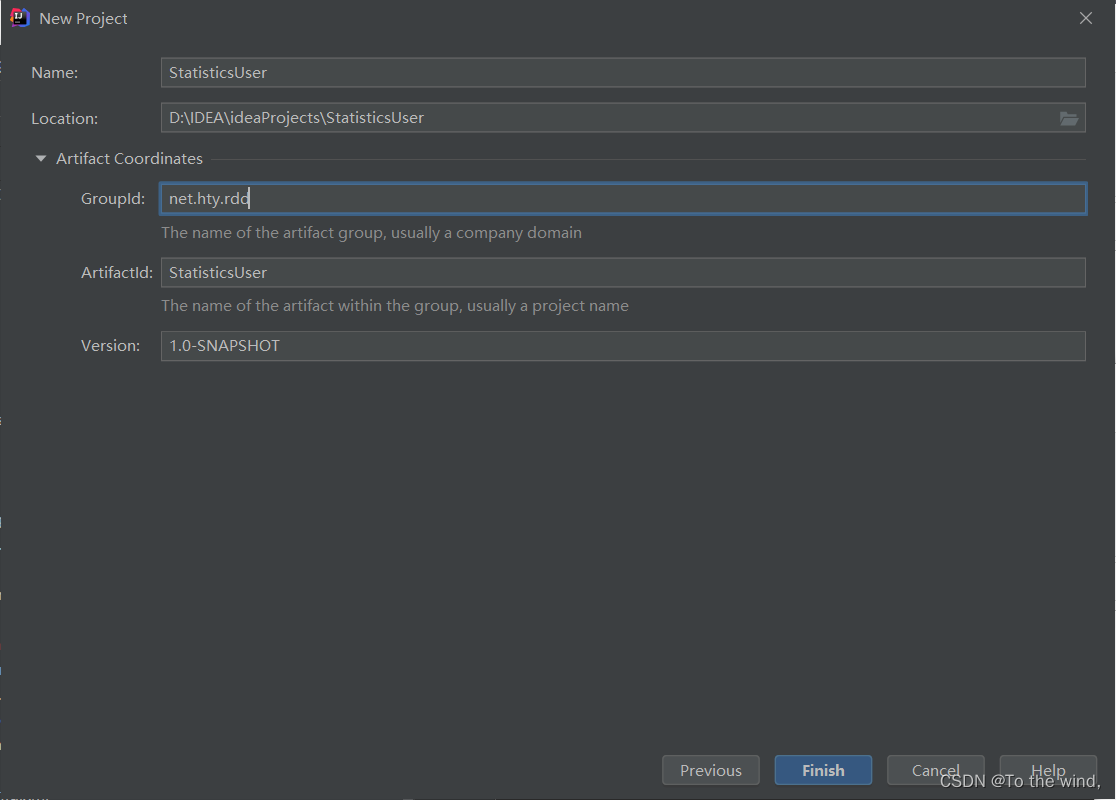

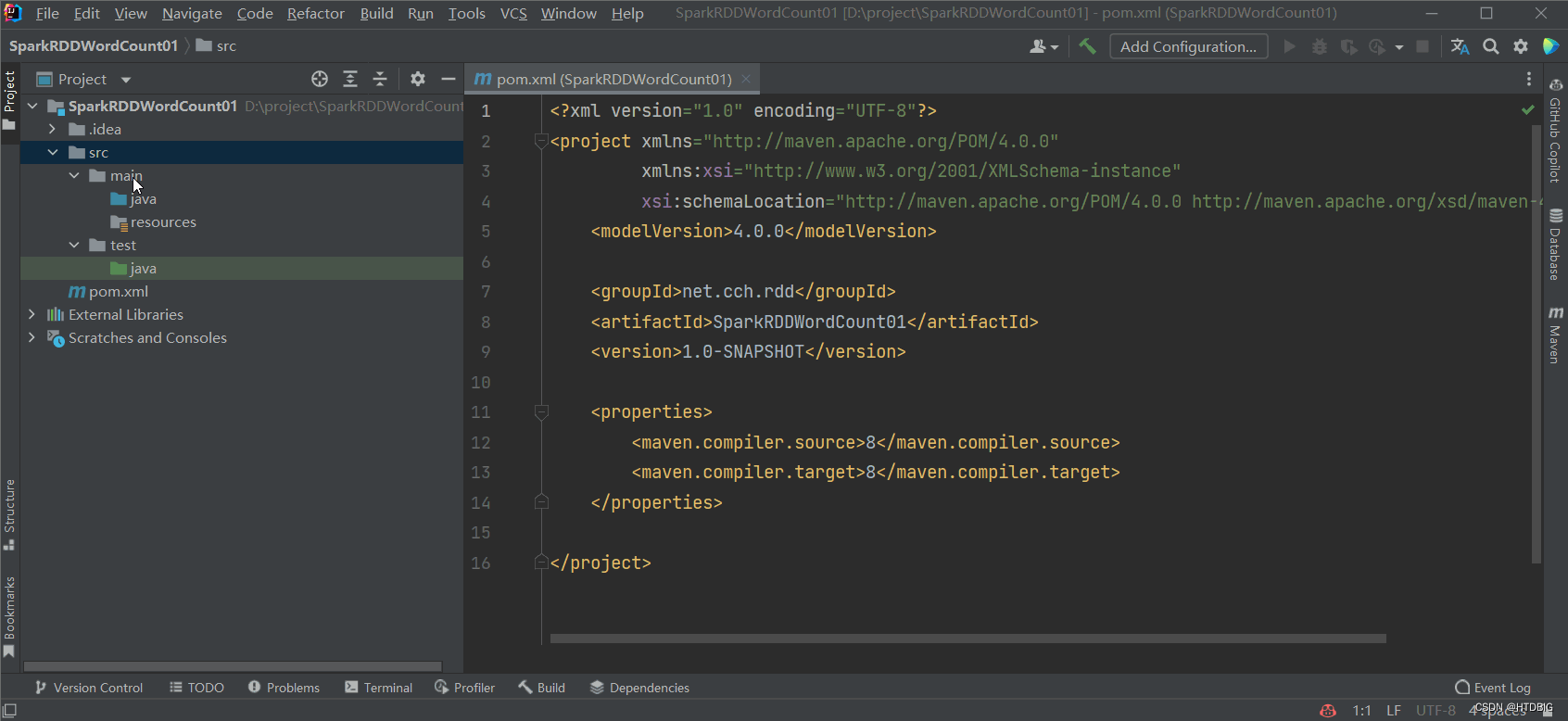

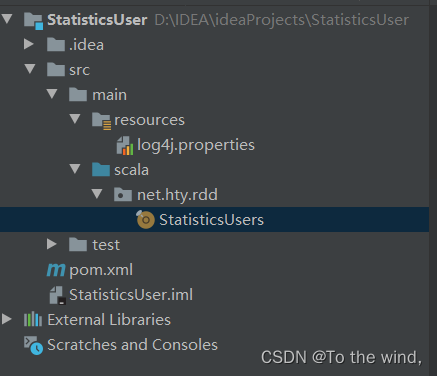

(1) Create a Maven Project

- Set the project type

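- Create the `scala` source directory, `src/main/scala` (the GIF is reused from an earlier project; the differences do not affect the steps)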

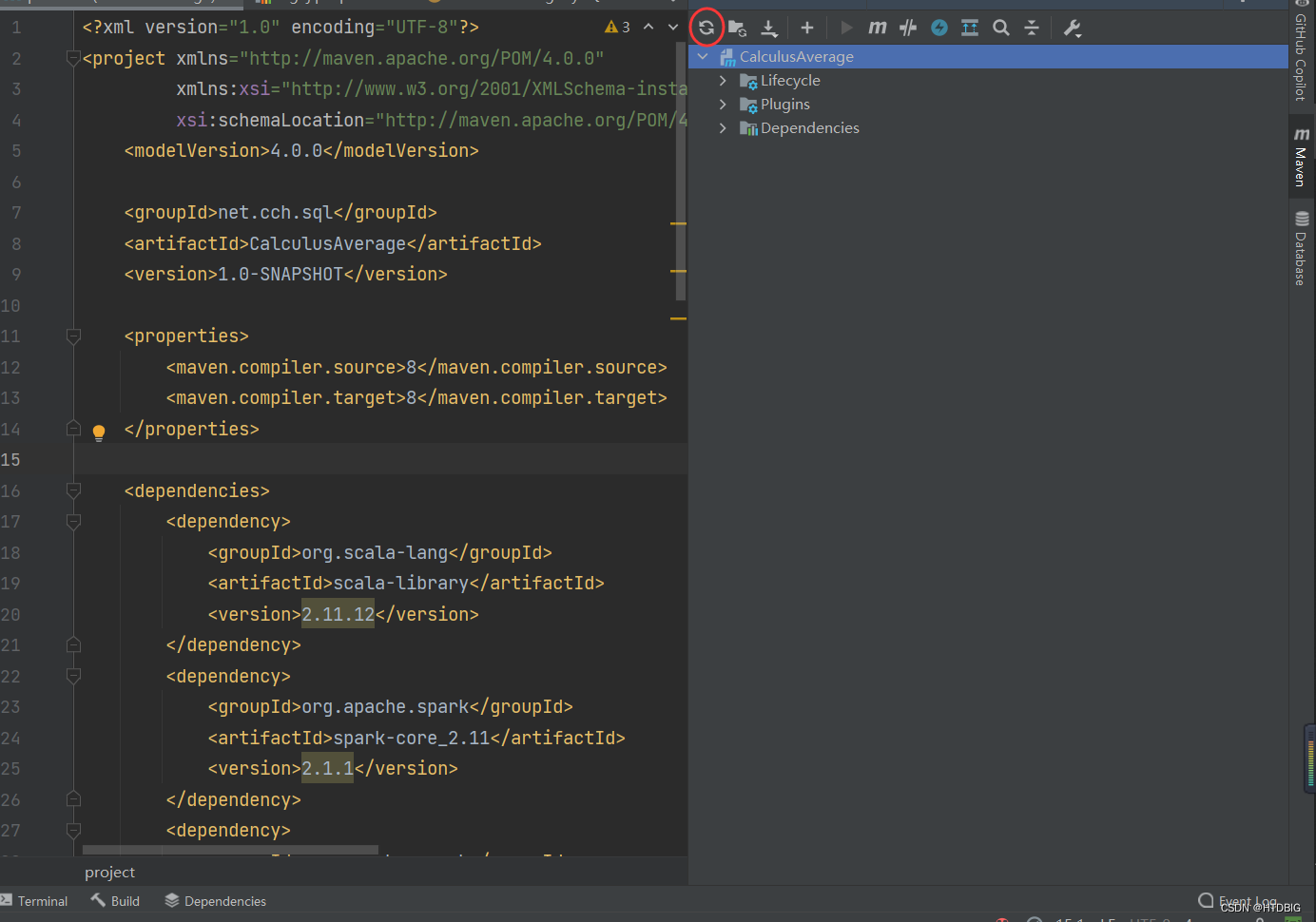

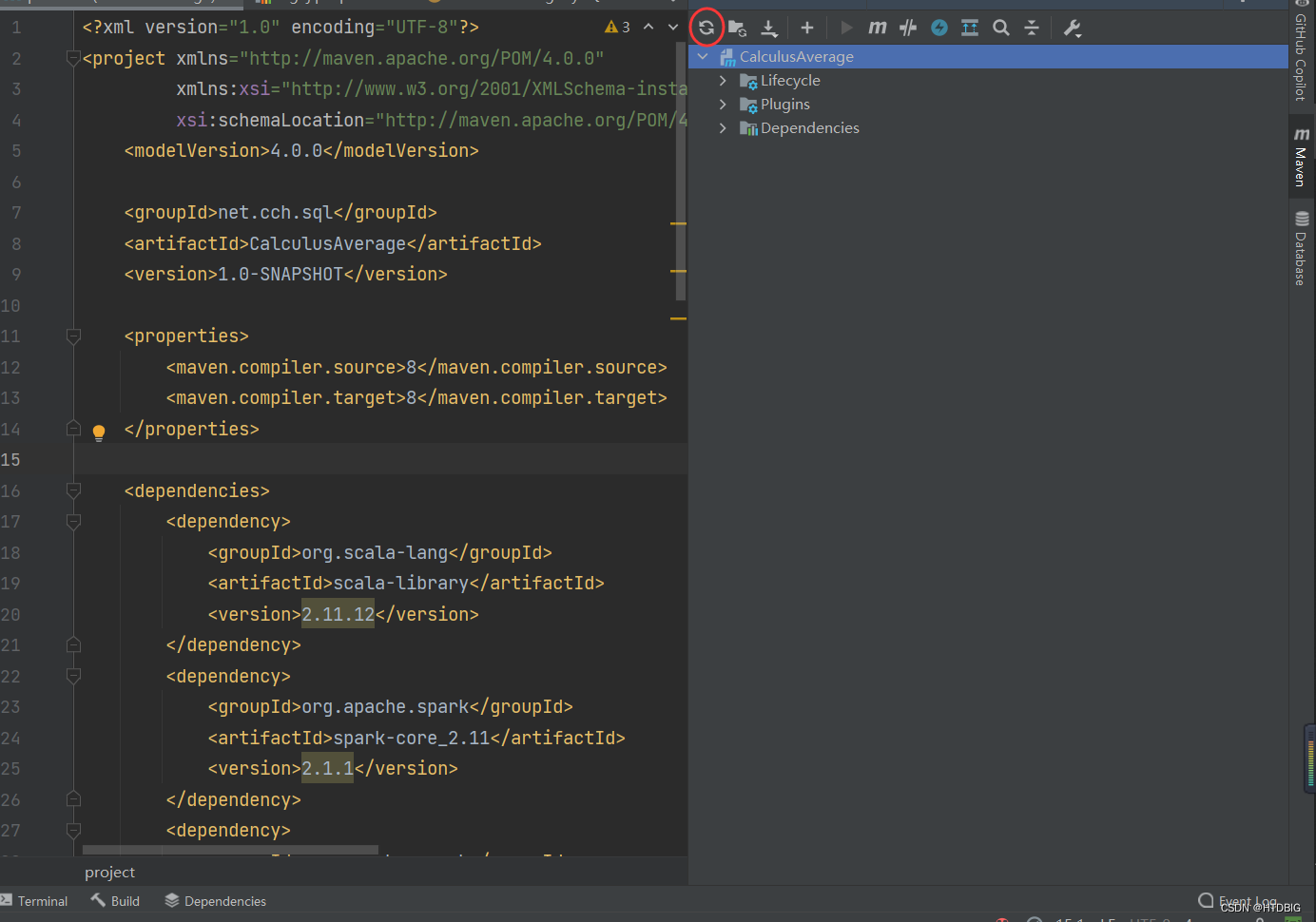

(2) Add Dependencies and Build Plugins

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>

        <groupId>net.cch.sql</groupId>
        <artifactId>SparkSQL</artifactId>
        <version>1.0-SNAPSHOT</version>

        <properties>
            <maven.compiler.source>8</maven.compiler.source>
            <maven.compiler.target>8</maven.compiler.target>
        </properties>

        <dependencies>
            <dependency>
                <groupId>org.scala-lang</groupId>
                <artifactId>scala-library</artifactId>
                <version>2.11.12</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-core_2.11</artifactId>
                <version>2.1.1</version>
            </dependency>
            <dependency>
                <groupId>org.apache.spark</groupId>
                <artifactId>spark-sql_2.11</artifactId>
                <version>2.1.1</version>
            </dependency>
        </dependencies>

        <build>
            <sourceDirectory>src/main/scala</sourceDirectory>
            <plugins>
                <plugin>
                    <groupId>org.apache.maven.plugins</groupId>
                    <artifactId>maven-assembly-plugin</artifactId>
                    <version>3.3.0</version>
                    <configuration>
                        <descriptorRefs>
                            <descriptorRef>jar-with-dependencies</descriptorRef>
                        </descriptorRefs>
                    </configuration>
                    <executions>
                        <execution>
                            <id>make-assembly</id>
                            <phase>package</phase>
                            <goals>
                                <goal>single</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
                <plugin>
                    <groupId>net.alchim31.maven</groupId>
                    <artifactId>scala-maven-plugin</artifactId>
                    <version>3.3.2</version>
                    <executions>
                        <execution>
                            <id>scala-compile-first</id>
                            <phase>process-resources</phase>
                            <goals>
                                <goal>add-source</goal>
                                <goal>compile</goal>
                            </goals>
                        </execution>
                        <execution>
                            <id>scala-test-compile</id>
                            <phase>process-test-resources</phase>
                            <goals>
                                <goal>testCompile</goal>
                            </goals>
                        </execution>
                    </executions>
                </plugin>
            </plugins>
        </build>
    </project>

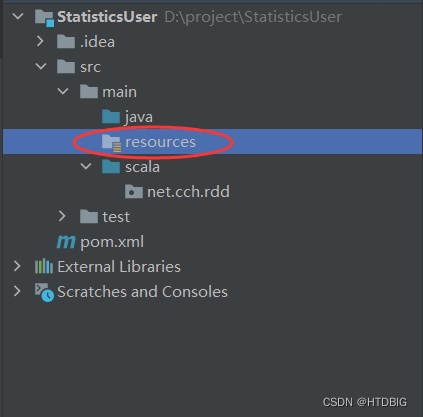

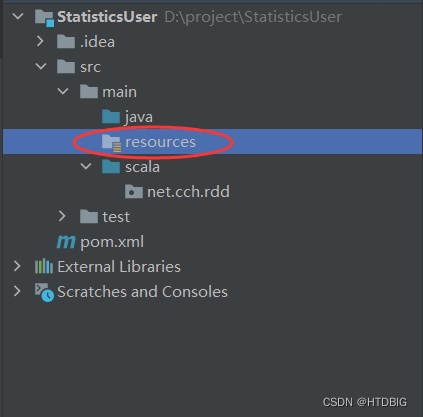

(3) Create the Log Properties File

- Add a `log4j.properties` log configuration file on the classpath (for example under `src/main/resources`):

      log4j.rootLogger=ERROR, stdout, logfile
      log4j.appender.stdout=org.apache.log4j.ConsoleAppender
      log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
      log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
      log4j.appender.logfile=org.apache.log4j.FileAppender
      log4j.appender.logfile.File=target/spark.log
      log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
      log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

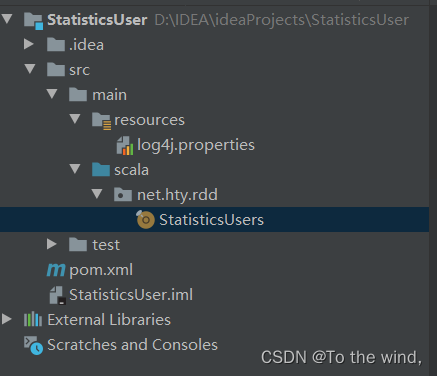

(4) Create the Singleton Object for Counting Daily New Users

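- Create the `StatisticsUsers` singleton object in the `net.cch.rdd` package:

      package net.cch.rdd

      import org.apache.spark.rdd.RDD
      import org.apache.spark.{SparkConf, SparkContext}

      object StatisticsUsers {
        def main(args: Array[String]): Unit = {
          // Run locally, using all available cores
          val conf: SparkConf = new SparkConf()
            .setAppName("StatisticsUsers")
            .setMaster("local[*]")
          val sc: SparkContext = new SparkContext(conf)

          // Each input line has the form "date,name"
          val lines: RDD[String] = sc.textFile("hdfs://master:9000/input/user.txt")

          // Key every visit by user name: (name, date); blank lines are skipped
          val visits: RDD[(String, String)] = lines.filter(_.trim.nonEmpty).map { line =>
            val fields = line.split(",")
            (fields(1).trim, fields(0).trim)
          }

          // For each user keep the earliest date (yyyy-MM-dd strings sort chronologically),
          // then re-key by that date: (firstDate, name)
          val firstVisits: RDD[(String, String)] = visits.groupByKey().map {
            case (name, dates) => (dates.min, name)
          }

          // Count the users whose first visit falls on each date
          val newUsersByDate = firstVisits.countByKey()

          // Print "date,count" in date order
          newUsersByDate.toSeq.sortBy(_._1).foreach {
            case (date, count) => println(s"$date,$count")
          }

          sc.stop()
        }
      }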
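A possible variant replaces `groupByKey` with `reduceByKey`, which combines values on the map side instead of shuffling every date of every user. The sketch below is not the code used in this post. It assumes the same Spark 2.1.1 dependencies as the POM above and uses an inline sample in place of the HDFS file so that it can run without a cluster. The names `StatisticsUsersReduce`, `sample`, and `dailyNewUsers` are hypothetical.

```scala
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

object StatisticsUsersReduce {
  // Inline sample standing in for hdfs://master:9000/input/user.txt
  val sample: Seq[String] = Seq(
    "2022-01-01,mike", "2022-01-01,alice", "2022-01-01,brown",
    "2022-01-02,mike", "2022-01-02,alice", "2022-01-02,green",
    "2022-01-03,alice", "2022-01-03,smith", "2022-01-03,brian"
  )

  // Returns (date, new-user count) pairs sorted by date.
  def dailyNewUsers(lines: RDD[String]): Array[(String, Int)] = {
    val firstVisitByUser = lines
      .map { line =>
        val fields = line.split(",")
        (fields(1), fields(0)) // (name, date)
      }
      .reduceByKey((a, b) => if (a <= b) a else b) // keep the earlier date

    firstVisitByUser
      .map { case (_, date) => (date, 1) }
      .reduceByKey(_ + _) // users per first-visit date
      .sortByKey()
      .collect()
  }

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("StatisticsUsersReduce").setMaster("local[*]")
    val sc = new SparkContext(conf)
    try {
      dailyNewUsers(sc.parallelize(sample)).foreach {
        case (date, n) => println(s"$date,$n")
      }
    } finally {
      sc.stop()
    }
  }
}
```

On nine records the difference is not measurable; the variant only matters when each user has many visits.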

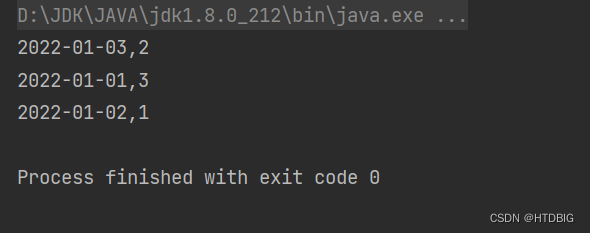

(5) Run the Program Locally and Check the Result

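- The program prints the daily new-user counts, which should match the expected result given in the task statement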

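This post shows how to use Scala and Spark to count the number of new users per day from user visit history. It first creates a Maven project and adds the Scala and Spark dependencies, then creates a log properties file and a singleton object named `StatisticsUsers` to process the data. The program reads the user data from HDFS, finds each user's first visit date, and prints the number of new users for each day.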

