1) Install MySQL

(1) Install MySQL and the Python MySQL drivers

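```bash
sudo apt-get install mysql-server
sudo apt-get install python-mysqldb
sudo pip install pymysql
```

`python-mysqldb` provides the `MySQLdb` module that the pipeline below imports (on Python 3 releases of Debian/Ubuntu the package is `python3-mysqldb`); PyMySQL is a pure-Python alternative driver.

2) Create the database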

(1) Log in to MySQL as root (add `-p` if the root account has a password)

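```bash
mysql -u root
```

(2) Create the database `db`:

```sql
create database db;
```

(3) Create the user and grant it all privileges on `db`:

```sql
GRANT ALL PRIVILEGES ON db.* TO star@localhost IDENTIFIED BY "123456";
```

On MySQL 8.0 and later, `GRANT` no longer creates users or accepts `IDENTIFIED BY`; create the user first with `CREATE USER 'star'@'localhost' IDENTIFIED BY '123456';` and then run `GRANT ALL PRIVILEGES ON db.* TO 'star'@'localhost';`.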

`star` is the newly created database user.

`123456` is its password.

(4) Create the table

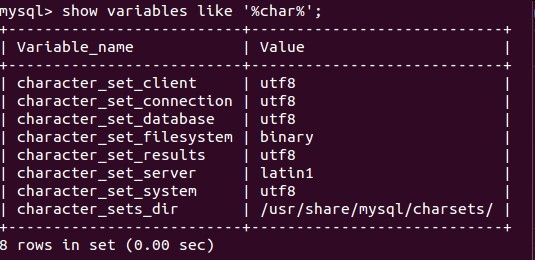

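Switch to the new database, set its default character set to UTF-8, and check the character-set variables:

```sql
use db;
alter database db character set utf8;
show variables like 'character_set_%';
```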
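Reading the `show variables` output by eye is easy to get wrong, so here is a small sketch that flags variables not set to a UTF-8 charset. It assumes the rows arrive as `(Variable_name, Value)` pairs, as a default (non-dict) cursor returns them from `fetchall()`. `non_utf8_charsets` is a hypothetical helper, and skipping `character_set_filesystem` and `character_sets_dir` (which normally hold `binary` and a directory path, and which the `LIKE` pattern also matches) is a judgment call.

```python
# Variables whose value is legitimately something other than a UTF-8 charset
# (assumption: these two are the only ones worth skipping).
IGNORED = {"character_set_filesystem", "character_sets_dir"}


def non_utf8_charsets(rows):
    """Return the names of character-set variables not set to a UTF-8 charset.

    `rows` is an iterable of (Variable_name, Value) pairs, e.g. the result of
    cursor.fetchall() after: show variables like 'character_set_%'
    """
    bad = []
    for name, value in rows:
        if name in IGNORED:
            continue
        # utf8, utf8mb3 and utf8mb4 all start with "utf8"
        if not str(value).lower().startswith("utf8"):
            bad.append(name)
    return bad
```

An empty list means every checked variable is already UTF-8.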

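Then create the table yourself (not covered in detail here). The pipeline below expects a table named `wooyun` with at least the columns `name`, `time` and `url`.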
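As one possibility, here is a sketch of a `wooyun` table matching the three columns the pipeline inserts. The column types and lengths, the `id` column and the `UNIQUE` key on `name` are assumptions, and `create_wooyun_table` is a hypothetical helper that runs the statement on any DB-API connection (MySQLdb or PyMySQL).

```python
# Assumed schema: only name, time and url come from the pipeline; the types,
# lengths, id column and UNIQUE key are illustrative choices.
WOOYUN_DDL = """
CREATE TABLE IF NOT EXISTS wooyun (
    id     INT AUTO_INCREMENT PRIMARY KEY,
    name   VARCHAR(255) NOT NULL,
    `time` VARCHAR(64),
    url    VARCHAR(512),
    UNIQUE KEY uniq_name (name)
) DEFAULT CHARSET=utf8
"""


def create_wooyun_table(conn):
    """Run WOOYUN_DDL on an open DB-API connection (MySQLdb or PyMySQL)."""
    cur = conn.cursor()
    try:
        cur.execute(WOOYUN_DDL)
    finally:
        cur.close()
    conn.commit()
```

For example, with PyMySQL: `create_wooyun_table(pymysql.connect(host="localhost", user="star", password="123456", database="db", charset="utf8"))`. The unique key makes MySQL reject a duplicate `name` even if two pipeline threads pass the `select` check at the same moment.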

3) Insert data from Scrapy

(1) Add the following to settings.py

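```python
ITEM_PIPELINES = {'wooyun.pipelines.WooyunPipeline': 300}
```

Current Scrapy versions expect `ITEM_PIPELINES` to be a dict mapping each pipeline's class path to an integer order (customarily 0-1000; items pass through lower values first). The list form `['wooyun.pipelines.WooyunPipeline']` used by older tutorials is deprecated.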

`WooyunPipeline` is the name of the pipeline class, and `wooyun` is the project (package) name.
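The pipeline below hard-codes its connection details. If you would rather keep them in settings.py next to `ITEM_PIPELINES`, Scrapy calls a pipeline's `from_crawler` class method (when one is defined) with the crawler, whose `settings` object supports `get(name, default)`. Here is a minimal sketch of that pattern. The `MYSQL_DB`, `MYSQL_USER` and `MYSQL_PASSWD` setting names are made up for this example (Scrapy does not define them), and only the configuration part is shown: a full pipeline would pass `self.db_kwargs` on to `adbapi.ConnectionPool('MySQLdb', ...)`.

```python
# In settings.py (hypothetical setting names, not built into Scrapy):
# MYSQL_DB = 'db'
# MYSQL_USER = 'star'
# MYSQL_PASSWD = '123456'


class WooyunPipeline(object):

    def __init__(self, db, user, passwd):
        # Keyword arguments to hand to adbapi.ConnectionPool('MySQLdb', ...)
        self.db_kwargs = dict(db=db, user=user, passwd=passwd,
                              charset='utf8', use_unicode=True)

    @classmethod
    def from_crawler(cls, crawler):
        # Scrapy calls this hook, if present, to build the pipeline instance.
        settings = crawler.settings
        return cls(db=settings.get('MYSQL_DB', 'db'),
                   user=settings.get('MYSQL_USER', 'star'),
                   passwd=settings.get('MYSQL_PASSWD', ''))
```

This keeps credentials out of pipelines.py, so the same code can run against different databases by changing settings only.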

(2) pipelines.py

```python
# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: http://doc.scrapy.org/topics/item-pipeline.html

import logging

import MySQLdb
import MySQLdb.cursors
from twisted.enterprise import adbapi

logger = logging.getLogger(__name__)


class WooyunPipeline(object):

    def __init__(self):
        # Twisted connection pool: queries run in a thread pool so they
        # don't block the crawl. Credentials match the user created above.
        self.dbpool = adbapi.ConnectionPool(
            'MySQLdb', db='db',
            user='star', passwd='123456',
            cursorclass=MySQLdb.cursors.DictCursor,
            charset='utf8', use_unicode=True)

    def process_item(self, item, spider):
        # run db query in thread pool
        query = self.dbpool.runInteraction(self._conditional_insert, item)
        query.addErrback(self.handle_error)
        return item

    def _conditional_insert(self, tx, item):
        # Create the record only if it doesn't exist yet.
        # This whole block runs in its own thread.
        # The query parameters must be a tuple, hence the trailing comma.
        tx.execute("select * from wooyun where name = %s",
                   (item['name'][0],))
        result = tx.fetchone()
        if result:
            logger.debug("Item already stored in db: %s", item)
        else:
            tx.execute(
                "insert into wooyun (name, time, url) "
                "values (%s, %s, %s)",
                (item['name'][0],
                 item['time'][0],
                 item['url'][0]))
            logger.debug("Item stored in db: %s", item)

    def handle_error(self, failure):
        # Log database errors instead of letting them pass silently
        logger.error(failure.getTraceback())

    def close_spider(self, spider):
        # Release the pooled connections when the crawl ends
        self.dbpool.close()
```
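To try the select-then-insert logic of `_conditional_insert` without a MySQL server or Scrapy, here is a sketch that swaps in Python's built-in `sqlite3` module as a stand-in. sqlite3 uses `?` placeholders where MySQLdb uses `%s`; the `conditional_insert` function and the sample item are hypothetical. The item fields are lists, mirroring the `item['name'][0]` access in the pipeline.

```python
import sqlite3


def conditional_insert(cur, item):
    """Insert `item` unless a row with the same name exists.

    Returns True if a row was inserted, False if it was already stored.
    """
    # Parameters must be a sequence: note the trailing comma in (name,)
    cur.execute("select 1 from wooyun where name = ?", (item["name"][0],))
    if cur.fetchone():
        return False
    cur.execute(
        "insert into wooyun (name, time, url) values (?, ?, ?)",
        (item["name"][0], item["time"][0], item["url"][0]),
    )
    return True


# In-memory database with the three columns the pipeline uses
conn = sqlite3.connect(":memory:")
cur = conn.cursor()
cur.execute("create table wooyun (name text, time text, url text)")

# Hypothetical sample item, shaped like a Scrapy item with list values
item = {"name": ["demo"], "time": ["2014-01-01"], "url": ["http://example.com/1"]}
first = conditional_insert(cur, item)   # inserts the row
second = conditional_insert(cur, item)  # finds it and skips
conn.commit()
count = cur.execute("select count(*) from wooyun").fetchone()[0]
```

The one-element tuple `(name,)` matters: `(x)` is just `x`, so passing it would hand the driver a bare string instead of a parameter sequence.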
