Kubernetes核心实战

一、Namespace名称空间

1、概述

NameSpace:名称空间,用来对集群资源进行隔离划分,默认只隔离资源,不隔离网络。

Kubernetes 集群在启动后,会创建一个名为"default"的 Namespace,如果不特别指明 Namespace,则用户创建的 Pod,RC,Service 都将 被系统 创建到这个默认的名为 default 的 Namespace 中。

2、命令

法一:

#获取名称空间:

kubectl get ns

#创建名称空间:

kubectl create ns [名称空间]

#删除名称空间:

kubectl delete ns [名称空间]

法二:

#使用hello.yaml文件创建名称空间

apiVersion: v1

kind: Namespace

metadata:

name: hello

#应用配置文件:

kubectl apply -f pod.yaml

#注意:如果使用配置文件创建的名称空间,可以直接使用以下命令进行更干净的删除:

kubectl delete -f hello.yaml

二、Pod

1、概述

Pod是指运行中的一组容器,一个Pod里面可以有很多docker,Pod是Kubernetes中应用的最小单位。

在K8s中,之所以会在docker外封装pod,是因为K8s认为在很多情况下,docker都不是独立完成工作的,经常需要多个docker一起协同完成一个工作,因此,pod可以用来统一管理,即pod为K8s的最小单位。

如下图所示,我们启动一个docker,用于下载文件(File Puller),下载好的文件保存在数据卷(Volume)中,再启动一个docker,用于在web层展示下载的文件(Web Server),这时Pod的作用就显而易见了,由于这两个docker之间需要互相配合工作,因此直接在一个Pod内创建两个docker,以后只需要调用pod即可,无需去调用两个docker。

2、命令

#获取系统所有应用资源:

kubectl get pods -A

#获取系统默认名称空间(default)下的应用资源:

kubectl get pods

#获取系统指定的名称空间下的应用资源:

kubectl get pod -n [名称空间]

#获取系统默认名称空间下pod的详细应用资源:

kubectl get pod -owide

#创建pod:

法一:

kubectl run mynginx --image=nginx

#(mynginx是pod名,

#--image是使用的镜像

#)

法二:使用yaml文件创建pod:

#(

apiVersion: v1

kind: Pod

metadata:

labels:

run: mynginx

name: mynginx

# namespace: default

spec:

containers:

- image: nginx

name: mynginx

#)(metadata表述pod的数据,命名为mynginx。spec中描述的是pod内的详细相信,有一个容器,容器中有一个镜像nginx,镜像命名为mynginx)

#注意:如果使用配置文件创建的pod,可以直接使用以下命令进行更干净的删除:

kubectl delete -f pod.yaml

法三:使用deployment创建pod(有自愈能力):

#如果机器宕机,会重新启动一个pod

kubectl create deployment [pod名] --image=镜像名

#(如需多副本,在结尾加上 --replicas=数字)

#例:

#1.kubectl create deployment mytomcat --image=tomcat:8.5.68

#2.kubectl create deployment my-dep --image=nginx --replicas=3

#删除deployment的部署:

kubectl delete deploy [pod名]

#例:kubectl delete deploy mytomcat

#查看pod的描述信息:

kubectl describe pod [pod名称]

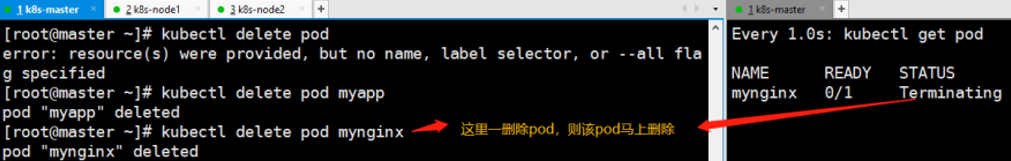

#删除pod:

kubectl delete pod [pod名称]

#(默认是删除default下的pod,如需删除其他名称空间下的pod,只需要在末尾加上:-n [ns名称])

#在可视化界面下创建pod,需要指定在哪个名称空间下

#查看pod的日志:

kubectl logs [pod名称]

#进入pod内:

kubectl exec -it [pod名] -- /bin/bash

#pod内如果有两个或以上的容器,其容器内互相访问可以直接使用127.0.0.1:端口号,进行访问。而容器外则需要使用pod的ip+容器端口号进行访问

#每隔1s监控pod情况:

watch -n 1 kubectl get pod

四、Deployment

1、概述

Deployment 是 Kubenetes v1.2 引入的新概念,工作负载,控制pod,让pod拥有多副本、自愈、扩缩容等能力,除了Deployment外,还有其他工作负载,分别是StatefulSet、DaemonSet、Job/CronJob。

Deployment:

无状态应用部署,比如微服务,提供多副本等功能

StatefulSet:

有状态应用部署,比如redis、提供稳定的存储、网络等功能

DaemonSet:

守护型应用部署,比如日志收集组件,在每个机器都运行一份

Job/CronJob:

定时任务部署,比如垃圾清理组件,可以在指定时间运行

一般开发中,我们会通过工作负载去创建pod,这里以Deployment举例演示

2、命令

使用deployment创建pod(有自愈能力):

#如果机器宕机,会重新启动一个pod

kubectl create deployment [pod名] --image=镜像名

#(如需多副本,在结尾加上 --replicas=数字)

#例:

#1.kubectl create deployment mytomcat --image=tomcat:8.5.68

#2.kubectl create deployment my-dep --image=nginx --replicas=3

#删除deployment的部署:

kubectl delete deploy [pod名]

#例:kubectl delete deploy mytomcat

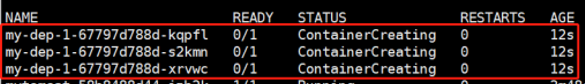

3、多副本

创建多副本

法一:命令行创建多副本

kubectl create deployment my-dep --image=nginx --replicas=3

法二:yaml文件创建多副本

apiVersion: apps/v1 #与k8s集群版本有关,使用 kubectl api-versions 即可查看当前集群支持的版本

kind: Deployment #该配置的类型,我们使用的是 Deployment

metadata: #译名为元数据,即 Deployment 的一些基本属性和信息

name: nginx-deployment #Deployment 的名称

labels: #标签,可以灵活定位一个或多个资源,其中key和value均可自定义,可以定义多组,目前不需要理解

app: nginx #为该Deployment设置key为app,value为nginx的标签

spec: #这是关于该Deployment的描述,可以理解为你期待该Deployment在k8s中如何使用

replicas: 2 #使用该Deployment创建一个应用程序实例

selector: #标签选择器,与上面的标签共同作用,目前不需要理解

matchLabels: #选择包含标签app:nginx的资源

app: nginx

template: #这是选择或创建的Pod的模板

metadata: #Pod的元数据

labels: #Pod的标签,上面的selector即选择包含标签app:nginx的Pod

app: nginx

spec: #期望Pod实现的功能(即在pod中部署)

containers: #生成container,与docker中的container是同一种

- name: nginx #container的名称

image: nginx:1.7.9 #使用镜像nginx:1.7.9创建container,该container默认80端口可访问

应用

kubectl apply -f my-dep.yaml

4、扩缩容

法一:

kubectl scale --replicas=5 deployment/my-dep

法二:

kubectl edit deployment my-dep

#修改 replicas

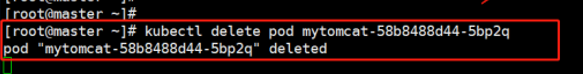

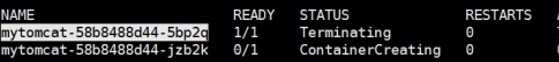

5、自愈

# 清除所有Pod ,重新以工作负载的方式创建pod

kubectl delete pod xxx

# 这里注意单纯删除以前的pod,以及创建deployment后,再删除里面的pod的区别

以前直接删除pod

# 通过deployment创建pod

kubectl create deployment mytomcat --image=tomcat

现在再删除deployment的pod

结果:删除就自动创建一个新的

6、版本更新及回退

#滚动更新:

kubectl set image deployment/my-dep nginx=nginx:1.16.1 --record

kubectl rollout status deployment/my-dep

#历史记录

kubectl rollout history deployment/my-dep

#查看某个历史详情

kubectl rollout history deployment/my-dep --revision=2

#回滚(回到上次)

kubectl rollout undo deployment/my-dep

#回滚(回到指定版本)

kubectl rollout undo deployment/my-dep --to-revision=2

总结:

kubectl create 资源 #创建任意资源

kubectl create deploy #创建部署

kubectl run #只创建一个Pod

kubectl get 资源名(node/pod/deploy) -n xxx(指定名称空间,默认是default) #获取资源

kubectl describe 资源名(node/pod/deploy) xxx #描述某个资源的详细信息

kubectl logs 资源名 ##查看日志

kubectl exec -it pod名 -- 命令 #进pod并执行命令

kubectl delete 资源名(node/pod/deploy) xxx #删除资源

五、Service的介绍

Service 是 Kubernetes 最核心概念,通过创建 Service,可以为一组具有相同功能的容器应用提供一个统一的入口地 址,并且将请求负载分发到后端的各个容器应用上。

#暴露Deploy

kubectl expose deployment my-dep --port=8000 --target-port=80

#使用标签检索Pod

kubectl get pod -l app=my-dep

#yaml文件暴露

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

selector:

app: my-dep

ports:

- port: 8000

protocol: TCP

targetPort: 80

#获取service

kubectl get service

通过修改各个pod中nginx默认页位置/usr/share/nginx/html下的index.html文件,可以直接通过Deployment的ip加端口号进行访问。

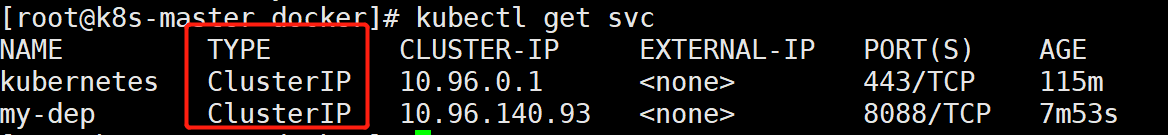

1、ClusterIP

ClusterIP模式,集群内可以访问,但集群外无法访问。

ClusterIP是Service的默认类型,即没有指定type时,默认为ClusterIP模式。

# 等同于没有--type的

kubectl expose deployment my-dep --port=8000 --target-port=80 --type=ClusterIP

yaml文件

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

ports:

- port: 8000

protocol: TCP

targetPort: 80

selector:

app: my-dep

type: ClusterIP

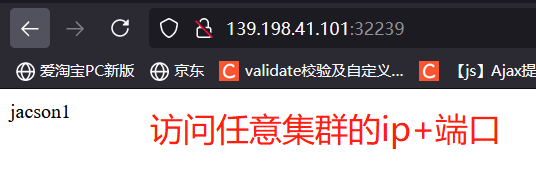

2、NodePort

NodePort模式,集群内可以访问,集群外也可以访问。

若需要NodePort模式,则需要暴露时指定type。

kubectl expose deployment my-dep --port=8000 --target-port=80 --type=NodePort

yaml文件

apiVersion: v1

kind: Service

metadata:

labels:

app: my-dep

name: my-dep

spec:

ports:

- port: 8000

protocol: TCP

targetPort: 80

selector:

app: my-dep

type: NodePort

六、Ingress

1、概述

Ingress 公开了从集群外部到集群内服务的 HTTP 和 HTTPS 路由。流量路由由 Ingress 资源上定义的规则控制。简单来说,Ingress是一个调度Service的控制器。

2、安装

法一:

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v0.47.0/deploy/static/provider/baremetal/deploy.yaml

#修改镜像

vi deploy.yaml

#将image的值改为如下值:

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/ingress-nginx-controller:v0.46.0

# 检查安装的结果

kubectl get pod,svc -n ingress-nginx

# 最后别忘记把svc暴露的端口要放行

法二:推荐

apiVersion: v1

kind: Namespace

metadata:

name: ingress-nginx

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

---

# Source: ingress-nginx/templates/controller-serviceaccount.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx

namespace: ingress-nginx

automountServiceAccountToken: true

---

# Source: ingress-nginx/templates/controller-configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller

namespace: ingress-nginx

data:

---

# Source: ingress-nginx/templates/clusterrole.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

name: ingress-nginx

rules:

- apiGroups:

- ''

resources:

- configmaps

- endpoints

- nodes

- pods

- secrets

verbs:

- list

- watch

- apiGroups:

- ''

resources:

- nodes

verbs:

- get

- apiGroups:

- ''

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- extensions

- networking.k8s.io # k8s 1.14+

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- ''

resources:

- events

verbs:

- create

- patch

- apiGroups:

- extensions

- networking.k8s.io # k8s 1.14+

resources:

- ingresses/status

verbs:

- update

- apiGroups:

- networking.k8s.io # k8s 1.14+

resources:

- ingressclasses

verbs:

- get

- list

- watch

---

# Source: ingress-nginx/templates/clusterrolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

name: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: ingress-nginx

subjects:

- kind: ServiceAccount

name: ingress-nginx

namespace: ingress-nginx

---

# Source: ingress-nginx/templates/controller-role.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx

namespace: ingress-nginx

rules:

- apiGroups:

- ''

resources:

- namespaces

verbs:

- get

- apiGroups:

- ''

resources:

- configmaps

- pods

- secrets

- endpoints

verbs:

- get

- list

- watch

- apiGroups:

- ''

resources:

- services

verbs:

- get

- list

- watch

- apiGroups:

- extensions

- networking.k8s.io # k8s 1.14+

resources:

- ingresses

verbs:

- get

- list

- watch

- apiGroups:

- extensions

- networking.k8s.io # k8s 1.14+

resources:

- ingresses/status

verbs:

- update

- apiGroups:

- networking.k8s.io # k8s 1.14+

resources:

- ingressclasses

verbs:

- get

- list

- watch

- apiGroups:

- ''

resources:

- configmaps

resourceNames:

- ingress-controller-leader-nginx

verbs:

- get

- update

- apiGroups:

- ''

resources:

- configmaps

verbs:

- create

- apiGroups:

- ''

resources:

- events

verbs:

- create

- patch

---

# Source: ingress-nginx/templates/controller-rolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx

namespace: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: ingress-nginx

subjects:

- kind: ServiceAccount

name: ingress-nginx

namespace: ingress-nginx

---

# Source: ingress-nginx/templates/controller-service-webhook.yaml

apiVersion: v1

kind: Service

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller-admission

namespace: ingress-nginx

spec:

type: ClusterIP

ports:

- name: https-webhook

port: 443

targetPort: webhook

selector:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

---

# Source: ingress-nginx/templates/controller-service.yaml

apiVersion: v1

kind: Service

metadata:

annotations:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller

namespace: ingress-nginx

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: http

- name: https

port: 443

protocol: TCP

targetPort: https

selector:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

---

# Source: ingress-nginx/templates/controller-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: controller

name: ingress-nginx-controller

namespace: ingress-nginx

spec:

selector:

matchLabels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

revisionHistoryLimit: 10

minReadySeconds: 0

template:

metadata:

labels:

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/component: controller

spec:

dnsPolicy: ClusterFirst

containers:

- name: controller

image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/ingress-nginx-controller:v0.46.0

imagePullPolicy: IfNotPresent

lifecycle:

preStop:

exec:

command:

- /wait-shutdown

args:

- /nginx-ingress-controller

- --election-id=ingress-controller-leader

- --ingress-class=nginx

- --configmap=$(POD_NAMESPACE)/ingress-nginx-controller

- --validating-webhook=:8443

- --validating-webhook-certificate=/usr/local/certificates/cert

- --validating-webhook-key=/usr/local/certificates/key

securityContext:

capabilities:

drop:

- ALL

add:

- NET_BIND_SERVICE

runAsUser: 101

allowPrivilegeEscalation: true

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

- name: LD_PRELOAD

value: /usr/local/lib/libmimalloc.so

livenessProbe:

failureThreshold: 5

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

readinessProbe:

failureThreshold: 3

httpGet:

path: /healthz

port: 10254

scheme: HTTP

initialDelaySeconds: 10

periodSeconds: 10

successThreshold: 1

timeoutSeconds: 1

ports:

- name: http

containerPort: 80

protocol: TCP

- name: https

containerPort: 443

protocol: TCP

- name: webhook

containerPort: 8443

protocol: TCP

volumeMounts:

- name: webhook-cert

mountPath: /usr/local/certificates/

readOnly: true

resources:

requests:

cpu: 100m

memory: 90Mi

nodeSelector:

kubernetes.io/os: linux

serviceAccountName: ingress-nginx

terminationGracePeriodSeconds: 300

volumes:

- name: webhook-cert

secret:

secretName: ingress-nginx-admission

---

# Source: ingress-nginx/templates/admission-webhooks/validating-webhook.yaml

# before changing this value, check the required kubernetes version

# https://kubernetes.io/docs/reference/access-authn-authz/extensible-admission-controllers/#prerequisites

apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingWebhookConfiguration

metadata:

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

name: ingress-nginx-admission

webhooks:

- name: validate.nginx.ingress.kubernetes.io

matchPolicy: Equivalent

rules:

- apiGroups:

- networking.k8s.io

apiVersions:

- v1beta1

operations:

- CREATE

- UPDATE

resources:

- ingresses

failurePolicy: Fail

sideEffects: None

admissionReviewVersions:

- v1

- v1beta1

clientConfig:

service:

namespace: ingress-nginx

name: ingress-nginx-controller-admission

path: /networking/v1beta1/ingresses

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/serviceaccount.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: ingress-nginx-admission

annotations:

helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgrade

helm.sh/hook-delete-policy: before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

namespace: ingress-nginx

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/clusterrole.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:

name: ingress-nginx-admission

annotations:

helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgrade

helm.sh/hook-delete-policy: before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

rules:

- apiGroups:

- admissionregistration.k8s.io

resources:

- validatingwebhookconfigurations

verbs:

- get

- update

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/clusterrolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

name: ingress-nginx-admission

annotations:

helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgrade

helm.sh/hook-delete-policy: before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: ingress-nginx-admission

subjects:

- kind: ServiceAccount

name: ingress-nginx-admission

namespace: ingress-nginx

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/role.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:

name: ingress-nginx-admission

annotations:

helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgrade

helm.sh/hook-delete-policy: before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

namespace: ingress-nginx

rules:

- apiGroups:

- ''

resources:

- secrets

verbs:

- get

- create

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/rolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:

name: ingress-nginx-admission

annotations:

helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgrade

helm.sh/hook-delete-policy: before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

namespace: ingress-nginx

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: ingress-nginx-admission

subjects:

- kind: ServiceAccount

name: ingress-nginx-admission

namespace: ingress-nginx

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/job-createSecret.yaml

apiVersion: batch/v1

kind: Job

metadata:

name: ingress-nginx-admission-create

annotations:

helm.sh/hook: pre-install,pre-upgrade

helm.sh/hook-delete-policy: before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

namespace: ingress-nginx

spec:

template:

metadata:

name: ingress-nginx-admission-create

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

spec:

containers:

- name: create

image: docker.io/jettech/kube-webhook-certgen:v1.5.1

imagePullPolicy: IfNotPresent

args:

- create

- --host=ingress-nginx-controller-admission,ingress-nginx-controller-admission.$(POD_NAMESPACE).svc

- --namespace=$(POD_NAMESPACE)

- --secret-name=ingress-nginx-admission

env:

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

restartPolicy: OnFailure

serviceAccountName: ingress-nginx-admission

securityContext:

runAsNonRoot: true

runAsUser: 2000

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/job-patchWebhook.yaml

apiVersion: batch/v1

kind: Job

metadata:

name: ingress-nginx-admission-patch

annotations:

helm.sh/hook: post-install,post-upgrade

helm.sh/hook-delete-policy: before-hook-creation,hook-succeeded

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

namespace: ingress-nginx

spec:

template:

metadata:

name: ingress-nginx-admission-patch

labels:

helm.sh/chart: ingress-nginx-3.33.0

app.kubernetes.io/name: ingress-nginx

app.kubernetes.io/instance: ingress-nginx

app.kubernetes.io/version: 0.47.0

app.kubernetes.io/managed-by: Helm

app.kubernetes.io/component: admission-webhook

spec:

containers:

- name: patch

image: docker.io/jettech/kube-webhook-certgen:v1.5.1

imagePullPolicy: IfNotPresent

args:

- patch

- --webhook-name=ingress-nginx-admission

- --namespace=$(POD_NAMESPACE)

- --patch-mutating=false

- --secret-name=ingress-nginx-admission

- --patch-failure-policy=Fail

env:

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

restartPolicy: OnFailure

serviceAccountName: ingress-nginx-admission

securityContext:

runAsNonRoot: true

runAsUser: 2000

# 应用yaml文件

kubectl apply -f ingress.yaml

3、测试

官网地址:https://kubernetes.github.io/ingress-nginx/

就是nginx做的

https://139.198.38.94:30427/

http://139.198.38.94:32453/

IP访问测试

apiVersion: apps/v1

kind: Deployment

metadata:

name: hello-server

spec:

replicas: 2

selector:

matchLabels:

app: hello-server

template:

metadata:

labels:

app: hello-server

spec:

containers:

- name: hello-server

image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/hello-server

ports:

- containerPort: 9000

---

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: nginx-demo

name: nginx-demo

spec:

replicas: 2

selector:

matchLabels:

app: nginx-demo

template:

metadata:

labels:

app: nginx-demo

spec:

containers:

- image: nginx

name: nginx

---

apiVersion: v1

kind: Service

metadata:

labels:

app: nginx-demo

name: nginx-demo

spec:

selector:

app: nginx-demo

ports:

- port: 8000

protocol: TCP

targetPort: 80

---

apiVersion: v1

kind: Service

metadata:

labels:

app: hello-server

name: hello-server

spec:

selector:

app: hello-server

ports:

- port: 8000

protocol: TCP

targetPort: 9000

域名访问测试

#yaml文件配置域名访问规则

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: ingress-host-bar

spec:

ingressClassName: nginx

rules:

- host: "hello.tt.com"

http:

paths:

- pathType: Prefix

path: "/"

backend:

service:

name: hello-server

port:

number: 8000

- host: "demo.tt.com"

http:

paths:

- pathType: Prefix

# 把请求会转给下面的服务,下面的服务一定要能处理这个路径,不能处理就是404

path: "/nginx"

backend:

service:

name: nginx-demo

port:

number: 8000

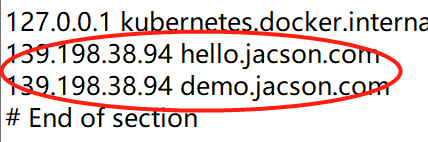

注意:这里应该在自己的主机配置好host域名规则,win10系统可以找到“C:\Windows\System32\drivers\etc” 该路径下,修改“hosts”文件,参考下图

路径重写

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

nginx.ingress.kubernetes.io/rewrite-target: /$2

name: ingress-host-bar

spec:

ingressClassName: nginx

rules:

- host: "hello.atguigu.com"

http:

paths:

- pathType: Prefix

path: "/"

backend:

service:

name: hello-server

port:

number: 8000

- host: "demo.atguigu.com"

http:

paths:

- pathType: Prefix

path: "/nginx(/|$)(.*)" # 把请求会转给下面的服务,下面的服务一定要能处理这个路径,不能处理就是404

backend:

service:

name: nginx-demo ## java,比如使用路径重写,去掉前缀nginx

port:

number: 8000

流量限制

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

name: ingress-limit-rate

annotations:

nginx.ingress.kubernetes.io/limit-rps: "1"

spec:

ingressClassName: nginx

rules:

- host: "haha.atguigu.com"

http:

paths:

- pathType: Exact

path: "/"

backend:

service:

name: nginx-demo

port:

number: 8000

七、存储抽象

存储抽象,即将存储单独用一个服务去联系起来。以前我们习惯把各个pod的卷挂载到其主机上的某个位置,但k8s的集群特点之一是master节点的随机分配,若有一个pod宕机,k8s会自动创建一个新的pod,当此时若新的pod没有分配到原先的节点上,则原理与本机挂载的文件将作废,导致数据丢失。为了解决这个问题,我们需要有一个统一的存储服务,来管理整个集群常见的存储框架有Glusterfs、NFS、CephFS等

1、环境准备

#所有机器安装

yum install -y nfs-utils

主节点

#nfs主节点

echo "/nfs/data/ *(insecure,rw,sync,no_root_squash)" > /etc/exports

mkdir -p /nfs/data

systemctl enable rpcbind --now

systemctl enable nfs-server --now

#配置生效

exportfs -r

从节点

showmount -e 172.31.0.4

#执行以下命令挂载 nfs 服务器上的共享目录到本机路径 /root/nfsmount

mkdir -p /nfs/data

mount -t nfs 172.31.0.4:/nfs/data /nfs/data

# 写入一个测试文件

echo "hello nfs server" > /nfs/data/test.txt

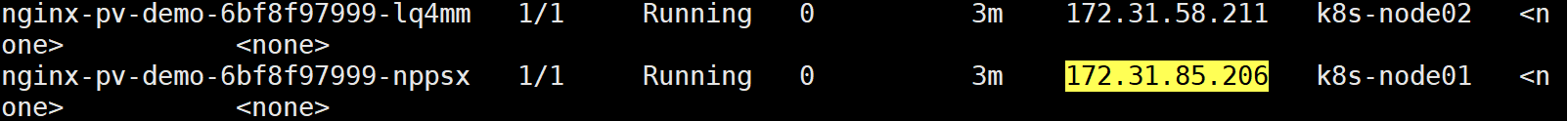

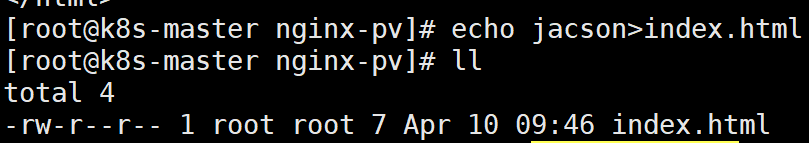

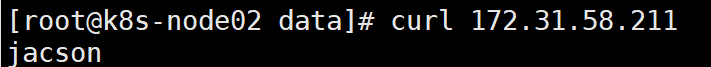

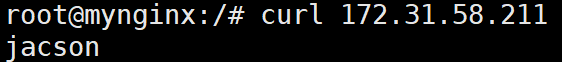

原生方式数据挂载

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: nginx-pv-demo

name: nginx-pv-demo

spec:

replicas: 2

selector:

matchLabels:

app: nginx-pv-demo

template:

metadata:

labels:

app: nginx-pv-demo

spec:

containers:

- image: nginx

name: nginx

volumeMounts:

- name: html

mountPath: /usr/share/nginx/html

volumes:

- name: html

nfs:

server: 172.31.0.4

path: /nfs/data/nginx-pv

2、PV&PVC

PV:持久卷(Persistent Volume),将应用需要持久化的数据保存到指定位置

PVC:持久卷申明(Persistent Volume Claim),申明需要使用的持久卷规格

简单来说,不同于以往的指定挂载目录,PV方式的挂载,这里以静态供应为例,会先创建几个固定大小的PV池,比如10MB、1GB、10GB等,然后通过PVC绑定,在创建Pod的时候绑定PVC,则NFS会根据PVC的需求,自动找到PV池中最接近大小的文件目录进行挂载

创建pv池

静态供应

#nfs主节点

mkdir -p /nfs/data/01

mkdir -p /nfs/data/02

mkdir -p /nfs/data/03

创建pv

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv01-10m

spec:

capacity:

storage: 10M

accessModes:

- ReadWriteMany

storageClassName: nfs

nfs:

path: /nfs/data/01

server: 172.31.0.4

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv02-1gi

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteMany

storageClassName: nfs

nfs:

path: /nfs/data/02

server: 172.31.0.4

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: pv03-3gi

spec:

capacity:

storage: 3Gi

accessModes:

- ReadWriteMany

storageClassName: nfs

nfs:

path: /nfs/data/03

server: 172.31.0.4

PVC创建与绑定

#创建pvc

kind: PersistentVolumeClaim

apiVersion: v1

metadata:

name: nginx-pvc

spec:

accessModes:

- ReadWriteMany

resources:

requests:

storage: 200Mi

storageClassName: nfs

#创建Pod绑定PVC

apiVersion: apps/v1

kind: Deployment

metadata:

labels:

app: nginx-deploy-pvc

name: nginx-deploy-pvc

spec:

replicas: 2

selector:

matchLabels:

app: nginx-deploy-pvc

template:

metadata:

labels:

app: nginx-deploy-pvc

spec:

containers:

- image: nginx

name: nginx

volumeMounts:

- name: html

mountPath: /usr/share/nginx/html

volumes:

- name: html

persistentVolumeClaim:

claimName: nginx-pvc

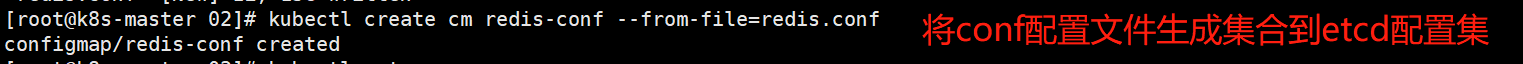

3、ConfigMap

ConfigMap,就是抽取应用配置,以key-value的形式生成一个配置集,方便修改并且可以自动更新。

# 创建一个简单的redis配置文件

vi redis.conf

# 内容

appendonly yes

# 保存退出,创建配置,将redis保存到K8s的etcd中

kubectl create cm redis-conf --from-file=redis.conf

# 查看当前的所有ConfigMap

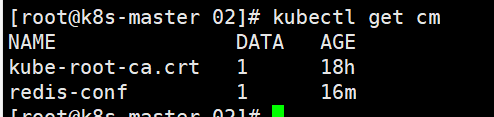

kubectl get cm

# 查看当前cm的yaml文件

kubectl get cm redis-conf -oyaml

# 创建pod

# 这里简单演示,直接创建pod

# yaml

apiVersion: v1

kind: Pod

metadata:

name: redis

spec:

containers:

- name: redis

image: redis

command:

- redis-server

- "/redis-master/redis.conf" #指的是redis容器内部的位置

ports:

- containerPort: 6379

volumeMounts:

- mountPath: /data

name: data

- mountPath: /redis-master

name: config

volumes:

- name: data

emptyDir: {}

- name: config

configMap:

name: redis-conf

items:

- key: redis.conf

path: redis.conf

# 检查默认配置

kubectl exec -it redis -- redis-cli

127.0.0.1:6379> CONFIG GET appendonly

127.0.0.1:6379> CONFIG GET requirepass

# 修改ConfigMap

apiVersion: v1

kind: ConfigMap

metadata:

name: example-redis-config

data:

redis-config: |

maxmemory 2mb

maxmemory-policy allkeys-lru

# 检查配置是否更新

kubectl exec -it redis -- redis-cli

127.0.0.1:6379> CONFIG GET maxmemory

127.0.0.1:6379> CONFIG GET maxmemory-policy

# 这里需要重新启动pod才会从关联的ConfigMap中获取更新的值

配置值未更改,因为需要重新启动 Pod 才能从关联的 ConfigMap 中获取更新的值。

原因:我们的Pod部署的中间件自己本身没有热更新能力。

4、Secret

ConfigMap保存的是配置信息,而Secret对象类型用来保存敏感信息,例如密码、OAuth令牌和SSH密钥。

kubectl create secret docker-registry leifengyang-docker \

--docker-username=leifengyang \

--docker-password=Lfy123456 \

--docker-email=534096094@qq.com

##命令格式

kubectl create secret docker-registry regcred \

--docker-server=<你的镜像仓库服务器> \

--docker-username=<你的用户名> \

--docker-password=<你的密码> \

--docker-email=<你的邮箱地址>

apiVersion: v1

kind: Pod

metadata:

name: private-nginx

spec:

containers:

- name: private-nginx

image: leifengyang/guignginx:v1.0

imagePullSecrets:

- name: leifengyang-docker

个人学习知识点总结:

域名:

服务名.所在名称空间.svc

例子:my-dep.default.svc

在容器内可以直接使用curl 域名:service端口号访问

获取集群种所有的资源:kubectl get all -A

获取k8s的所有资源:kubectl api-resources

获取pod的镜像:kubectl get pod my-nginx2-78sdf797a9-a7997 -o yaml | grep image

滚动跟新:kubectl set image deploy my-nginx2 nginx=nginx:1.9.1 (--recode=true)

!!!写yaml文件部署应用

使用yaml编写任意源的pod

kubectl run my-tomcat --image=tomcat --dry-run -oyalm

不同名称空间实现资源隔离,但是不同名称空间可以网络通信

解释yaml文件中的container的解释

例子:kubectl explain pod.spec.container

从私有仓库下载镜像需要密钥,密钥需要指定在哪个名称空间下使用

给节点打标签:kubectl label node k8s-node2 disketype=ssd

给节点删除标签:kubectl label node k8s-node2 disketype-

污点是在节点上的,而容忍是在pod上

给节点添加污点:kubectl taint node k8s-node2 haha=hehe:NoSchedule

删除节点的污点:kubectl taint node k8s-node2 haha-

(可以不写值和效果)

使用此命令可以检测单词是否写错

kubectl apply --validate -f mypod.yaml

查看pod的yaml

kubectl get pods/mypod -o yaml

通过如下命令可以查看 endpoints 资源:

kubectl get endpoints ${SERVICE_NAME}

(确保 Endpoints 与服务成员 Pod 个数一致。 例如,如果你的 Service 用来运行 3 个副本的 nginx 容器,你应该会在 Service 的 Endpoints 中看到 3 个不同的 IP 地址。)

创建deployment的service

kubectl expose deployment hostnames --port=80 --target-port=9376

ReplicaSet 的目的是维护一组在任何时候都处于运行状态的 Pod 副本的稳定集合。

和 Deployment 不同的是, StatefulSet 为它们的每个 Pod 维护了一个有粘性的 ID。这些 Pod 是基于相同的规约来创建的, 但是不能相互替换:无论怎么调度,每个 Pod 都有一个永久不变的 ID。

为deployment创建service:

kubectl expose deployment/my-nginx

volumeMounts是容器内的路径

volumes是宿主机上的路径

创建role,具有ingress,service资源,以及list,watch,create,update,delete动作。

kubectl create role rolename --resource=ingress,service --verb list,watch,create,update,delete --dry-run=client -o yaml

查看api:kubectl get --raw

查找指定api并且格式化:kubectl get --raw /apis/apps/1 | python -m json.tool

k8s:多副本、自愈、扩缩容

ingress:域名访问,如果访问的是/nginx,则找到service下的nginx才能处理,否则无法处理

通过service域名访问,只限制在其资源内访问,而不能在主机访问

手动指定endpoint,节点重启后,endpoint无效

ingress主要就是写annotation、rules规则以及spec中的tls,映射到service

kube-proxy,负责进行流量转发

查看所有名称空间的 Deployment

kubectl get deployments -A

#查看名称为nginx-XXXXXX的Pod的信息

kubectl describe pod nginx-XXXXXX

#查看名称为nginx-pod-XXXXXXX的Pod内的容器打印的日志

#本案例中的 nginx-pod 没有输出日志,所以您看到的结果是空的

kubectl logs -f nginx-pod-XXXXXXX

编写yaml文件的方法

kubectl run my-tomcat --image=tomcat --dry-run -oyaml 干跑一遍

kubelet额外参数配置 /etc/sysconfig/kubelet;kubelet配置位置 /var/lib/kubelet/config.yaml

静态pod:在 /etc/kubernetes/manifests 位置放的所有Pod.yaml文件,机器启动kubelet自己就把他启动起来。

启动探针、存活探针、就绪探针

Service 创建完成后,会对应一组EndPoint。可以kubectl get ep 进行查看

service无非就是哪几种type的区别,以及选择器写与不写

ingress主要是以域名访问service,包括最基本的配置,默认后端、路径重写,配置ssl,证书;其次可以通过注解来设定限速、亲和性

如果一个 NetworkPolicy 的标签选择器选中了某个 Pod,则该 Pod 将变成隔离的(isolated),并将拒绝任何不被 NetworkPolicy 许可的网络连接。

存储主要有持久卷以及临时卷以及secret和configmap这两种存储

持久卷:1、nfs;2、pv&pvc

重点:如何再kubeshere中部署应用

1、创建配置

2、创建服务

kubekey生产配置文件:./kk create config -f config.yaml

添加集群节点:1、修改config.yaml文件;2、执行 ./kk add nodes -f config.yaml

删除集群节点:./kk delete node node3 -f config.yaml

查看集群资源的:kubectl api-resources -owide

pod有亲和、反亲和,而node只有亲和

容器下的/etc/localtime是存储日期的文件,主机则是在/usr/share/zoneinfo/Asia/Shanghai

nginx的页面在/usr/share/nginx/html

731

731

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?