目录

AdaBoost.M2出处

本文参考paper《Experiments with a New Boosting Algorithm》

Experiments with a New Boosting Algorithm论文地址

论文指出AdaBoost.M1的主要缺点:

The main disadvantage of AdaBoost.M1 is that it is unable to handle weak hypotheses with error greater than 1/2.when class num > 2, the requirement that the error be less than 1/2 is quite strong and may often be hard to meet.

AdaBoost.M1不能够处理错误率大于0.5的弱假设,并且现实中要求错误率大于0.5是很难满足的强假设。

AdaBoost.M解决AdaBoost.M1缺点的方式:

Method:extending the communication between the boosting algorithm and the weak learner.

Advantage :

the boosting algorithm can focus the weak learner not only on hard-to-classify examples, but more specifically, on the incorrect labels that are hardest to discriminate.(主要因为使用了伪标签、模糊的分数和使用伪损失)

具体的Methods:

1. give the weak learning algorithm more expressive power

a. allow the weak learner to generate more expressive hypotheses, which, rather than identifying a single label in Y, instead choose a set of “plausible” labels.This may often be easier than choosing just one label.(生成一个似是而非的标签,例如手写数字,生成7和9两个标签)

b.allow the weak learner to indicate a “degree of plausibility.” Thus, each weak hypothesis outputs a kdim [0, 1] vector.(学习器可以输出一个非概率值的处于[0, 1]之间的的似乎可信的值。)

2.place a more complex requirement on the performance of the weak hypotheses.Rather than using the usual prediction error, we ask that the weak hypotheses do well with respect to a more sophisticated error measure that we call the pseudo-loss(不使用M1使用的错误率,而是使用“伪损失”。)

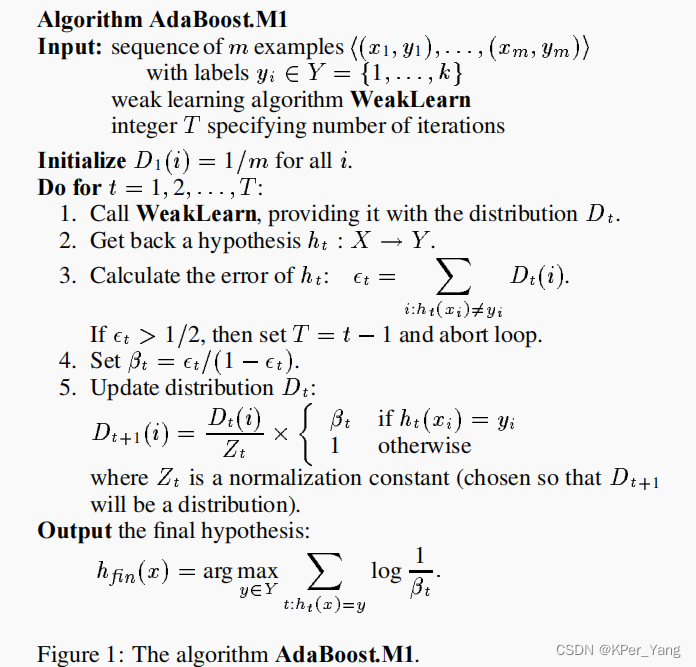

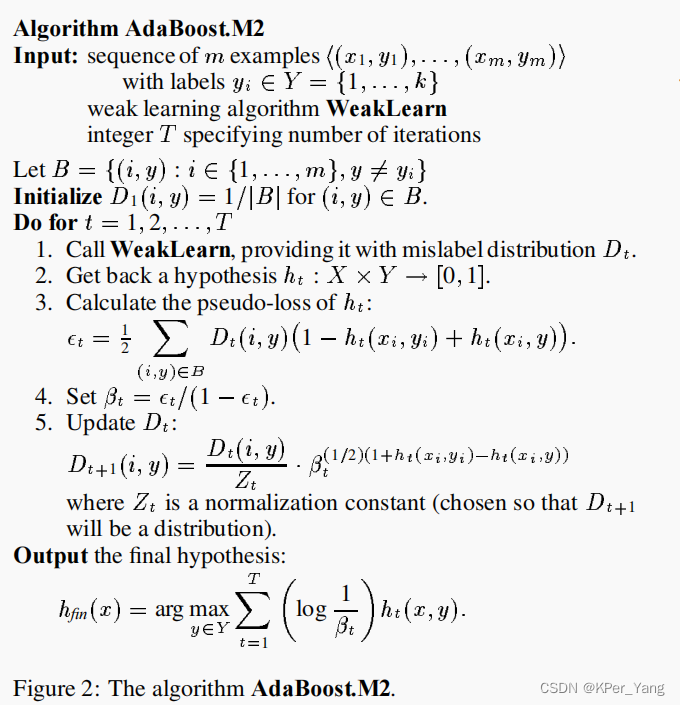

M1和M2的算法流程对比:

总结共同点和区别:

-

M1和M2都给难分类的样本更大的权重,但是M2不仅关注难样本,还关注难区分的标签。

-

M2使用一种新的loss,M1使用错误率。

-

M2对于分类器的输出使用伪标签和模糊的分数值。

2291

2291

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?