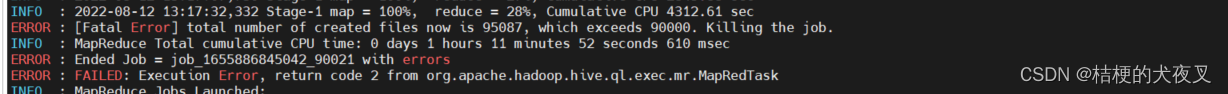

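When Hive inserts data from one table into another, partitioned table, the job often fails with an error saying that the number of created files exceeds the limit.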

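Solution: set hive.exec.max.created.files=900000; to raise the maximum number of files a job may create (the default is 100000).

Adding the following settings, which also combine small input splits, merge small output files, and raise the dynamic partition limits, resolves it: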

set hive.input.format=org.apache.hadoop.hive.ql.io.CombineHiveInputFormat;

set mapred.max.split.size=256000000; -- 256M

set mapred.min.split.size.per.node=100000000; -- 100M

set mapred.min.split.size.per.rack=100000000; -- 100M

set hive.merge.mapfiles = true;

set hive.merge.mapredfiles = true;

set hive.merge.size.per.task = 256000000; -- 256M

set hive.merge.smallfiles.avgsize=16000000; -- 16M

set mapreduce.map.memory.mb=10240;

set mapreduce.reduce.memory.mb=10240;

set mapred.reduce.slowstart.completed.maps=1;

set hive.exec.dynamic.partition.mode=nonstrict;

set hive.exec.max.dynamic.partitions.pernode=20000;

set hive.exec.max.dynamic.partitions=20000;

set hive.exec.max.created.files=900000;
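
As a usage sketch, the settings are issued in the same session as the insert itself. The table names src_table and target_table, the columns id and name, and the partition column dt are hypothetical. The hive.exec.dynamic.partition setting and the DISTRIBUTE BY clause are not part of the list above; they are assumptions added for illustration. DISTRIBUTE BY sends all rows of one partition to the same reducer, which tends to reduce the number of files written.

```sql
-- Hypothetical tables and columns, for illustration only.

-- Assumption: not in the original list; enables dynamic partition inserts.
set hive.exec.dynamic.partition=true;
set hive.exec.dynamic.partition.mode=nonstrict;
set hive.exec.max.dynamic.partitions.pernode=20000;
set hive.exec.max.dynamic.partitions=20000;
set hive.exec.max.created.files=900000;

-- The dynamic partition column (dt) must be the last column in the SELECT list.
-- Assumption: DISTRIBUTE BY is an optional addition, so that each partition
-- is written by a single reducer instead of by many tasks.
INSERT OVERWRITE TABLE target_table PARTITION (dt)
SELECT id, name, dt
FROM src_table
DISTRIBUTE BY dt;
```

If a few partitions hold most of the rows, distributing by the partition column alone can overload individual reducers, so this part may need adjusting for skewed data.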
