Building a Redis Cluster

{{Building a Highly Available Redis Cluster}}

Deleting the Redis Cluster

1. Stop all Redis services

➜ bin ps -ef | grep redis

root 8581 1 0 17:18 ? 00:00:07 ./redis-server *:7010 [cluster]

root 8583 1 0 17:18 ? 00:00:07 ./redis-server *:7020 [cluster]

root 8585 1 0 17:18 ? 00:00:07 ./redis-server *:7030 [cluster]

root 8593 1 0 17:18 ? 00:00:07 ./redis-server *:7011 [cluster]

root 8601 1 0 17:18 ? 00:00:07 ./redis-server *:7012 [cluster]

root 8606 1 0 17:18 ? 00:00:07 ./redis-server *:7021 [cluster]

root 8611 1 0 17:18 ? 00:00:07 ./redis-server *:7022 [cluster]

root 8613 1 0 17:18 ? 00:00:07 ./redis-server *:7031 [cluster]

root 8615 1 0 17:18 ? 00:00:20 ./redis-server *:7032 [cluster]

➜ bin kill -9 8581

➜ bin kill -9 8583

➜ bin kill -9 8585

➜ bin kill -9 8593

➜ bin kill -9 8601

➜ bin kill -9 8606

➜ bin kill -9 8611

➜ bin kill -9 8613

➜ bin kill -9 8615

➜ bin ps -ef | grep redis

➜ bin

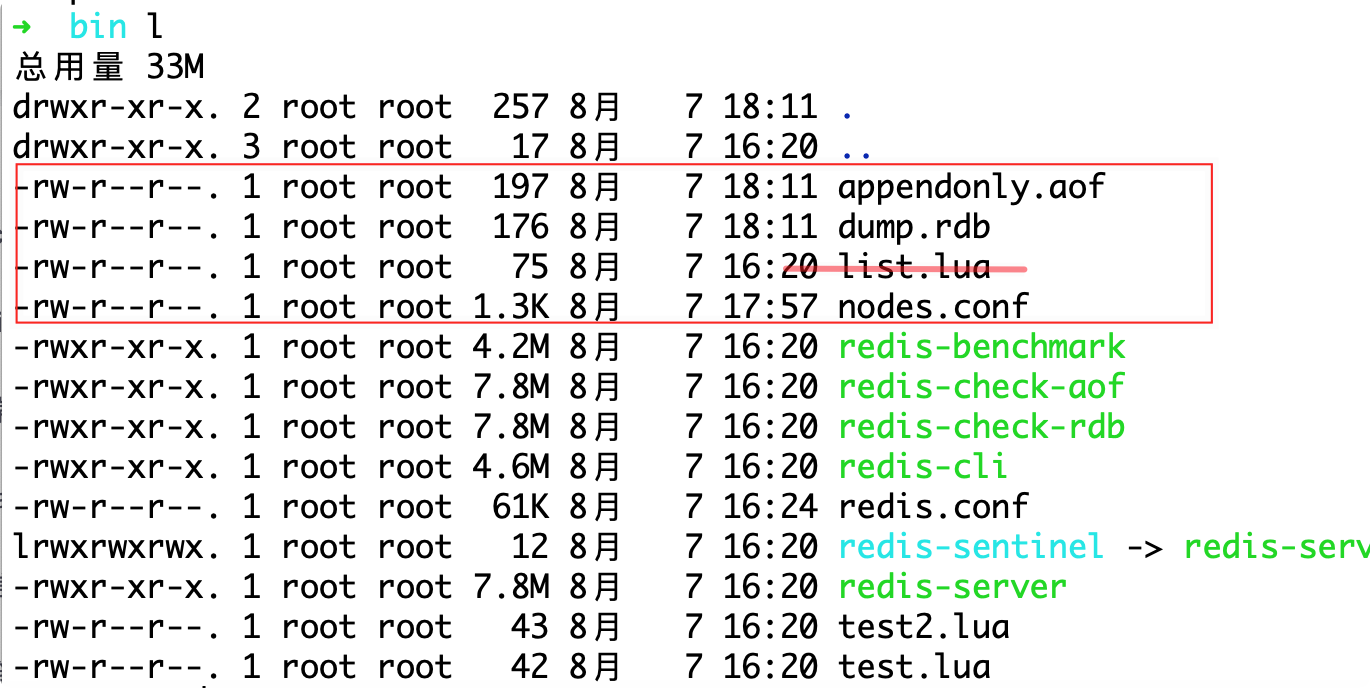
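The nine PIDs above were passed to `kill -9` one at a time. A minimal sketch that collects them automatically is shown below. It assumes `ps -ef` output where the second column is the PID, and the function name `redis_cluster_pids` is hypothetical. A plain `kill` (SIGTERM) lets Redis shut down cleanly, while `kill -9` (SIGKILL) stops it immediately; because the data files are deleted in the next step, either is acceptable here.

```shell
# Hypothetical helper: read `ps -ef` output on stdin and print the PIDs
# (column 2) of redis-server processes running in cluster mode.
redis_cluster_pids() {
  # The process title looks like "./redis-server *:7010 [cluster]".
  # Requiring "redis-server" skips the "grep redis" line itself.
  awk '/redis-server/ && /\[cluster\]/ { print $2 }'
}

# Usage (GNU xargs: -r skips kill when no PID is found):
#   ps -ef | redis_cluster_pids | xargs -r kill
```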

2. Delete all cluster and data files

# In each Redis instance's bin directory, delete:

dump.rdb

appendonly.aof

nodes.conf

This can be done with a shell script:

vim delete.sh

# Add the following content

cd redis-m1-7010/bin

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-m2-7020/bin

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-m3-7030/bin

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-s11-7011/bin/

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-s12-7012/bin/

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-s21-7021/bin/

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-s22-7022/bin/

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-s31-7031/bin/

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

cd redis-s32-7032/bin/

rm appendonly.aof

rm dump.rdb

rm nodes.conf

cd ../../

# Give the owner execute permission (optional here, since the script is run with sh)

chmod u+x delete.sh

# Run the deletion

sh delete.sh
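The nine near-identical blocks in delete.sh can also be written as a loop. This sketch is hypothetical: it assumes every instance folder matches `redis-*-70NN` (redis-m1-7010, redis-s11-7011, ...) and sits under one base directory, and it uses `rm -f` so that a missing file, for example appendonly.aof when AOF is disabled, does not print an error.

```shell
# Hypothetical loop version of delete.sh.
# $1: directory containing the redis-* instance folders (default: current dir).
clean_cluster_files() {
  base=${1:-.}
  for dir in "$base"/redis-*-70[0-9][0-9]/bin; do
    [ -d "$dir" ] || continue   # skip when the glob matched nothing
    # -f: no error if a file is already absent
    rm -f "$dir/dump.rdb" "$dir/appendonly.aof" "$dir/nodes.conf"
  done
}
```

If the folders live under /usr/redis-cluster, as the `cd` in the create step suggests, the call would be `clean_cluster_files /usr/redis-cluster`.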

Rebuilding the Redis Cluster

Start all Redis instances

# Run the start.sh script written during the original setup

sh start.sh

➜ redis-cluster sh start.sh

9186:C 07 Aug 2021 18:39:08.394 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9186:C 07 Aug 2021 18:39:08.394 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9186, just started

9186:C 07 Aug 2021 18:39:08.394 # Configuration loaded

9188:C 07 Aug 2021 18:39:08.400 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9188:C 07 Aug 2021 18:39:08.400 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9188, just started

9188:C 07 Aug 2021 18:39:08.400 # Configuration loaded

9190:C 07 Aug 2021 18:39:08.406 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9190:C 07 Aug 2021 18:39:08.406 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9190, just started

9190:C 07 Aug 2021 18:39:08.406 # Configuration loaded

9195:C 07 Aug 2021 18:39:08.411 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9195:C 07 Aug 2021 18:39:08.411 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9195, just started

9195:C 07 Aug 2021 18:39:08.411 # Configuration loaded

9200:C 07 Aug 2021 18:39:08.417 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9200:C 07 Aug 2021 18:39:08.417 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9200, just started

9200:C 07 Aug 2021 18:39:08.417 # Configuration loaded

9202:C 07 Aug 2021 18:39:08.423 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9202:C 07 Aug 2021 18:39:08.423 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9202, just started

9202:C 07 Aug 2021 18:39:08.423 # Configuration loaded

9210:C 07 Aug 2021 18:39:08.433 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9210:C 07 Aug 2021 18:39:08.433 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9210, just started

9210:C 07 Aug 2021 18:39:08.433 # Configuration loaded

9215:C 07 Aug 2021 18:39:08.438 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9215:C 07 Aug 2021 18:39:08.438 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9215, just started

9215:C 07 Aug 2021 18:39:08.438 # Configuration loaded

9220:C 07 Aug 2021 18:39:08.442 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

9220:C 07 Aug 2021 18:39:08.442 # Redis version=5.0.5, bits=64, commit=00000000, modified=0, pid=9220, just started

9220:C 07 Aug 2021 18:39:08.442 # Configuration loaded

Command to create the cluster

cd /usr/redis-cluster/redis-m1-7010/bin

➜ bin ./redis-cli --cluster create 172.16.94.13:7010 172.16.94.13:7020 172.16.94.13:7030 172.16.94.13:7011 172.16.94.13:7012 172.16.94.13:7021 172.16.94.13:7022 172.16.94.13:7031 172.16.94.13:7032 --cluster-replicas 2

>>> Performing hash slots allocation on 9 nodes...

Master[0] -> Slots 0 - 5460

Master[1] -> Slots 5461 - 10922

Master[2] -> Slots 10923 - 16383

Adding replica 172.16.94.13:7012 to 172.16.94.13:7010

Adding replica 172.16.94.13:7021 to 172.16.94.13:7010

Adding replica 172.16.94.13:7022 to 172.16.94.13:7020

Adding replica 172.16.94.13:7031 to 172.16.94.13:7020

Adding replica 172.16.94.13:7032 to 172.16.94.13:7030

Adding replica 172.16.94.13:7011 to 172.16.94.13:7030

>>> Trying to optimize slaves allocation for anti-affinity

[WARNING] Some slaves are in the same host as their master

M: ee286e7fb5fe573c46486318ce096ab23ae34b1f 172.16.94.13:7010

slots:[0-5460] (5461 slots) master

M: f2b1c83ac812ef667019d50d70c918beaa94eb8c 172.16.94.13:7020

slots:[5461-10922] (5462 slots) master

M: b9e24bb366273e16022b37262931f2c887c7b391 172.16.94.13:7030

slots:[10923-16383] (5461 slots) master

S: 49dad3f2b928850e306290bcf75f7346bc2ed6fd 172.16.94.13:7011

replicates f2b1c83ac812ef667019d50d70c918beaa94eb8c

S: e24cab86b8eb6312dd6f2f99c413261c814a4216 172.16.94.13:7012

replicates b9e24bb366273e16022b37262931f2c887c7b391

S: a963946d7e82443d334bcfaef82faa4ad0dcba20 172.16.94.13:7021

replicates f2b1c83ac812ef667019d50d70c918beaa94eb8c

S: 260ad554e37c39c39f4abeac5531f2d9189b1d3b 172.16.94.13:7022

replicates ee286e7fb5fe573c46486318ce096ab23ae34b1f

S: a05cf8a1ac31d5d25db812ad56d25e5c60893311 172.16.94.13:7031

replicates ee286e7fb5fe573c46486318ce096ab23ae34b1f

S: 5682f20105511056e3f6d90c0818ea2612597733 172.16.94.13:7032

replicates b9e24bb366273e16022b37262931f2c887c7b391

Can I set the above configuration? (type 'yes' to accept): yes

>>> Nodes configuration updated

>>> Assign a different config epoch to each node

>>> Sending CLUSTER MEET messages to join the cluster

Waiting for the cluster to join

...............

>>> Performing Cluster Check (using node 172.16.94.13:7010)

M: ee286e7fb5fe573c46486318ce096ab23ae34b1f 172.16.94.13:7010

slots:[0-5460] (5461 slots) master

2 additional replica(s)

S: e24cab86b8eb6312dd6f2f99c413261c814a4216 172.16.94.13:7012

slots: (0 slots) slave

replicates b9e24bb366273e16022b37262931f2c887c7b391

S: 260ad554e37c39c39f4abeac5531f2d9189b1d3b 172.16.94.13:7022

slots: (0 slots) slave

replicates ee286e7fb5fe573c46486318ce096ab23ae34b1f

M: b9e24bb366273e16022b37262931f2c887c7b391 172.16.94.13:7030

slots:[10923-16383] (5461 slots) master

2 additional replica(s)

S: a963946d7e82443d334bcfaef82faa4ad0dcba20 172.16.94.13:7021

slots: (0 slots) slave

replicates f2b1c83ac812ef667019d50d70c918beaa94eb8c

S: 49dad3f2b928850e306290bcf75f7346bc2ed6fd 172.16.94.13:7011

slots: (0 slots) slave

replicates f2b1c83ac812ef667019d50d70c918beaa94eb8c

S: a05cf8a1ac31d5d25db812ad56d25e5c60893311 172.16.94.13:7031

slots: (0 slots) slave

replicates ee286e7fb5fe573c46486318ce096ab23ae34b1f

M: f2b1c83ac812ef667019d50d70c918beaa94eb8c 172.16.94.13:7020

slots:[5461-10922] (5462 slots) master

2 additional replica(s)

S: 5682f20105511056e3f6d90c0818ea2612597733 172.16.94.13:7032

slots: (0 slots) slave

replicates b9e24bb366273e16022b37262931f2c887c7b391

[OK] All nodes agree about slots configuration.

>>> Check for open slots...

>>> Check slots coverage...

[OK] All 16384 slots covered.
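To double-check the replica layout without reading every line, the `replicates <master-id>` lines can be counted per master. The function name `replicas_per_master` is hypothetical. Feed it only the output of `redis-cli --cluster check`, because the create output above prints the proposed layout as well and would count each replica twice.

```shell
# Hypothetical helper: read cluster check output on stdin and print
# "<master-id> <replica-count>" for every master, sorted by ID.
replicas_per_master() {
  awk '$1 == "replicates" { count[$2]++ }
       END { for (id in count) print id, count[id] }' | sort
}
```

Usage: `./redis-cli --cluster check 172.16.94.13:7010 | replicas_per_master`. In the check section above, each of the three master IDs appears in exactly two `replicates` lines.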

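The output shows that the cluster of three masters and six replicas was created successfully, and that all 16384 slots are assigned evenly and contiguously, with full coverage: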

M1: ee286e7fb5fe573c46486318ce096ab23ae34b1f 172.16.94.13:7010

slots:[0-5460] (5461 slots) master

M2: f2b1c83ac812ef667019d50d70c918beaa94eb8c 172.16.94.13:7020

slots:[5461-10922] (5462 slots) master

M3: b9e24bb366273e16022b37262931f2c887c7b391 172.16.94.13:7030

slots:[10923-16383] (5461 slots) master
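This layout follows from simple arithmetic: 9 nodes with `--cluster-replicas 2` give 9 / (2 + 1) = 3 masters, and 16384 / 3 ≈ 5461.33 slots per master, so one range ends up with 5462 slots. The sketch below reproduces the ranges with integer rounding of each boundary. It is an illustration under that rounding assumption, not redis-cli's actual code, and `slot_plan` is a hypothetical name.

```shell
# Hypothetical sketch: derive the master count and contiguous slot ranges.
# $1: total number of nodes, $2: value of --cluster-replicas
slot_plan() {
  nodes=$1 replicas=$2 total=16384
  masters=$((nodes / (replicas + 1)))
  echo "masters=$masters replicas_per_master=$replicas"
  first=0 i=0
  while [ "$i" -lt "$masters" ]; do
    if [ "$i" -eq $((masters - 1)) ]; then
      last=$((total - 1))   # the last master takes the remaining slots
    else
      # last = round((i + 1) * total / masters - 1), in integer arithmetic
      last=$(( (2 * ((i + 1) * total - masters) + masters) / (2 * masters) ))
    fi
    echo "Master[$i] -> Slots $first - $last ($((last - first + 1)) slots)"
    first=$((last + 1)) i=$((i + 1))
  done
}
```

Running `slot_plan 9 2` prints `masters=3 replicas_per_master=2` followed by the same three ranges as the Master[0] to Master[2] lines in the create output.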
