Download the Kafka package

Official Kafka download link: https://dlcdn.apache.org/kafka/3.2.0/kafka_2.12-3.2.0.tgz

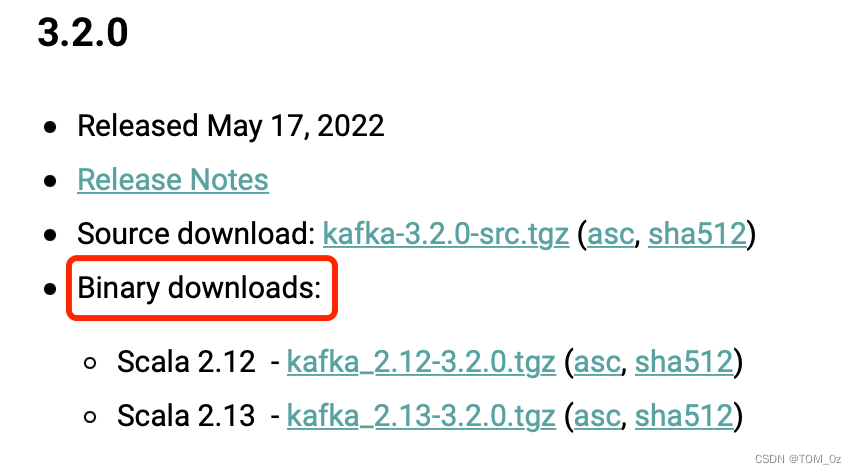

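Note: download the package listed under Binary downloads. Some readers download the Source download package by mistake; that package has to be built first and cannot be installed and run directly.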
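
One way to tell the two packages apart before installing is to list the archive contents. The sketch below assumes that the binary release ships prebuilt jars under a libs/ directory while the source release does not; the function name is_binary_package is only for illustration.

```shell
# Return 0 if the given .tgz looks like a Kafka binary release,
# i.e. it contains at least one prebuilt jar under a libs/ directory.
is_binary_package() {
    tar tzf "$1" 2>/dev/null | grep -Eq '(^|/)libs/[^/]+\.jar$'
}

# Usage (the file name is the one from the download link):
# is_binary_package kafka_2.12-3.2.0.tgz && echo "binary package" || echo "not a binary package"
```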

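Edit the configuration files

Upload the downloaded package to the virtual machine or server with Xshell or a similar tool. This guide uses /usr/local/, but any other directory works as well.

# Extract the package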

tar zxvf kafka_2.12-3.2.0.tgz -C /usr/local/

# Edit the configuration files

cd /usr/local/kafka_2.12-3.2.0/config

Edit the ZooKeeper configuration file:

vi /usr/local/kafka_2.12-3.2.0/config/zookeeper.properties

dataDir=/tmp/zookeeper

# the port at which the clients will connect

clientPort=2181

# disable the per-ip limit on the number of connections since this is a non-production config

maxClientCnxns=0

# Disable the adminserver by default to avoid port conflicts.

# Set the port to something non-conflicting if choosing to enable this

admin.enableServer=false
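
The values above are fine for a test setup, but many systems clean /tmp on reboot, so data kept in /tmp/zookeeper may not survive a restart. If that matters, dataDir can be pointed elsewhere. A minimal sketch of a helper for editing a .properties file in place; set_property is a hypothetical name, /var/lib/zookeeper is only an example path, and GNU sed is assumed.

```shell
# Set key=value in a .properties file: replace the existing line if the key
# is present, otherwise append a new line.
# Assumes GNU sed (-i) and a value that contains no '|' or '&'.
set_property() {
    file=$1
    key=$2
    value=$3
    if grep -q "^${key}=" "$file"; then
        sed -i "s|^${key}=.*|${key}=${value}|" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Usage (example path, create the directory first):
# set_property /usr/local/kafka_2.12-3.2.0/config/zookeeper.properties dataDir /var/lib/zookeeper
```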

Edit the Kafka configuration file:

vi /usr/local/kafka_2.12-3.2.0/config/server.properties

############################# Server Basics #############################

# The id of the broker. This must be set to a unique integer for each broker.

broker.id=0

####################### Socket Server Settings ##########################

# The number of threads that the server uses for receiving requests from the network and sending responses to the network

num.network.threads=3

# The number of threads that the server uses for processing requests, which may include disk I/O

num.io.threads=8

# The send buffer (SO_SNDBUF) used by the socket server

socket.send.buffer.bytes=102400

# The receive buffer (SO_RCVBUF) used by the socket server

socket.receive.buffer.bytes=102400

# The maximum size of a request that the socket server will accept (protection against OOM)

socket.request.max.bytes=104857600

############################# Log Basics #############################

# A comma separated list of directories under which to store log files

log.dirs=/tmp/kafka

# The default number of log partitions per topic. More partitions allow greater

# parallelism for consumption, but this will also result in more files across

# the brokers.

num.partitions=1

# The number of threads per data directory to be used for log recovery at startup and flushing at shutdown.

# This value is recommended to be increased for installations with data dirs located in RAID array.

num.recovery.threads.per.data.dir=1

################ Internal Topic Settings #############################

# For anything other than development testing, a value greater than 1 is recommended to ensure availability such as 3.

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1

################## Log Retention Policy #############################

# The minimum age of a log file to be eligible for deletion due to age

log.retention.hours=168

# The maximum size of a log segment file. When this size is reached a new log segment will be created.

log.segment.bytes=1073741824

# The interval at which log segments are checked to see if they can be deleted according

# to the retention policies

log.retention.check.interval.ms=300000

############################# Zookeeper #############################

# Zookeeper connection string (see zookeeper docs for details).

# This is a comma separated host:port pairs, each corresponding to a zk

# server. e.g. "127.0.0.1:3000,127.0.0.1:3001,127.0.0.1:3002".

# You can also append an optional chroot string to the urls to specify the

# root directory for all kafka znodes.

zookeeper.connect=localhost:2181

# Timeout in ms for connecting to zookeeper

zookeeper.connection.timeout.ms=18000

############## Group Coordinator Settings #############################

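# Delay in milliseconds before the group coordinator performs the initial consumer rebalance.

# It is set to 0 here for a better out-of-the-box experience in development and testing.

# However, in production environments the default value of 3 seconds is more suitable as this will help to avoid unnecessary, and potentially expensive, rebalances during application startup.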

group.initial.rebalance.delay.ms=0

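⚠️ Pay particular attention to the following three parameters. If they are missing from the file, the broker defaults apply (3, 3, and 2 respectively), which a single broker cannot satisfy: internal topics such as __consumer_offsets cannot be created, and Kafka will not work properly. On a single-node installation all three must be set to 1.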

offsets.topic.replication.factor=1

transaction.state.log.replication.factor=1

transaction.state.log.min.isr=1
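
Before starting the broker, these three settings can be checked with a small script. This is only a sketch: check_single_node_settings is a hypothetical helper name, and it looks only at uncommented key=value lines in the file it is given.

```shell
# Report any of the three single-node settings that is missing or not 1.
# Returns 0 only when all three are set to 1 in the given file.
check_single_node_settings() {
    file=$1
    status=0
    for key in offsets.topic.replication.factor \
               transaction.state.log.replication.factor \
               transaction.state.log.min.isr; do
        # Use the last uncommented assignment of the key, if there is one.
        value=$(grep "^${key}=" "$file" | tail -n 1 | cut -d= -f2)
        if [ "$value" != "1" ]; then
            echo "${key} is '${value:-unset}', expected 1"
            status=1
        fi
    done
    return $status
}

# Usage:
# check_single_node_settings /usr/local/kafka_2.12-3.2.0/config/server.properties
```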


Start the ZooKeeper service

/usr/local/kafka_2.12-3.2.0/bin/zookeeper-server-start.sh -daemon /usr/local/kafka_2.12-3.2.0/config/zookeeper.properties

Start the Kafka service

/usr/local/kafka_2.12-3.2.0/bin/kafka-server-start.sh -daemon /usr/local/kafka_2.12-3.2.0/config/server.properties
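
Because both services are started with -daemon, the commands return immediately and give no sign of whether the process is actually listening. A rough way to check is to wait for the ports used in this guide (2181 for ZooKeeper, 9092 for Kafka). The sketch below assumes bash is installed, since it relies on bash's /dev/tcp feature; wait_for_port is a hypothetical helper name.

```shell
# Wait until host:port accepts TCP connections, or give up after N seconds.
# The connection attempt runs in bash because /dev/tcp is a bash feature.
wait_for_port() {
    host=$1
    port=$2
    timeout=${3:-30}
    elapsed=0
    while [ "$elapsed" -lt "$timeout" ]; do
        if bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null; then
            return 0
        fi
        sleep 1
        elapsed=$((elapsed + 1))
    done
    return 1
}

# Usage:
# wait_for_port localhost 2181 30 && echo "ZooKeeper is listening"
# wait_for_port localhost 9092 60 && echo "Kafka is listening"
```

If a port never opens, the files under the logs/ directory of the Kafka installation are the first place to look.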

Start the producer client

/usr/local/kafka_2.12-3.2.0/bin/kafka-console-producer.sh --bootstrap-server localhost:9092 --topic test

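# Type any text in the producer window

Note: the topic does not need to be created beforehand. With the broker default auto.create.topics.enable=true, it is created automatically the first time a producer or consumer client uses it.

Start the consumer client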

/usr/local/kafka_2.12-3.2.0/bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test

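# If the text typed into the producer appears in the consumer window, producing and consuming work. The consumer shows only messages sent after it started unless --from-beginning is added.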
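
The same check can be done without two interactive windows. The sketch below sends one message and reads it back; smoke_test and the KAFKA_HOME variable are names made up for this example, and the broker address is the localhost:9092 used throughout this guide.

```shell
# Send one message to a topic and read it back non-interactively.
# KAFKA_HOME defaults to the install path used in this guide.
smoke_test() {
    kafka_home=${KAFKA_HOME:-/usr/local/kafka_2.12-3.2.0}
    topic=${1:-test}
    message="hello-$(date +%s)"

    echo "$message" | "$kafka_home/bin/kafka-console-producer.sh" \
        --bootstrap-server localhost:9092 --topic "$topic" || return 1

    # --from-beginning replays messages already stored in the topic;
    # --timeout-ms makes the consumer exit instead of waiting forever.
    "$kafka_home/bin/kafka-console-consumer.sh" \
        --bootstrap-server localhost:9092 --topic "$topic" \
        --from-beginning --timeout-ms 10000 2>/dev/null | grep -q "$message"
}

# Usage:
# smoke_test test && echo "produce/consume OK"
```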

Additional commands:

Create a topic

/usr/local/kafka_2.12-3.2.0/bin/kafka-topics.sh --bootstrap-server localhost:9092 --create --topic nn --replication-factor 1 --partitions 1

List all topics

/usr/local/kafka_2.12-3.2.0/bin/kafka-topics.sh --bootstrap-server localhost:9092 --list
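
Running --create a second time for an existing topic fails, which is inconvenient in scripts. A possible variant uses --if-not-exists and then --describe to print the partition and replica layout. ensure_topic and KAFKA_HOME are hypothetical names for this sketch.

```shell
# Create a topic only if it does not exist yet, then print its layout.
# KAFKA_HOME defaults to the install path used in this guide.
ensure_topic() {
    kafka_home=${KAFKA_HOME:-/usr/local/kafka_2.12-3.2.0}
    topic=$1
    "$kafka_home/bin/kafka-topics.sh" --bootstrap-server localhost:9092 \
        --create --if-not-exists --topic "$topic" \
        --replication-factor 1 --partitions 1 || return 1
    "$kafka_home/bin/kafka-topics.sh" --bootstrap-server localhost:9092 \
        --describe --topic "$topic"
}

# Usage:
# ensure_topic nn
```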

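List all consumer groups (--list already covers every group; --all-groups is meant for --describe, --delete, and --reset-offsets)

/usr/local/kafka_2.12-3.2.0/bin/kafka-consumer-groups.sh --bootstrap-server localhost:9092 --list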
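
For more than the group names, --describe shows per-partition details such as the current offset, log end offset, and lag. A short sketch, with describe_groups and KAFKA_HOME as made-up names: with no argument it describes every group, otherwise only the named one.

```shell
# Describe one consumer group, or all groups when no name is given.
# KAFKA_HOME defaults to the install path used in this guide.
describe_groups() {
    kafka_home=${KAFKA_HOME:-/usr/local/kafka_2.12-3.2.0}
    if [ -n "$1" ]; then
        "$kafka_home/bin/kafka-consumer-groups.sh" \
            --bootstrap-server localhost:9092 --describe --group "$1"
    else
        "$kafka_home/bin/kafka-consumer-groups.sh" \
            --bootstrap-server localhost:9092 --describe --all-groups
    fi
}

# Usage:
# describe_groups            # every group
# describe_groups my-group   # a single group (example name)
```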
