I strongly recommend reading the following two documents:

RHadoop2.0.2u2_Installation_Configuration_for_RedHat.pdf

Microsoft Word - RHadoop and MapR v2.0.pdf

RHadoop consists of the following three packages: rhdfs, rmr2, and rhbase.

Download link: https://github.com/RevolutionAnalytics/RHadoop/wiki/Downloads

Step 1: Introduction

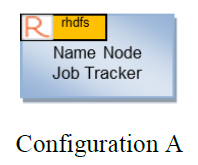

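Basic Hadoop configuration (topology diagram below)

The rmr2 package lets MapReduce jobs written in R run on a Hadoop cluster. To execute these jobs, Revolution R Enterprise and the rmr2 package must be installed on every Task node of the Hadoop cluster (topology diagram below).

The rhdfs package provides connectivity to HDFS. rhdfs is installed on the Name Node of the Hadoop cluster.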

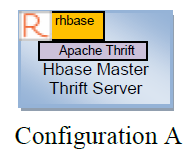

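The rhbase package provides connectivity to HBase. The package and Revolution R Enterprise must be installed on a node that can reach the HBase master server. The package uses the Thrift API to communicate with HBase, so the Apache Thrift server must also be installed on the same node.

Step 2: Installation

I. Install Revolution R Enterprise and rmr2 on all nodes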

1. Use the link(s) in your Revolution Analytics welcome letter to download the following installation files.

- Revo-Ent-6.2.0-RHEL5.tar.gz or Revo-Ent-6.2.0-RHEL6.tar.gz

- RHadoop-2.0.2u2.tar.gz

2. Unpack the contents of the Revolution R Enterprise installation bundle. At the prompt, type:

tar -xzf Revo-Ent-6.2.0-RHEL6.tar.gz

Note: If installing on Red Hat Enterprise Linux 5.x, replace RHEL6 with RHEL5 in the previous tar command.

3. Change directory to the versioned Revolution directory. At the prompt, type:

cd RevolutionR_6.2.0

4. Install Revolution R Enterprise. At the prompt, type:

./install.py --no-ask -d

5. Unpack the contents of the RHadoop installation bundle. At the prompt, type:

cd ..

tar -xzf RHadoop-2.0.2u2.tar.gz

6. Change directory to the versioned RHadoop directory. At the prompt, type:

cd RHadoop_2.0.2

7. Install rmr2 and its dependent R packages. At the prompt, type:

R CMD INSTALL digest_0.6.3.tar.gz plyr_1.8.tar.gz stringr_0.6.2.tar.gz RJSONIO_1.0-.tar.gz Rcpp_0.10.3.tar.gz functional_0.1.tar.gz quickcheck_1.0.tar.gz rmr2_2.0.2.tar.gz

8. Update the environment variables needed by rmr2. The values of these environment variables depend on your Hadoop distribution.

HADOOP_CMD – The complete path to the “hadoop” executable

HADOOP_STREAMING – The complete path to the Hadoop Streaming jar file

Examples of both of these environment variables are shown below:

export HADOOP_CMD=/usr/bin/hadoop

export HADOOP_STREAMING=/usr/lib/hadoop/contrib/streaming/hadoop-streaming-<version>.jar
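Before starting R, it can help to confirm that both variables actually point at real files. The following is a minimal sketch, not part of RHadoop: `check_rmr2_env` is a hypothetical helper name, and it only tests that the paths exist with suitable permissions, not that they belong to a working Hadoop installation.

```shell
#!/bin/sh
# Sketch: sanity-check the two variables rmr2 relies on.
# check_rmr2_env is a hypothetical helper, not an RHadoop command.
check_rmr2_env() {
    status=0
    # HADOOP_CMD should name a regular, executable file.
    if [ -z "${HADOOP_CMD:-}" ] || [ ! -f "$HADOOP_CMD" ] || [ ! -x "$HADOOP_CMD" ]; then
        echo "HADOOP_CMD is unset or not an executable file: ${HADOOP_CMD:-<unset>}" >&2
        status=1
    fi
    # HADOOP_STREAMING should name a regular, readable jar file.
    if [ -z "${HADOOP_STREAMING:-}" ] || [ ! -f "$HADOOP_STREAMING" ] || [ ! -r "$HADOOP_STREAMING" ]; then
        echo "HADOOP_STREAMING is unset or not a readable file: ${HADOOP_STREAMING:-<unset>}" >&2
        status=1
    fi
    return "$status"
}
```

After exporting the two variables, running `check_rmr2_env && echo "rmr2 environment looks plausible"` on a node gives a quick yes/no answer; any problem is reported on stderr.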

II. Install rhdfs

1. Install the rJava packages. At the prompt, type:

R CMD INSTALL rJava_0.9-4.tar.gz

2. Update the environment variable needed by rhdfs. The value for the environment variable will depend upon your hadoop distribution.

HADOOP_CMD – The complete path to the “hadoop” executable

An example of the environment variable is shown below:

export HADOOP_CMD=/usr/bin/hadoop

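Note: Important! This environment variable only needs to be set on the nodes that use the rhdfs package (i.e. the Edge node described earlier in this document). It is also recommended to add this environment variable to /etc/profile so that it is available to all users.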

3. Install rhdfs. At the prompt, type:

R CMD INSTALL rhdfs_1.0.5.tar.gz
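The recommendation to put HADOOP_CMD into /etc/profile can be applied without creating duplicate entries when the setup is re-run. Here is a small sketch under that assumption; `add_export_line` is a hypothetical helper, and it deliberately leaves an existing export of the same variable untouched rather than rewriting it.

```shell
#!/bin/sh
# Sketch: append "export NAME=VALUE" to a profile file only when the file
# does not already export NAME. add_export_line is a hypothetical helper.
add_export_line() {
    file=$1
    name=$2
    value=$3
    # -q: no output, -s: stay quiet if the file does not exist yet.
    if grep -qs "^export ${name}=" "$file"; then
        return 0
    fi
    printf 'export %s=%s\n' "$name" "$value" >> "$file"
}
```

Run as root, `add_export_line /etc/profile HADOOP_CMD /usr/bin/hadoop` would add the line once; later calls are no-ops. The new value only takes effect in shells started after the change.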

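III. Install rhbase

1. Install Apache Thrift

Important! rhbase requires the Apache Thrift server. If Thrift is not yet installed and configured, you need to build and install Apache Thrift. Reference: http://thrift.apache.org/

2. Install the build dependencies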

yum -y install automake libtool flex bison pkgconfig gcc-c++ boost-devel libevent-devel zlib-devel python-devel ruby-devel openssl-devel

3. Unpack the Thrift source archive

tar -xzf thrift-0.8.0.tar.gz

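4-5. Change into the Thrift source directory and build the Thrift library. Only the C++ interface of Thrift is needed, so build without Ruby or Python support. At the prompt, type the following commands: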

cd thrift-0.8.0

./configure --without-ruby --without-python

make

6. Install the thrift library. At the prompt, type:

make install

7. Create a symbolic link to the thrift library so it can be loaded by the rhbase package. Example of symbolic link:

ln -s /usr/local/lib/libthrift-0.8.0.so /usr/lib

8. Set up the PKG_CONFIG_PATH environment variable. At the prompt, type:

export PKG_CONFIG_PATH=$PKG_CONFIG_PATH:/usr/local/lib/pkgconfig

9. Install the rhbase package. At the prompt, type:

cd ..

R CMD INSTALL rhbase_1.1.tar.gz
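If the rhbase build complains that it cannot find Thrift, one thing worth checking is whether any directory in PKG_CONFIG_PATH really contains the Thrift pkg-config file. The sketch below assumes that file is named `thrift.pc` (the name a standard Thrift `make install` is expected to produce); `thrift_pc_visible` is a hypothetical helper. It looks only at PKG_CONFIG_PATH and ignores pkg-config's built-in default directories.

```shell
#!/bin/sh
# Sketch: print the first thrift.pc found in the directories listed in
# PKG_CONFIG_PATH; return non-zero when none of them contains it.
# thrift_pc_visible is a hypothetical helper.
thrift_pc_visible() {
    old_ifs=$IFS
    IFS=:
    # Unquoted on purpose: the variable is split on ':' into directories.
    for dir in ${PKG_CONFIG_PATH:-}; do
        if [ -n "$dir" ] && [ -f "$dir/thrift.pc" ]; then
            IFS=$old_ifs
            echo "$dir/thrift.pc"
            return 0
        fi
    done
    IFS=$old_ifs
    return 1
}
```

With the export from step 8 in place, `thrift_pc_visible || echo "thrift.pc not found via PKG_CONFIG_PATH"` shows whether the path setting matches where Thrift was installed.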

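Step 3: Test that the packages are configured and working

Run two sets of tests to verify that the configuration works. The first set checks that the installed packages can be loaded and initialized.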

1. Invoke R. At the prompt, type:

R

2. Load and initialize the rmr2 package, and execute some simple commands

At the R prompt, type the following commands: (Note: the “>” symbol in the following code is the ‘R’ prompt and should not be typed.)

> library(rmr2)

> from.dfs(to.dfs(1:100))

> from.dfs(mapreduce(to.dfs(1:100)))

If any errors occur, check the following:

a. Revolution R Enterprise is installed on each node in the cluster.

b. Check that rmr2, and its dependent packages are installed on each node in the cluster.

c. Make sure that a link to Rscript executable is in the PATH on each node in the Hadoop cluster.

d. The user that invoked ‘R’ has read and write permissions to HDFS

e. HADOOP_CMD environment variable is set, exported and its value is the complete path of the “hadoop” executable.

f. HADOOP_STREAMING environment variable is set, exported and its value is the complete path to the Hadoop Streaming jar file.

g. If the MapReduce job itself reports errors, check the ‘stderr’ log file for the job and resolve any errors reported. The easiest way to find the log files is to use the tracking URL (e.g. http://<my_ip_address>:50030/jobdetails.jsp?jobid=job_201208162037_0011)
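Checklist item c (Rscript must be on the PATH of every node) is easy to get wrong on just one machine. A minimal sketch for checking it follows; `missing_commands` is a hypothetical helper, and running it on each node (for example over ssh) is left to whatever tooling your cluster already uses.

```shell
#!/bin/sh
# Sketch: print the names of the given commands that are not on PATH.
# Returns 0 when every command was found, non-zero otherwise.
# missing_commands is a hypothetical helper.
missing_commands() {
    missing=""
    for cmd in "$@"; do
        if ! command -v "$cmd" >/dev/null 2>&1; then
            missing="$missing $cmd"
        fi
    done
    # Strip the leading space; prints an empty line when nothing is missing.
    echo "${missing# }"
    [ -z "$missing" ]
}
```

On a node, `missing_commands Rscript hadoop` prints whichever of the two commands cannot be found in the current PATH.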

3. Load and initialize the rhdfs package.

At the R prompt, type the following commands: (Note: the “>” symbol in the following code is the ‘R’ prompt and should not be typed.)

> library(rhdfs)

> hdfs.init()

> hdfs.ls("/")

If any errors occur, check the following:

a. rJava package is installed, configured and loaded.

b. HADOOP_CMD is set and its value is set to the complete path of the “hadoop” executable, and exported.

4. Load and initialize the rhbase package.

At the R prompt, type the following commands: (Note: the “>” symbol in the following code is the ‘R’ prompt and should not be typed.)

> library(rhbase)

> hb.init()

> hb.list.tables()

If any errors occur, check the following:

a. Thrift Server is running (refer to your Hadoop documentation for more details)

b. The default port for the Thrift Server is 9090. Be sure there is not a port conflict with other running processes

c. Check to be sure you are not running the Thrift Server in hsha or nonblocking mode. If necessary use the threadpool command line parameter to start the server

(e.g. /usr/bin/hbase thrift -threadpool start)
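To see whether anything is listening on the Thrift port at all, a quick TCP probe is often enough. The sketch below relies on bash's `/dev/tcp` redirection, so it needs bash rather than a plain POSIX sh; `port_open` is a hypothetical helper. A successful connection shows only that some process accepts connections on that port, not that it is the HBase Thrift server or that it runs in the right mode.

```shell
#!/usr/bin/env bash
# Sketch: succeed if a TCP connection to host:port can be opened.
# Uses bash's /dev/tcp pseudo-device; port_open is a hypothetical helper.
port_open() {
    local host=$1 port=$2
    # The subshell opens the connection on fd 3 and closes it on exit;
    # connection errors are silenced and turn into a non-zero status.
    (exec 3<>"/dev/tcp/${host}/${port}") 2>/dev/null
}
```

For the default setup described above, `port_open localhost 9090 && echo "something is listening on 9090"` can be run on the node where rhbase is installed.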

5. Using the standard R mechanism for checking packages, you can verify that your configuration is working properly.

Go to the directory where the R package source (rmr2, rhdfs, rhbase) exist. Type the following commands for each package.

Important!: Be aware that running the tests for the rmr2 package may take a significant time (hours) to complete

R CMD check rmr2_2.0.2.tar.gz

R CMD check rhdfs_1.0.5.tar.gz

R CMD check rhbase_1.1.tar.gz

If any errors occur, refer to the troubleshooting information in the previous sections.

Note: errors referring to the missing package pdflatex, such as the following, can be ignored:

Error in texi2dvi("Rd2.tex", pdf = (out_ext == "pdf"), quiet = FALSE, :

pdflatex is not available

Error in running tools::texi2dvi
