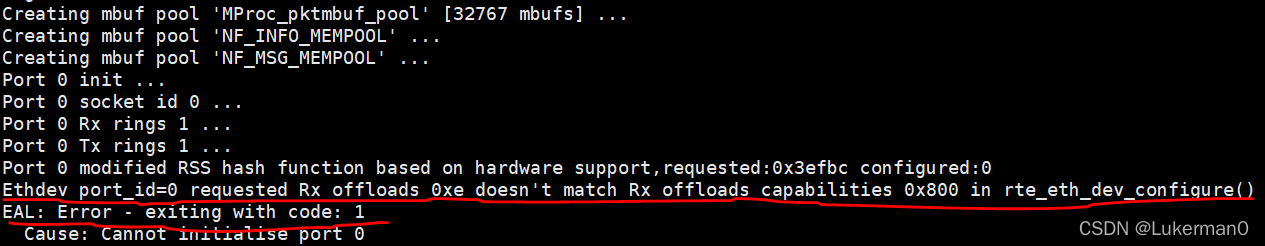

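Running the OpenNetVM NF Manager fails with the following error:

    Ethdev port_id=0 requested Rx offloads 0xe doesn't match Rx offloads capabilities 0x800 in rte_eth_dev_configure()

Judging from the message, DPDK ran into a problem while initializing and configuring the NIC. My NIC is a NetXtreme II BCM57810 10 Gigabit Ethernet (168e), and the DPDK version is 20.05 (the one bundled with OpenNetVM).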

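The requested value 0xe corresponds to the macro DEV_RX_OFFLOAD_CHECKSUM, which is defined in ${RTE_SDK}/x86_64-native-linuxapp-gcc/include/rte_ethdev.h as:

    #define DEV_RX_OFFLOAD_CHECKSUM (DEV_RX_OFFLOAD_IPV4_CKSUM | \
                                     DEV_RX_OFFLOAD_UDP_CKSUM | \
                                     DEV_RX_OFFLOAD_TCP_CKSUM)

where

    #define DEV_RX_OFFLOAD_IPV4_CKSUM  0x00000002
    #define DEV_RX_OFFLOAD_UDP_CKSUM   0x00000004
    #define DEV_RX_OFFLOAD_TCP_CKSUM   0x00000008

This macro enables the NIC's hardware checksum offload. Specifically, it hands the IP, TCP, and UDP checksum calculation for received packets over to the NIC, which saves CPU cycles and so improves throughput.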
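To make the arithmetic concrete, here is a minimal standalone sketch that recomputes the combined mask. The `SKETCH_` constants are local copies of the three values quoted above, not the real DPDK macros, and `rx_checksum_mask` is a hypothetical helper introduced only for illustration.

```c
#include <stdint.h>

/* Local stand-ins for the values quoted from rte_ethdev.h above. */
#define SKETCH_RX_OFFLOAD_IPV4_CKSUM UINT64_C(0x00000002)
#define SKETCH_RX_OFFLOAD_UDP_CKSUM  UINT64_C(0x00000004)
#define SKETCH_RX_OFFLOAD_TCP_CKSUM  UINT64_C(0x00000008)

/* Hypothetical helper: OR the three checksum bits together,
 * mirroring how DEV_RX_OFFLOAD_CHECKSUM is composed. */
static uint64_t rx_checksum_mask(void)
{
    return SKETCH_RX_OFFLOAD_IPV4_CKSUM |
           SKETCH_RX_OFFLOAD_UDP_CKSUM |
           SKETCH_RX_OFFLOAD_TCP_CKSUM;
}
```

Calling `rx_checksum_mask()` returns 0xe, which is exactly the "requested Rx offloads" value printed in the error message.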

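Next, locate the code in rte_eth_dev_configure that reports the error. It masks the Rx offloads requested in dev_conf with the NIC's offload capabilities from dev_info, and fails if the result differs from the requested value, that is, if any requested offload bit is missing from the capabilities: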

    /* Any requested offloading must be within its device capabilities */
    if ((dev_conf->rxmode.offloads & dev_info.rx_offload_capa) !=
         dev_conf->rxmode.offloads) {
        RTE_ETHDEV_LOG(ERR,
            "Ethdev port_id=%u requested Rx offloads 0x%"PRIx64" doesn't match Rx offloads "
            "capabilities 0x%"PRIx64" in %s()\n",
            port_id, dev_conf->rxmode.offloads,
            dev_info.rx_offload_capa,
            __func__);
        ret = -EINVAL;
        goto rollback;
    }

Now look at the actual configuration in onvm_init.c:

    static const struct rte_eth_conf port_conf = {
        .rxmode = {
            .mq_mode = ETH_MQ_RX_RSS,
            .max_rx_pkt_len = RTE_ETHER_MAX_LEN,
            .split_hdr_size = 0,
            .offloads = DEV_RX_OFFLOAD_CHECKSUM,
        },
        .rx_adv_conf = {
            .rss_conf = {
                .rss_key = rss_symmetric_key,
                .rss_hf = ETH_RSS_IP | ETH_RSS_UDP | ETH_RSS_TCP | ETH_RSS_L2_PAYLOAD,
            },
        },
        .txmode = {
            .mq_mode = ETH_MQ_TX_NONE,
            .offloads = (DEV_TX_OFFLOAD_IPV4_CKSUM | DEV_TX_OFFLOAD_UDP_CKSUM | DEV_TX_OFFLOAD_TCP_CKSUM),
        },
    };

The rxmode.offloads that onvm configures is the DEV_RX_OFFLOAD_CHECKSUM macro, whose value is 0xe.

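The BCM57810, on the other hand, reports a capability value of 0x800, which is the macro for jumbo frame support:

    #define DEV_RX_OFFLOAD_JUMBO_FRAME 0x00000800

The BCM57810 uses the bnx2x driver. The actual dev_info settings can be found in DPDK's bnx2x_ethdev.c: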

    static int
    bnx2x_dev_infos_get(struct rte_eth_dev *dev, struct rte_eth_dev_info *dev_info)
    {
        struct bnx2x_softc *sc = dev->data->dev_private;

        dev_info->max_rx_queues = sc->max_rx_queues;
        dev_info->max_tx_queues = sc->max_tx_queues;
        dev_info->min_rx_bufsize = BNX2X_MIN_RX_BUF_SIZE;
        dev_info->max_rx_pktlen = BNX2X_MAX_RX_PKT_LEN;
        dev_info->max_mac_addrs = BNX2X_MAX_MAC_ADDRS;
        dev_info->speed_capa = ETH_LINK_SPEED_10G | ETH_LINK_SPEED_20G;
        dev_info->rx_offload_capa = DEV_RX_OFFLOAD_JUMBO_FRAME;
        dev_info->rx_desc_lim.nb_max = MAX_RX_AVAIL;
        dev_info->rx_desc_lim.nb_min = MIN_RX_SIZE_NONTPA;
        dev_info->rx_desc_lim.nb_mtu_seg_max = 1;
        dev_info->tx_desc_lim.nb_max = MAX_TX_AVAIL;

        return 0;
    }

Indeed, the only Rx offload capability set is dev_info->rx_offload_capa = DEV_RX_OFFLOAD_JUMBO_FRAME;
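Putting the pieces together, the failing check can be reproduced outside DPDK. The following is a minimal sketch under stated assumptions: the two constants are the values quoted in this post, and `offloads_within_capa` and `unsupported_offloads` are hypothetical helpers, not DPDK functions. The first applies the same test as rte_eth_dev_configure; the second shows which requested bits the device lacks.

```c
#include <stdbool.h>
#include <stdint.h>

/* Values taken from this post: what onvm requests and what bnx2x advertises. */
#define SKETCH_REQUESTED_RX_OFFLOADS UINT64_C(0xe)   /* DEV_RX_OFFLOAD_CHECKSUM */
#define SKETCH_BNX2X_RX_CAPA         UINT64_C(0x800) /* DEV_RX_OFFLOAD_JUMBO_FRAME */

/* Same test as in rte_eth_dev_configure(): every requested bit
 * must also be set in the capability mask. */
static bool offloads_within_capa(uint64_t requested, uint64_t capa)
{
    return (requested & capa) == requested;
}

/* Requested bits that the device does not advertise. */
static uint64_t unsupported_offloads(uint64_t requested, uint64_t capa)
{
    return requested & ~capa;
}
```

With a request of 0xe against a capability of 0x800, `offloads_within_capa` returns false and `unsupported_offloads` returns 0xe, so all three checksum bits are unsupported. A request of 0x800 or 0 against the same capability passes the check.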

So it looks like the driver shipped with DPDK does not support the checksum offloads that onvm requests. The official documentation, "3. BNX2X Poll Mode Driver" (dpdk 0.11 documentation), confirms this:

https://dpdk-docs.readthedocs.io/en/latest/nics/bnx2x.html

3.1. Supported Features

BNX2X PMD has support for:

- Base L2 features

- Unicast/multicast filtering

- Promiscuous mode

- Port hardware statistics

- SR-IOV VF

3.2. Non-supported Features

The features not yet supported include:

- TSS (Transmit Side Scaling)

- RSS (Receive Side Scaling)

- LRO/TSO offload

- Checksum offload

- SR-IOV PF

- Rx TX scatter gather

Neither RSS nor checksum offload is supported.

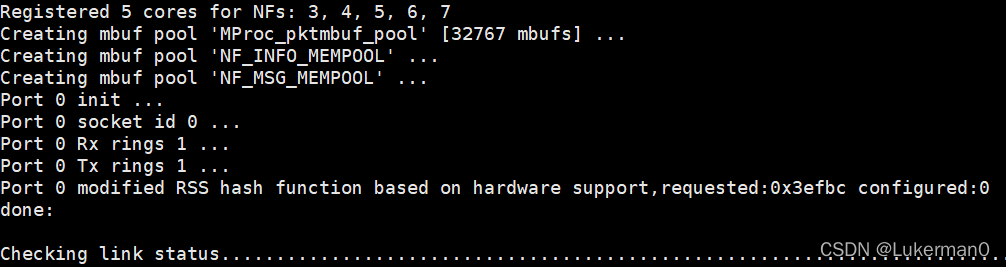

So I changed the NIC configuration in onvm_init.c to match what the BNX2X driver supports. The modified code is:

    static const struct rte_eth_conf port_conf = {
        .rxmode = {
            .mq_mode = ETH_MQ_RX_RSS,
            .max_rx_pkt_len = RTE_ETHER_MAX_LEN,
            .split_hdr_size = 0,
            //.offloads = DEV_RX_OFFLOAD_CHECKSUM,
            .offloads = 0x800, /* DEV_RX_OFFLOAD_JUMBO_FRAME, the only Rx offload bnx2x reports */
        },
        .rx_adv_conf = {
            .rss_conf = {
                .rss_key = rss_symmetric_key,
                .rss_hf = ETH_RSS_IP | ETH_RSS_UDP | ETH_RSS_TCP | ETH_RSS_L2_PAYLOAD,
            },
        },
        .txmode = {
            .mq_mode = ETH_MQ_TX_NONE,
            //.offloads = (DEV_TX_OFFLOAD_IPV4_CKSUM | DEV_TX_OFFLOAD_UDP_CKSUM | DEV_TX_OFFLOAD_TCP_CKSUM),
            .offloads = 0x0,
        },
    };

After rebuilding onvm, it runs this time. However, RSS and checksum offload are obviously no longer available, so the conclusion is: if circumstances allow, run onvm on a NIC whose driver supports these features, such as one using i40e.
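An alternative to hard-coding the mask is to clamp the requested offloads to whatever the driver advertises. The following is a standalone sketch of that masking logic only, not OpenNetVM's actual code. `clamp_offloads` is a hypothetical helper; in a real DPDK application the capability masks would presumably come from `rte_eth_dev_info_get()` (the `rx_offload_capa` and `tx_offload_capa` fields), and the result would have to be written into a non-const copy of `port_conf` before calling `rte_eth_dev_configure()`.

```c
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: keep only the requested offload bits that the
 * device advertises, and report the dropped bits through *dropped
 * (which may be NULL if the caller does not need them). */
static uint64_t clamp_offloads(uint64_t requested, uint64_t capa, uint64_t *dropped)
{
    if (dropped != NULL)
        *dropped = requested & ~capa;
    return requested & capa;
}
```

For the values in this post, clamping a request of 0xe against a capability of 0x800 yields 0 with 0xe reported as dropped, so the port would be configured with no Rx offloads at all rather than with 0x800 as in the manual edit. Logging the dropped bits makes it visible that checksum verification then has to be done in software.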

This post analyzed the error DPDK raises when configuring a specific NIC model under OpenNetVM and worked around it by adjusting the NIC offload settings.


https://www.cnblogs.com/lyt-666/p/13410548.html
