Spark Memory Management Mechanism

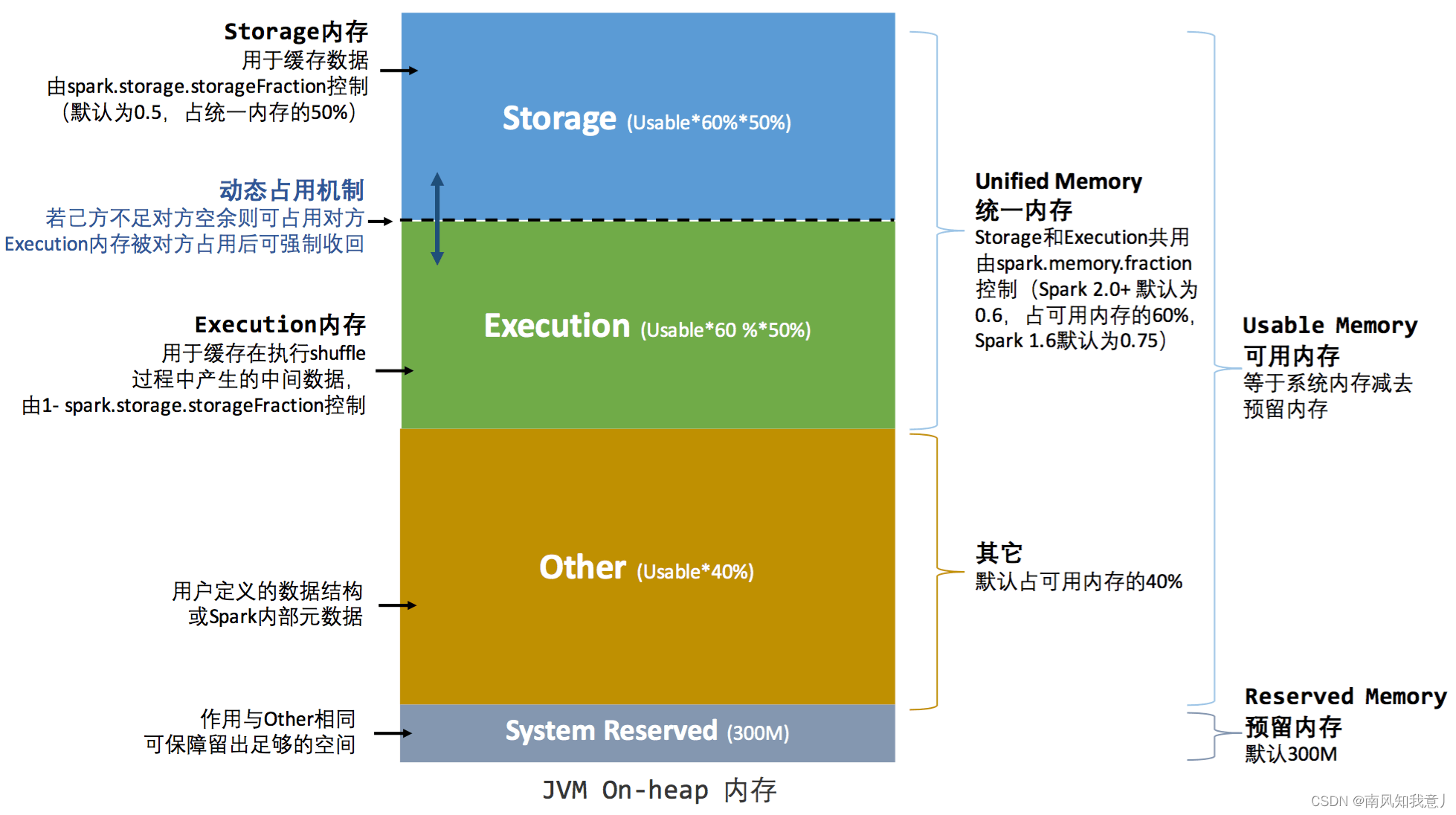

I. Memory Parameters

| Property Name | Default |
|---|---|
| spark.memory.fraction | 0.6 |
| spark.memory.storageFraction | 0.5 |
| RESERVED_SYSTEM_MEMORY_BYTES | 300 MB |
| Runtime.getRuntime.maxMemory | approximately Java heap memory * 0.89 |

1. Diagram:

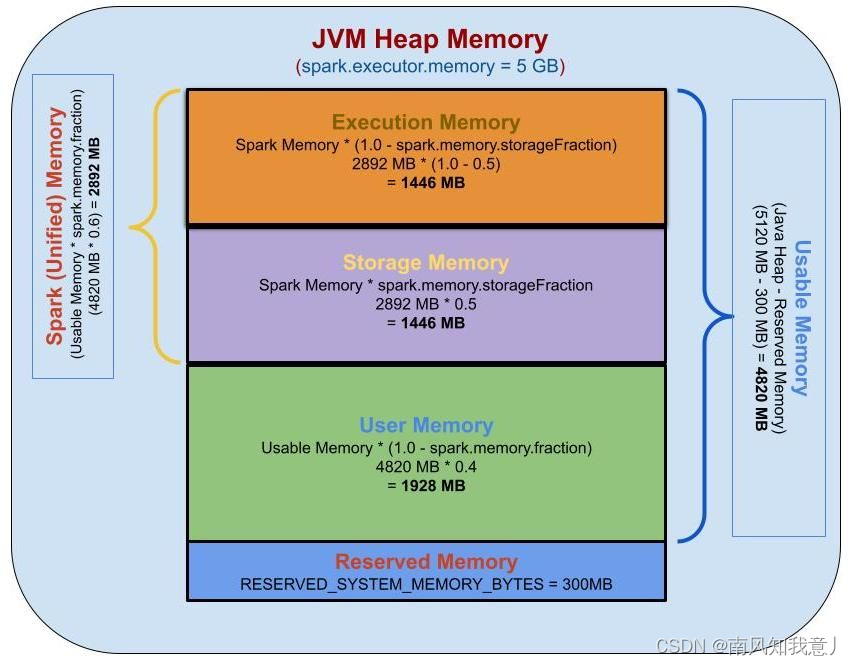

2. Example:

Calculate the Memory for 5GB executor memory:

To calculate Reserved memory, User memory, Spark memory, Storage memory, and Execution memory, we will use the following parameters:

spark.executor.memory=5g

spark.memory.fraction=0.6

spark.memory.storageFraction=0.5

Java Heap Memory = 5 GB

= 5 * 1024 MB

= 5120 MB

Reserved Memory = 300 MB

Usable Memory = Java Heap Memory - Reserved Memory

= 5120 MB - 300 MB

= 4820 MB

User Memory = Usable Memory * (1.0 - spark.memory.fraction)

= 4820 MB * (1.0 - 0.6)

= 4820 MB * 0.4

= 1928 MB

Spark Memory = Usable Memory * spark.memory.fraction

= 4820 MB * 0.6

= 2892 MB

Spark Storage Memory = Spark Memory * spark.memory.storageFraction

= 2892 MB * 0.5

= 1446 MB

Spark Execution Memory = Spark Memory * (1.0 - spark.memory.storageFraction)

= 2892 MB * ( 1 - 0.5)

= 2892 MB * 0.5

= 1446 MB

Reserved Memory: 300 MB (5.86%)

User Memory: 1928 MB (37.66%)

Spark Memory: 2892 MB (56.48%)
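The arithmetic above can be sketched in a few lines of Java. This is only an illustration of the formulas in this section: the class and method names are made up for the example, and it works in MB with doubles rather than in bytes as Spark does.

```java
public class MemoryRegionsExample {
    // Fixed reservation used in the worked example (300 MB)
    static final double RESERVED_MB = 300.0;

    /** Returns {usable, user, spark, storage, execution}, all in MB. */
    static double[] regions(double heapMb, double memoryFraction, double storageFraction) {
        double usable = heapMb - RESERVED_MB;      // heap minus reserved memory
        double spark = usable * memoryFraction;    // spark.memory.fraction share
        double user = usable - spark;              // the remaining (1 - fraction) share
        double storage = spark * storageFraction;  // spark.memory.storageFraction share
        double execution = spark - storage;        // the rest of Spark memory
        return new double[] {usable, user, spark, storage, execution};
    }

    public static void main(String[] args) {
        String[] names = {"Usable", "User", "Spark", "Storage", "Execution"};
        double[] r = regions(5 * 1024, 0.6, 0.5);  // spark.executor.memory=5g
        for (int i = 0; i < r.length; i++) {
            System.out.printf("%-10s = %.1f MB%n", names[i], r[i]);
        }
    }
}
```

Changing the two fractions in the call to `regions` shows how the split moves when spark.memory.fraction or spark.memory.storageFraction is tuned.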

II. How Spark Memory Allocation Appears in the Spark UI

0. Background

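1. Runtime.getRuntime.maxMemory (Max Memory)

Runtime.getRuntime.maxMemory for two --executor-memory settings:

--executor-memory 1024M Max Memory : 910.5 MB

--executor-memory 2048M Max Memory : 1820.5 MB

That is roughly 89% of the configured value; the formula behind it is given below.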

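We set --executor-memory, yet the memory the Spark executor obtains through Runtime.getRuntime.maxMemory is smaller than that. How is this figure calculated?

Runtime.getRuntime.maxMemory is the maximum amount of memory the program can use, and it is smaller than the configured executor memory. The heap portion of the memory pool is divided into Eden, Survivor, and Tenured spaces, and there are two Survivor spaces, only one of which can be used at any given time. This can be described with the following formulas: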

ExecutorMemory = Eden + 2 * Survivor + Tenured

Runtime.getRuntime.maxMemory = Eden + Survivor + Tenured

The actual values may differ depending on your GC configuration, but the formulas stay the same.
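Subtracting the second formula from the first leaves exactly one Survivor space, so the two Max Memory readings quoted earlier imply its size. A small sketch of that reasoning follows; the class and method names are illustrative only, and the last line simply prints whatever the JVM running the example reports.

```java
public class MaxMemoryRatio {
    /** Fraction of the configured heap that the JVM reports as max memory. */
    static double ratio(double reportedMaxMb, double configuredMb) {
        return reportedMaxMb / configuredMb;
    }

    /**
     * ExecutorMemory - maxMemory = (Eden + 2 * Survivor + Tenured) - (Eden + Survivor + Tenured),
     * which is the size of one Survivor space under the model above.
     */
    static double impliedSurvivorMb(double configuredMb, double reportedMaxMb) {
        return configuredMb - reportedMaxMb;
    }

    public static void main(String[] args) {
        System.out.printf("1024M: ratio %.4f, survivor %.1f MB%n",
                ratio(910.5, 1024), impliedSurvivorMb(1024, 910.5));
        System.out.printf("2048M: ratio %.4f, survivor %.1f MB%n",
                ratio(1820.5, 2048), impliedSurvivorMb(2048, 1820.5));
        // What the JVM running this example reports (depends on -Xmx and the GC in use)
        double thisJvmMb = Runtime.getRuntime().maxMemory() / (1024.0 * 1024.0);
        System.out.printf("this JVM: %.1f MB%n", thisJvmMb);
    }
}
```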

2. Bytes-to-MB conversion rules in different versions

2.1 Spark 2.x

    // 1 GB = 1000 MB
    function formatBytes(bytes, type) {
      if (type !== 'display') return bytes;
      if (bytes == 0) return '0.0 B';
      var k = 1000;
      var dm = 1;
      var sizes = ['B', 'KB', 'MB', 'GB', 'TB', 'PB', 'EB', 'ZB', 'YB'];
      var i = Math.floor(Math.log(bytes) / Math.log(k));
      return parseFloat((bytes / Math.pow(k, i)).toFixed(dm)) + ' ' + sizes[i];
    }

2.2 Spark 3.x

    // 1 GiB = 1024 MiB
    function formatBytes(bytes, type) {
      if (type !== 'display') return bytes;
      if (bytes <= 0) return '0.0 B';
      var k = 1024;
      var dm = 1;
      var sizes = ['B', 'KiB', 'MiB', 'GiB', 'TiB', 'PiB', 'EiB', 'ZiB', 'YiB'];
      var i = Math.floor(Math.log(bytes) / Math.log(k));
      return parseFloat((bytes / Math.pow(k, i)).toFixed(dm)) + ' ' + sizes[i];
    }
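To see how much the choice of base matters, here is a rough Java port of the two functions with the base and unit labels passed in as parameters. It is a sketch, not Spark code: the class name is hypothetical, it uses a division loop instead of logarithms, and unlike the JavaScript version it always keeps one decimal place.

```java
import java.util.Locale;

public class FormatBytesExample {
    static final String[] DECIMAL = {"B", "KB", "MB", "GB", "TB", "PB", "EB", "ZB", "YB"};
    static final String[] BINARY = {"B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"};

    /** Formats a byte count using base k (1000 or 1024) and the matching unit labels. */
    static String formatBytes(long bytes, int k, String[] sizes) {
        if (bytes <= 0) return "0.0 B";
        double value = bytes;
        int i = 0;
        // Divide by the base until the value fits the current unit
        while (value >= k && i < sizes.length - 1) {
            value /= k;
            i++;
        }
        return String.format(Locale.ROOT, "%.1f %s", value, sizes[i]);
    }

    public static void main(String[] args) {
        long bytes = 384_093_388L; // roughly 366.3 * 1024 * 1024 bytes
        System.out.println(formatBytes(bytes, 1000, DECIMAL)); // 384.1 MB  (Spark 2.x style)
        System.out.println(formatBytes(bytes, 1024, BINARY));  // 366.3 MiB (Spark 3.x style)
    }
}
```

The same byte count is displayed as about 5% larger under the base-1000 rule, which is worth keeping in mind when comparing UI numbers across Spark versions.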

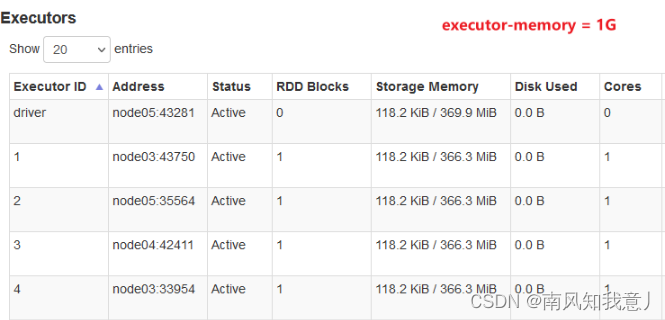

1. Spark UI with On Heap

- 1. Submit parameters

    spark-shell \
      --driver-memory 1g \
      --executor-memory 1g

- 2. Spark UI display

- 3. Storage Memory calculation

Java Heap Memory = 1 GB

Runtime.getRuntime.maxMemory = 1024MB * 0.89 = 911.36MB

Reserved Memory = 300 MB

Usable Memory = 911.36MB - 300MB = 611.36MB

User Memory = 611.36MB * 0.4 = 244.544MB

Spark Memory = 611.36MB * 0.6 = 366.816MB

Spark Storage Memory = 366.816MB * 0.5 = 183.408MB

Spark Execution Memory = 366.816MB * 0.5 = 183.408MB

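The Spark UI reports a Storage Memory value of 366.3 MB, from which we can conclude:

Storage Memory = Spark Storage Memory + Spark Execution Memory = Spark Memory

= 366.816MB

The small gap from 366.3 MB comes from rounding the ratio to 0.89. With the measured Max Memory of 910.5 MB, (910.5MB - 300MB) * 0.6 = 366.3MB, which matches the UI.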

2. Spark UI with OffHeap Enabled

- 1. Submit parameters

    spark-shell \
      --driver-memory 1g \
      --executor-memory 1g \
      --conf spark.memory.offHeap.enabled=true \
      --conf spark.memory.offHeap.size=5g

- 2. On Heap Memory

From the previous section, Storage Memory = 366.3MB

- 3.Off Heap Memory

spark.memory.offHeap.size = 5 GB = 5 * 1024 MB = 5120 MB

- 4. Storage Memory

Storage Memory = On Heap Memory + Off Heap Memory

= 366.3MB + 5120MB

= 5486.3MB
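The on-heap and off-heap figures can be combined in a short sketch. It assumes the measured Max Memory of 910.5 MB for a 1g executor and the default spark.memory.fraction of 0.6; the class and method names are hypothetical.

```java
public class UiStorageMemory {
    static final double RESERVED_MB = 300.0;

    /** On-heap Storage Memory as the UI reports it: the whole Spark memory region. */
    static double onHeapMb(double maxMemoryMb, double memoryFraction) {
        return (maxMemoryMb - RESERVED_MB) * memoryFraction;
    }

    /** Total Storage Memory once spark.memory.offHeap.size is added on top. */
    static double totalMb(double maxMemoryMb, double memoryFraction, double offHeapMb) {
        return onHeapMb(maxMemoryMb, memoryFraction) + offHeapMb;
    }

    public static void main(String[] args) {
        double maxMemoryMb = 910.5;    // measured for --executor-memory 1g
        double offHeapMb = 5 * 1024;   // spark.memory.offHeap.size=5g
        System.out.printf("On-heap = %.1f MB%n", onHeapMb(maxMemoryMb, 0.6));
        System.out.printf("Total   = %.1f MB%n", totalMb(maxMemoryMb, 0.6, offHeapMb));
    }
}
```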

3. Demo program for calculating Spark Storage Memory

    // JVM arguments: -Xmx5g
    public class SparkMemoryCalculation {

        private static final long MB = 1024 * 1024;
        // Fixed amount reserved for the system (300 MB)
        private static final long RESERVED_SYSTEM_MEMORY_BYTES = 300 * MB;
        // spark.memory.storageFraction
        private static final double SPARK_MEMORY_STORAGE_FRACTION = 0.5;
        // spark.memory.fraction
        private static final double SPARK_MEMORY_FRACTION = 0.6;

        public static void main(String[] args) {
            // Heap visible to the JVM; can be smaller than -Xmx depending on the GC
            long systemMemory = Runtime.getRuntime().maxMemory();
            long usableMemory = systemMemory - RESERVED_SYSTEM_MEMORY_BYTES;
            long sparkMemory = convertDoubleToLong(usableMemory * SPARK_MEMORY_FRACTION);
            long userMemory = convertDoubleToLong(usableMemory * (1 - SPARK_MEMORY_FRACTION));
            long storageMemory = convertDoubleToLong(sparkMemory * SPARK_MEMORY_STORAGE_FRACTION);
            long executionMemory = convertDoubleToLong(sparkMemory * (1 - SPARK_MEMORY_STORAGE_FRACTION));

            // Binary units: 1 MB = 1024 * 1024 bytes
            printMemoryInMB("Heap Memory\t\t", systemMemory);
            printMemoryInMB("Reserved Memory", RESERVED_SYSTEM_MEMORY_BYTES);
            printMemoryInMB("Usable Memory\t", usableMemory);
            printMemoryInMB("User Memory\t\t", userMemory);
            printMemoryInMB("Spark Memory\t", sparkMemory);
            printMemoryInMB("Storage Memory\t", storageMemory);
            printMemoryInMB("Execution Memory", executionMemory);
            System.out.println();

            // Decimal units (1 MB = 1000 * 1000 bytes), as the Spark 2.x UI displays them
            printStorageMemoryInMB("Spark Storage Memory", sparkMemory);
            printStorageMemoryInMB("Storage Memory UI \t", storageMemory);
            printStorageMemoryInMB("Execution Memory UI", executionMemory);
        }

        private static void printMemoryInMB(String type, long memory) {
            System.out.println(type + " \t=\t" + (memory / MB) + " MB");
        }

        private static void printStorageMemoryInMB(String type, long memory) {
            System.out.println(type + " \t=\t" + (memory / (1000 * 1000)) + " MB");
        }

        // Truncates toward zero; replaces the deprecated new Double(val).longValue()
        private static long convertDoubleToLong(double val) {
            return (long) val;
        }
    }

Summary

Reference:

https://community.cloudera.com/t5/Community-Articles/Spark-Memory-Management/ta-p/317794

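The original author's article reads like a work of art.

Finally, a quote for everyone:

"Knowledge, even the illusion of knowledge, will become your armor and protect you from being devoured by ignorance." (from Zhihu, "Why Read?")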
