错误信息

环境:hive-v3.1.2,ranger-v2.1.1

已对租户授权hive表权限、hdfs对应目录权限,但是用beeline连接hiveserver2执行insert overwrite directory命令报错:

jdbc:hive2://hive03.example.com:10000/default> insert overwrite directory '/tmp/testdb3/r1' ROW FORMAT DELIMITED FIELDS TERMINATED BY ',' stored as textfile select * from test;

Error: Error while compiling statement: FAILED: HiveAccessControlException Permission denied: user [zhangsan] does not have [WRITE] privilege on [/tmp/*] (state=42000,code=40000)

错误分析

这个错误信息来自ranger-hive-plugin,在校验权限时,如果输出路径不是hdfs://开头的,则跳过hdfs权限校验,进入hive自己的策略校验。

部分代码如下:

// 如果path的scheme是hdfs,则连接hdfs进行权限校验

if(hiveObjType == HiveObjectType.URI && isPathInFSScheme(path)) {

FsAction permission = getURIAccessType(hiveOpType);

if(!isURIAccessAllowed(user, permission, path, getHiveConf())) {

throw new HiveAccessControlException(String.format("Permission denied: user [%s] does not have [%s] privilege on [%s]", user, permission.name(), path));

}

continue;

}

// 否则将生成RangerHiveAccessRequest,进行hive策略的校验

RangerHiveAccessRequest request = new RangerHiveAccessRequest(resource, user, groups, roles, hiveOpType, accessType, context, sessionContext);

hivePlugin.isAccessAllowed(request, auditHandler);

解决方案

解决方法1:insert overwrite directory路径加上hdfs://前缀

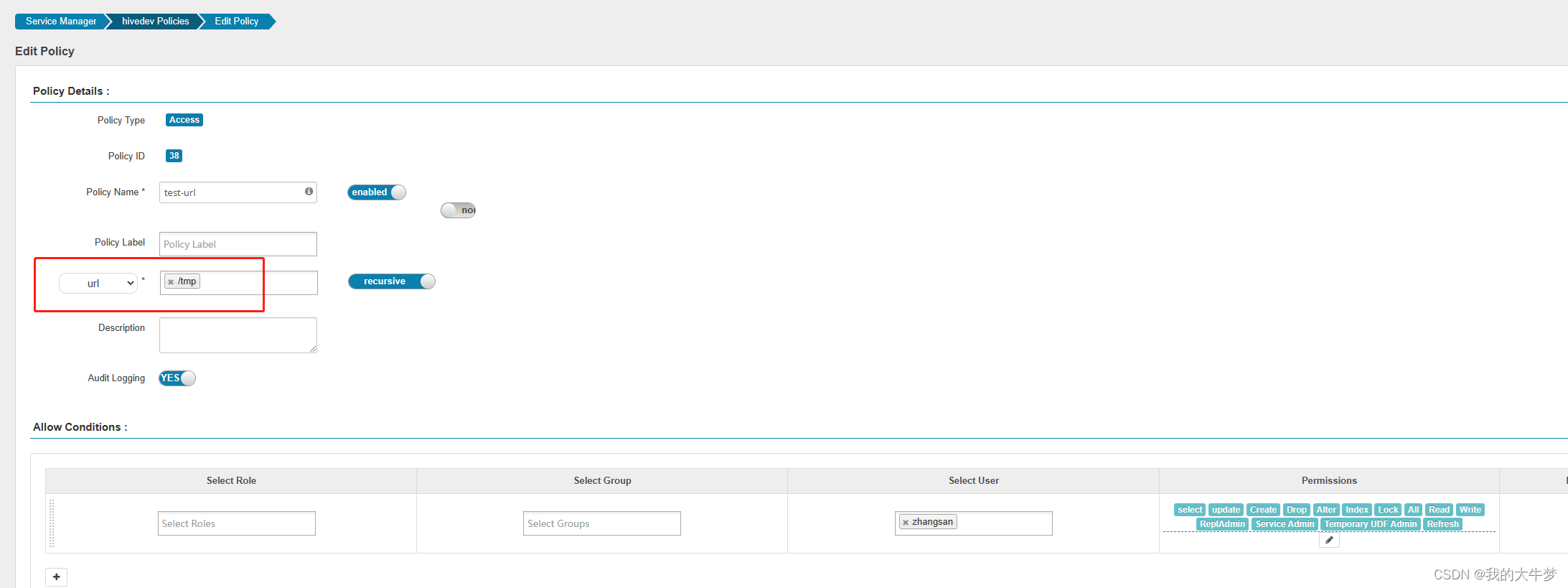

解决方法2:登录ranger,在hive服务下增加url策略,将自己的输出路径加上:

4466

4466

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?