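Sqoop Data Transfer Problems

Data transfer failure

Problem description

The goal was to use the Sqoop data transfer tool to export the data of the rawdata table in the Hive database hoteldata to MySQL.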

Command

```bash
sqoop export \
  --connect "jdbc:mysql://localhost:3306/hoteldata?useUnicode=true&characterEncoding=utf-8" \
  --username root \
  --password root \
  --table rawdata \
  --input-fields-terminated-by "," \
  --export-dir /t1
```
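If you run this export from a script, you can assemble the argument list in code. The sketch below is a minimal illustration. It assumes the `sqoop` client is on the PATH, and `build_export_cmd` is a hypothetical helper. It replaces `--password root` with `-P`, which is what the BaseSqoopTool WARN line in the log below recommends, so Sqoop prompts for the password instead of exposing it on the command line.

```python
import shlex


def build_export_cmd(connect_url, username, table, export_dir, delimiter=","):
    """Return the argv for a `sqoop export` run (hypothetical helper).

    -P makes Sqoop prompt for the password interactively instead of taking
    it from the command line, as the BaseSqoopTool warning suggests.
    """
    return [
        "sqoop", "export",
        "--connect", connect_url,
        "--username", username,
        "-P",
        "--table", table,
        "--input-fields-terminated-by", delimiter,
        "--export-dir", export_dir,
    ]


# Connector/J property names are case-sensitive: useUnicode, characterEncoding.
CONNECT_URL = ("jdbc:mysql://localhost:3306/hoteldata"
               "?useUnicode=true&characterEncoding=utf-8")

if __name__ == "__main__":
    cmd = build_export_cmd(CONNECT_URL, "root", "rawdata", "/t1")
    # Print a shell-quoted version; subprocess.run(cmd, check=True) would execute it.
    print(shlex.join(cmd))
```

Passing a list instead of a single string keeps the `?` and `&` in the JDBC URL away from the shell.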

执行日志

Warning: /opt/apps/sqoop/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

21/04/10 13:22:06 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7

21/04/10 13:22:06 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

21/04/10 13:22:06 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

21/04/10 13:22:06 INFO tool.CodeGenTool: Beginning code generation

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/apps/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/apps/hbase-1.2.1/lib/phoenix-4.14.1-HBase-1.2-client.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/apps/hbase-1.2.1/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

21/04/10 13:22:07 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `rawdata` AS t LIMIT 1

21/04/10 13:22:07 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `rawdata` AS t LIMIT 1

21/04/10 13:22:07 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /opt/apps/hadoop

注: /tmp/sqoop-root/compile/d3d530fe35a3713fda1a73ba2cbd8b4f/rawdata.java使用或覆盖了已过时的 API。

注: 有关详细信息, 请使用 -Xlint:deprecation 重新编译。

21/04/10 13:22:09 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/d3d530fe35a3713fda1a73ba2cbd8b4f/rawdata.jar

21/04/10 13:22:09 INFO mapreduce.ExportJobBase: Beginning export of rawdata

21/04/10 13:22:09 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar

21/04/10 13:22:10 INFO Configuration.deprecation: mapred.reduce.tasks.speculative.execution is deprecated. Instead, use mapreduce.reduce.speculative

21/04/10 13:22:10 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative

21/04/10 13:22:10 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

21/04/10 13:22:10 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032

21/04/10 13:22:15 INFO input.FileInputFormat: Total input paths to process : 1

21/04/10 13:22:15 INFO input.FileInputFormat: Total input paths to process : 1

21/04/10 13:22:15 INFO mapreduce.JobSubmitter: number of splits:4

21/04/10 13:22:15 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative

21/04/10 13:22:16 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1618026055219_0010

21/04/10 13:22:16 INFO impl.YarnClientImpl: Submitted application application_1618026055219_0010

21/04/10 13:22:16 INFO mapreduce.Job: The url to track the job: http://node4.co:8088/proxy/application_1618026055219_0010/

21/04/10 13:22:16 INFO mapreduce.Job: Running job: job_1618026055219_0010

21/04/10 13:22:23 INFO mapreduce.Job: Job job_1618026055219_0010 running in uber mode : false

21/04/10 13:22:23 INFO mapreduce.Job: map 0% reduce 0%

21/04/10 13:22:34 INFO mapreduce.Job: map 100% reduce 0%

21/04/10 13:22:36 INFO mapreduce.Job: Job job_1618026055219_0010 failed with state FAILED due to: Task failed task_1618026055219_0010_m_000003

Job failed as tasks failed. failedMaps:1 failedReduces:0

21/04/10 13:22:36 INFO mapreduce.Job: Counters: 8

Job Counters

Failed map tasks=4

Launched map tasks=4

Data-local map tasks=4

Total time spent by all maps in occupied slots (ms)=35459

Total time spent by all reduces in occupied slots (ms)=0

Total time spent by all map tasks (ms)=35459

Total vcore-milliseconds taken by all map tasks=35459

Total megabyte-milliseconds taken by all map tasks=36310016

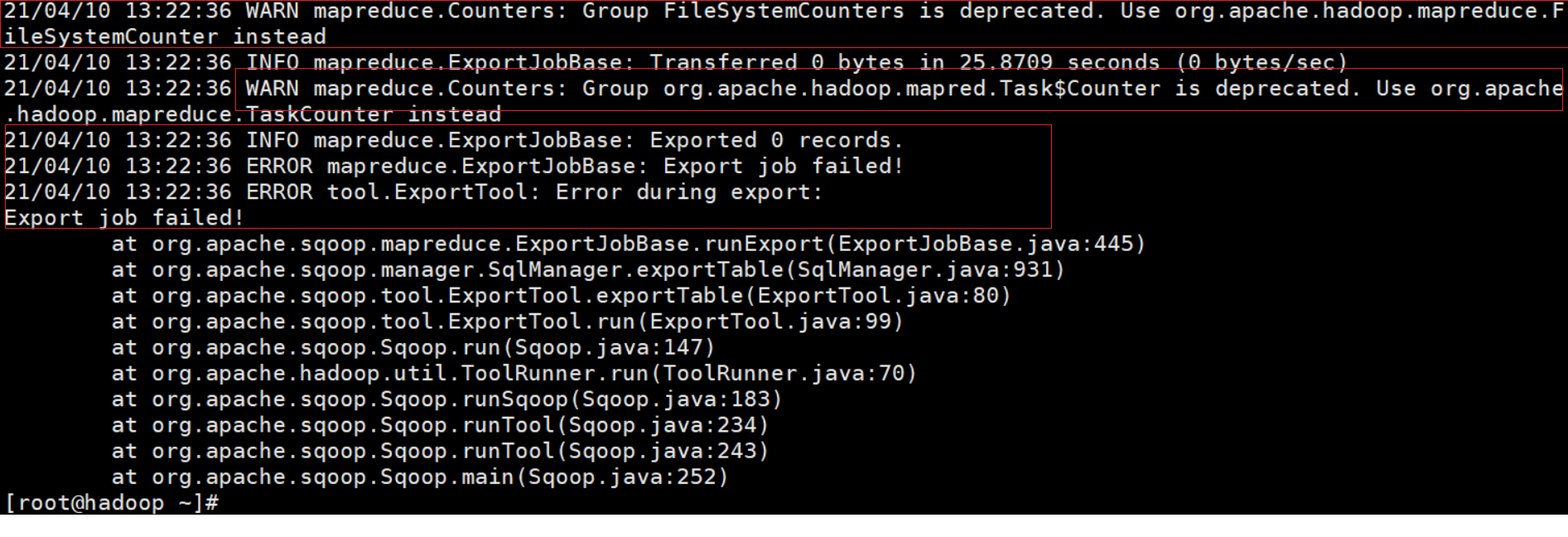

21/04/10 13:22:36 WARN mapreduce.Counters: Group FileSystemCounters is deprecated. Use org.apache.hadoop.mapreduce.FileSystemCounter instead

21/04/10 13:22:36 INFO mapreduce.ExportJobBase: Transferred 0 bytes in 25.8709 seconds (0 bytes/sec)

21/04/10 13:22:36 WARN mapreduce.Counters: Group org.apache.hadoop.mapred.Task$Counter is deprecated. Use org.apache.hadoop.mapreduce.TaskCounter instead

21/04/10 13:22:36 INFO mapreduce.ExportJobBase: Exported 0 records.

21/04/10 13:22:36 ERROR mapreduce.ExportJobBase: Export job failed!

21/04/10 13:22:36 ERROR tool.ExportTool: Error during export:

Export job failed!

at org.apache.sqoop.mapreduce.ExportJobBase.runExport(ExportJobBase.java:445)

at org.apache.sqoop.manager.SqlManager.exportTable(SqlManager.java:931)

at org.apache.sqoop.tool.ExportTool.exportTable(ExportTool.java:80)

at org.apache.sqoop.tool.ExportTool.run(ExportTool.java:99)

at org.apache.sqoop.Sqoop.run(Sqoop.java:147)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:183)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:234)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:243)

at org.apache.sqoop.Sqoop.main(Sqoop.java:252)

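Troubleshooting process

First, check the console log

Start with the ERROR-level entries. In this case they carry little useful information, so move on to the WARN-level entries. WARN entries sometimes provide valuable clues, such as the cause of an ERROR.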
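That ERROR-then-WARN pass is easy to script when the console output is long. The sketch below is a minimal version. It assumes the console line format seen in this log (`yy/MM/dd HH:mm:ss LEVEL ...`) and that the output was saved to a file, for example with `sqoop export ... > export.log 2>&1` (the file name is hypothetical). It does not parse the YARN syslog format shown later.

```python
import re

# Console lines start with "yy/MM/dd HH:mm:ss LEVEL", e.g.
# "21/04/10 13:22:06 WARN tool.BaseSqoopTool: Setting your password ..."
LEVEL_RE = re.compile(r"^\d{2}/\d{2}/\d{2} \d{2}:\d{2}:\d{2} (ERROR|WARN|INFO|DEBUG) ")


def triage(lines):
    """Return the ERROR lines first, then the WARN lines, each in original order.

    Lines without a level prefix (stack frames, SLF4J notices, counters) are skipped.
    """
    errors, warnings = [], []
    for line in lines:
        m = LEVEL_RE.match(line)
        if m is None:
            continue
        if m.group(1) == "ERROR":
            errors.append(line.rstrip("\n"))
        elif m.group(1) == "WARN":
            warnings.append(line.rstrip("\n"))
    return errors + warnings


# Usage (hypothetical file name):
#   with open("export.log", encoding="utf-8") as f:
#       for line in triage(f):
#           print(line)
```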

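Next, check the logs in YARN

In this case the console output contains few useful clues, so the next step is to look at the task logs in YARN.

Edit the yarn-site.xml configuration file

Change to the Hadoop installation directory and open the file with `vim etc/hadoop/yarn-site.xml`. Add the following properties to `yarn-site.xml`, replacing node4.co with the hostname of your Hadoop master node. (The two `mapreduce.jobhistory.*` properties are MapReduce settings listed in mapred-default.xml, so they are more commonly placed in `mapred-site.xml`.)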

```xml
<!-- Add these properties inside <configuration> ... </configuration> -->
<property>
    <name>mapreduce.jobhistory.address</name>
    <value>node4.co:10020</value>
</property>
<property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>node4.co:19888</value>
</property>
<!-- Enable YARN log aggregation -->
<property>
    <name>yarn.log-aggregation-enable</name>
    <value>true</value>
    <description>Enable log aggregation: after a job finishes, its log files are uploaded automatically to the file system (e.g. HDFS). Without it, viewing the logs from the node4.co:8088 page fails with "Aggregation is not enabled. Try the nodemanager at node4.co:54951".</description>
</property>
<property>
    <name>yarn.log-aggregation.retain-seconds</name>
    <value>604800</value>
    <description>How long aggregated log files are kept in the file system (e.g. HDFS). The default is -1, which keeps them forever. The value here is 7 days = 3600 * 24 * 7 = 604800.</description>
</property>
<!-- URL of the log server (the JobHistory server web UI) -->
<property>
    <name>yarn.log.server.url</name>
    <value>http://node4.co:19888/jobhistory/logs</value>
</property>
<!-- Address of the ResourceManager web UI -->
<property>
    <name>yarn.resourcemanager.webapp.address</name>
    <value>node4.co:8088</value>
</property>
```
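Before restarting, you can sanity-check the edited file. The sketch below uses only the standard-library `xml.etree.ElementTree`. It reads every `<property>` into a dict and reports whether log aggregation is on and how many days the logs are kept. The helper names are hypothetical. The default of `false` for `yarn.log-aggregation-enable` and the `-1` for "keep forever" follow the Hadoop defaults.

```python
import xml.etree.ElementTree as ET


def site_properties(root):
    """Map each <property>'s <name> to its <value> in a Hadoop *-site.xml tree."""
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        if name:
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def log_aggregation_status(props):
    """Return (enabled, retention_days); retention_days is None for -1 (keep forever)."""
    enabled = props.get("yarn.log-aggregation-enable", "false").lower() == "true"
    seconds = int(props.get("yarn.log-aggregation.retain-seconds", "-1"))
    return enabled, (None if seconds < 0 else seconds / 86400)


# Reading the real file (path relative to the Hadoop installation directory):
#   props = site_properties(ET.parse("etc/hadoop/yarn-site.xml").getroot())
#   print(log_aggregation_status(props))
```

This makes the retention arithmetic easy to check. A value of 302400 seconds comes out as 3.5 days, and a 7-day retention needs 604800.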

After editing the configuration file, restart Hadoop and start the JobHistory server:

```bash
# Stop HDFS and YARN
stop-all.sh
# Start HDFS and YARN
start-all.sh
# Start the JobHistory server
mr-jobhistory-daemon.sh start historyserver
```

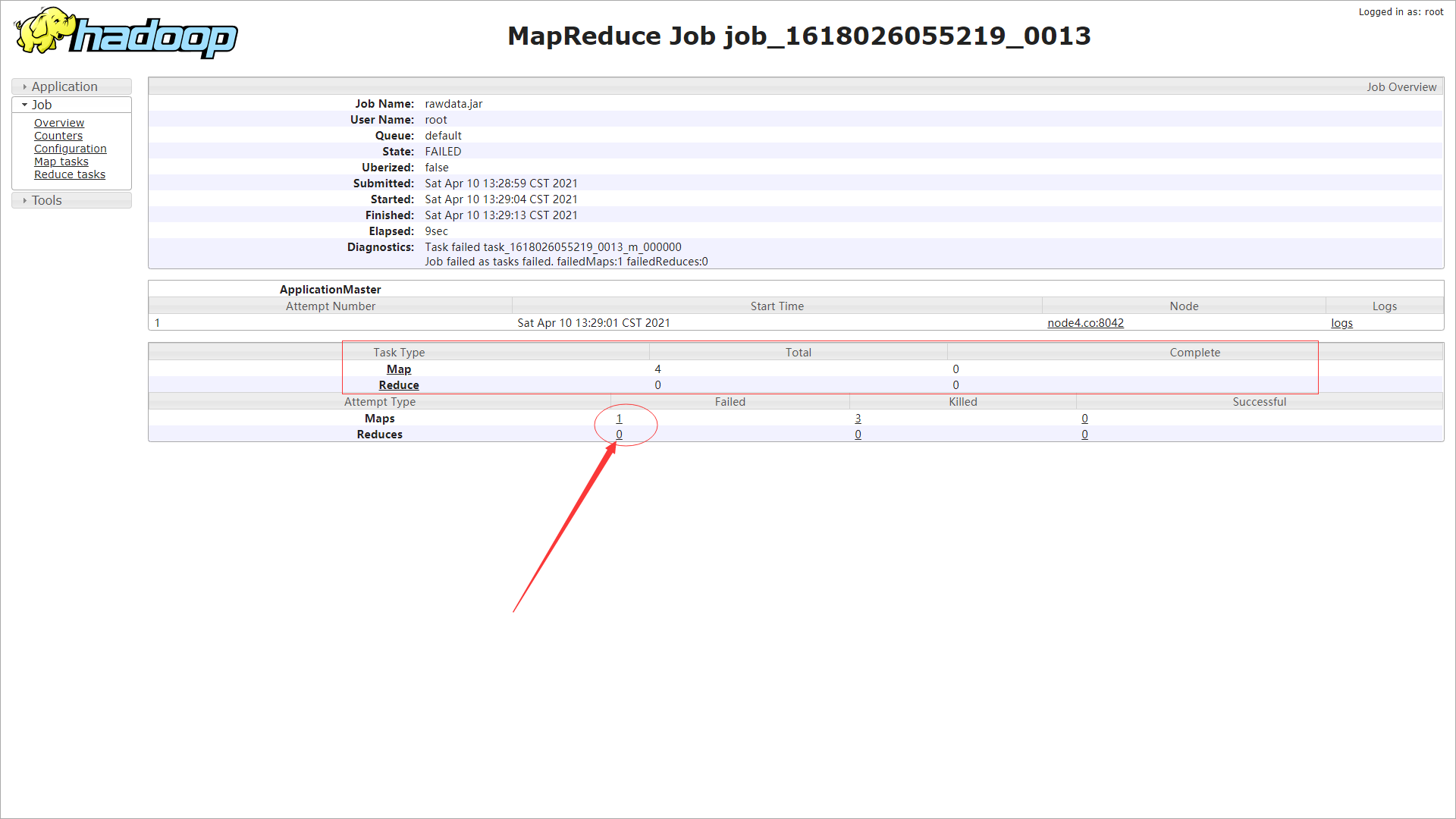

Open the YARN web console

Open the job tracking URL printed in the console to enter the YARN web UI, for example by browsing to http://node4.co:8088/proxy/application_1618026055219_0013/.

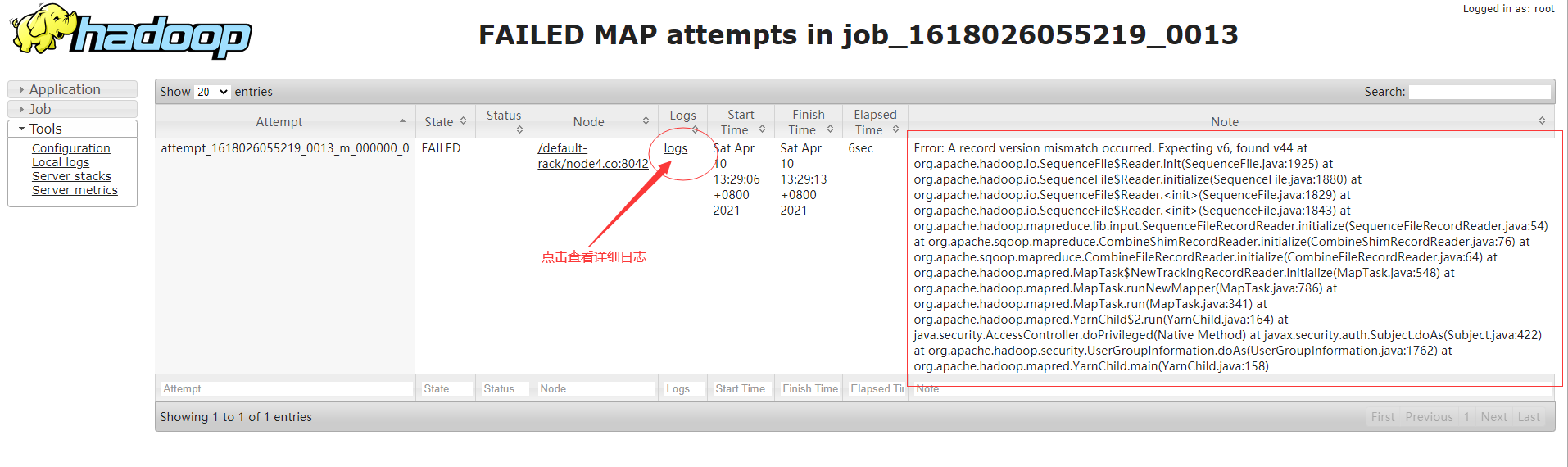

The web console shows that the job failed in the map phase and never reached the reduce phase, so the next step is to check the logs of the map tasks.
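Instead of copying the tracking URL by hand, you can pull the application ID out of the console output. The sketch below is minimal, and its helper names are hypothetical. The URL it builds has the same `/proxy/<application id>/` form that the job log prints. With log aggregation enabled as configured above, `yarn logs -applicationId <id>` is an alternative that prints the same container logs in a terminal.

```python
import re

# Matches e.g. "INFO impl.YarnClientImpl: Submitted application application_1618026055219_0010"
APP_ID_RE = re.compile(r"Submitted application (application_\d+_\d+)")


def application_ids(lines):
    """Return every YARN application ID submitted in the console output, in order."""
    ids = []
    for line in lines:
        m = APP_ID_RE.search(line)
        if m:
            ids.append(m.group(1))
    return ids


def tracking_url(rm_webapp_address, app_id):
    """Build the ResourceManager proxy URL for an application."""
    return f"http://{rm_webapp_address}/proxy/{app_id}/"


def yarn_logs_command(app_id):
    """argv for fetching the aggregated container logs from the command line."""
    return ["yarn", "logs", "-applicationId", app_id]
```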

View the execution logs

View the detailed logs

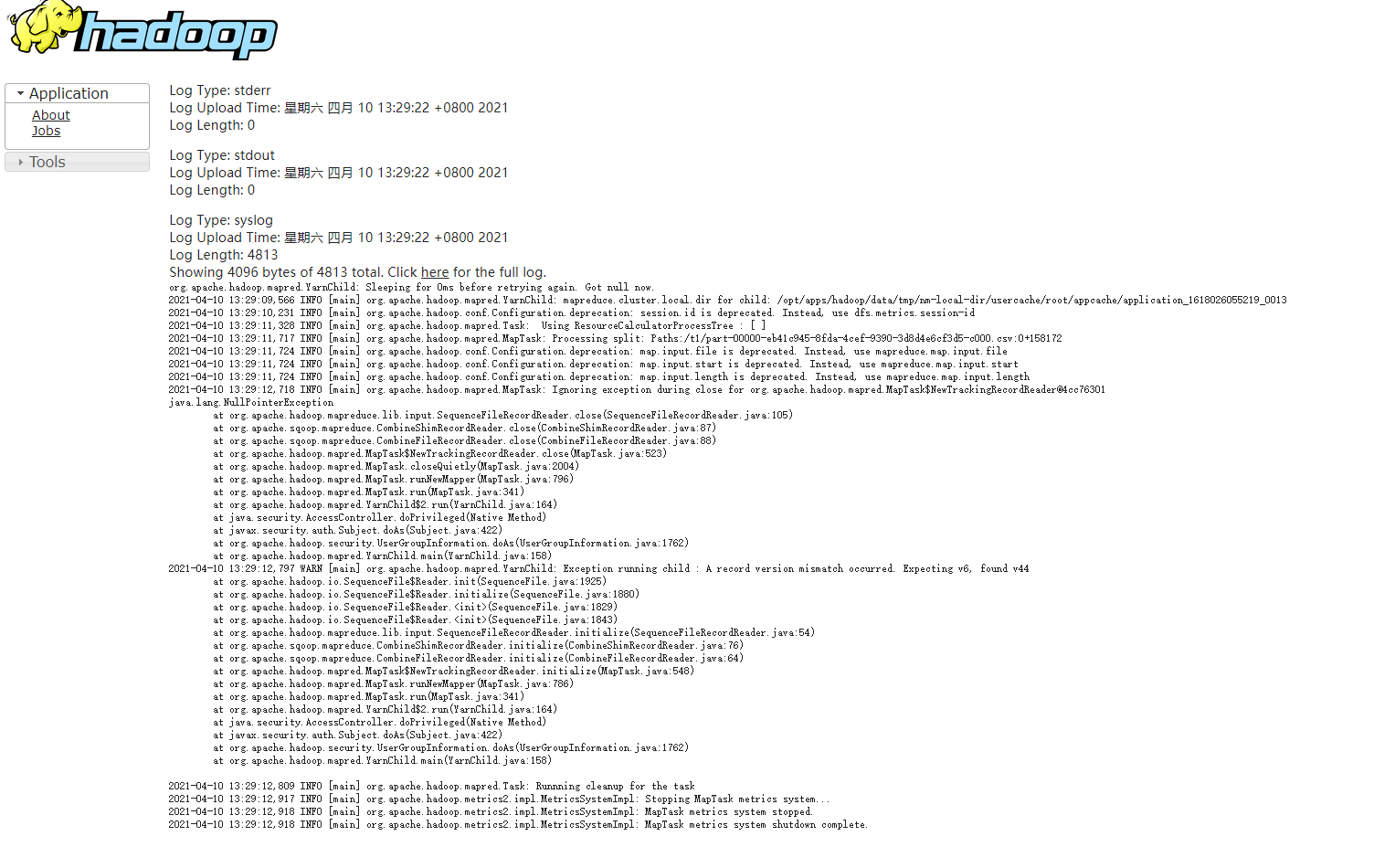

```
Log Type: stderr
Log Upload Time: Sat Apr 10 13:29:22 +0800 2021
Log Length: 0

Log Type: stdout
Log Upload Time: Sat Apr 10 13:29:22 +0800 2021
Log Length: 0

Log Type: syslog
Log Upload Time: Sat Apr 10 13:29:22 +0800 2021
Log Length: 4813
Showing 4096 bytes of 4813 total. Click here for the full log.

org.apache.hadoop.mapred.YarnChild: Sleeping for 0ms before retrying again. Got null now.
2021-04-10 13:29:09,566 INFO [main] org.apache.hadoop.mapred.YarnChild: mapreduce.cluster.local.dir for child: /opt/apps/hadoop/data/tmp/nm-local-dir/usercache/root/appcache/application_1618026055219_0013
2021-04-10 13:29:10,231 INFO [main] org.apache.hadoop.conf.Configuration.deprecation: session.id is deprecated. Instead, use dfs.metrics.session-id
2021-04-10 13:29:11,328 INFO [main] org.apache.hadoop.mapred.Task: Using ResourceCalculatorProcessTree : [ ]
2021-04-10 13:29:11,717 INFO [main] org.apache.hadoop.mapred.MapTask: Processing split: Paths:/t1/part-00000-eb41c945-8fda-4cef-9390-3d8d4e6cf3d5-c000.csv:0+158172
2021-04-10 13:29:11,724 INFO [main] org.apache.hadoop.conf.Configuration.deprecation: map.input.file is deprecated. Instead, use mapreduce.map.input.file
2021-04-10 13:29:11,724 INFO [main] org.apache.hadoop.conf.Configuration.deprecation: map.input.start is deprecated. Instead, use mapreduce.map.input.start
2021-04-10 13:29:11,724 INFO [main] org.apache.hadoop.conf.Configuration.deprecation: map.input.length is deprecated. Instead, use mapreduce.map.input.length
2021-04-10 13:29:12,718 INFO [main] org.apache.hadoop.mapred.MapTask: Ignoring exception during close for org.apache.hadoop.mapred.MapTask$NewTrackingRecordReader@4cc76301
java.lang.NullPointerException
    at org.apache.hadoop.mapreduce.lib.input.SequenceFileRecordReader.close(SequenceFileRecordReader.java:105)
    at org.apache.sqoop.mapreduce.CombineShimRecordReader.close(CombineShimRecordReader.java:87)
    at org.apache.sqoop.mapreduce.CombineFileRecordReader.close(CombineFileRecordReader.java:88)
    at org.apache.hadoop.mapred.MapTask$NewTrackingRecordReader.close(MapTask.java:523)
    at org.apache.hadoop.mapred.MapTask.closeQuietly(MapTask.java:2004)
    at org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:796)
    at org.apache.hadoop.mapred.MapTask.run(MapTask.java:341)
    at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:164)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1762)
    at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:158)
2021-04-10 13:29:12,797 WARN [main] org.apache.hadoop.mapred.YarnChild: Exception running child : A record version mismatch occurred. Expecting v6, found v44
    at org.apache.hadoop.io.SequenceFile$Reader.init(SequenceFile.java:1925)
    at org.apache.hadoop.io.SequenceFile$Reader.initialize(SequenceFile.java:1880)
    at org.apache.hadoop.io.SequenceFile$Reader.<init>(SequenceFile.java:1829)
    at org.apache.hadoop.io.SequenceFile$Reader.<init>(SequenceFile.java:1843)
    at org.apache.hadoop.mapreduce.lib.input.SequenceFileRecordReader.initialize(SequenceFileRecordReader.java:54)
    at org.apache.sqoop.mapreduce.CombineShimRecordReader.initialize(CombineShimRecordReader.java:76)
    at org.apache.sqoop.mapreduce.CombineFileRecordReader.initialize(CombineFileRecordReader.java:64)
    at org.apache.hadoop.mapred.MapTask$NewTrackingRecordReader.initialize(MapTask.java:548)
    at org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:786)
    at org.apache.hadoop.mapred.MapTask.run(MapTask.java:341)
    at org.apache.hadoop.mapred.YarnChild$2.run(YarnChild.java:164)
    at java.security.AccessController.doPrivileged(Native Method)
    at javax.security.auth.Subject.doAs(Subject.java:422)
    at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1762)
    at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:158)
2021-04-10 13:29:12,809 INFO [main] org.apache.hadoop.mapred.Task: Runnning cleanup for the task
2021-04-10 13:29:12,917 INFO [main] org.apache.hadoop.metrics2.impl.MetricsSystemImpl: Stopping MapTask metrics system...
2021-04-10 13:29:12,918 INFO [main] org.apache.hadoop.metrics2.impl.MetricsSystemImpl: MapTask metrics system stopped.
2021-04-10 13:29:12,918 INFO [main] org.apache.hadoop.metrics2.impl.MetricsSystemImpl: MapTask metrics system shutdown complete.
```
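In the syslog above, MapTask logs the NullPointerException as "Ignoring exception during close", which means it was thrown while closing a reader that had already failed. The exception that actually stops the task is the WARN line "A record version mismatch occurred. Expecting v6, found v44". It is raised from `SequenceFile$Reader`, so the plain CSV was being opened as a Hadoop SequenceFile.

A likely explanation follows. It is inferred from the stack trace and my reading of Sqoop 1.4.x, not confirmed against the data:

- Sqoop export guesses each input file's format from its first bytes, and it treats a file that begins with `SEQ` as a SequenceFile.
- The SequenceFile reader then takes the fourth byte as the format version. Byte 44 is the ASCII comma, so the CSV would begin with `SEQ,`. That could happen if, for example, the first field or a header is named SEQ; this is hypothetical, since the data is not shown.
- A UTF-8 BOM (EF BB BF) puts three other bytes in front, which would also explain why the BOM fix worked.

The sketch below is a simplified model of that check, not Sqoop's own code. The function names are hypothetical.

```python
SEQ_MAGIC = b"SEQ"        # every Hadoop SequenceFile starts with these three bytes
SEQ_MAX_VERSION = 6       # the version the reader expects ("Expecting v6")
UTF8_BOM = b"\xef\xbb\xbf"


def sniff_export_file(head):
    """Classify the first bytes of an export file (simplified model).

    Only the SequenceFile check is modelled: a file starting with b"SEQ" is
    treated as a SequenceFile and its 4th byte is read as the format version.
    Returns ("sequencefile", version_byte) or ("text", None).
    """
    if head[:3] == SEQ_MAGIC:
        version = head[3] if len(head) > 3 else None
        return "sequencefile", version
    return "text", None


def explain(head):
    """Human-readable verdict for the first bytes of a file."""
    kind, version = sniff_export_file(head)
    if kind == "text":
        return "read as delimited text"
    if version is not None and version > SEQ_MAX_VERSION:
        return (f"read as a SequenceFile, then fails: version byte {version} "
                f"({chr(version)!r}) > v{SEQ_MAX_VERSION}")
    return "read as a SequenceFile"


# Usage, after copying the file locally with
#   hdfs dfs -get /t1/part-00000-eb41c945-8fda-4cef-9390-3d8d4e6cf3d5-c000.csv .
#   with open("part-00000-eb41c945-8fda-4cef-9390-3d8d4e6cf3d5-c000.csv", "rb") as f:
#       print(explain(f.read(4)))
```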

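Finally, narrow it down by elimination

The YARN log shows that a java.lang.NullPointerException was thrown while the job was running. According to the Java API documentation, this exception is thrown when an application attempts to use null where an object is required, for example when it:

- calls an instance method of a null object;

- accesses or modifies a field of a null object;

- takes the length of null as if it were an array;

- accesses or modifies the slots of null as if it were an array;

- throws null as if it were a Throwable value.

The documentation adds that applications should throw instances of this class to indicate other illegal uses of null. It also notes that the JVM may construct NullPointerException objects as if suppression were disabled and/or the stack trace were not writable.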

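From experience, I suspected the job failed while processing the data: the records could not be read, so something in the data was probably wrong. I located and downloaded the file that Sqoop was exporting and opened it in VS Code. I found no special characters, but noticed that the file was encoded as plain UTF-8. That led to a bold guess: could the encoding be causing the exception? I re-saved the file as UTF-8 with BOM, uploaded it again and reran the Sqoop job, and to my surprise it succeeded.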
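Re-saving as "UTF-8 with BOM" only prepends the three bytes EF BB BF to the file, so you can script the same change instead of doing it in VS Code. The sketch below is minimal; `add_utf8_bom` and the local file names are hypothetical. Depending on the Hadoop version, the BOM may or may not be stripped when the lines are read. Check the first exported row in MySQL afterwards, since an invisible U+FEFF could end up at the start of its first field.

```python
from pathlib import Path

UTF8_BOM = b"\xef\xbb\xbf"


def add_utf8_bom(src, dst):
    """Write a copy of src to dst with a UTF-8 BOM in front.

    Returns True if a BOM was added, False if src already started with one
    (in which case the bytes are copied unchanged).
    """
    data = Path(src).read_bytes()
    if data.startswith(UTF8_BOM):
        Path(dst).write_bytes(data)
        return False
    Path(dst).write_bytes(UTF8_BOM + data)
    return True


# Hypothetical round trip (local names are placeholders):
#   hdfs dfs -get /t1/part-00000-eb41c945-8fda-4cef-9390-3d8d4e6cf3d5-c000.csv rawdata.csv
#   add_utf8_bom("rawdata.csv", "rawdata_bom.csv")
#   hdfs dfs -put -f rawdata_bom.csv /t1/part-00000-eb41c945-8fda-4cef-9390-3d8d4e6cf3d5-c000.csv
```

Overwriting the original path matters. If both files stay in `/t1`, Sqoop exports both.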

Bonus: the successful run

```
[root@hadoop ~]# sqoop export \
> --connect "jdbc:mysql://localhost:3306/hoteldata?useUnicode=true&characterEncoding=utf-8" \
> --username root \
> --password root \
> --table rawdata \
> --input-fields-terminated-by "," \
> --export-dir /t1
Warning: /opt/apps/sqoop/../accumulo does not exist! Accumulo imports will fail.
Please set $ACCUMULO_HOME to the root of your Accumulo installation.
21/04/10 13:26:29 INFO sqoop.Sqoop: Running Sqoop version: 1.4.7
21/04/10 13:26:29 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.
21/04/10 13:26:29 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.
21/04/10 13:26:29 INFO tool.CodeGenTool: Beginning code generation
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/opt/apps/hadoop/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/opt/apps/hbase-1.2.1/lib/phoenix-4.14.1-HBase-1.2-client.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/opt/apps/hbase-1.2.1/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
21/04/10 13:26:29 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `rawdata` AS t LIMIT 1
21/04/10 13:26:29 INFO manager.SqlManager: Executing SQL statement: SELECT t.* FROM `rawdata` AS t LIMIT 1
21/04/10 13:26:29 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /opt/apps/hadoop
Note: /tmp/sqoop-root/compile/a53e3d17beaade04ec3e390ab937efe1/rawdata.java uses or overrides a deprecated API.
Note: Recompile with -Xlint:deprecation for details.
21/04/10 13:26:31 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/a53e3d17beaade04ec3e390ab937efe1/rawdata.jar
21/04/10 13:26:31 INFO mapreduce.ExportJobBase: Beginning export of rawdata
21/04/10 13:26:31 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar
21/04/10 13:26:32 INFO Configuration.deprecation: mapred.reduce.tasks.speculative.execution is deprecated. Instead, use mapreduce.reduce.speculative
21/04/10 13:26:32 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative
21/04/10 13:26:32 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps
21/04/10 13:26:33 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032
21/04/10 13:26:37 INFO input.FileInputFormat: Total input paths to process : 1
21/04/10 13:26:37 INFO input.FileInputFormat: Total input paths to process : 1
21/04/10 13:26:37 INFO mapreduce.JobSubmitter: number of splits:4
21/04/10 13:26:37 INFO Configuration.deprecation: mapred.map.tasks.speculative.execution is deprecated. Instead, use mapreduce.map.speculative
21/04/10 13:26:37 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1618026055219_0012
21/04/10 13:26:38 INFO impl.YarnClientImpl: Submitted application application_1618026055219_0012
21/04/10 13:26:38 INFO mapreduce.Job: The url to track the job: http://node4.co:8088/proxy/application_1618026055219_0012/
21/04/10 13:26:38 INFO mapreduce.Job: Running job: job_1618026055219_0012
21/04/10 13:26:45 INFO mapreduce.Job: Job job_1618026055219_0012 running in uber mode : false
21/04/10 13:26:45 INFO mapreduce.Job: map 0% reduce 0%
21/04/10 13:26:55 INFO mapreduce.Job: map 25% reduce 0%
21/04/10 13:26:56 INFO mapreduce.Job: map 100% reduce 0%
21/04/10 13:26:57 INFO mapreduce.Job: Job job_1618026055219_0012 completed successfully
21/04/10 13:26:57 INFO mapreduce.Job: Counters: 30
    File System Counters
        FILE: Number of bytes read=0
        FILE: Number of bytes written=564092
        FILE: Number of read operations=0
        FILE: Number of large read operations=0
        FILE: Number of write operations=0
        HDFS: Number of bytes read=649581
        HDFS: Number of bytes written=0
        HDFS: Number of read operations=19
        HDFS: Number of large read operations=0
        HDFS: Number of write operations=0
    Job Counters
        Launched map tasks=4
        Data-local map tasks=4
        Total time spent by all maps in occupied slots (ms)=36199
        Total time spent by all reduces in occupied slots (ms)=0
        Total time spent by all map tasks (ms)=36199
        Total vcore-milliseconds taken by all map tasks=36199
        Total megabyte-milliseconds taken by all map tasks=37067776
    Map-Reduce Framework
        Map input records=3170
        Map output records=3170
        Input split bytes=491
        Spilled Records=0
        Failed Shuffles=0
        Merged Map outputs=0
        GC time elapsed (ms)=942
        CPU time spent (ms)=4400
        Physical memory (bytes) snapshot=663785472
        Virtual memory (bytes) snapshot=8512688128
        Total committed heap usage (bytes)=384303104
    File Input Format Counters
        Bytes Read=0
    File Output Format Counters
        Bytes Written=0
21/04/10 13:26:57 INFO mapreduce.ExportJobBase: Transferred 634.3564 KB in 24.7745 seconds (25.6052 KB/sec)
21/04/10 13:26:57 INFO mapreduce.ExportJobBase: Exported 3170 records.
```
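A quick way to confirm that nothing was dropped is to compare Sqoop's "Exported N records" line with the "Map input records" counter. In this run, both are 3170. The sketch below reads both from saved console output; the function names are hypothetical. As a further check, you could run `SELECT COUNT(*) FROM rawdata` in MySQL, assuming the table was empty before the export.

```python
import re

EXPORTED_RE = re.compile(r"Exported (\d+) records")
MAP_INPUT_RE = re.compile(r"Map input records=(\d+)")


def export_counts(log_text):
    """Return (exported_records, map_input_records); None where a line is missing."""
    exported = EXPORTED_RE.search(log_text)
    map_input = MAP_INPUT_RE.search(log_text)
    return (int(exported.group(1)) if exported else None,
            int(map_input.group(1)) if map_input else None)


def export_complete(log_text):
    """True only if Sqoop exported exactly as many records as the mappers read."""
    exported, map_input = export_counts(log_text)
    return exported is not None and exported == map_input
```

On the failed run earlier in this post, the same check gives `(0, None)`, because that job never reported a "Map input records" counter.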
