import scrapy
from scrapy.linkextractors import LinkExtractor
from scrapy.spiders import CrawlSpider, Rule
from scrapy_redis.spiders import RedisCrawlSpider

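class MovieSpider(RedisCrawlSpider):
    # Spider name
    name = "movie"
    # Domains the spider is allowed to crawl
    allowed_domains = ["movie.douban.com"]
    # The start URL is commented out; it is read from Redis instead
    # start_urls = ["https://movie.douban.com/top250"]
    # redis_key names the Redis key from which the spider pulls its start URLs
    redis_key = 'start_urls'

    # rules is a tuple of Rule objects.
    # Rule and LinkExtractor are both classes that are instantiated here.
    # The LinkExtractor arguments to remember are allow (a regex) and
    # restrict_xpaths (an XPath); both filter which links are extracted.
    # follow switches link following on or off for the matched pages;
    # True is what makes the spider walk through the pagination.
    rules = (
        # Detail pages: parse them, do not follow further links.
        # Raw string so that \d is not treated as a string escape.
        Rule(LinkExtractor(allow=r'^https://movie\.douban\.com/subject/\d+/$'),
             callback="parse_item", follow=False),
        # "Next page" link: follow it to reach the rest of the list
        Rule(LinkExtractor(restrict_xpaths='//span[@class="next"]/a'), follow=True),
    )

    # Callback that processes the response returned by the downloader
    def parse_item(self, response):
        title = response.xpath('//span[@property="v:itemreviewed"]/text()').get()
        score = response.xpath('//strong[@property="v:average"]/text()').get()
        url = response.url
        text_list = response.xpath('//span[@property="v:summary"]/text()').getall()
        text = ''.join(text_list)
        # Strip newlines, ASCII spaces and ideographic (full-width) spaces
        text = text.replace('\n', '').replace(' ', '').replace('\u3000', '')
        # Keys: 标题 = title, 评分 = score, 网址 = URL, 简介 = summary
        item = {'标题': title, '评分': score, '网址': url, '简介': text}
        return item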
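The detail-page URL pattern and the summary cleanup are the two parts of the spider that can be checked without running a crawl. The following is a minimal sketch, assuming the pattern is written as a raw string with escaped dots; `is_detail_url` and `clean_summary` are hypothetical helper names used only for illustration and are not part of the project.

```python
import re

# Same pattern as the first Rule, as a raw string with the dots escaped.
DETAIL_URL = re.compile(r'^https://movie\.douban\.com/subject/\d+/$')


def is_detail_url(url):
    """Return True if the URL looks like a movie detail page."""
    return DETAIL_URL.match(url) is not None


def clean_summary(text_list):
    """Join the text nodes, then drop newlines, spaces and ideographic spaces."""
    text = ''.join(text_list)
    return text.replace('\n', '').replace(' ', '').replace('\u3000', '')


if __name__ == "__main__":
    print(is_detail_url('https://movie.douban.com/subject/1292052/'))
    print(is_detail_url('https://movie.douban.com/top250?start=25'))
    print(clean_summary(['\n\u3000\u3000line one', '\n\u3000\u3000line two\n']))
```

Note that removing every ASCII space is harmless for Chinese summaries but would also glue together the words of any English text in the summary.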

# Define your item pipelines here

#

# Don't forget to add your pipeline to the ITEM_PIPELINES setting

# See: https://docs.scrapy.org/en/latest/topics/item-pipeline.html

# useful for handling different item types with a single interface

from itemadapter import ItemAdapter

import pymongo

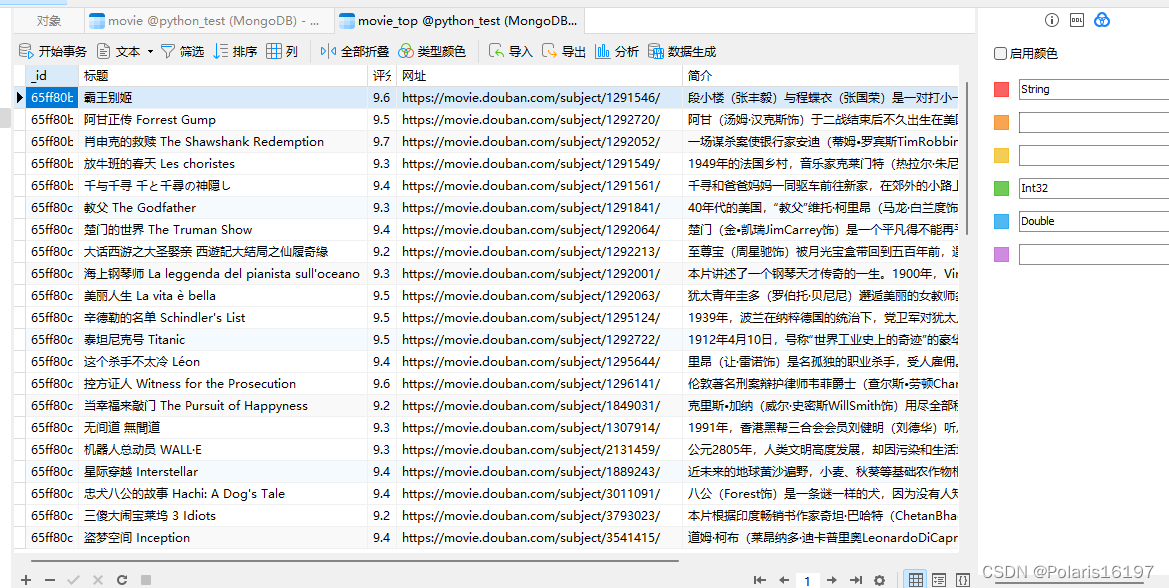

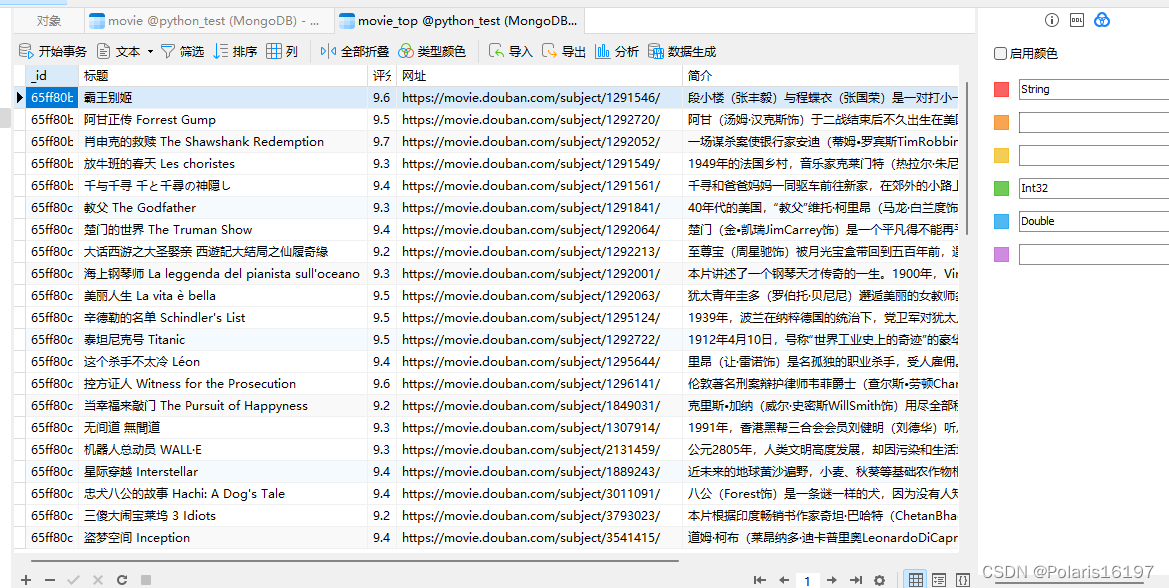

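class DoubanMoviePipeline:
    # Set up the MongoDB connection in __init__
    def __init__(self):
        # Connect to the python_test database on the default local MongoDB
        # server and select the movie_top collection
        self.client = pymongo.MongoClient()
        self.db = self.client['python_test']
        self.collection = self.db['movie_top']

    def process_item(self, item, spider):
        print(item)
        # Insert a copy, because insert_one adds an "_id" key to the dict it is given
        self.collection.insert_one(dict(item))
        return item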
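The pipeline above opens its MongoDB client in `__init__` and never closes it. One possible variation, sketched here under assumptions, ties the connection to the spider's lifetime through Scrapy's `open_spider` and `close_spider` pipeline hooks. The class name `MongoPipelineSketch` and the `client_factory` parameter are hypothetical; the factory exists only so the sketch can be exercised without a running MongoDB server.

```python
class MongoPipelineSketch:
    """Store each item in MongoDB, opening and closing the client with the spider."""

    def __init__(self, client_factory=None, db_name='python_test', coll_name='movie_top'):
        # client_factory is a hypothetical hook; by default pymongo.MongoClient is used.
        self.client_factory = client_factory
        self.db_name = db_name
        self.coll_name = coll_name

    def open_spider(self, spider):
        if self.client_factory is None:
            import pymongo  # imported lazily; only needed for a real connection
            self.client_factory = pymongo.MongoClient
        self.client = self.client_factory()
        self.collection = self.client[self.db_name][self.coll_name]

    def process_item(self, item, spider):
        # Insert a copy so that the "_id" added by insert_one stays out of the item.
        self.collection.insert_one(dict(item))
        return item

    def close_spider(self, spider):
        # Release the connection when the crawl ends.
        self.client.close()
```

To try it, the class would have to be registered in `ITEM_PIPELINES` in place of `DoubanMoviePipeline`.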

from scrapy import Request
from itemadapter import ItemAdapter
from scrapy.pipelines.images import ImagesPipeline


# Custom image pipeline
class DoubanImagePipeline(ImagesPipeline):
    # Override get_media_requests to issue the image download requests
    def get_media_requests(self, item, info):
        # Read the image URL field (image_urls by default) through ItemAdapter
        urls = ItemAdapter(item).get(self.images_urls_field, [])
        # Issue one download request per URL, carrying the item along in meta
        return [Request(u, meta={'image': item}) for u in urls]

    # Override file_path to choose where each image is saved and under what name
    def file_path(self, request, response=None, info=None, *, item=None):
        # image_guid = hashlib.sha1(to_bytes(request.url)).hexdigest()
        # Recover the item attached to the request in get_media_requests
        item = request.meta.get('image')
        # Take the file name from the item's image_name field
        image_name = item['image_name']
        # Folder and file name, relative to IMAGES_STORE
        return f"images/{image_name}.jpg"
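`file_path` builds the file name directly from the item's `image_name` field, so a name containing a slash or other reserved character would end up in an unexpected location or fail to save. A minimal sketch of one way to guard against that follows; `safe_image_path` is a hypothetical helper, and the set of replaced characters is an assumption chosen to cover common file system restrictions.

```python
import re


def safe_image_path(image_name, folder='images', ext='jpg'):
    """Build a relative path, replacing characters that are unsafe in file names."""
    # Collapse runs of path separators, reserved characters and whitespace into "_".
    cleaned = re.sub(r'[\\/:*?"<>|\s]+', '_', str(image_name)).strip('_')
    # Fall back to a fixed name if nothing usable is left.
    return f"{folder}/{cleaned or 'unnamed'}.{ext}"
```

Inside `file_path`, the last line could then become `return safe_image_path(item['image_name'])`.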

# Scrapy settings for douban_movie project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://docs.scrapy.org/en/latest/topics/settings.html

# https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

# https://docs.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = "douban_movie"

SPIDER_MODULES = ["douban_movie.spiders"]

NEWSPIDER_MODULE = "douban_movie.spiders"

# Crawl responsibly by identifying yourself (and your website) on the user-agent

USER_AGENT = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/114.0.0.0 Safari/537.36"

# Obey robots.txt rules

ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)

CONCURRENT_REQUESTS = 3

# Configure a delay for requests for the same website (default: 0)

# See https://docs.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

DOWNLOAD_DELAY = 1

# The download delay setting will honor only one of:

# CONCURRENT_REQUESTS_PER_DOMAIN = 16

# CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)

# COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)

# TELNETCONSOLE_ENABLED = False

# Override the default request headers:

# DEFAULT_REQUEST_HEADERS = {

# "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",

# "Accept-Language": "en",

# }

# Enable or disable spider middlewares

# See https://docs.scrapy.org/en/latest/topics/spider-middleware.html

# SPIDER_MIDDLEWARES = {

# "douban_movie.middlewares.DoubanMovieSpiderMiddleware": 543,

# }

# Enable or disable downloader middlewares

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html

# DOWNLOADER_MIDDLEWARES = {

# "douban_movie.middlewares.DoubanMovieDownloaderMiddleware": 543,

# }

# Enable or disable extensions

# See https://docs.scrapy.org/en/latest/topics/extensions.html

# EXTENSIONS = {

# "scrapy.extensions.telnet.TelnetConsole": None,

# }

# Configure item pipelines
# See https://docs.scrapy.org/en/latest/topics/item-pipeline.html
ITEM_PIPELINES = {
    # Pipeline that saves the plain text data
    "douban_movie.pipelines.DoubanMoviePipeline": 300,
    # Built-in pipeline that saves image data
    # "scrapy.pipelines.images.ImagesPipeline": 301,
    # Register the custom image pipeline
    # "douban_movie.pipelines.DoubanImagePipeline": 302
}

# Where images are saved; './' is the current directory of the project
# IMAGES_STORE = './'

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/autothrottle.html

# AUTOTHROTTLE_ENABLED = True

# The initial download delay

# AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

# AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

# AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

# AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)

# See https://docs.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

# HTTPCACHE_ENABLED = True

# HTTPCACHE_EXPIRATION_SECS = 0

# HTTPCACHE_DIR = "httpcache"

# HTTPCACHE_IGNORE_HTTP_CODES = []

# HTTPCACHE_STORAGE = "scrapy.extensions.httpcache.FilesystemCacheStorage"

# Set settings whose default value is deprecated to a future-proof value

REQUEST_FINGERPRINTER_IMPLEMENTATION = "2.7"

TWISTED_REACTOR = "twisted.internet.asyncioreactor.AsyncioSelectorReactor"

FEED_EXPORT_ENCODING = "utf-8"

# ===========================================
# Scrapy-Redis configuration
# Use Redis for the duplicate filter so that repeated requests are dropped
DUPEFILTER_CLASS = "scrapy_redis.dupefilter.RFPDupeFilter"
# Use Redis as the scheduler
SCHEDULER = "scrapy_redis.scheduler.Scheduler"
# Persist the scheduler queue so that an interrupted crawl can resume
SCHEDULER_PERSIST = True
# Log level
LOG_LEVEL = 'DEBUG'
# If Redis runs on a remote server, configure the connection here
# REDIS_URL = 'redis://user:pass@hostname:6379'
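With this configuration the spider starts idle and waits until a URL appears under its `redis_key` (`start_urls`). A minimal sketch of seeding that key from Python follows, assuming the start URLs are kept in a Redis list (the scrapy-redis default) and that `client` is any object with an `lpush` method, such as a redis-py `redis.Redis` instance. `seed_start_url` is a hypothetical helper name.

```python
def seed_start_url(client, url, key='start_urls'):
    """Push one start URL onto the Redis list that the spider reads from."""
    # lpush returns the length of the list after the push.
    return client.lpush(key, url)
```

With redis-py installed and Redis running locally on the default port, `seed_start_url(redis.Redis(), 'https://movie.douban.com/top250')` would queue the Top 250 page. The equivalent in `redis-cli` is `lpush start_urls https://movie.douban.com/top250`.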
