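1. Concepts

- Serialization converts a structured object into a byte stream.

- Deserialization is the reverse process: it converts a byte stream back into a structured object. An object has to be serialized into a byte stream whenever it is passed between processes or persisted; conversely, a byte stream received over the network or read from disk has to be deserialized to recover the object.

- Java's built-in serialization (Serializable) is a heavyweight framework. A serialized object carries a lot of extra information (validation data, headers, the class hierarchy, and so on), which makes it inefficient to send over the network. Hadoop therefore developed its own serialization mechanism, Writable, which is compact and efficient. Unlike Java serialization, it does not transmit the multi-level parent/child class relationships; only the field values that are needed are written, which greatly reduces network transfer overhead.

- Writable is Hadoop's serialization format. Hadoop defines a Writable interface, and a class only needs to implement this interface to become serializable.

- Writable also has a sub-interface, WritableComparable, which supports both serialization and comparison of keys. Here we define a custom key that implements WritableComparable to get the sort order we need.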
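The size difference described above can be checked without a Hadoop installation. The following is a minimal sketch that uses only `java.io`: the `NameCount` class and the helper names are hypothetical, and its `write`/`readFields` methods only imitate the shape of Hadoop's Writable interface rather than implementing it.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;

// Hypothetical record for this comparison only; it is not part of the project code.
class NameCount implements Serializable {
    private static final long serialVersionUID = 1L;
    String name;
    int count;

    NameCount() {
    }

    NameCount(String name, int count) {
        this.name = name;
        this.count = count;
    }

    // Writable-style serialization: only the field values are written.
    void write(DataOutput out) throws IOException {
        out.writeUTF(name);
        out.writeInt(count);
    }

    // Writable-style deserialization: fields are read back in the order they were written.
    void readFields(DataInput in) throws IOException {
        name = in.readUTF();
        count = in.readInt();
    }
}

class SerializationSizeDemo {
    // Standard Java serialization: stream header and class description plus the field values.
    static byte[] javaSerialize(NameCount r) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(r);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // Field-only serialization in the style of a Writable.
    static byte[] fieldSerialize(NameCount r) {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            r.write(out);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    // Rebuilds the object from the field-only byte stream.
    static NameCount fieldDeserialize(byte[] data) {
        NameCount r = new NameCount();
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            r.readFields(in);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return r;
    }

    public static void main(String[] args) {
        NameCount r = new NameCount("a", 1);
        byte[] compact = fieldSerialize(r);
        System.out.println("Java serialization: " + javaSerialize(r).length + " bytes");
        System.out.println("Field-only format:  " + compact.length + " bytes");
        NameCount back = fieldDeserialize(compact);
        System.out.println("Round trip: " + back.name + " " + back.count);
    }
}
```

For this record the field-only form is 7 bytes (a 2-byte length prefix, 1 byte for "a", and a 4-byte int), while the Java-serialized form is considerably larger because it also describes the class.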

2. Requirements analysis

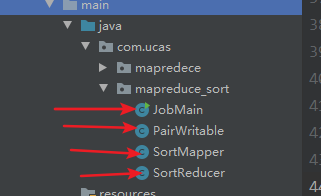

3. Code

3.1 Custom key type and comparator
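The ordering rule used by the composite key in this section is: compare the first field, and only if it is equal compare the second field. As a rough illustration that runs without Hadoop, the sketch below applies the same rule to a hypothetical `OrderedPair` class (a stand-in, not the project's key type) and sorts a few made-up records.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

// Hypothetical stand-in for the composite key, without Hadoop dependencies.
class OrderedPair implements Comparable<OrderedPair> {
    final String first;
    final int second;

    OrderedPair(String first, int second) {
        this.first = first;
        this.second = second;
    }

    @Override
    public int compareTo(OrderedPair o) {
        // Primary order: the first field; ties are broken by the second field.
        int comp = first.compareTo(o.first);
        return comp != 0 ? comp : Integer.compare(second, o.second);
    }

    @Override
    public String toString() {
        return first + "\t" + second;
    }
}

class PairOrderDemo {
    // Returns a sorted copy, leaving the input list untouched.
    static List<OrderedPair> sorted(List<OrderedPair> input) {
        List<OrderedPair> copy = new ArrayList<>(input);
        Collections.sort(copy);
        return copy;
    }

    public static void main(String[] args) {
        // Made-up sample records, for illustration only.
        List<OrderedPair> input = Arrays.asList(
                new OrderedPair("b", 2),
                new OrderedPair("a", 9),
                new OrderedPair("a", 1),
                new OrderedPair("b", 1));
        for (OrderedPair p : sorted(input)) {
            System.out.println(p);
        }
    }
}
```

With this sample the records come out as a 1, a 9, b 1, b 2: ascending by the first column, then ascending by the second.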

    package com.ucas.mapreduce_sort;

    import org.apache.hadoop.io.WritableComparable;

    import java.io.DataInput;
    import java.io.DataOutput;
    import java.io.IOException;

    /**
     * @author GONG
     * @version 1.0
     * @date 2020/10/9 16:55
     */
    public class PairWritable implements WritableComparable<PairWritable> {

        // Composite key: the first part is the first column, the second part is the second column
        private String first;
        private int second;

        public PairWritable() {
        }

        public PairWritable(String first, int second) {
            this.set(first, second);
        }

        /**
         * Convenience method for setting both fields.
         */
        public void set(String first, int second) {
            this.first = first;
            this.second = second;
        }

        /**
         * Deserialization: read the fields in the same order they were written.
         */
        @Override
        public void readFields(DataInput input) throws IOException {
            this.first = input.readUTF();
            this.second = input.readInt();
        }

        /**
         * Serialization.
         */
        @Override
        public void write(DataOutput output) throws IOException {
            output.writeUTF(first);
            output.writeInt(second);
        }

        /**
         * Sort rule: order by the first field, then by the second field.
         */
        @Override
        public int compareTo(PairWritable o) {
            // Each call compares the object the method is invoked on (this) with the
            // argument (o). The framework calls it repeatedly on pairs of keys while
            // sorting the map output; a negative, zero, or positive result means
            // this sorts before, equal to, or after o.
            int comp = this.first.compareTo(o.first);
            if (comp != 0) {
                return comp;
            }
            // If the first fields are equal, compare the second fields
            return Integer.compare(this.second, o.getSecond());
        }

        public int getSecond() {
            return second;
        }

        public void setSecond(int second) {
            this.second = second;
        }

        public String getFirst() {
            return first;
        }

        public void setFirst(String first) {
            this.first = first;
        }

        @Override
        public String toString() {
            return "PairWritable{" +
                    "first='" + first + '\'' +
                    ", second=" + second +
                    '}';
        }
    }

3.2 Mapper

    package com.ucas.mapreduce_sort;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    import java.io.IOException;

    /**
     * @author GONG
     * @version 1.0
     * @date 2020/10/9 16:53
     */
    public class SortMapper extends Mapper<LongWritable, Text, PairWritable, IntWritable> {

        // Reused across map() calls to avoid allocating a new object per record
        private PairWritable mapOutKey = new PairWritable();
        private IntWritable mapOutValue = new IntWritable();

        @Override
        public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            // Each input line holds two tab-separated columns
            String lineValue = value.toString();
            String[] strs = lineValue.split("\t");

            // Build the composite key and the value ==> <(key,value),value>
            int second = Integer.parseInt(strs[1]);
            mapOutKey.set(strs[0], second);
            mapOutValue.set(second);

            context.write(mapOutKey, mapOutValue);
        }
    }
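One point worth keeping in mind about the keys emitted by the mapper: Hadoop's default HashPartitioner chooses the reduce partition from `key.hashCode()`, and PairWritable as written does not override `hashCode()` or `equals()`. With a single reducer this makes no difference, but with several reducers a value-based hash is needed so that equal keys always land in the same partition. The sketch below shows the idea in plain Java; `HashedPair` and `partitionFor` are hypothetical names, and the formula is written to mirror what the default partitioner computes.

```java
import java.util.Objects;

// Hypothetical key with value-based equals/hashCode, without Hadoop dependencies.
class HashedPair {
    final String first;
    final int second;

    HashedPair(String first, int second) {
        this.first = first;
        this.second = second;
    }

    @Override
    public boolean equals(Object o) {
        if (this == o) {
            return true;
        }
        if (!(o instanceof HashedPair)) {
            return false;
        }
        HashedPair other = (HashedPair) o;
        return second == other.second && Objects.equals(first, other.first);
    }

    @Override
    public int hashCode() {
        // Depends only on the field values, so it is stable across JVM instances.
        return Objects.hash(first, second);
    }
}

class PartitionDemo {
    // Assumed to mirror the default hash partitioning: clear the sign bit, then take the modulus.
    static int partitionFor(Object key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        HashedPair a = new HashedPair("a", 1);
        HashedPair b = new HashedPair("a", 1);
        // Two equal keys always map to the same partition.
        System.out.println(partitionFor(a, 3) == partitionFor(b, 3));
    }
}
```

Note that hashing the whole key spreads records with the same first column over different reducers, so a globally sorted result still assumes a single reducer or a custom partitioner.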

3.3 Reducer

    package com.ucas.mapreduce_sort;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    import java.io.IOException;

    /**
     * @author GONG
     * @version 1.0
     * @date 2020/10/9 16:53
     */
    public class SortReducer extends Reducer<PairWritable, IntWritable, Text, IntWritable> {

        private Text outPutKey = new Text();

        @Override
        public void reduce(PairWritable key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            // The output key is the first column of the composite key
            outPutKey.set(key.getFirst());

            // Emit every value, so duplicate input lines are preserved
            for (IntWritable value : values) {
                context.write(outPutKey, value);
            }
        }
    }

3.4 Main entry point

    package com.ucas.mapreduce_sort;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.conf.Configured;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
    import org.apache.hadoop.util.Tool;
    import org.apache.hadoop.util.ToolRunner;

    /**
     * @author GONG
     * @version 1.0
     * @date 2020/10/9 16:55
     */
    public class JobMain extends Configured implements Tool {

        @Override
        public int run(String[] args) throws Exception {
            Configuration conf = super.getConf();
            // Run the job with the local job runner
            conf.set("mapreduce.framework.name", "local");

            Job job = Job.getInstance(conf, JobMain.class.getSimpleName());
            job.setJarByClass(JobMain.class);

            // Input and output locations on HDFS
            job.setInputFormatClass(TextInputFormat.class);
            TextInputFormat.addInputPath(job, new Path("hdfs://192.168.0.101:8020/input/sort"));
            TextOutputFormat.setOutputPath(job, new Path("hdfs://192.168.0.101:8020/out/sort_out"));

            // Map phase: emits <PairWritable, IntWritable>
            job.setMapperClass(SortMapper.class);
            job.setMapOutputKeyClass(PairWritable.class);
            job.setMapOutputValueClass(IntWritable.class);

            // Reduce phase: emits <Text, IntWritable>
            job.setReducerClass(SortReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            // Block until the job finishes; exit code 0 means success
            boolean b = job.waitForCompletion(true);
            return b ? 0 : 1;
        }

        public static void main(String[] args) throws Exception {
            Configuration entries = new Configuration();
            int run = ToolRunner.run(entries, new JobMain(), args);
            System.exit(run);
        }
    }
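To get a feel for what the job wired up above should produce, the map, sort, and reduce steps can be imitated in plain Java. This is only a rough sketch under stated assumptions: `SimKey` and `LocalSortSimulation` are hypothetical names, a `TreeMap` stands in for the framework's sort and grouping of map output, and the input lines are made up.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical stand-in for the composite key, ordered by first and then second.
class SimKey implements Comparable<SimKey> {
    final String first;
    final int second;

    SimKey(String first, int second) {
        this.first = first;
        this.second = second;
    }

    @Override
    public int compareTo(SimKey o) {
        int comp = first.compareTo(o.first);
        return comp != 0 ? comp : Integer.compare(second, o.second);
    }
}

class LocalSortSimulation {
    static List<String> run(List<String> lines) {
        // "Map" and "shuffle": parse each line and group the values under the sorted composite key.
        Map<SimKey, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            String[] parts = line.split("\t");
            int second = Integer.parseInt(parts[1]);
            grouped.computeIfAbsent(new SimKey(parts[0], second), k -> new ArrayList<>()).add(second);
        }

        // "Reduce": for each key, in sorted order, emit the first column with every grouped value.
        List<String> output = new ArrayList<>();
        for (Map.Entry<SimKey, List<Integer>> entry : grouped.entrySet()) {
            for (int value : entry.getValue()) {
                output.add(entry.getKey().first + "\t" + value);
            }
        }
        return output;
    }

    public static void main(String[] args) {
        // Made-up input lines; the duplicate "a\t9" shows why the reducer loops over its values.
        List<String> lines = Arrays.asList("a\t9", "b\t2", "a\t1", "a\t9");
        for (String row : run(lines)) {
            System.out.println(row);
        }
    }
}
```

Identical input lines share one composite key, so they arrive in a single reduce call with several values; iterating over the values is what keeps each duplicate in the output.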

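4. Running the job and checking the result

4.1 Preparation

Create sort.txt.

Create the input folder in HDFS and put sort.txt into it. The job reads from /input/sort, so the file has to end up under that path.

4.2 Building the jar

Run clean first, then double-click package to build the jar.

4.3 Running the jar and checking the result

Upload the jar to /export/software.

Run: hadoop jar day03_mapreduce_wordcount-1.0-SNAPSHOT.jar com.ucas.mapreduce_sort.JobMain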
