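This article demonstrates how to export data from Elasticsearch to a CSV file using Web API. The data is retrieved from Elasticsearch with a _search scan and scroll, which returns data very quickly because it does not sort the results. The data is then exported to a CSV file using WebApiContrib.Formatting.Xlsx from Jordan Gray. The progress of the export is displayed in an HTML page (an MVC Razor view) using SignalR.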

Setup

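The export uses the persons index created in the previous article. This index, persons_v2, is accessed through the alias persons. Because the index holds only a small amount of data, about 20,000 records, it can be exported as a single CSV file in one chunk.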

The Person class is used both to retrieve the data from Elasticsearch and to export it to the CSV file. Add or change properties as required.

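```csharp
using System;
using System.ComponentModel.DataAnnotations;
using System.ComponentModel.DataAnnotations.Schema;
// [ExcelColumn] comes from the WebApiContrib.Formatting.Xlsx package; import its namespace as well.

namespace WebApiCSVExportFromElasticsearch.Models
{
    public class Person
    {
        [Key]
        public int BusinessEntityID { get; set; }

        [Required]
        [StringLength(2)]
        public string PersonType { get; set; }

        public bool NameStyle { get; set; }

        [StringLength(8)]
        public string Title { get; set; }

        [Required]
        [StringLength(50)]
        public string FirstName { get; set; }

        [StringLength(50)]
        public string MiddleName { get; set; }

        [Required]
        [StringLength(50)]
        public string LastName { get; set; }

        [StringLength(10)]
        public string Suffix { get; set; }

        public int EmailPromotion { get; set; }

        [Column(TypeName = "xml")]
        public string AdditionalContactInfo { get; set; }

        [Column(TypeName = "xml")]
        public string Demographics { get; set; }

        public Guid rowguid { get; set; }

        // Export the date using the display format ("{0:D}" = long date pattern).
        [DisplayFormat(DataFormatString = "{0:D}")]
        [ExcelColumn(UseDisplayFormatString = true)]
        public DateTime ModifiedDate { get; set; }

        public bool Deleted { get; set; }
    }
}
```

Now that the data model is ready, the export can be implemented. It is implemented entirely in the PersonsCsvExportController class. The GetPersonsCsvExport method retrieves the data, exports it as a CSV file, and uses SignalR to add diagnostic messages to the HTML page.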

```csharp
using System.Collections.Generic;
using System.Diagnostics;
using System.Net.Http.Headers;
using System.Web.Http;
using ElasticsearchCRUD;
using ElasticsearchCRUD.ContextSearch;
using Microsoft.AspNet.SignalR;
using WebApiCSVExportFromElasticsearch.Models;
// Search, Query, MatchAllQuery and TimeUnitSecond also need their ElasticsearchCRUD
// namespace imports; the exact namespaces depend on the library version.

namespace WebApiCSVExportFromElasticsearch.Controllers
{
    public class PersonsCsvExportController : ApiController
    {
        private readonly IHubContext _hubContext = GlobalHost.ConnectionManager.GetHubContext<DiagnosisEventSourceService>();

        [Route("api/PersonsCsvExport")]
        public IHttpActionResult GetPersonsCsvExport()
        {
            _hubContext.Clients.All.addDiagnosisMessage("Csv export starting");

            // Force this method to always return an Excel document.
            Request.Headers.Accept.Clear();
            Request.Headers.Accept.Add(new MediaTypeWithQualityHeaderValue("application/vnd.ms-excel"));

            _hubContext.Clients.All.addDiagnosisMessage("ScanAndScrollConfiguration: 1s, 300 items per shard");
            _hubContext.Clients.All.addDiagnosisMessage("sending scan and scroll _search");
            _hubContext.Clients.All.addDiagnosisMessage(BuildSearchMatchAll());

            var result = new List<Person>();
            using (var context = new ElasticsearchContext("http://localhost:9200/", new ElasticsearchMappingResolver()))
            {
                // Forward the ElasticsearchCRUD trace messages to the SignalR clients.
                context.TraceProvider = new SignalRTraceProvider(_hubContext, TraceEventType.Information);

                // Keep the scroll context alive for 1 second; return up to 300 documents per shard per request.
                var scanScrollConfig = new ScanAndScrollConfiguration(new TimeUnitSecond(1), 300);

                // The first request returns the total number of hits and a scrollId.
                var scrollIdResult = context.SearchCreateScanAndScroll<Person>(BuildSearchMatchAll(), scanScrollConfig);
                var scrollId = scrollIdResult.ScrollId;
                _hubContext.Clients.All.addDiagnosisMessage(string.Format("Total Hits: {0}", scrollIdResult.TotalHits));

                int processedResults = 0;
                while (scrollIdResult.TotalHits > processedResults)
                {
                    // Each scroll request returns the next batch and a new scrollId.
                    var resultCollection = context.SearchScanAndScroll<Person>(scrollId, scanScrollConfig);
                    scrollId = resultCollection.ScrollId;
                    result.AddRange(resultCollection.PayloadResult);

                    // Stop if a scroll returns no documents, to avoid an endless loop.
                    if (result.Count == processedResults)
                    {
                        break;
                    }

                    processedResults = result.Count;
                    _hubContext.Clients.All.addDiagnosisMessage(string.Format("Total Hits: {0}, Processed: {1}", scrollIdResult.TotalHits, processedResults));
                }
            }

            _hubContext.Clients.All.addDiagnosisMessage("Elasticsearch processing finished, starting to serialize csv");
            return Ok(result);
        }

        //{
        //  "query" : {
        //    "match_all" : {}
        //  }
        //}
        private Search BuildSearchMatchAll()
        {
            return new Search()
            {
                Query = new Query(new MatchAllQuery())
            };
        }
    }
}
```

Elasticsearch scan and scroll with ElasticsearchCRUD

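The scan and scroll feature can be used to select data quickly without any sorting, since sorting is an expensive operation. The first request, sent to the _search API (SearchCreateScanAndScroll), defines the query for the scan and returns the total number of hits for this query together with a scrollId.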
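To make the two ElasticsearchCRUD calls more concrete, the following sketch (in JavaScript, the language of the page's client script) builds the raw Elasticsearch 1.x REST requests of a scan-and-scroll session. It is an assumption that ElasticsearchCRUD sends requests of exactly this shape, and `buildScanRequest` and `buildScrollRequest` are hypothetical helpers that only describe the requests; nothing is sent. `search_type=scan` exists in Elasticsearch 1.x; it was deprecated in 2.1 and removed in 5.0.

```javascript
// Hypothetical helpers describing the raw HTTP calls of an Elasticsearch 1.x
// scan-and-scroll session. They only build request descriptions; nothing is sent.
function buildScanRequest(baseUrl, index, keepAlive, sizePerShard, query) {
  return {
    method: "POST",
    // search_type=scan skips sorting; scroll sets how long the context is kept alive.
    url: `${baseUrl}${index}/_search?search_type=scan&scroll=${keepAlive}`,
    // With search_type=scan, "size" is applied per shard.
    body: JSON.stringify({ query, size: sizePerShard }),
  };
}

function buildScrollRequest(baseUrl, keepAlive, scrollId) {
  return {
    method: "POST",
    url: `${baseUrl}_search/scroll?scroll=${keepAlive}`,
    // Elasticsearch 1.x accepts the raw scrollId as the request body.
    body: scrollId,
  };
}

const scan = buildScanRequest("http://localhost:9200/", "persons", "1s", 300, { match_all: {} });
console.log(scan.url);  // http://localhost:9200/persons/_search?search_type=scan&scroll=1s
console.log(scan.body); // {"query":{"match_all":{}},"size":300}
```

The scan request returns only the total and a scrollId; every document arrives through the scroll requests that follow.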

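This scrollId is then used to retrieve the next batch. The scan is configured with the ScanAndScrollConfiguration class, which sets two values: how long Elasticsearch keeps the scroll context alive between requests (1 second here) and the maximum number of documents each shard returns per request (300 here). With a size of 300 and an index with 5 shards, each scroll request therefore returns up to 1500 documents.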

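Every subsequent scroll request returns a new scrollId, which is then used for the next scroll (n + 1). This continues until all documents in the scan have been selected.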
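The scrollId chaining and the per-shard size can be tried without a cluster. The following JavaScript sketch uses a hypothetical in-memory stand-in (`createFakeCluster`) for a scan-and-scroll session and a loop (`exportAll`) shaped like the one in the controller. The per-shard document counts are made up, and 5 shards is an assumption (it was the Elasticsearch 1.x default); real behavior such as scroll expiry is not modeled.

```javascript
// Hypothetical in-memory stand-in for a scan-and-scroll session. Each scroll
// request takes up to sizePerShard documents from every shard that still has
// data and hands out a new scrollId; only the newest scrollId is accepted.
function createFakeCluster(shardDocCounts, sizePerShard) {
  const offsets = shardDocCounts.map(() => 0);
  let scrollCounter = 0;
  let currentScrollId = null;

  function nextScrollId() {
    scrollCounter += 1;
    currentScrollId = `scroll-${scrollCounter}`;
    return currentScrollId;
  }

  return {
    // Like the initial scan request: total hits and a scrollId, no documents.
    createScan() {
      const totalHits = shardDocCounts.reduce((sum, n) => sum + n, 0);
      return { totalHits, scrollId: nextScrollId() };
    },
    // Like a scroll request: the next batch plus a new scrollId.
    scroll(scrollId) {
      if (scrollId !== currentScrollId) {
        throw new Error(`stale scrollId: ${scrollId}`);
      }
      const hits = [];
      shardDocCounts.forEach((count, shard) => {
        const take = Math.min(sizePerShard, count - offsets[shard]);
        for (let i = 0; i < take; i++) {
          hits.push({ shard, doc: offsets[shard] + i });
        }
        offsets[shard] += take;
      });
      return { hits, scrollId: nextScrollId() };
    },
  };
}

// Scroll until all hits are processed, always continuing with the newest scrollId.
function exportAll(cluster) {
  const first = cluster.createScan();
  let scrollId = first.scrollId;
  const result = [];
  let requests = 0;
  while (first.totalHits > result.length) {
    const page = cluster.scroll(scrollId);
    requests += 1;
    scrollId = page.scrollId;
    if (page.hits.length === 0) {
      break; // an empty batch means no more data; stop instead of looping forever
    }
    result.push(...page.hits);
  }
  return { totalHits: first.totalHits, result, requests };
}

const even = exportAll(createFakeCluster([4000, 4000, 4000, 4000, 4000], 300));
console.log(even.result.length, even.requests); // 20000 14
```

With 20,000 documents spread evenly over 5 shards, each request returns up to 1,500 documents and the loop needs 14 scroll requests. If one shard holds more documents than the others, the later requests return fewer than 1,500 documents, so more requests are needed.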

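```csharp
var result = new List<Person>();
using (var context = new ElasticsearchContext("http://localhost:9200/", new ElasticsearchMappingResolver()))
{
    // Same configuration as in the controller: 1 second keep-alive, 300 documents per shard.
    var scanScrollConfig = new ScanAndScrollConfiguration(new TimeUnitSecond(1), 300);
    var scrollIdResult = context.SearchCreateScanAndScroll<Person>(BuildSearchMatchAll(), scanScrollConfig);
    var scrollId = scrollIdResult.ScrollId;
    int processedResults = 0;
    while (scrollIdResult.TotalHits > processedResults)
    {
        var resultCollection = context.SearchScanAndScroll<Person>(scrollId, scanScrollConfig);
        // Always continue with the scrollId returned by the latest request.
        scrollId = resultCollection.ScrollId;

        // Use the data here: resultCollection.PayloadResult
        result.AddRange(resultCollection.PayloadResult);

        // Stop if a scroll returns no documents, to avoid an endless loop.
        if (result.Count == processedResults)
        {
            break;
        }
        processedResults = result.Count;
    }
}
```

ElasticsearchCRUD TraceProvider using SignalR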

The example also forwards the ElasticsearchCRUD trace messages to the browser using SignalR. An IHubContext is created and then passed to the SignalRTraceProvider:

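```csharp
private readonly IHubContext _hubContext = GlobalHost.ConnectionManager.GetHubContext<DiagnosisEventSourceService>();

context.TraceProvider = new SignalRTraceProvider(_hubContext, TraceEventType.Information);
```

The TraceProvider sends a message to all clients when the event's TraceEventType value is lower than or equal to the level passed to the constructor. Because more severe events have smaller values (Critical = 1, Error = 2, Warning = 4, Information = 8, Verbose = 16), TraceEventType.Information forwards critical, error, warning and information events, but not verbose ones.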
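The filter can be checked in isolation. The following JavaScript sketch copies the numeric values of .NET's `System.Diagnostics.TraceEventType` and mirrors the `_traceEventTypelogLevel >= level` comparison used by the provider; `shouldSend` is a hypothetical helper, not part of ElasticsearchCRUD.

```javascript
// Numeric values of .NET System.Diagnostics.TraceEventType (smaller = more severe).
const TraceEventType = Object.freeze({
  Critical: 1,
  Error: 2,
  Warning: 4,
  Information: 8,
  Verbose: 16,
});

// Same comparison as SignalRTraceProvider: send when configuredLevel >= level.
function shouldSend(configuredLevel, level) {
  return configuredLevel >= level;
}

const configured = TraceEventType.Information;
const sent = Object.keys(TraceEventType).filter((name) =>
  shouldSend(configured, TraceEventType[name])
);
console.log(sent); // [ 'Critical', 'Error', 'Warning', 'Information' ]
```

Passing TraceEventType.Verbose to the constructor would forward Verbose events as well; passing TraceEventType.Error would limit the output to Critical and Error events.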

```csharp
using System;
using System.Diagnostics;
using System.Text;
using ElasticsearchCRUD.Tracing;
using Microsoft.AspNet.SignalR;

namespace WebApiCSVExportFromElasticsearch
{
    public class SignalRTraceProvider : ITraceProvider
    {
        private readonly TraceEventType _traceEventTypelogLevel;
        private readonly IHubContext _hubContext;

        public SignalRTraceProvider(IHubContext hubContext, TraceEventType traceEventTypelogLevel)
        {
            _traceEventTypelogLevel = traceEventTypelogLevel;
            _hubContext = hubContext;
        }

        public void Trace(TraceEventType level, string message, params object[] args)
        {
            // Smaller TraceEventType values are more severe: send every event
            // that is at least as severe as the configured level.
            if (_traceEventTypelogLevel >= level)
            {
                var sb = new StringBuilder();
                sb.AppendLine();
                sb.Append(DateTime.Now.ToString("yyyy-MM-dd HH:mm:ss") + ": ");
                sb.Append(string.Format(message, args));
                _hubContext.Clients.All.addDiagnosisMessage(string.Format("{0}: {1}", level, sb.ToString()));
            }
        }

        public void Trace(TraceEventType level, Exception ex, string message, params object[] args)
        {
            if (_traceEventTypelogLevel >= level)
            {
                var sb = new StringBuilder();
                sb.AppendLine();
                sb.Append(DateTime.Now.ToString("yyyy-MM-dd HH:mm:ss") + ": ");
                sb.Append(string.Format(message, args));
                // Append the exception type, message and stack trace.
                sb.AppendFormat(" {2}: {0}, {1}", ex.Message, ex.StackTrace, ex.GetType());
                _hubContext.Clients.All.addDiagnosisMessage(string.Format("{0}: {1}", level, sb.ToString()));
            }
        }
    }
}
```

SignalR can be downloaded from NuGet and set up as follows (see the ASP.NET SignalR documentation for more information):

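```csharp
using Microsoft.AspNet.SignalR;
using Owin;

namespace WebApiCSVExportFromElasticsearch
{
    // OWIN startup class: maps the SignalR hubs to the default /signalr path.
    public class Startup
    {
        public void Configuration(IAppBuilder app)
        {
            app.MapSignalR();
        }
    }

    // Empty hub; the server pushes messages to the clients through IHubContext.
    public class DiagnosisEventSourceService : Hub
    {
    }
}
```

The SignalR HTML client is configured as follows: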

<h4>Diagnosis</h4>

<div class="container">

<ol id="discussion"></ol>

</div>

@section scripts {

<!--Script references. -->

<!--The jQuery library is required and is referenced by default in _Layout.cshtml. -->

<!--Reference the SignalR library. -->

<script src="~/Scripts/jquery.signalR-2.1.2.js"></script>

<!--Reference the autogenerated SignalR hub script. -->

<script src="~/signalr/hubs"></script>

<!--SignalR script to update the chat page and send messages.-->

<script>

$(function () {

// Reference the auto-generated proxy for the hub.

var signalRService = $.connection.diagnosisEventSourceService;

// Create a function that the hub can call back to display messages.

signalRService.client.addDiagnosisMessage = function (message) {

// Add the message to the page.

$('#discussion').append('<li>' + htmlEncode(message) + '</li>');

};

// Start the connection.

$.connection.hub.start();

});

// This optional function html-encodes messages for display in the page.

function htmlEncode(value) {

var encodedValue = $('<div />').text(value).html();

return encodedValue;

}

</script>

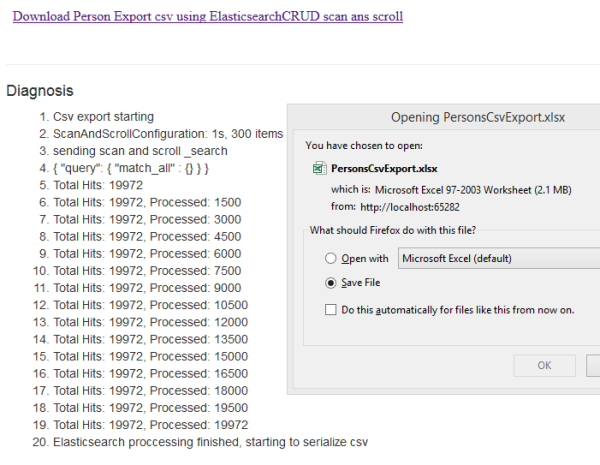

}可以在Home / Index MVC视图中查看或使用下载和诊断:

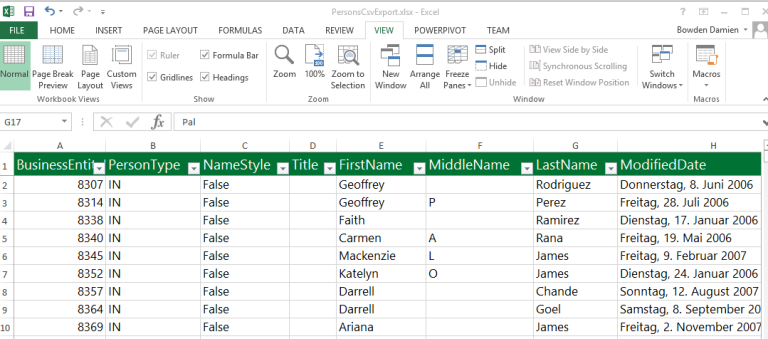

并且下载的CSV与Elasticsearch数据导出:

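Scan and scroll is very useful if you need to select large amounts of data from Elasticsearch without sorting. This is useful for backups, reindexing, or exporting data to a different medium.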
