Running the following code in IntelliJ IDEA:

import org.apache.spark.SparkConf;

import org.apache.spark.api.java.JavaSparkContext;

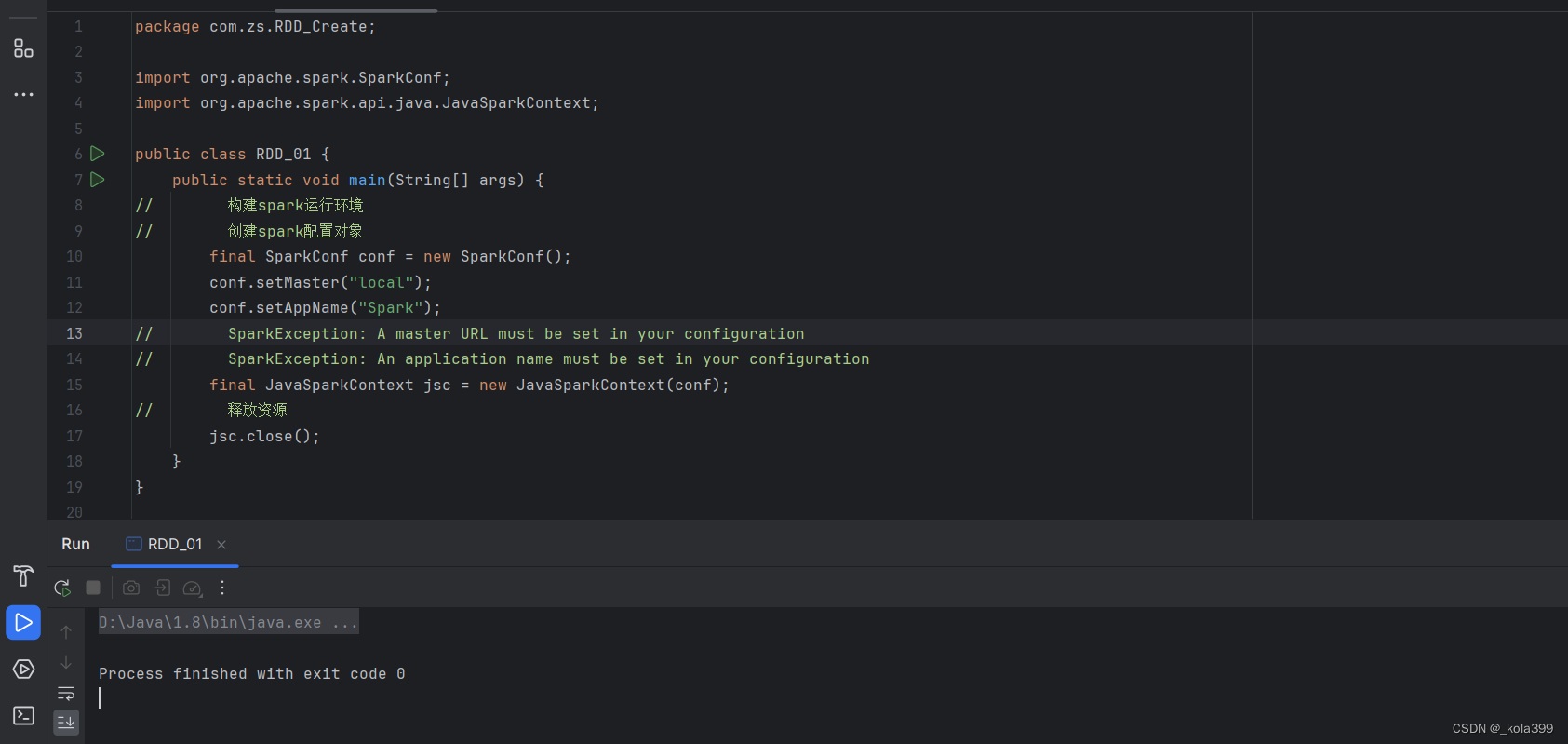

public class RDD_01 {

public static void main(String[] args) {

// Set up the Spark runtime environment

// Create the Spark configuration object

final SparkConf conf = new SparkConf();

conf.setMaster("local");

conf.setAppName("Spark");

// Without setMaster: SparkException: A master URL must be set in your configuration

// Without setAppName: SparkException: An application name must be set in your configuration

final JavaSparkContext jsc = new JavaSparkContext(conf);

// Release resources

jsc.close();

}

}

This fails with the following error:

Exception in thread "main" java.lang.ExceptionInInitializerError

at org.apache.spark.unsafe.array.ByteArrayMethods.<clinit>(ByteArrayMethods.java:56)

at org.apache.spark.memory.MemoryManager.defaultPageSizeBytes$lzycompute(MemoryManager.scala:264)

at org.apache.spark.memory.MemoryManager.defaultPageSizeBytes(MemoryManager.scala:254)

at org.apache.spark.memory.MemoryManager.$anonfun$pageSizeBytes$1(MemoryManager.scala:273)

at scala.runtime.java8.JFunction0$mcJ$sp.apply(JFunction0$mcJ$sp.java:23)

at scala.Option.getOrElse(Option.scala:189)

at org.apache.spark.memory.MemoryManager.<init>(MemoryManager.scala:273)

at org.apache.spark.memory.UnifiedMemoryManager.<init>(UnifiedMemoryManager.scala:58)

at org.apache.spark.memory.UnifiedMemoryManager$.apply(UnifiedMemoryManager.scala:207)

at org.apache.spark.SparkEnv$.create(SparkEnv.scala:320)

at org.apache.spark.SparkEnv$.createDriverEnv(SparkEnv.scala:194)

at org.apache.spark.SparkContext.createSparkEnv(SparkContext.scala:279)

at org.apache.spark.SparkContext.<init>(SparkContext.scala:464)

at org.apache.spark.api.java.JavaSparkContext.<init>(JavaSparkContext.scala:58)

at com.zs.RDD_Create.RDD_01.main(RDD_01.java:15)

Caused by: java.lang.IllegalStateException: java.lang.NoSuchMethodException: java.nio.DirectByteBuffer.<init>(long,int)

at org.apache.spark.unsafe.Platform.<clinit>(Platform.java:113)

... 15 more

Caused by: java.lang.NoSuchMethodException: java.nio.DirectByteBuffer.<init>(long,int)

at java.base/java.lang.Class.getConstructor0(Class.java:3761)

at java.base/java.lang.Class.getDeclaredConstructor(Class.java:2930)

at org.apache.spark.unsafe.Platform.<clinit>(Platform.java:71)

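... 15 more

The root cause is an incompatibility between the Spark and Java versions: the JDK configured in IDEA is too new (mine was JDK 21). As the stack trace shows, Spark's Platform class looks up a java.nio.DirectByteBuffer constructor with the signature (long, int) via reflection, and that constructor does not exist in this JDK.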
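To see the mismatch directly, a small reflection probe can ask the running JVM whether it still has the constructor named in the stack trace. This is only a minimal sketch and not Spark's own code: the class name `DirectByteBufferProbe` and the helper `hasConstructor` are made up for illustration.

```java
// Hypothetical helper for illustration; not part of Spark.
class DirectByteBufferProbe {

    // Returns true if java.nio.DirectByteBuffer declares a constructor
    // with exactly the given parameter types on the running JVM.
    public static boolean hasConstructor(Class<?>... parameterTypes) {
        try {
            Class<?> cls = Class.forName("java.nio.DirectByteBuffer");
            // getDeclaredConstructor also finds non-public constructors;
            // we only look it up here and never invoke it.
            cls.getDeclaredConstructor(parameterTypes);
            return true;
        } catch (ReflectiveOperationException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println("java.version = " + System.getProperty("java.version"));
        // This is the signature from the NoSuchMethodException in the stack trace.
        System.out.println("DirectByteBuffer(long, int) present: "
                + hasConstructor(long.class, int.class));
    }
}
```

Given the stack trace above, the probe should report the constructor as missing when run on the JDK 21 setup described here.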

Solution:

1. Go to the Oracle JDK 8 download page and download JDK 8; I downloaded jdk-8u411-windows-x64.exe;

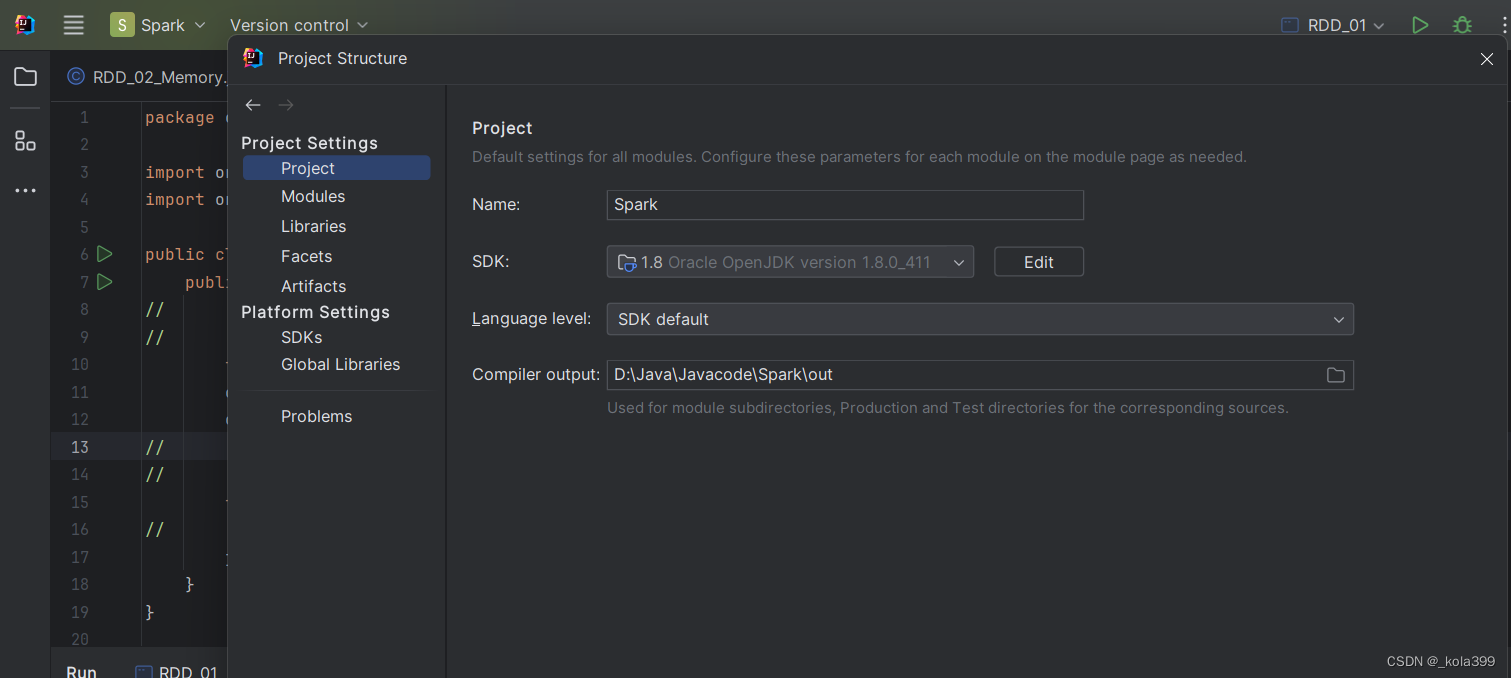

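2. In IntelliJ IDEA, click File in the menu bar, then choose Project Structure;

3. Set both the Project SDK and the Modules SDK to the path of the JDK 8 you just installed;

4. Configure Maven to use Java 8. My Maven project's pom.xml declared JDK 21, which causes an "invalid version" error at this point, so it has to be changed to JDK 8: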

<properties>

<maven.compiler.source>1.8</maven.compiler.source>

<maven.compiler.target>1.8</maven.compiler.target>

</properties>

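5. You can now create a new file to verify the configuration (if it prints the 1.8 version you just installed, everything is set up correctly):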

public class TestJavaVersion {

public static void main(String[] args) {

System.out.println(System.getProperty("java.version"));

}

}

6. Run the original code again; it should no longer fail.
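As an optional safeguard, the version check from step 5 can be turned into a guard that warns early when the project is started on a different JDK. The following is a minimal sketch under the assumptions of this article (JDK 8 is the expected version); the class name `JavaVersionGuard` and the method `majorVersion` are hypothetical names chosen for illustration. Other Spark releases may support other JDK versions, so check the documentation of the release you use before hard-coding a number.

```java
// Hypothetical helper for illustration; adjust EXPECTED_MAJOR to your setup.
class JavaVersionGuard {

    // Assumption taken from this article: the project is meant to run on JDK 8.
    static final int EXPECTED_MAJOR = 8;

    // Converts a java.specification.version string to a major version number.
    // Java 8 and earlier report "1.x" (for example "1.8"); Java 9+ report "9", "11", "21", ...
    public static int majorVersion(String specVersion) {
        String[] parts = specVersion.split("\\.");
        int first = Integer.parseInt(parts[0]);
        if (first == 1 && parts.length > 1) {
            return Integer.parseInt(parts[1]);
        }
        return first;
    }

    public static void main(String[] args) {
        int major = majorVersion(System.getProperty("java.specification.version"));
        System.out.println("Java major version: " + major);
        if (major != EXPECTED_MAJOR) {
            System.err.println("Warning: expected JDK " + EXPECTED_MAJOR
                    + " but running on JDK " + major
                    + "; check the Project SDK and the pom.xml compiler settings.");
        }
    }
}
```

Calling `majorVersion` at the top of `main` in the Spark program, before the `JavaSparkContext` is created, would surface a wrong SDK setting as a readable message instead of a long stack trace.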
