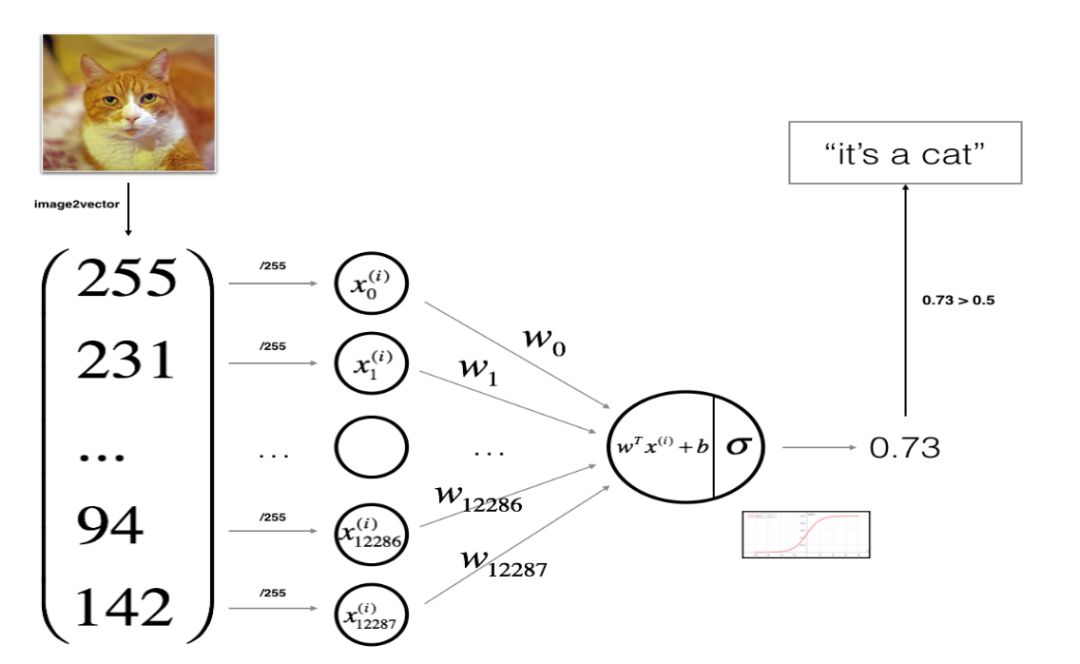

The figure above shows the simplest single-layer perceptron: it has multiple inputs and one output.
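As a minimal sketch of what such a unit computes, the snippet below takes a weighted sum of the inputs, adds a bias, and passes the result through a sigmoid. The sigmoid is an assumption carried over from the code later in this post (the figure itself may show a different activation), and the name `perceptron_output` and the input values are purely illustrative.

```python
import numpy as np

def perceptron_output(x, w, b):
    """Weighted sum of the inputs plus a bias, followed by a sigmoid."""
    z = np.dot(w, x) + b             # scalar pre-activation
    return 1.0 / (1.0 + np.exp(-z))  # squash into the range (0, 1)

x = np.array([1.0, 2.0, 3.0])  # three inputs (illustrative values)
w = np.zeros(3)                # zero weights, one per input
b = 0.0                        # zero bias

print(perceptron_output(x, w, b))  # 0.5, because sigmoid(0) = 0.5
```

With zero weights the unit ignores its inputs entirely, which is why the output sits exactly at 0.5 until training moves the weights.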

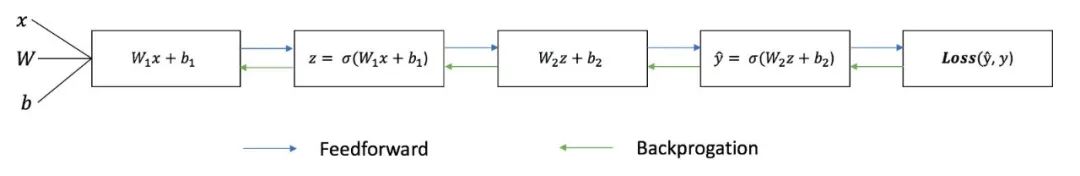

This figure shows the complete flow: left to right is forward propagation, and right to left is the weight update.
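Written out as equations, the two directions might look like the following. The notation is an assumption chosen to match the numpy code in this post rather than the figure: $X$ holds one sample per column, $m$ is the number of samples, $a^{(i)}$ and $y^{(i)}$ are the prediction and label for sample $i$, and $\alpha$ is the learning rate.

```latex
\begin{aligned}
A &= \sigma(w^{T}X + b), \qquad \sigma(z) = \frac{1}{1+e^{-z}} \\
J &= -\frac{1}{m}\sum_{i=1}^{m}\Big[\,y^{(i)}\log a^{(i)} + \big(1-y^{(i)}\big)\log\big(1-a^{(i)}\big)\Big] \\
\frac{\partial J}{\partial w} &= \frac{1}{m}\,X\,(A-Y)^{T}, \qquad
\frac{\partial J}{\partial b} = \frac{1}{m}\sum_{i=1}^{m}\big(a^{(i)}-y^{(i)}\big) \\
w &\leftarrow w - \alpha\,\frac{\partial J}{\partial w}, \qquad
b \leftarrow b - \alpha\,\frac{\partial J}{\partial b}
\end{aligned}
```

The first two lines are the left-to-right pass (prediction and cost); the last two are the right-to-left pass (gradients and the weight update).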

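Frameworks such as TF and Caffe can now do everything below in a single line, but for anyone working to improve, implementing the basic functionality in basic code is still well worth it.

Now let's build the network with numpy: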

import numpy as np

# Activation function
def sigmoid(x):
    return 1 / (1 + np.exp(-x))

# Initialization: zero weights of shape (dim, 1) and a scalar zero bias
def initialize_with_zeros(dim):
    w = np.zeros((dim, 1))
    b = 0.0
    return w, b

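# Forward pass, cost, and gradients
def propagate(w, b, X, Y):
    # m is the number of samples (X holds one sample per column)
    m = X.shape[1]

    # Forward propagation
    A = sigmoid(np.dot(w.T, X) + b)

    # Cross-entropy loss
    cost = -1 / m * np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A))

    # Gradients of the cost with respect to w and b.
    # For a sigmoid output with cross-entropy loss, the chain rule
    # simplifies to these expressions in the error A - Y.
    dw = np.dot(X, (A - Y).T) / m
    db = np.sum(A - Y) / m

    assert dw.shape == w.shape
    assert db.dtype == float

    # Reduce the cost to a scalar
    cost = np.squeeze(cost)
    assert cost.shape == ()

    grads = {'dw': dw,
             'db': db}

    return grads, cost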
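One way to confirm that the `dw` and `db` expressions really are the gradients of the cross-entropy cost is a finite-difference check. The sketch below re-implements the cost and the analytic gradients in a self-contained form and compares them against central differences. The helper names (`cost_fn`, `analytic_grads`, `numeric_grads`), the random test data, and the step size `eps` are all illustrative assumptions, not part of the original code.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def cost_fn(w, b, X, Y):
    """Mean cross-entropy cost, same formula as in propagate."""
    m = X.shape[1]
    A = sigmoid(np.dot(w.T, X) + b)
    return float(-np.sum(Y * np.log(A) + (1 - Y) * np.log(1 - A)) / m)

def analytic_grads(w, b, X, Y):
    """Closed-form gradients, same formulas as dw and db in propagate."""
    m = X.shape[1]
    A = sigmoid(np.dot(w.T, X) + b)
    return np.dot(X, (A - Y).T) / m, float(np.sum(A - Y) / m)

def numeric_grads(w, b, X, Y, eps=1e-6):
    """Central-difference approximation of the same gradients."""
    dw = np.zeros_like(w)
    for i in range(w.shape[0]):
        step = np.zeros_like(w)
        step[i, 0] = eps
        dw[i, 0] = (cost_fn(w + step, b, X, Y)
                    - cost_fn(w - step, b, X, Y)) / (2 * eps)
    db = (cost_fn(w, b + eps, X, Y) - cost_fn(w, b - eps, X, Y)) / (2 * eps)
    return dw, db

# Small random problem: 3 features, 5 samples (illustrative only)
rng = np.random.default_rng(0)
X = rng.normal(size=(3, 5))
Y = rng.integers(0, 2, size=(1, 5)).astype(float)
w = rng.normal(size=(3, 1)) * 0.1
b = 0.05

dw_a, db_a = analytic_grads(w, b, X, Y)
dw_n, db_n = numeric_grads(w, b, X, Y)
max_diff = max(np.max(np.abs(dw_a - dw_n)), abs(db_a - db_n))
print("max difference between analytic and numeric gradients:", max_diff)
```

If the two sets of gradients agree to within a very small tolerance, the closed-form expressions can be trusted for training.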

def backward_propagation(w, b, X, Y, num_iterations, learning_rate, print_cost=False):
    # List that collects the recorded costs
    costs = []

    # num_iterations is the number of gradient-descent iterations
    for i in range(num_iterations):
        grads, cost = propagate(w, b, X, Y)

        # Update the weights
        dw = grads['dw']
        db = grads['db']
        w = w - learning_rate * dw
        b = b - learning_rate * db

        # Store the cost every 100 training steps
        if i % 100 == 0:
            costs.append(cost)

        if print_cost and i % 100 == 0:
            print("cost after iteration %i: %f" % (i, cost))

    # Return the trained parameters under the keys that model() reads
    params = {"w": w,
              "b": b}

    grads = {"dw": dw,
             "db": db}

    return params, grads, costs
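A quick sanity check for the training loop: with zero-initialized weights and bias, every prediction is 0.5, so the cost at iteration 0 should equal ln 2 (about 0.693) whatever the data is. The sketch below verifies this on random data; the data shapes and the seed are illustrative assumptions.

```python
import numpy as np

# Random binary-classification data: 4 features, 10 samples (illustrative)
rng = np.random.default_rng(1)
X = rng.normal(size=(4, 10))
Y = rng.integers(0, 2, size=(1, 10)).astype(float)

# Zero initialization, as in the initialization function above
w = np.zeros((4, 1))
b = 0.0

# Forward pass and cross-entropy cost at the starting point
A = 1.0 / (1.0 + np.exp(-(np.dot(w.T, X) + b)))
initial_cost = -np.mean(Y * np.log(A) + (1 - Y) * np.log(1 - A))

print("initial cost:", initial_cost)
print("ln 2:        ", np.log(2))
```

If the first printed cost differs noticeably from this value, the initialization or the cost formula is a good place to look first.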

def predict(w, b, X):
    # m is the number of samples
    m = X.shape[1]

    # One prediction per sample
    Y_prediction = np.zeros((1, m))
    w = w.reshape(X.shape[0], 1)

    A = sigmoid(np.dot(w.T, X) + b)

    for i in range(A.shape[1]):
        # Threshold the predicted probability at 0.5
        if A[0, i] > 0.5:
            Y_prediction[0, i] = 1
        else:
            Y_prediction[0, i] = 0

    assert Y_prediction.shape == (1, m)

    return Y_prediction

# Wrap the code above in a single function

def model(X_train, Y_train, X_test, Y_test, num_iterations=2000, learning_rate=0.5, print_cost=False):
    w, b = initialize_with_zeros(X_train.shape[0])

    parameters, grads, costs = backward_propagation(w, b, X_train, Y_train, num_iterations, learning_rate, print_cost)

    w = parameters["w"]
    b = parameters["b"]

    Y_prediction_train = predict(w, b, X_train)
    Y_prediction_test = predict(w, b, X_test)

    print("train accuracy: {} %".format(100 - np.mean(np.abs(Y_prediction_train - Y_train)) * 100))
    print("test accuracy: {} %".format(100 - np.mean(np.abs(Y_prediction_test - Y_test)) * 100))

    d = {"costs": costs,
         "Y_prediction_test": Y_prediction_test,
         "Y_prediction_train": Y_prediction_train,
         "w": w,
         "b": b,
         "learning_rate": learning_rate,
         "num_iterations": num_iterations}

    return d
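The functions above expect `X` with shape (number of features, number of samples) and `Y` with shape (1, number of samples), which is the transpose of the row-per-sample layout that many datasets use. The sketch below shows one possible way to convert; `to_model_layout` is a hypothetical helper name, and the tiny arrays are made-up values for illustration only.

```python
import numpy as np

def to_model_layout(X_rows, y):
    """Convert row-per-sample data into the column-per-sample layout.

    X_rows: array of shape (m, n), one sample per row.
    y:      array of m binary labels.
    Returns X with shape (n, m) and Y with shape (1, m).
    """
    X = np.asarray(X_rows, dtype=float).T
    Y = np.asarray(y, dtype=float).reshape(1, -1)
    assert X.shape[1] == Y.shape[1], "X and Y must have the same number of samples"
    return X, Y

# Three samples with two features each (illustrative values)
X_rows = np.array([[0.1, 0.2],
                   [0.3, 0.4],
                   [0.5, 0.6]])
y = np.array([0, 1, 1])

X, Y = to_model_layout(X_rows, y)
print(X.shape, Y.shape)  # (2, 3) (1, 3)
```

Arrays prepared this way have the shapes that `model` expects for its `X_train`, `Y_train`, `X_test`, and `Y_test` arguments.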
