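Large files are usually uploaded in parts. Amazon S3 supports multipart upload, but on its own it does not cover the case where our backend goes down or restarts halfway through: the in-progress upload is simply lost.

This article combines a local thread pool with database records so that the backend can resume the uploads that were in flight before a crash or restart. It also uses SSE (Server-Sent Events) to restore the progress bar afterwards.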

First add the Maven dependencies. I use the ones below; 1.12.731 was the latest version of aws-java-sdk-s3 at the time of writing.

<dependency>
    <groupId>com.amazonaws</groupId>
    <artifactId>aws-java-sdk-s3</artifactId>
    <version>1.12.731</version>
</dependency>
<dependency>
    <groupId>commons-io</groupId>
    <artifactId>commons-io</artifactId>
    <version>2.16.1</version>
</dependency>

Configuring the AmazonS3 client

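Create a WebConfig class. It builds basic AWS credentials with BasicAWSCredentials and registers an AmazonS3 bean in Spring for later use. The same class also exposes the thread pool and the map of in-flight uploads used in the following sections.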
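The WebConfig listing hard-codes the two keys, which is fine for a demo. As a minimal sketch of one alternative, the helper below reads them from environment variables. The class name `S3CredentialSource` and the `KeyPair` record are hypothetical; the variable names are the ones the AWS tooling conventionally uses, but treat them as an assumption for your deployment.

```java
import java.util.Map;

/** Hypothetical helper: resolves the S3 key pair from a map of environment variables. */
final class S3CredentialSource {
    // Assumed variable names; adjust to whatever your deployment provides.
    static final String ACCESS_KEY_VAR = "AWS_ACCESS_KEY_ID";
    static final String SECRET_KEY_VAR = "AWS_SECRET_ACCESS_KEY";

    /** Holder for the two values that would be passed to BasicAWSCredentials. */
    record KeyPair(String accessKey, String secretKey) {}

    /** Fails fast when either variable is missing or blank. */
    static KeyPair fromEnv(Map<String, String> env) {
        String access = env.get(ACCESS_KEY_VAR);
        String secret = env.get(SECRET_KEY_VAR);
        if (access == null || access.isBlank() || secret == null || secret.isBlank()) {
            throw new IllegalStateException("Missing " + ACCESS_KEY_VAR + " or " + SECRET_KEY_VAR);
        }
        return new KeyPair(access, secret);
    }
}
```

Inside the bean method this could be used as `S3CredentialSource.fromEnv(System.getenv())`, passing `accessKey()` and `secretKey()` to `new BasicAWSCredentials(...)`. Taking the map as a parameter keeps the helper easy to test.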

// WebConfig.java
@Configuration
public class WebConfig {

    // Hard-coded for the demo only; do not commit real keys
    private static final String accessKey = "your accessKey";
    private static final String secretKey = "your secretKey";

    @Bean
    public AmazonS3 s3() {
        BasicAWSCredentials awsCredentials = new BasicAWSCredentials(accessKey, secretKey);
        return AmazonS3Client.builder()
                .withRegion(Regions.CN_NORTHWEST_1) // pick the region you need
                .withCredentials(new AWSStaticCredentialsProvider(awsCredentials))
                .build();
    }

    // Thread pool that runs the progress-monitoring tasks
    @Bean
    public ExecutorService executorService() {
        return Executors.newFixedThreadPool(10);
    }

    // In-flight uploads, keyed by uploadId
    @Bean
    public ConcurrentMap<String, MyThread> uploadingFileMap() {
        return new ConcurrentHashMap<>();
    }
}

File upload

Let's start with a basic version of multipart upload.
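Before the controller itself, it may help to see the part arithmetic in isolation. The sketch below is illustrative only: `PartPlanner` and `Part` are hypothetical names, not AWS SDK types. It splits a file of a given length into 1-based part numbers with their byte offsets and sizes. S3 requires every part except the last to be at least 5 MiB and allows at most 10,000 parts per upload.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative helper (not part of the AWS SDK): splits a file into numbered parts. */
final class PartPlanner {
    /** One part: 1-based part number, byte offset into the file, size in bytes. */
    record Part(int partNumber, long offset, long size) {}

    static List<Part> plan(long contentLength, long partSize) {
        if (contentLength < 0 || partSize <= 0) {
            throw new IllegalArgumentException("contentLength must be >= 0 and partSize > 0");
        }
        List<Part> parts = new ArrayList<>();
        long position = 0;
        for (int i = 1; position < contentLength; i++) {
            // The last part may be shorter than partSize
            long size = Math.min(partSize, contentLength - position);
            parts.add(new Part(i, position, size));
            position += size;
        }
        return parts;
    }
}
```

For a 12 MiB file and 5 MiB parts, `plan` yields three parts at offsets 0, 5 MiB, and 10 MiB, the last one 2 MiB long. The upload loop in the controller performs the same walk, issuing one UploadPartRequest per step.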

Create a new file, S3UploadController.

// S3UploadController.java (fields and methods of the controller class; final fields are constructor-injected)
private static final String bucketName = "testbuckname";
private final AmazonS3 s3;
private final ExecutorService executorService;
private final ConcurrentMap<String, MyThread> uploadingFileMap;
private final S3FileService service;
// File currently being uploaded; this demo handles one upload at a time
private File curFile = null;
long filePosition = 0;

@PostMapping("/file")
public String file(MultipartFile file) throws IOException {
    // Ignore the request while another upload is still running
    if (curFile != null) {
        return "Waiting for another file to finish uploading";
    }
    // Validate the file name before starting a multipart upload on S3
    String originalName = file.getOriginalFilename();
    if (originalName == null) {
        return "file is null";
    }
    // Compute the object key once so that every request uses the same key, even across midnight
    String key = monthAndDayDir() + originalName;
    // TransferManager built on the S3 client; MyThread uses it to submit an upload and wait for it to finish
    TransferManager tm = TransferManagerBuilder.standard().withS3Client(s3).build();
    InitiateMultipartUploadRequest initRequest = new InitiateMultipartUploadRequest(bucketName, key);
    InitiateMultipartUploadResult initResponse = s3.initiateMultipartUpload(initRequest);
    // Current upload position in bytes
    filePosition = 0;
    // Part size: 5 MB
    long partSize = 5 * 1024 * 1024;
    // Why a temp file instead of the incoming file? UploadPartRequest.withFile needs a java.io.File, so the MultipartFile has to be copied to one
    int idx = originalName.lastIndexOf(".");
    String prefix = idx > 0 ? originalName.substring(0, idx) : originalName;
    String suffix = idx > 0 ? originalName.substring(idx) : null;
    // createTempFile requires a prefix of at least three characters
    curFile = File.createTempFile(prefix.length() < 3 ? prefix + "___" : prefix, suffix);
    // Stream the content into the temp file (needs commons-io from the pom)
    FileUtils.copyInputStreamToFile(file.getInputStream(), curFile);
    long contentLength = file.getSize();
    // ETags of the parts uploaded so far
    List<PartETag> partETags = new ArrayList<>();
    // Progress monitoring over SSE runs in its own thread, described in the next section
    MyThread myThread = new MyThread(tm, bucketName, curFile, 0, uploadingFileMap, initResponse.getUploadId(), links, service, partETags);
    // Register the task in the in-memory map of in-flight uploads
    uploadingFileMap.put(initResponse.getUploadId(), myThread);
    // Start it
    executorService.submit(myThread);
    // Upload the parts one by one
    for (int i = 1; filePosition < contentLength; i++) {
        partSize = Math.min(partSize, (contentLength - filePosition));
        UploadPartRequest uploadRequest = new UploadPartRequest()
                .withBucketName(bucketName)
                .withKey(key)
                .withUploadId(initResponse.getUploadId())
                .withPartNumber(i)
                .withFileOffset(filePosition)
                .withFile(curFile)
                .withPartSize(partSize);
        UploadPartResult uploadResult = s3.uploadPart(uploadRequest);
        partETags.add(uploadResult.getPartETag());
        filePosition += partSize;
    }
    // Complete the upload; if the backend dies first, this point is never reached
    CompleteMultipartUploadRequest compRequest = new CompleteMultipartUploadRequest(bucketName, key,
            initResponse.getUploadId(), partETags);
    s3.completeMultipartUpload(compRequest);
    curFile.delete();
    curFile = null;
    return "ok";
}

// Returns today's date formatted as a directory prefix, e.g. 05/30/
private String monthAndDayDir() {
    SimpleDateFormat format = new SimpleDateFormat("MM/dd/");
    return format.format(new Date());
}

Progress bar

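Create MyThread.java to monitor upload progress.

It has to run asynchronously (here it is submitted to the thread pool), otherwise the monitoring would block the upload request.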

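// MyThread.java
@AllArgsConstructor
@NoArgsConstructor
@Slf4j
// No @EnableAsync/@Async here: MyThread is created with new and is not a Spring bean, so those annotations would have no effect.
// It runs asynchronously because it is submitted to the ExecutorService.
public class MyThread implements Runnable {
    TransferManager tm;
    String bucketName;
    File file;
    // Bytes transferred so far
    long process = 0;
    ConcurrentMap<String, MyThread> uploadingFileMap;
    String uploadId;
    Map<String, SseEmitter> links;
    S3FileService service;
    List<PartETag> partETags;

    @Override
    public void run() {
        // Note: this is a separate TransferManager upload of the same file, stored under the temp-file name; the demo uses it as the source of progress events
        PutObjectRequest request = new PutObjectRequest(bucketName, file.getName(), file);
        request.setGeneralProgressListener(progressEvent -> {
            // Enum case labels must be unqualified before Java 21
            switch (progressEvent.getEventType()) {
                case REQUEST_BYTE_TRANSFER_EVENT:
                    // Transfer in progress
                    process += progressEvent.getBytesTransferred();
                    // "1" is the client ID; choose your own, it is hard-coded here for the demo
                    if (links.containsKey("1")) {
                        SseEmitter emitter = links.get("1");
                        try {
                            emitter.send((double) process / file.length() * 100d);
                        } catch (IOException e) {
                            log.info("The client closed an established connection.");
                        }
                    }
                    break;
                case TRANSFER_PART_FAILED_EVENT:
                    // Transfer failed; this is not the backend-down case
                    break;
                case TRANSFER_COMPLETED_EVENT:
                    // Transfer completed
                    break;
                default:
                    break;
            }
        });
        Upload upload = tm.upload(request);
        try {
            upload.waitForUploadResult();
            uploadingFileMap.remove(uploadId);
        } catch (InterruptedException e) {
            // Restore the interrupt flag before propagating
            Thread.currentThread().interrupt();
            throw new RuntimeException(e);
        }
    }
}

Back in S3UploadController.java, add the endpoint that clients use to connect to the server.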

private static final Map<String, SseEmitter> links = new ConcurrentHashMap<>();

@GetMapping(value = "/conn/{id}", produces = {MediaType.TEXT_EVENT_STREAM_VALUE})
public SseEmitter conn(@PathVariable String id) throws IOException {
    SseEmitter emitter = links.get(id);
    if (emitter == null) {
        // New connection with a 60-second timeout
        emitter = new SseEmitter(1000L * 60);
        links.put(id, emitter);
        // Handshake message ("connected"); the front end filters it out
        emitter.send(SseEmitter.event().data("连接成功"));
        // Drop the emitter once the connection completes, times out, or fails
        emitter.onCompletion(() -> links.remove(id));
        emitter.onTimeout(() -> links.remove(id));
        emitter.onError((err) -> links.remove(id));
    }
    return emitter;
}

Connect from the front end with EventSource.

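// /api is the prefix the front end uses to proxy requests to the backend
const es = new EventSource('/api/s3/upload/conn/1')
es.onmessage = (e: MessageEvent) => {
  // Skip the '连接成功' ("connected") handshake message sent by the server
  if (e.data != '连接成功') {
    // Progress arrives as a percentage in string form
    totalProgress.value = Number(e.data)
  }
}
es.onopen = () => {
  console.log('connection')
}
es.onerror = () => {
  console.log('error')
}

Once connected, the server pushes the file upload progress to the client.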
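The value pushed to the client is the expression `(double) process / file.length() * 100d` from MyThread. As a small, hedged sketch of the same computation with two guards added (an empty file, and a byte count that overshoots the total, for example if bytes are re-sent after a retry), plus the shape of one SSE message on the wire. `ProgressFrames` is a hypothetical name, and in the real code Spring's SseEmitter does the framing for you.

```java
import java.util.Locale;

/** Illustrative helper (hypothetical, not from Spring or the AWS SDK). */
final class ProgressFrames {
    /** Percentage in [0, 100]; an empty file is reported as complete. */
    static double percent(long bytesTransferred, long totalBytes) {
        if (totalBytes <= 0) {
            return 100.0;
        }
        double p = bytesTransferred * 100.0 / totalBytes;
        return Math.max(0.0, Math.min(100.0, p));
    }

    /** One SSE message: a "data:" line terminated by a blank line. */
    static String sseFrame(double percent) {
        return "data:" + String.format(Locale.ROOT, "%.2f", percent) + "\n\n";
    }
}
```

On the browser side, `e.data` in `onmessage` is the text after `data:`, which is why the front end can convert it straight to a number.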

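Why not use the progress listener built into axios?

Because it reports the bytes sent from the browser to our backend, not from the backend to S3, and it only lives as long as that request. If the backend goes down, what is left to listen to?

Listing multipart uploads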

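@GetMapping("/ing")
public R ing() {
    // Lists the multipart uploads in the bucket that were started but not yet completed or aborted
    ListMultipartUploadsRequest allMultipartUploadsRequest = new ListMultipartUploadsRequest(bucketName);
    return R.ok(s3.listMultipartUploads(allMultipartUploadsRequest));
}

R is a wrapper class for responses, shown below.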

import lombok.*;

import lombok.experimental.Accessors;

import java.io.Serial;

import java.io.Serializable;

/**

* Response body wrapper

*/

@ToString

@NoArgsConstructor

@AllArgsConstructor

@Accessors(chain = true)

public class R<T> implements Serializable {

@Serial

private static final long serialVersionUID = 1L;

private static final Integer SUCCESS = 200;

private static final Integer FAIL = 500;

@Getter

@Setter

private int code;

@Getter

@Setter

private String msg;

@Getter

@Setter

private T data;

public static <T> R<T> ok() {

return restResult(null, SUCCESS, "success");

}

public static <T> R<T> ok(T data) {

return restResult(data, SUCCESS, "success");

}

public static <T> R<T> ok(T data, String msg) {

return restResult(data, SUCCESS, msg);

}

public static <T> R<T> failed() {

return restResult(null, FAIL, "error");

}

public static <T> R<T> failed(String msg) {

return restResult(null, FAIL, msg);

}

public static <T> R<T> failed(T data) {

return restResult(data, FAIL, "error");

}

public static <T> R<T> failed(T data, String msg) {

return restResult(data, FAIL, msg);

}

public static <T> R<T> restResult(T data, int code, String msg) {

R<T> apiResult = new R<>();

apiResult.setCode(code);

apiResult.setData(data);

apiResult.setMsg(msg);

return apiResult;

}

}

Aborting multipart uploads

@GetMapping("/stop")
public R stop() {
    // Aborts every in-progress multipart upload in the bucket and returns the aborted uploadIds
    ListMultipartUploadsRequest allMultipartUploadsRequest = new ListMultipartUploadsRequest(bucketName);
    MultipartUploadListing multipartUploadListing = s3.listMultipartUploads(allMultipartUploadsRequest);
    List<String> ans = new ArrayList<>();
    for (MultipartUpload multipartUpload : multipartUploadListing.getMultipartUploads()) {
        s3.abortMultipartUpload(new AbortMultipartUploadRequest(bucketName, multipartUpload.getKey(), multipartUpload.getUploadId()));
        ans.add(multipartUpload.getUploadId());
    }
    return R.ok(ans);
}

Resuming after a restart

在服务端(宕机/重启)后,我们要恢复上传,就必须要记录下之前上传的信息

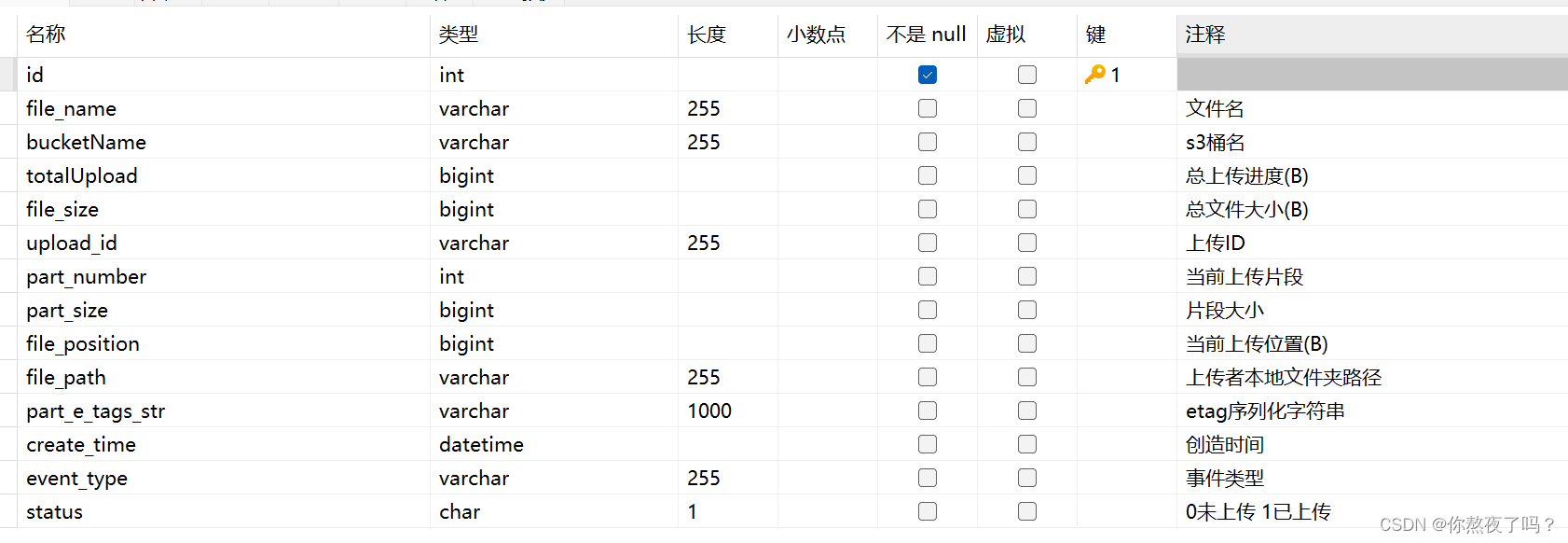

大概是这些,看图,建数据库存储。
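To make the recorded state concrete, here is a minimal sketch of it as a plain Java record. `UploadCheckpoint` is a hypothetical type (the article itself uses a MyBatis-Plus entity), and it assumes every completed part has the full part size, which holds for all parts except possibly the last. The two derived values are what a resume needs: the next part number and the byte offset to continue from.

```java
/** Hypothetical snapshot of one unfinished upload; fields mirror the state listed above. */
record UploadCheckpoint(String fileName, String bucketName, String uploadId,
                        long fileSize, long partSize, int partsDone, String filePath) {

    /** Byte offset at which the next part starts, capped at the file size. */
    long filePosition() {
        return Math.min((long) partsDone * partSize, fileSize);
    }

    /** 1-based number of the next part to upload. */
    int nextPartNumber() {
        return partsDone + 1;
    }

    /** True when every byte is covered by a completed part. */
    boolean allPartsUploaded() {
        return filePosition() >= fileSize;
    }
}
```

With a 12 MiB file, 5 MiB parts, and two completed parts, the next part is number 3 and starts at byte offset 10 MiB.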

The table definition is as follows.

SET NAMES utf8mb4;
SET FOREIGN_KEY_CHECKS = 0;

DROP TABLE IF EXISTS `s3_file`;
CREATE TABLE `s3_file` (
  `id` int NOT NULL AUTO_INCREMENT,
  `file_name` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_bin NULL DEFAULT NULL COMMENT 'file name',
  `bucketName` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_bin NULL DEFAULT NULL COMMENT 'S3 bucket name',
  `totalUpload` bigint NULL DEFAULT NULL COMMENT 'bytes uploaded so far (B)',
  `file_size` bigint NULL DEFAULT NULL COMMENT 'total file size (B)',
  `upload_id` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_bin NULL DEFAULT NULL COMMENT 'upload ID',
  `part_number` int NULL DEFAULT NULL COMMENT 'current part number',
  `part_size` bigint NULL DEFAULT NULL COMMENT 'part size (B)',
  `file_position` bigint NULL DEFAULT NULL COMMENT 'current upload position (B)',
  `file_path` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_bin NULL DEFAULT NULL COMMENT 'local path of the file being uploaded',
  `part_e_tags_str` varchar(1000) CHARACTER SET utf8mb4 COLLATE utf8mb4_bin NULL DEFAULT NULL COMMENT 'serialized ETag list',
  `create_time` datetime NULL DEFAULT NULL COMMENT 'creation time',
  `event_type` varchar(255) CHARACTER SET utf8mb4 COLLATE utf8mb4_bin NULL DEFAULT NULL COMMENT 'event type',
  `status` char(1) CHARACTER SET utf8mb4 COLLATE utf8mb4_bin NULL DEFAULT NULL COMMENT '0 = not uploaded, 1 = uploaded',
  PRIMARY KEY (`id`) USING BTREE
) ENGINE = InnoDB AUTO_INCREMENT = 16 CHARACTER SET = utf8mb4 COLLATE = utf8mb4_bin ROW_FORMAT = Dynamic;

SET FOREIGN_KEY_CHECKS = 1;

Use MyBatis-Plus to generate the Service, entity, and Mapper for this table.

Once that is done, continue 👇
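The resume step has to read back the ETag list stored in `part_e_tags_str`. The article serializes it with a JSON library; purely as a library-free illustration of the round trip, the sketch below uses a simple `partNumber:etag` comma-separated format. That format, the `PartTagCodec` class, and the `PartTag` record are all assumptions made for this example, not what the JSON library produces.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical, library-free codec for a list of (partNumber, eTag) pairs. */
final class PartTagCodec {
    record PartTag(int partNumber, String eTag) {}

    /** Encodes as "1:etagA,2:etagB". Assumes ETags contain neither ':' nor ','. */
    static String encode(List<PartTag> tags) {
        StringBuilder sb = new StringBuilder();
        for (PartTag t : tags) {
            if (sb.length() > 0) {
                sb.append(',');
            }
            sb.append(t.partNumber()).append(':').append(t.eTag());
        }
        return sb.toString();
    }

    /** Inverse of encode; a null or empty string decodes to an empty list. */
    static List<PartTag> decode(String encoded) {
        List<PartTag> tags = new ArrayList<>();
        if (encoded == null || encoded.isEmpty()) {
            return tags;
        }
        for (String item : encoded.split(",")) {
            int sep = item.indexOf(':');
            tags.add(new PartTag(Integer.parseInt(item.substring(0, sep)), item.substring(sep + 1)));
        }
        return tags;
    }
}
```

Whatever format is used, the stored string grows with the number of parts. With ETags of around 32 characters, a `varchar(1000)` column would likely fill up after a few dozen parts, so a larger column type may be worth considering for big files.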

Add a resume endpoint to S3UploadController.

@PostMapping("/up/{uploadId}")
public R continueUpload(@PathVariable String uploadId) {
    // Uploads that S3 still lists as in progress
    List<MultipartUpload> multipartUploads = service.getNotUploadedIds().getMultipartUploads();
    List<String> uploadIdList = multipartUploads.stream().map(MultipartUpload::getUploadId).toList();
    int idx = uploadIdList.indexOf(uploadId);
    if (idx == -1) {
        return R.failed("uploadId was aborted or has already completed");
    }
    MultipartUpload upload = multipartUploads.get(idx);
    S3File s3File = service.getOne(Wrappers.<S3File>lambdaQuery().eq(S3File::getUploadId, uploadId));
    if (s3File == null) {
        return R.failed("no saved record for this uploadId");
    }
    // Pass upload along because the stored name is the temp file's name, which differs from the real object key; the key in upload is the right one
    continueUpload(s3File, upload);
    return R.ok("Upload resumed");
}

private void continueUpload(S3File s3File, MultipartUpload upload) {
    TransferManager tm = TransferManagerBuilder.standard().withS3Client(s3).build();
    // Re-open the temp file at the path saved earlier
    File tempFile = new File(s3File.getFilePath());
    long partSize = s3File.getPartSize();
    s3File.setPartETags(JSONObject.parseObject(s3File.getPartETagsStr(), new TypeReference<List<PartETag>>() {
    }));
    List<PartETag> partETags = s3File.getPartETags();
    // Resume from the parts that actually completed. The progress listener in /file runs asynchronously and can be out of step with the part uploads, so partETags is the source of truth
    long filePosition = partETags.size() * s3File.getPartSize();
    MyThread myThread = new MyThread(tm, bucketName, tempFile, filePosition, uploadingFileMap, s3File.getUploadId(), links, service, partETags);
    uploadingFileMap.put(s3File.getUploadId(), myThread);
    executorService.submit(myThread);
    for (int i = partETags.size() + 1; filePosition < s3File.getFileSize(); i++) {
        // Use the local filePosition, which advances on every iteration; the saved s3File.getFilePosition() never changes
        partSize = Math.min(partSize, (s3File.getFileSize() - filePosition));
        UploadPartRequest uploadRequest = new UploadPartRequest()
                .withBucketName(bucketName)
                .withKey(upload.getKey())
                .withUploadId(s3File.getUploadId())
                .withPartNumber(i)
                .withFileOffset(filePosition)
                .withFile(tempFile)
                .withPartSize(partSize);
        UploadPartResult uploadResult = s3.uploadPart(uploadRequest);
        partETags.add(uploadResult.getPartETag());
        filePosition += partSize;
    }
    CompleteMultipartUploadRequest compRequest = new CompleteMultipartUploadRequest(bucketName, upload.getKey(), s3File.getUploadId(), partETags);
    s3.completeMultipartUpload(compRequest);
    tempFile.delete();
}

This raises a new question: how do we record this state when the server goes down or restarts?

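Create XXX.java. It implements DisposableBean, so Spring calls destroy() when the application context shuts down. Note that this only happens on a graceful shutdown or restart; a hard kill (kill -9) or a power loss never reaches this hook.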

@Component
@RequiredArgsConstructor
public class XXX implements DisposableBean {
    // Must match the part size used by the upload endpoint
    private static final long PART_SIZE = 5L * 1024 * 1024;
    private final ConcurrentMap<String, MyThread> uploadingFileMap;
    private final S3FileService s3FileService;

    @Override
    public void destroy() {
        System.out.println("-----Checking for unfinished uploads-----");
        for (Map.Entry<String, MyThread> entry : uploadingFileMap.entrySet()) {
            MyThread myThread = entry.getValue();
            // Progress is derived from partETags, i.e. the parts that actually completed, capped at the file size
            long uploaded = Math.min(myThread.partETags.size() * PART_SIZE, myThread.file.length());
            S3File s3File = new S3File();
            s3File.setFileName(monthAndDayDir() + myThread.file.getName());
            s3File.setBucketname(myThread.bucketName);
            s3File.setTotalupload(uploaded);
            s3File.setFileSize(myThread.file.length());
            s3File.setUploadId(myThread.uploadId);
            s3File.setPartNumber(myThread.partETags.size());
            // The part size is hard-coded here; change PART_SIZE if you use a different one
            s3File.setPartSize(PART_SIZE);
            s3File.setFilePosition(uploaded);
            s3File.setFilePath(myThread.file.getPath());
            s3File.setPartETagsStr(JSONObject.toJSONString(myThread.partETags));
            s3File.setCreateTime(new Date());
            s3File.setEventType("Abnormal thread exit");
            s3File.setStatus('0');
            // Insert a new record, or update the existing one for this uploadId
            if (s3FileService.count(Wrappers.<S3File>lambdaQuery().eq(S3File::getUploadId, myThread.uploadId)) == 0) {
                s3FileService.save(s3File);
            } else {
                s3FileService.update(s3File, Wrappers.<S3File>lambdaQuery().eq(S3File::getUploadId, myThread.uploadId));
            }
        }
    }

    private String monthAndDayDir() {
        SimpleDateFormat format = new SimpleDateFormat("MM/dd/");
        return format.format(new Date());
    }
}

Now call the resume endpoint. Once it finishes, the list of in-progress multipart uploads no longer contains the upload we just completed. Done!
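One limitation of saving state in destroy() is that the hook only runs on a graceful shutdown. As a hedged sketch of a complementary approach, the loop below records progress after every completed part, so the last saved value survives even an abrupt stop. Everything here is hypothetical: `CheckpointStore` stands in for the database write and `PartUploader` stands in for `s3.uploadPart(...)`.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch: persist progress after every part rather than only at shutdown. */
final class PerPartCheckpointing {
    /** Stand-in for the database; a real version would update the s3_file row. */
    interface CheckpointStore {
        void save(String uploadId, int partsDone);
    }

    /** Stand-in for s3.uploadPart(...); returns the ETag of the uploaded part. */
    interface PartUploader {
        String upload(int partNumber, long offset, long size);
    }

    /** Uploads the remaining parts, starting after partsDone, and returns their ETags. */
    static List<String> uploadFrom(String uploadId, int partsDone, long fileSize, long partSize,
                                   PartUploader uploader, CheckpointStore store) {
        List<String> etags = new ArrayList<>();
        long position = Math.min((long) partsDone * partSize, fileSize);
        for (int part = partsDone + 1; position < fileSize; part++) {
            long size = Math.min(partSize, fileSize - position);
            etags.add(uploader.upload(part, position, size));
            position += size;
            // Saved only after the part succeeded, so a restart never skips a part
            store.save(uploadId, part);
        }
        return etags;
    }
}
```

The cost is one extra write per part, which is small next to uploading 5 MB. A real version would also need to persist each part's ETag, since completing the multipart upload requires the full list.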

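Why use MyThread to monitor progress?

① It makes it easy to push progress updates over SSE.

② It is a convenient place to keep the state needed for resuming, so that after a restart the progress bar can be restored quickly by passing in the saved values.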
