Versions

Hadoop 3.1.0

https://archive.apache.org/dist/hadoop/common/hadoop-3.1.0/

JDK 1.8

ZooKeeper 3.4.6

https://archive.apache.org/dist/zookeeper/zookeeper-3.4.6/

Environment

Three CentOS 6 nodes

Set up passwordless SSH

ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa

cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

The NameNode host's public key must also be appended to ~/.ssh/authorized_keys on every other node.

Disable the firewall
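One common way to do that copy is `ssh-copy-id`. The sketch below only prints the commands so they can be reviewed first. It assumes the NameNode runs on node1, that the other hosts are node2 and node3 (the names used in the configuration later in this post), and that everything runs as root; `print_copy_key_cmds` is a helper name made up for this example.

```shell
# Assumption: node2 and node3 are the other two hosts; adjust to your cluster.
OTHER_NODES="node2 node3"

# Print one ssh-copy-id command per target host (nothing is executed here).
print_copy_key_cmds() {
  for host in $OTHER_NODES; do
    echo "ssh-copy-id -i ~/.ssh/id_rsa.pub root@$host"
  done
}

print_copy_key_cmds
# To run them for real: print_copy_key_cmds | sh
```

Afterwards, `ssh node2 hostname` from node1 should return without asking for a password.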

service iptables stop

chkconfig --level 3 iptables off

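In hadoop-3.1.0/etc/hadoop, configure workers, hadoop-env.sh, core-site.xml, hdfs-site.xml, mapred-site.xml, and yarn-site.xml.

In workers, list the hostname or address of each DataNode, one per line.

In hadoop-env.sh, add export JAVA_HOME=/usr/java/default

In core-site.xml (inside the <configuration> element):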
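As an illustration, the workers file could be written like this. The sketch assumes all three nodes run a DataNode, which the post does not state, so list only the hosts that should.

```shell
# Assumption: node1, node2 and node3 all run a DataNode.
CONF_DIR=hadoop-3.1.0/etc/hadoop
mkdir -p "$CONF_DIR"   # no-op when the directory already exists

# One hostname (or IP address) per line.
cat > "$CONF_DIR/workers" <<'EOF'
node1
node2
node3
EOF
```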

<property>

<name>fs.defaultFS</name>

<value>hdfs://mycluster</value>

</property>

<property>

<!-- this directory must not exist yet, or must be empty -->

<name>hadoop.tmp.dir</name>

<value>/opt/hadoop</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>node1:2181,node2:2181,node3:2181</value>

</property>

<property>

<name>hadoop.native.lib</name>

<value>true</value>

</property>

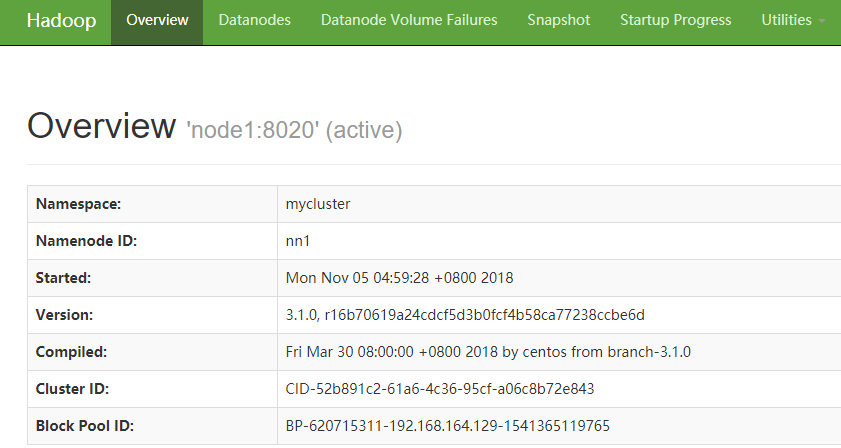

In hdfs-site.xml:

<property>

<name>dfs.nameservices</name>

<value>mycluster</value>

</property>

<property>

<name>dfs.ha.namenodes.mycluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn1</name>

<value>node1:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.mycluster.nn2</name>

<value>node2:8020</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn1</name>

<value>node1:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.mycluster.nn2</name>

<value>node2:50070</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://node1:8485;node2:8485;node3:8485/mycluster</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.mycluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<!-- private key file of the SSH key pair -->

<value>/root/.ssh/id_rsa</value>

</property>

<property>

<!-- the journal directory must not exist yet, or must be empty -->

<name>dfs.journalnode.edits.dir</name>

<value>/opt/journal/data</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>
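The nameservice ID has to agree across files: `dfs.nameservices` in hdfs-site.xml must match the authority part of `fs.defaultFS` in core-site.xml (mycluster in both). A small check along these lines can catch a typo before the first start. `get_prop` and `check_nameservice` are helpers invented for this sketch, and the parsing assumes the simple layout shown above, with each `<name>` and `<value>` on a single line.

```shell
# Print the <value> that follows <name>KEY</name> in a Hadoop XML file.
# $1 = file, $2 = property name.
get_prop() {
  awk -v key="$2" '
    index($0, "<name>" key "</name>") { found = 1 }
    found && match($0, /<value>.*<\/value>/) {
      # skip the 7 characters of <value> and drop the 8 of </value>
      print substr($0, RSTART + 7, RLENGTH - 15)
      exit
    }' "$1"
}

# Compare dfs.nameservices with fs.defaultFS; $1 = configuration directory.
check_nameservice() {
  ns=$(get_prop "$1/hdfs-site.xml" dfs.nameservices)
  fs=$(get_prop "$1/core-site.xml" fs.defaultFS)
  if [ "hdfs://$ns" = "$fs" ]; then
    echo "ok: $ns"
  else
    echo "mismatch: dfs.nameservices=$ns fs.defaultFS=$fs"
    return 1
  fi
}
```

Usage would be `check_nameservice hadoop-3.1.0/etc/hadoop`. Once Hadoop is on the PATH, `hdfs getconf -confKey dfs.nameservices` reads the same value through Hadoop itself.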

mapred-site.xml

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>yarn.app.mapreduce.am.env</name>

<value>HADOOP_MAPRED_HOME=/opt/hadoop-3.1.0</value>

</property>

<property>

<name>mapreduce.map.env</name>

<value>HADOOP_MAPRED_HOME=/opt/hadoop-3.1.0</value>

</property>

<property>

<name>mapreduce.reduce.env</name>

<value>HADOOP_MAPRED_HOME=/opt/hadoop-3.1.0</value>

</property>

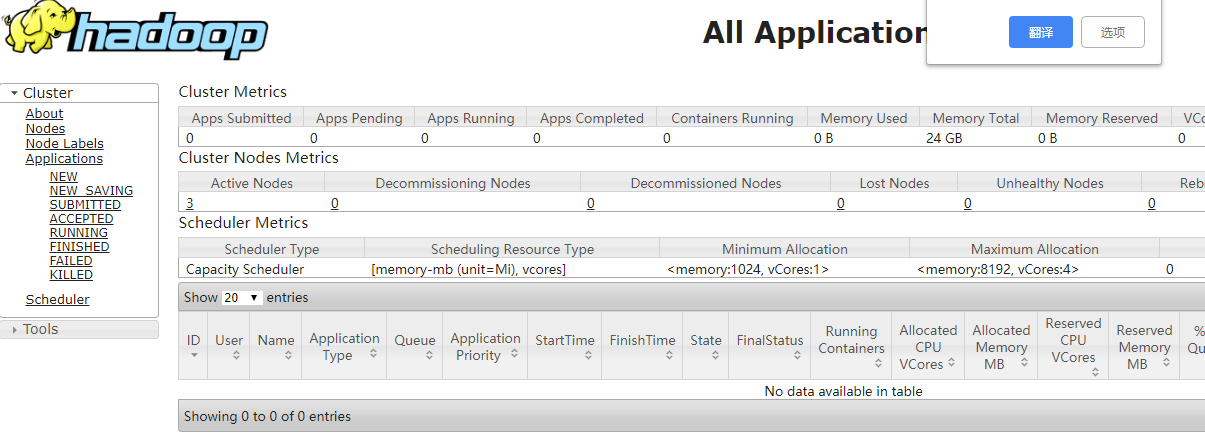

yarn-site.xml

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>cluster1</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>node1</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>node2</value>

</property>

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>node1:2181,node2:2181,node3:2181</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm1</name>

<value>node1:8088</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm2</name>

<value>node2:8088</value>

</property>

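In the sbin directory, edit start-dfs.sh, stop-dfs.sh, start-yarn.sh, and stop-yarn.sh. Hadoop 3 refuses to start daemons as root unless the corresponding *_USER variables are defined.

Add the following to start-dfs.sh and stop-dfs.sh: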

HDFS_NAMENODE_USER=root

HDFS_DATANODE_USER=root

HDFS_JOURNALNODE_USER=root

HDFS_ZKFC_USER=root

Add the following to start-yarn.sh and stop-yarn.sh:

YARN_RESOURCEMANAGER_USER=root

YARN_NODEMANAGER_USER=root
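A different approach from the one used in this post, shown only as an alternative: Hadoop 3 also reads these per-daemon user variables from hadoop-env.sh, so they can be set once there instead of in four scripts. The sketch below appends them to that file; the relative path assumes you run it from the directory that contains hadoop-3.1.0.

```shell
# Alternative to editing the sbin scripts: define the users in hadoop-env.sh.
ENV_FILE=hadoop-3.1.0/etc/hadoop/hadoop-env.sh
mkdir -p "$(dirname "$ENV_FILE")"   # no-op when the directory already exists

# Append the same six variables used above, exported for all Hadoop commands.
cat >> "$ENV_FILE" <<'EOF'
export HDFS_NAMENODE_USER=root
export HDFS_DATANODE_USER=root
export HDFS_JOURNALNODE_USER=root
export HDFS_ZKFC_USER=root
export YARN_RESOURCEMANAGER_USER=root
export YARN_NODEMANAGER_USER=root
EOF
```

Use one approach or the other; defining the variables in both places is harmless but redundant.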

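Distribute the configured installation to the other nodes

Start the JournalNode on all three nodes (hadoop-daemon.sh still works in 3.x but prints a deprecation warning; hdfs --daemon start journalnode is the newer form)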
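The post does not show the copy command itself. A minimal sketch with `scp` follows; it assumes Hadoop was unpacked and configured at /opt/hadoop-3.1.0 on node1 (the path used in mapred-site.xml) and that node2 and node3 are the targets. It only prints the commands, and `print_distribute_cmds` is a name made up for this example.

```shell
# Assumptions: install directory and target hosts as described above.
HADOOP_DIR=/opt/hadoop-3.1.0
TARGETS="node2 node3"

# Print one recursive scp command per target host (nothing is executed here).
print_distribute_cmds() {
  for host in $TARGETS; do
    echo "scp -r $HADOOP_DIR root@$host:$(dirname "$HADOOP_DIR")/"
  done
}

print_distribute_cmds
# To run them for real: print_distribute_cmds | sh
```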

hadoop-3.1.0/sbin/hadoop-daemon.sh start journalnode

Format one of the NameNodes and start it

hadoop-3.1.0/bin/hdfs namenode -format

hadoop-3.1.0/sbin/hadoop-daemon.sh start namenode

On the other NameNode host, sync the metadata from the one just started

hadoop-3.1.0/bin/hdfs namenode -bootstrapStandby

Initialize the HA state in ZooKeeper (the ZooKeeper ensemble must already be running)

hadoop-3.1.0/bin/hdfs zkfc -formatZK

Start the cluster

hadoop-3.1.0/sbin/start-all.sh
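Once everything is up, it can help to confirm that exactly one NameNode and one ResourceManager report active. The sketch below uses `hdfs haadmin -getServiceState` and `yarn rmadmin -getServiceState` with the IDs from the configuration above (nn1, nn2, rm1, rm2). It assumes the Hadoop bin directory is on the PATH, and `check_ha_state` is a helper name invented here.

```shell
# IDs taken from dfs.ha.namenodes.mycluster and yarn.resourcemanager.ha.rm-ids.
NAMENODES="nn1 nn2"
RMS="rm1 rm2"

# Ask each NameNode and ResourceManager whether it is active or standby.
check_ha_state() {
  for nn in $NAMENODES; do
    echo "NameNode $nn: $(hdfs haadmin -getServiceState "$nn")"
  done
  for rm in $RMS; do
    echo "ResourceManager $rm: $(yarn rmadmin -getServiceState "$rm")"
  done
}

# Only query the cluster when the Hadoop commands are actually available.
if command -v hdfs >/dev/null 2>&1 && command -v yarn >/dev/null 2>&1; then
  check_ha_state
else
  echo "hdfs/yarn not found on PATH; run this on a cluster node"
fi
```

Running `jps` on each node is a complementary check that the expected daemons (NameNode, DataNode, JournalNode, DFSZKFailoverController, ResourceManager, NodeManager) are running.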

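Set environment variables (optional). Point HADOOP_HOME at your actual install directory; note that mapred-site.xml above uses /opt/hadoop-3.1.0.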

vim /etc/profile.d/hadoop.sh

export HADOOP_HOME=/home/hadoop-3.1.0

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

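The cluster can then be checked in a browser on port 50070 (NameNode web UI) and port 8088 (ResourceManager web UI), for example http://node1:50070 and http://node1:8088.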
