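Preface

The previous two posts covered the entity classes and interfaces behind watchers on the client and on the server. This post ties those classes, interfaces, and the surrounding framework together and walks through how ZooKeeper (ZK) watchers are registered and triggered.

Overall, the ZK watcher mechanism has three phases:

- the client registers a watcher;

- the server processes the watcher;

- the client calls the watcher back.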

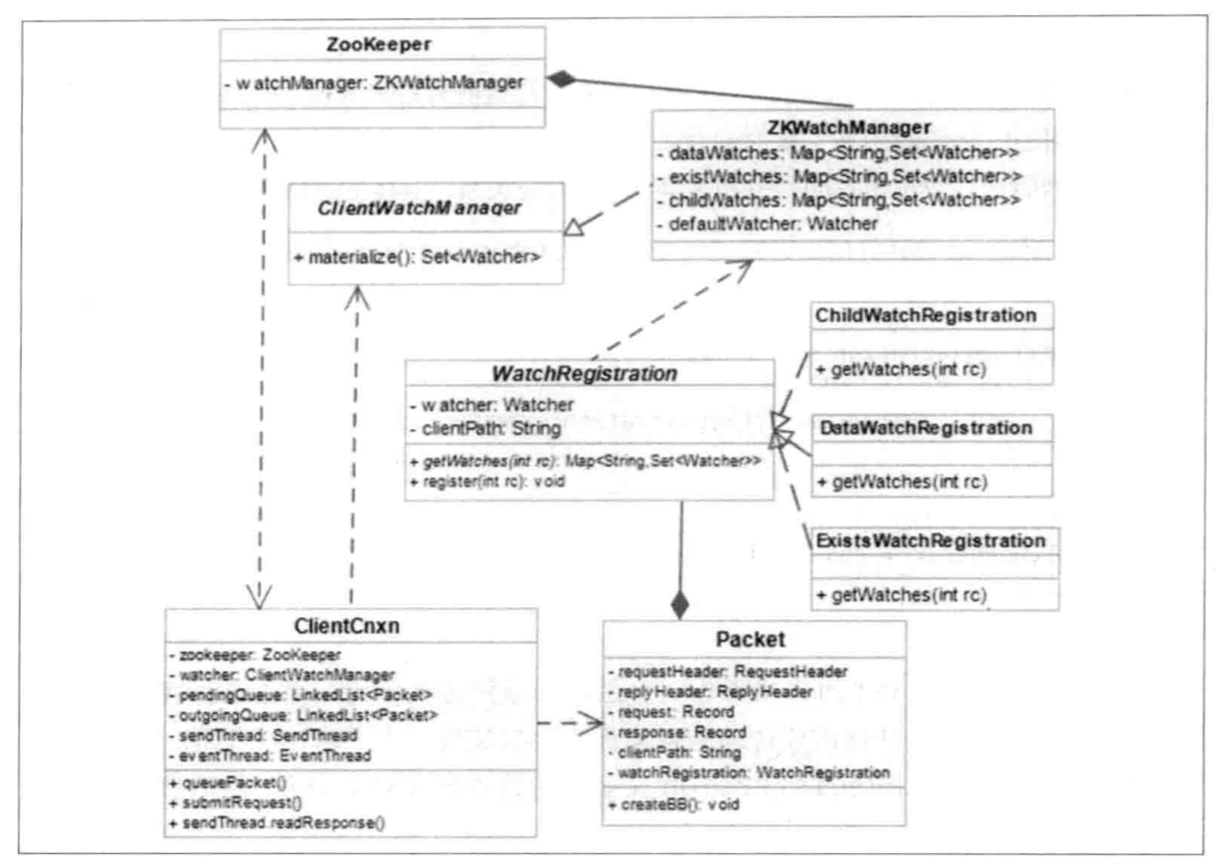

The figure above shows how the classes involved in these three phases interact.

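Registration

Anyone who has used the native ZK API knows there are roughly two ways to register a watcher with ZooKeeper:

- pass a watcher when the ZK connection is created;

- set a watcher through getData, exists, or getChildren, each of which comes in a synchronous and an asynchronous form.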

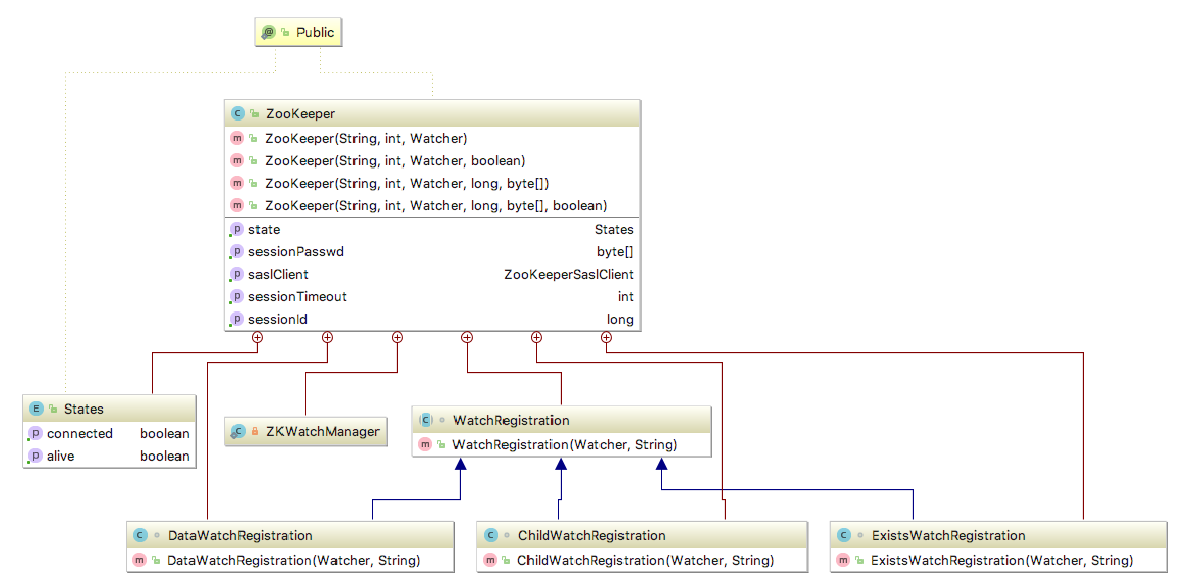

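In the ZK source, both kinds of registration live in the ZooKeeper class, whose internal structure is shown in the figure above.

The native API methods that can set a watcher are roughly the following:

// Constructors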

public ZooKeeper(String connectString, int sessionTimeout, Watcher watcher)

public ZooKeeper(String connectString, int sessionTimeout, Watcher watcher, boolean canBeReadOnly)

public ZooKeeper(String connectString, int sessionTimeout, Watcher watcher, long sessionId, byte[] sessionPasswd)

public ZooKeeper(String connectString, int sessionTimeout, Watcher watcher, long sessionId, byte[] sessionPasswd, boolean canBeReadOnly)

//getData

public byte[] getData(final String path, Watcher watcher, Stat stat)

public void getData(final String path, Watcher watcher, DataCallback cb, Object ctx)

//exists

public Stat exists(final String path, Watcher watcher)

public void exists(final String path, Watcher watcher, StatCallback cb, Object ctx)

//getChildren

public List<String> getChildren(final String path, Watcher watcher)

public void getChildren(final String path, Watcher watcher, ChildrenCallback cb, Object ctx)

public List<String> getChildren(final String path, Watcher watcher, Stat stat)

public void getChildren(final String path, Watcher watcher, Children2Callback cb, Object ctx)

Start with the first kind, registration through the constructor:

public ZooKeeper(String connectString, int sessionTimeout, Watcher watcher,

boolean canBeReadOnly)

throws IOException

{

LOG.info("Initiating client connection, connectString=" + connectString

+ " sessionTimeout=" + sessionTimeout + " watcher=" + watcher);

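// Store the supplied watcher as the default watcher. (getData, exists, and getChildren also have overloads that take a boolean parameter named watch; those overloads trigger this default watcher.)

watchManager.defaultWatcher = watcher;

As shown, the default watcher passed to the constructor is stored in watchManager, a field of type ZKWatchManager.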

Watchers registered through the other three methods also fall into two cases. Taking getData as the example, some overloads take a boolean watch parameter and others take a Watcher.

Watcher type:

public byte[] getData(final String path, Watcher watcher, Stat stat)

throws KeeperException, InterruptedException

{

final String clientPath = path; // the znode path as the client sees it

PathUtils.validatePath(clientPath); // validate the path

// the watch contains the un-chroot path

WatchRegistration wcb = null;

if (watcher != null) {

wcb = new DataWatchRegistration(watcher, clientPath); // create the data-watch registration

}

final String serverPath = prependChroot(clientPath); // chroot is the optional path prefix taken from the client's connect string, as mentioned earlier

// Build the request objects

RequestHeader h = new RequestHeader();

h.setType(ZooDefs.OpCode.getData);

GetDataRequest request = new GetDataRequest();

request.setPath(serverPath);

request.setWatch(watcher != null);

GetDataResponse response = new GetDataResponse();

// Send the request

ReplyHeader r = cnxn.submitRequest(h, request, response, wcb);

if (r.getErr() != 0) {

throw KeeperException.create(KeeperException.Code.get(r.getErr()),

clientPath);

}

if (stat != null) {

DataTree.copyStat(response.getStat(), stat);

}

return response.getData();

}

That is the watcher registration flow for getData in brief; getChildren and exists follow a similar pattern.

Boolean type:

public byte[] getData(String path, boolean watch, Stat stat)

throws KeeperException, InterruptedException {

// This simply delegates to the Watcher overload; when true is passed, the default watcher supplied to the constructor is used

return getData(path, watch ? watchManager.defaultWatcher : null, stat);

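}

As the methods above show, a watcher set through these methods is wrapped in a registration object of the matching type. Inside the ZooKeeper class, WatchRegistration is an abstract class and the parent of the three classes that handle registration (DataWatchRegistration, ChildWatchRegistration, and ExistsWatchRegistration).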

WatchRegistration

/**

* Register a watcher for a particular path.

*/

abstract class WatchRegistration {

private Watcher watcher; // the watcher being registered

private String clientPath; // the znode path

public WatchRegistration(Watcher watcher, String clientPath) // constructor

{

this.watcher = watcher;

this.clientPath = clientPath;

}

// Abstract method: returns the map from znode path to its set of watchers

abstract protected Map<String, Set<Watcher>> getWatches(int rc);

/**

* Register the watcher with the set of watches on path.

* @param rc the result code of the operation that attempted to

* add the watch on the path.

*/

// Registers the watcher according to the result code of the request that added it

// Called from ClientCnxn as p.watchRegistration.register(p.replyHeader.getErr());

public void register(int rc) {

if (shouldAddWatch(rc)) { // by default, add only when rc (the result code) is 0

Map<String, Set<Watcher>> watches = getWatches(rc); // the map from path to the watchers already registered

synchronized(watches) {

Set<Watcher> watchers = watches.get(clientPath); // the watchers already registered on this path

if (watchers == null) { // no watcher on this path yet, so create the set

watchers = new HashSet<Watcher>();

watches.put(clientPath, watchers);

}

watchers.add(watcher); // add the watcher to the set for this path

}

}

}

/**

* Determine whether the watch should be added based on return code.

* @param rc the result code of the operation that attempted to add the

* watch on the node

* @return true if the watch should be added, otw false

*/

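protected boolean shouldAddWatch(int rc) { // decides whether the watch should be added

return rc == 0; // rc == 0 means the request succeeded

}

}

With the parent class covered, the three subclasses are easy to follow: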

/** Handle the special case of exists watches - they add a watcher

* even in the case where NONODE result code is returned.

*/

class ExistsWatchRegistration extends WatchRegistration {

public ExistsWatchRegistration(Watcher watcher, String clientPath) {

super(watcher, clientPath); // parent constructor

}

@Override

protected Map<String, Set<Watcher>> getWatches(int rc) {

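// Why does an exists watch end up in dataWatches? When the node exists (rc == 0), the watch is kept with the data watches.

// Only when the request came back with NONODE is the watch kept in existWatches.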

return rc == 0 ? watchManager.dataWatches : watchManager.existWatches;

}

@Override

protected boolean shouldAddWatch(int rc) {

// Add the watch when the result code is 0 or NONODE

return rc == 0 || rc == KeeperException.Code.NONODE.intValue();

}

}

class DataWatchRegistration extends WatchRegistration {

public DataWatchRegistration(Watcher watcher, String clientPath) {

super(watcher, clientPath);

}

@Override

protected Map<String, Set<Watcher>> getWatches(int rc) {

// Return the data watches

return watchManager.dataWatches;

}

}

class ChildWatchRegistration extends WatchRegistration {

public ChildWatchRegistration(Watcher watcher, String clientPath) {

super(watcher, clientPath);

}

@Override

protected Map<String, Set<Watcher>> getWatches(int rc) {

// Return the child watches

return watchManager.childWatches;

}

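}

Putting this together with the previous two posts: when a watch is added through the API, the ZK client wraps the watcher in the matching Registration object and sends the request to the server. Depending on the server's result code, the watcher held by that Registration object is then added to the matching watch map in ZKWatchManager. Next, let's look in detail at how the client sends the request, again using getData as the example.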
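The registration rule described so far can be condensed into a small stand-alone model. This is only a sketch under stated assumptions: MiniWatchTables and its methods are hypothetical names, watchers are represented by plain strings, and the code is not ZooKeeper's own. The value -101 is the integer value of KeeperException.Code.NONODE.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

/** Hypothetical, simplified model of the client-side watch tables; not ZooKeeper's own class. */
class MiniWatchTables {
    static final int OK = 0;
    static final int NONODE = -101; // integer value of KeeperException.Code.NONODE

    final Map<String, Set<String>> dataWatches = new HashMap<>();
    final Map<String, Set<String>> existWatches = new HashMap<>();

    /** Models DataWatchRegistration: register only when the call succeeded. */
    boolean registerData(String path, String watcherName, int rc) {
        if (rc != OK) {
            return false;
        }
        add(dataWatches, path, watcherName);
        return true;
    }

    /** Models ExistsWatchRegistration: OK goes to dataWatches, NONODE goes to existWatches. */
    boolean registerExists(String path, String watcherName, int rc) {
        if (rc == OK) {
            add(dataWatches, path, watcherName);
            return true;
        }
        if (rc == NONODE) {
            add(existWatches, path, watcherName);
            return true;
        }
        return false; // any other error: the watch is dropped
    }

    /** Adds the watcher to the set for the path, creating the set on first use. */
    private static void add(Map<String, Set<String>> table, String path, String watcherName) {
        synchronized (table) {
            table.computeIfAbsent(path, k -> new HashSet<>()).add(watcherName);
        }
    }
}
```

The point of the sketch is that nothing is stored until the result code is known, which is why registration happens on the response path rather than when the request is built.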

First, the request part of getData:

// Build the request objects

RequestHeader h = new RequestHeader();

h.setType(ZooDefs.OpCode.getData); // set the request type

GetDataRequest request = new GetDataRequest(); // the serializable GetDataRequest

request.setPath(serverPath); // set the path and the watch flag

request.setWatch(watcher != null);

GetDataResponse response = new GetDataResponse(); // the serializable GetDataResponse

// Send the request

ReplyHeader r = cnxn.submitRequest(h, request, response, wcb);

if (r.getErr() != 0) {

throw KeeperException.create(KeeperException.Code.get(r.getErr()),

clientPath);

}

As shown, the DataWatchRegistration goes into ClientCnxn's submitRequest method together with the request and response objects.

public ReplyHeader submitRequest(RequestHeader h, Record request,

Record response, WatchRegistration watchRegistration)

throws InterruptedException {

ReplyHeader r = new ReplyHeader();

// Pass the arguments on to queuePacket

Packet packet = queuePacket(h, r, request, response, null, null, null,

null, watchRegistration);

synchronized (packet) {

while (!packet.finished) { // wait until the packet has been processed

packet.wait();

}

}

return r;

}

Packet queuePacket(RequestHeader h, ReplyHeader r, Record request,

Record response, AsyncCallback cb, String clientPath,

String serverPath, Object ctx, WatchRegistration watchRegistration)

{

Packet packet = null;

// Note that we do not generate the Xid for the packet yet. It is

// generated later at send-time, by an implementation of ClientCnxnSocket::doIO(),

// where the packet is actually sent.

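// That is, no xid is generated for the packet at this point

synchronized (outgoingQueue) {

packet = new Packet(h, r, request, response, watchRegistration); // create the packet

packet.cb = cb; // set the fields; the callback is null when called from submitRequest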

packet.ctx = ctx;

packet.clientPath = clientPath;

packet.serverPath = serverPath;

if (!state.isAlive() || closing) { // check the connection state

conLossPacket(packet);

} else {

// If the client is asking to close the session then

// mark as closing

if (h.getType() == OpCode.closeSession) {

closing = true;

}

outgoingQueue.add(packet); // enqueue the packet (producer side)

}

}

sendThread.getClientCnxnSocket().wakeupCnxn();

return packet;

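}

So the question is: once a packet carrying a watch registration has been added to the queue, who consumes it?

The run method of the SendThread in ClientCnxn calls clientCnxnSocket.doTransport(to, pendingQueue, outgoingQueue, ClientCnxn.this);, and that call is what consumes the packets in the queue.

ClientCnxnSocketNIO is the default implementation of ClientCnxnSocket; it is covered briefly later.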
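The handoff between the calling thread and the send thread is a classic producer/consumer arrangement with wait/notifyAll on the packet. The following is a minimal sketch of that pattern, not ZooKeeper's implementation: MiniCnxn and MiniPacket are hypothetical names, a BlockingQueue is swapped in for the synchronized outgoingQueue, and the "server round trip" is faked by echoing the request.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

/** Hypothetical miniature of the submitRequest / send-thread handoff. */
class MiniCnxn {
    /** Stand-in for Packet: a request, a reply slot, and a completion flag. */
    static final class MiniPacket {
        final String request;
        String reply;
        boolean finished;

        MiniPacket(String request) {
            this.request = request;
        }
    }

    private final BlockingQueue<MiniPacket> outgoingQueue = new LinkedBlockingQueue<>();

    MiniCnxn() {
        Thread sendThread = new Thread(this::drainQueue, "mini-send-thread");
        sendThread.setDaemon(true);
        sendThread.start();
    }

    /** Producer side: enqueue, then block until the consumer marks the packet finished. */
    String submitRequest(String request) {
        MiniPacket packet = new MiniPacket(request);
        outgoingQueue.add(packet);
        synchronized (packet) {
            try {
                while (!packet.finished) {
                    packet.wait();
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException("interrupted while waiting for the reply", e);
            }
            return packet.reply;
        }
    }

    /** Consumer side: take packets, pretend to send them, then wake the waiting caller. */
    private void drainQueue() {
        try {
            while (true) {
                MiniPacket packet = outgoingQueue.take();
                String reply = "echo:" + packet.request; // placeholder for the real network round trip
                synchronized (packet) {
                    packet.reply = reply;
                    packet.finished = true;
                    packet.notifyAll();
                }
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // stop the loop when interrupted
        }
    }
}
```

Checking the finished flag in a loop while holding the packet's monitor means the caller cannot miss the wake-up, even if the consumer completes the packet before the caller starts waiting.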

The doTransport method of ClientCnxnSocketNIO calls doIO(pendingQueue, outgoingQueue, cnxn);, which does the actual serialization and I/O. Within it, p.createBB(); performs the serialization itself.

public void createBB() {

try {

ByteArrayOutputStream baos = new ByteArrayOutputStream(); // set up the stream and the archive

BinaryOutputArchive boa = BinaryOutputArchive.getArchive(baos);

boa.writeInt(-1, "len"); // We'll fill this in later

if (requestHeader != null) {

requestHeader.serialize(boa, "header"); // serialize the header (xid and operation type)

}

if (request instanceof ConnectRequest) {

request.serialize(boa, "connect"); // serialize the connect request

// append "am-I-allowed-to-be-readonly" flag

boa.writeBool(readOnly, "readOnly");

} else if (request != null) {

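request.serialize(boa, "request"); // serialize the request; it carries the znode path and the watch flag, which is how the server learns that a znode is being watched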

}

baos.close();

this.bb = ByteBuffer.wrap(baos.toByteArray());

this.bb.putInt(this.bb.capacity() - 4);

this.bb.rewind();

} catch (IOException e) {

LOG.warn("Ignoring unexpected exception", e);

}

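}

As shown, ZK serializes only the request and the header. Although the WatchRegistration is also passed in as an argument, it is never converted to bytes during serialization. In other words, every time the client calls a watcher-registration method, the watcher itself is not sent to the server. If every watcher object were uploaded, the load on the server would keep growing as the cluster scaled. Handling it this way keeps ZK's performance from degrading noticeably as the cluster and the number of watchers grow.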
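The placeholder-then-patch technique used by createBB (write a dummy length, serialize the body, then overwrite the first four bytes with the real length) can be tried in isolation. This is a sketch under assumptions: MiniFrame and its field layout are invented for illustration and are not claimed to match the actual wire format. Note that the only trace of the watcher in the frame is a single boolean.

```java
import java.io.ByteArrayOutputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.charset.StandardCharsets;

/** Hypothetical frame builder that follows the same placeholder-then-patch pattern as createBB. */
class MiniFrame {
    static ByteBuffer encode(int xid, int opType, String path, boolean watch) {
        try {
            ByteArrayOutputStream baos = new ByteArrayOutputStream();
            DataOutputStream out = new DataOutputStream(baos);
            out.writeInt(-1);                // length placeholder, patched below
            out.writeInt(xid);               // "header": xid and operation type
            out.writeInt(opType);
            byte[] pathBytes = path.getBytes(StandardCharsets.UTF_8);
            out.writeInt(pathBytes.length);  // "request": path and watch flag
            out.write(pathBytes);
            out.writeBoolean(watch);         // the watcher object itself is never written
            out.flush();

            ByteBuffer bb = ByteBuffer.wrap(baos.toByteArray());
            bb.putInt(bb.capacity() - 4);    // overwrite the placeholder with the body length
            bb.rewind();
            return bb;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

A receiver can read the first four bytes to learn how many more bytes belong to the frame, which is the reason for writing the length first even though it is only known at the end.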

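Registering with ZKWatchManager

Within doIO, the readResponse method reads the bytes received from the server. It calls finishPacket, which takes the watch registration out of the Packet and registers the watcher with ZKWatchManager.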

private void finishPacket(Packet p) {

if (p.watchRegistration != null) { // a registration is attached

p.watchRegistration.register(p.replyHeader.getErr()); // register according to the result code

}

if (p.cb == null) { // synchronous call

synchronized (p) {

p.finished = true;

p.notifyAll(); // wake the thread waiting in submitRequest

}

} else { // asynchronous call

p.finished = true;

eventThread.queuePacket(p);

}

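}

The register call here is the register method of WatchRegistration described earlier, shared by its three subclasses. This stores the watcher on the ZK client, while the server has learned whether each znode is being watched.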

Registration summary

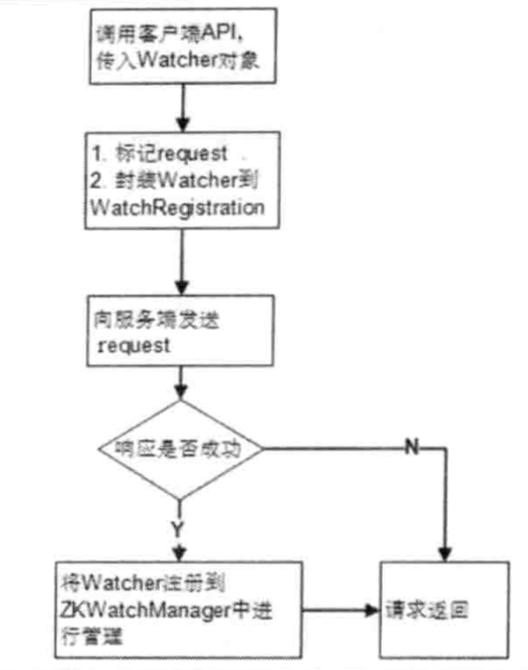

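The book 《从Paxos到Zookeeper》 (From Paxos to ZooKeeper) contains a figure, shown above, that describes the whole registration process.

To summarize the flow:

- The user passes in a watcher object through one of the three methods or through the ZK constructor;

- A Packet object is built (it includes whether the znode is to be watched) and placed in the queue;

- ClientCnxn.sendThread is the consumer of the queue. It takes the packet out, serializes it (only the watch flag is serialized, not the whole WatchRegistration), and sends it to the server;

- The server processes the request and returns the result to the client; the details are covered later;

- ClientCnxn.sendThread reads the server's reply and registers the znode-to-watcher mapping in ZKWatchManager.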

Server-side processing

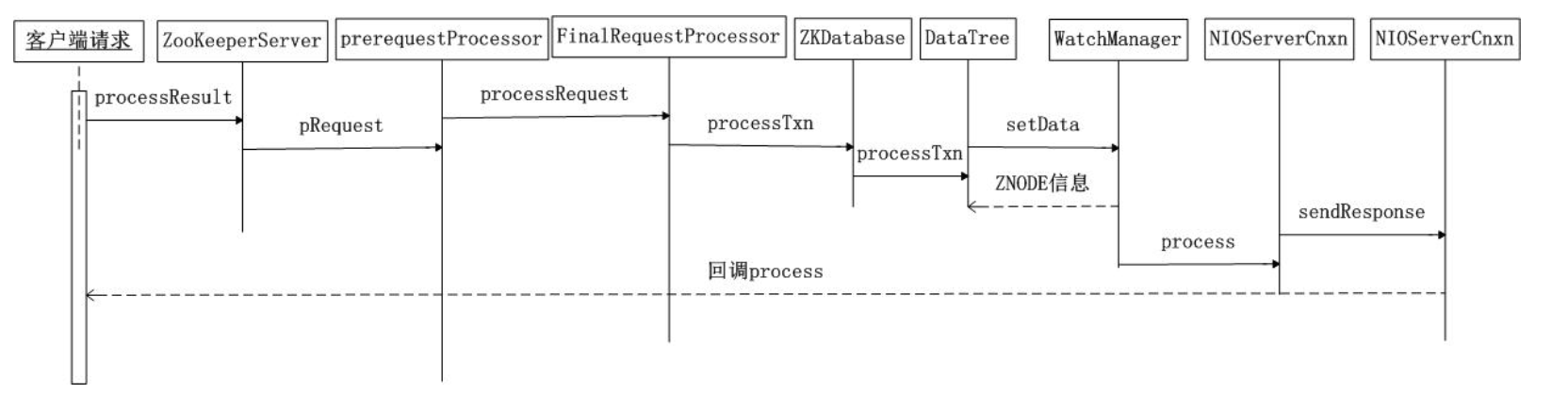

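First, the simple sequence diagram above gives an overview of how the server processes watchers.

Following that flow, let's see how the server handles watcher-related requests, taking the getData case in processRequest of FinalRequestProcessor as the example: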

case OpCode.getData: {

lastOp = "GETD";

// Create an empty GetDataRequest; it will hold the path and the watch flag

GetDataRequest getDataRequest = new GetDataRequest();

ByteBufferInputStream.byteBuffer2Record(request.request,

getDataRequest); // deserialize the request into getDataRequest

DataNode n = zks.getZKDatabase().getNode(getDataRequest.getPath()); // look up the node

if (n == null) { // the node does not exist

throw new KeeperException.NoNodeException();

}

PrepRequestProcessor.checkACL(zks, zks.getZKDatabase().aclForNode(n),

ZooDefs.Perms.READ,

request.authInfo); // check read permission

Stat stat = new Stat();

byte b[] = zks.getZKDatabase().getData(getDataRequest.getPath(), stat,

getDataRequest.getWatch() ? cnxn : null);//注意这里,获取是否watch

rsp = new GetDataResponse(b, stat);//包装response

break;

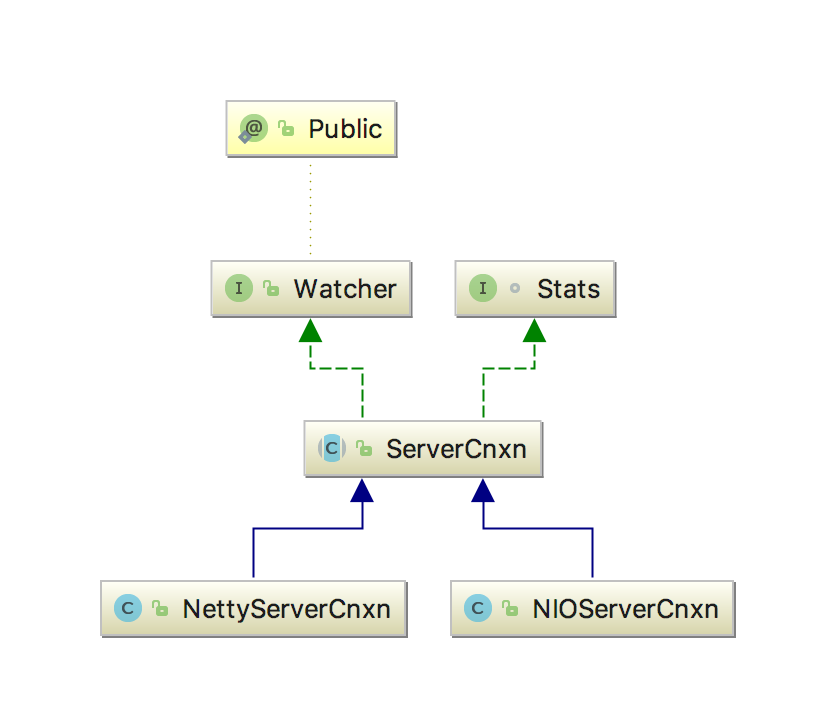

}getDataRequest.getWatch() ? cnxn : null上面代码中,这里有点特殊的地方,如果watch存在会获取一个名为cnxn的ServerCnxn类型的变量。ServerCnxn,代表了一个服务端与客户端的连接。类图如下:

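As the diagram shows, ServerCnxn implements the Watcher interface; the callback described later also goes through ServerCnxn.

Also in processRequest, a request that carries a transaction header (request.hdr != null) is passed to the processTxn method of ZooKeeperServer.

if (request.hdr != null) {

TxnHeader hdr = request.hdr; // transaction header, including the operation type

Record txn = request.txn; // the transaction record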

rc = zks.processTxn(hdr, txn);

}

public ProcessTxnResult processTxn(TxnHeader hdr, Record txn) {

ProcessTxnResult rc;

int opCode = hdr.getType();

long sessionId = hdr.getClientId();

rc = getZKDatabase().processTxn(hdr, txn); // apply the transaction to the DataTree

if (opCode == OpCode.createSession) { // session creation

if (txn instanceof CreateSessionTxn) {

CreateSessionTxn cst = (CreateSessionTxn) txn;

sessionTracker.addSession(sessionId, cst

.getTimeOut());

} else {

LOG.warn("*****>>>>> Got "

+ txn.getClass() + " "

+ txn.toString());

}

} else if (opCode == OpCode.closeSession) { // session close

sessionTracker.removeSession(sessionId);

}

return rc;

}

These are the DataTree-related operations. The getData request handled above, by contrast, goes into ZKDatabase.getData, which in turn calls DataTree.getData.

public byte[] getData(String path, Stat stat, Watcher watcher)

throws KeeperException.NoNodeException {

DataNode n = nodes.get(path); // look up the node

if (n == null) {

throw new KeeperException.NoNodeException();

}

synchronized (n) {

n.copyStat(stat); // copy the node's stat into the caller's Stat

if (watcher != null) {

dataWatches.addWatch(path, watcher); // register the watcher

}

return n.data; // return the node's data

}

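}

Note the call dataWatches.addWatch(path, watcher);. As described earlier, the server has two WatchManager instances, one for data watches and one for child watches, and the watcher is added to one or the other depending on which method was called. This completes registration on the server.

To summarize the server-side processing:

1. Maintain the DataTree;

2. Register the watcher with the WatchManager.

This raises a question: if the server does not store the client's watcher objects, why register anything with the server-side WatchManager? The trigger flow below makes this clear.

Triggering a watcher

The answer lies in the process method of the ServerCnxn subclass: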

synchronized public void process(WatchedEvent event) {

ReplyHeader h = new ReplyHeader(-1, -1L, 0); // build the header; xid -1 marks a notification

if (LOG.isTraceEnabled()) {

ZooTrace.logTraceMessage(LOG, ZooTrace.EVENT_DELIVERY_TRACE_MASK,

"Deliver event " + event + " to 0x"

+ Long.toHexString(this.sessionId)

+ " through " + this);

}

// Convert WatchedEvent to a type that can be sent over the wire

WatcherEvent e = event.getWrapper(); // wrap the event (session state and event type)

sendResponse(h, e, "notification");

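}

Now it makes sense: the callback on ServerCnxn exists to tell the client which event occurred, so that the client knows which watchers' process methods to call.

Why would this callback fire? Suppose a watcher has been registered on the server and the znode's value is then changed through setData; that change triggers the watcher.

The processTxn method of ZooKeeperServer mentioned earlier calls ZKDatabase.processTxn, which in turn calls DataTree.processTxn.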

case OpCode.setData:

SetDataTxn setDataTxn = (SetDataTxn) txn;

rc.path = setDataTxn.getPath();

rc.stat = setData(setDataTxn.getPath(), setDataTxn

.getData(), setDataTxn.getVersion(), header

.getZxid(), header.getTime()); // call DataTree.setData

break;

public Stat setData(String path, byte data[], int version, long zxid,

long time) throws KeeperException.NoNodeException {

...

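dataWatches.triggerWatch(path, EventType.NodeDataChanged); // trigger the watch

}

The triggerWatch method was covered in the previous post. Read together with the process method of the ServerCnxn implementation above, it shows that the server is simply telling the client that the corresponding event has occurred.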
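To make the server side concrete, here is a minimal sketch of a watch table whose "watchers" are connections and whose entries are removed when they fire. All names (MiniServerWatches, MiniConnection) are hypothetical, events are plain strings, and the code illustrates the idea only; it is not the WatchManager source.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

/** Hypothetical miniature of a server-side watch table keyed by path. */
class MiniServerWatches {
    /** Stand-in for ServerCnxn: the "watcher" is the connection itself. */
    static final class MiniConnection {
        final long sessionId;
        final List<String> sent = new ArrayList<>();

        MiniConnection(long sessionId) {
            this.sessionId = sessionId;
        }

        /** Records the notification; a real connection would write it to the socket. */
        void process(String eventType, String path) {
            sent.add(eventType + ":" + path);
        }
    }

    private final Map<String, Set<MiniConnection>> table = new HashMap<>();

    synchronized void addWatch(String path, MiniConnection cnxn) {
        table.computeIfAbsent(path, k -> new HashSet<>()).add(cnxn);
    }

    /** Removes the watchers for the path before notifying them, so each fires at most once. */
    int triggerWatch(String path, String eventType) {
        Set<MiniConnection> watchers;
        synchronized (this) {
            watchers = table.remove(path);
        }
        if (watchers == null) {
            return 0; // nobody is watching this path
        }
        for (MiniConnection cnxn : watchers) {
            cnxn.process(eventType, path);
        }
        return watchers.size();
    }
}
```

Because the table holds connections rather than user callbacks, the server needs no knowledge of what the client will do with the event; a second change to the same path notifies nobody until a watch is set again.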

The client handles the incoming message in the readResponse method of ClientCnxn.

if (replyHdr.getXid() == -1) { // the message is a watcher notification

// -1 means notification

if (LOG.isDebugEnabled()) {

LOG.debug("Got notification sessionid:0x"

+ Long.toHexString(sessionId));

}

WatcherEvent event = new WatcherEvent(); // path, session state, and event type

event.deserialize(bbia, "response");

// convert from a server path to a client path

// Map the server path back to the path the client actually uses, since a chrootPath may be configured

if (chrootPath != null) { // a chrootPath is configured

String serverPath = event.getPath();

if(serverPath.compareTo(chrootPath)==0)

event.setPath("/");

else if (serverPath.length() > chrootPath.length()) // strip the chrootPath prefix

event.setPath(serverPath.substring(chrootPath.length()));

else {

LOG.warn("Got server path " + event.getPath()

+ " which is too short for chroot path "

+ chrootPath);

}

}

WatchedEvent we = new WatchedEvent(event); // path, session state, and event type

if (LOG.isDebugEnabled()) {

LOG.debug("Got " + we + " for sessionid 0x"

+ Long.toHexString(sessionId));

}

eventThread.queueEvent( we ); // hand the event to the EventThread queue

return;

}

Here the WatchedEvent is queued by way of the queueEvent method.

public void queueEvent(WatchedEvent event) {

if (event.getType() == EventType.None

&& sessionState == event.getState()) { // skip a None event whose session state is unchanged

return;

}

sessionState = event.getState(); // record the session state

// materialize the watchers based on the event

// WatcherSetEventPair pairs the set of watchers with the event

WatcherSetEventPair pair = new WatcherSetEventPair(

watcher.materialize(event.getState(), event.getType(),

event.getPath()),

event);

// queue the pair (watch set & event) for later processing

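waitingEvents.add(pair); // add to the queue consumed by the EventThread

}

The call watcher.materialize(event.getState(), event.getType(), event.getPath()) was covered in the earlier post on client-side watcher storage: it takes the matching set of watchers out of ZKWatchManager.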
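The two client-side steps seen so far, mapping the server path back to the client path and pulling the matching watchers out of the table, can be sketched as follows. MiniClientDispatch is a hypothetical class with a single data-watch table and string watchers; the real materialize consults several tables depending on the event type, which this sketch does not attempt to reproduce.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.Map;
import java.util.Set;

/** Hypothetical sketch of client-side notification handling. */
class MiniClientDispatch {
    final Map<String, Set<String>> dataWatches = new HashMap<>();

    /** Maps a server path back to the client's view by dropping the chroot prefix. */
    static String toClientPath(String serverPath, String chrootPath) {
        if (chrootPath == null) {
            return serverPath;                 // no chroot configured
        }
        if (serverPath.equals(chrootPath)) {
            return "/";                        // the chroot itself is the client's root
        }
        if (serverPath.length() > chrootPath.length()) {
            return serverPath.substring(chrootPath.length());
        }
        return serverPath;                     // too short for the chroot: left unchanged
    }

    /** Removes and returns the watchers for a path, so each watcher is handed out once. */
    Set<String> materialize(String clientPath) {
        synchronized (dataWatches) {
            Set<String> watchers = dataWatches.remove(clientPath);
            return watchers == null ? Collections.<String>emptySet() : watchers;
        }
    }
}
```

For example, with a chroot of /app, a notification for /app/foo is looked up under /foo, the path the application used when it set the watch.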

Finally, the run method of EventThread calls processEvent, which invokes every watcher associated with each event.

if (event instanceof WatcherSetEventPair) { // a watcher set paired with an event

// each watcher will process the event

WatcherSetEventPair pair = (WatcherSetEventPair) event; // downcast

for (Watcher watcher : pair.watchers) { // iterate over the watchers

try {

watcher.process(pair.event); // the callback: this is where the user's watcher finally runs

} catch (Throwable t) {

LOG.error("Error while calling watcher ", t);

}

}

}

Supplement

Earlier, ClientCnxnSocketNIO was described as the default implementation of ClientCnxnSocket. Here are the details. In the ZooKeeper constructor:

public ZooKeeper(String connectString, int sessionTimeout, Watcher watcher,

boolean canBeReadOnly)

throws IOException

{

...

cnxn = new ClientCnxn(connectStringParser.getChrootPath(),

hostProvider, sessionTimeout, this, watchManager,

// Obtain the ClientCnxnSocket instance here

getClientCnxnSocket(), canBeReadOnly);

cnxn.start();

}

private static ClientCnxnSocket getClientCnxnSocket() throws IOException {

// Check the system property, which may name a different implementation

String clientCnxnSocketName = System

.getProperty(ZOOKEEPER_CLIENT_CNXN_SOCKET);

if (clientCnxnSocketName == null) {

clientCnxnSocketName = ClientCnxnSocketNIO.class.getName(); // default to the ClientCnxnSocketNIO class name

}

try {

// Instantiate through reflection and return

return (ClientCnxnSocket) Class.forName(clientCnxnSocketName).getDeclaredConstructor()

.newInstance();

} catch (Exception e) {

IOException ioe = new IOException("Couldn't instantiate "

+ clientCnxnSocketName);

ioe.initCause(e);

throw ioe;

}

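}

As shown, ZK uses reflection to instantiate many of its implementation classes. The same approach is worth considering in your own projects, since it makes configuration more flexible.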
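For readers who want to reuse the pattern, the following is a small self-contained sketch of "system property with a default class, instantiated through reflection". Every name in it (MiniSocketFactory, MiniSocket, MiniNioSocket, and the property mini.client.socket) is made up for illustration and has nothing to do with ZooKeeper's real property names.

```java
/** Hypothetical factory that picks an implementation class by system property. */
class MiniSocketFactory {
    /** Hypothetical property name, used only in this sketch. */
    static final String SOCKET_PROPERTY = "mini.client.socket";

    /** Stand-in for the pluggable transport interface. */
    interface MiniSocket {
        String name();
    }

    /** Default implementation, used when the property is not set. */
    static class MiniNioSocket implements MiniSocket {
        public MiniNioSocket() {
        }

        @Override
        public String name() {
            return "nio";
        }
    }

    static MiniSocket create() {
        String className = System.getProperty(SOCKET_PROPERTY);
        if (className == null) {
            className = MiniNioSocket.class.getName(); // fall back to the default class
        }
        try {
            // Load the class by name and call its no-argument constructor
            return (MiniSocket) Class.forName(className).getDeclaredConstructor().newInstance();
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("Couldn't instantiate " + className, e);
        }
    }
}
```

Running the JVM with -Dmini.client.socket set to another class name would switch the implementation without recompiling, provided that class implements MiniSocket and has an accessible no-argument constructor.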

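Summary

How the code reflects watcher characteristics

- One-shot: on both the client and the server, ZK removes a watcher as soon as it is triggered. This design also reduces the load on the server;

- Serial execution: as the code analysis shows, watcher events are placed in queues with dedicated producer and consumer threads, so callbacks run serially;

- Lightweight: when the client sends a request to the server, it tells the server only (1) the operation type, (2) the znode path, and (3) whether to watch. The watcher object itself is never sent to the server; what the server stores as the watcher is the ServerCnxn object of the current connection.

Watcher triggering

- The server tracks every path that currently has a watcher and handles each EventType differently. A change on a path without watchers involves no watcher processing; when a watched path is triggered, the server notifies the client.

- Because what the server stores is the connection to the client, it knows which client each watcher on a znode belongs to, so each watcher fires only on that client.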

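Request types sent by the client and how the server receives them

In createBB, the check if (request instanceof ConnectRequest) { singles out ConnectRequest; otherwise the request is some other Record or null. The server receives the request through the Record interface type.

Open questions

Is ServerCnxn also used for the session, or is a session kept alive purely by the socket connection? Probably not the latter, since that would consume too many resources.

I have not looked closely at the other xid values handled in ClientCnxn's readResponse; they are worth revisiting.

References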

https://www.ibm.com/developerworks/cn/opensource/os-cn-apache-zookeeper-watcher/index.html

http://www.cnblogs.com/leesf456/p/6291004.html

https://www.jianshu.com/p/90ff3e723356

The previous two posts in this series, on client-side and server-side watchers
