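Save the following code as /usr/local/hadoop/reducer.py. The script reads the output of mapper.py from STDIN, sums the occurrences of each word, and writes the totals to STDOUT.

As with mapper.py, make sure the script is executable: chmod +x reducer.py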

#!/usr/bin/env python

import sys

current_word = None
current_count = 0

# input comes from STDIN
for line in sys.stdin:
    # remove leading and trailing whitespace
    line = line.strip()

    try:
        # parse the input we got from mapper.py
        word, count = line.split('\t', 1)
        # convert count (currently a string) to int
        count = int(count)
    except ValueError:
        # the line had no tab or count was not a number,
        # so silently ignore/discard this line
        continue

    # this IF-switch only works because Hadoop sorts map output
    # by key (here: word) before it is passed to the reducer
    if current_word == word:
        current_count += count
    else:
        if current_word:
            # write result to STDOUT
            print('%s\t%s' % (current_word, current_count))
        current_count = count
        current_word = word

# do not forget to output the last word if needed!
if current_word:
    print('%s\t%s' % (current_word, current_count))

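Test your code (cat data | map | sort | reduce)

I recommend testing mapper.py and reducer.py by hand before using them in a MapReduce job; otherwise you may end up with a job that returns no results at all.

Here are some ways to test the map and reduce functionality: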

hadoop@derekUbun:/usr/local/hadoop$ echo "foo foo quux labs foo bar quux" | ./mapper.py
foo 1
foo 1
quux 1
labs 1
foo 1
bar 1
quux 1

hadoop@derekUbun:/usr/local/hadoop$ echo "foo foo quux labs foo bar quux" | ./mapper.py | sort | ./reducer.py
bar 1
foo 3
labs 1
quux 2
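
The `cat data | map | sort | reduce` pipeline above can also be imitated inside a single Python process, which is handy for quick unit tests. The following is only a sketch: `map_words`, `reduce_counts`, and `run_local` are hypothetical helper names, not part of Hadoop or of the scripts in this post, and the in-memory `sorted()` call stands in for the shell's `sort` (and for Hadoop's shuffle phase).

```python
def map_words(lines):
    """Mimic mapper.py: emit a (word, 1) pair for every word."""
    for line in lines:
        for word in line.split():
            yield word, 1


def reduce_counts(pairs):
    """Mimic reducer.py: sum the counts of adjacent pairs with the same word."""
    current_word, current_count = None, 0
    for word, count in pairs:
        if word == current_word:
            current_count += count
        else:
            if current_word is not None:
                yield current_word, current_count
            current_word, current_count = word, count
    if current_word is not None:
        yield current_word, current_count


def run_local(lines, sort=True):
    """Chain map -> (optional) sort -> reduce, like the shell pipeline."""
    pairs = map_words(lines)
    if sort:
        pairs = sorted(pairs)  # stands in for `sort` / Hadoop's shuffle
    return list(reduce_counts(pairs))


if __name__ == "__main__":
    sample = ["foo foo quux labs foo bar quux"]
    for word, count in run_local(sample):
        print("%s\t%d" % (word, count))
```

Calling `run_local(sample, sort=False)` makes the same word show up several times in the result, because the reducer logic only merges adjacent keys. That is the reason the `sort` step cannot be skipped in a manual test.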

# using one of the ebooks as example input
# (see below on where to get the ebooks)
hadoop@derekUbun:/usr/local/hadoop$ cat book/book.txt | ./mapper.py
subscribe 1
to 1
our 1
email 1
newsletter 1
to 1
hear 1
about 1
new 1
eBooks. 1

Running the Python scripts on Hadoop

For this example we need an ebook; place it at /usr/local/hadoop/book/book.txt:

hadoop@derekUbun:/usr/local/hadoop$ ls -l book
total 636
-rw-rw-r-- 1 derek derek 649669 Mar 12 12:22 book.txt

Copy the local data to HDFS

Before running the MapReduce job, we need to copy the local files into HDFS:

hadoop@derekUbun:/usr/local/hadoop$ hadoop dfs -copyFromLocal /usr/local/hadoop/book book
hadoop@derekUbun:/usr/local/hadoop$ hadoop dfs -ls
Found 3 items
drwxr-xr-x - hadoop supergroup 0 2013-03-12 15:56 /user/hadoop/book

Run the MapReduce job

Now that everything is ready, we can run the Python MapReduce job on the Hadoop cluster. As mentioned above, we use Hadoop Streaming to pass data between the map and reduce stages through STDIN and STDOUT.

hadoop@derekUbun:/usr/local/hadoop$ hadoop jar contrib/streaming/hadoop-streaming-1.1.2.jar \
    -mapper /usr/local/hadoop/mapper.py \
    -reducer /usr/local/hadoop/reducer.py \
    -input book/* \
    -output book-output

If you want to change Hadoop settings for a run, for example to increase the number of reduce tasks, you can use the "-jobconf" option:

hadoop@derekUbun:/usr/local/hadoop$ hadoop jar contrib/streaming/hadoop-streaming-1.1.2.jar \
    -jobconf mapred.reduce.tasks=4 \
    -mapper /usr/local/hadoop/mapper.py \
    -reducer /usr/local/hadoop/reducer.py \
    -input book/* \
    -output book-output
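
The job log later in this post warns that `-jobconf` is deprecated in favor of `-D`. As a rough sketch of how the same invocation could be assembled from a script, the hypothetical helper `build_streaming_command` below returns the argument list for `hadoop jar`. It assumes the jar path and script locations used in this post, and it places the generic `-D` option before the streaming options, which is where Hadoop Streaming expects generic options. Nothing here runs Hadoop; the list could be handed to `subprocess.run` on a machine where the `hadoop` command is available.

```python
def build_streaming_command(jar, mapper, reducer, input_path, output_path,
                            reduce_tasks=None):
    """Return the argv list for a Hadoop Streaming job (hypothetical helper)."""
    cmd = ["hadoop", "jar", jar]
    if reduce_tasks is not None:
        # Generic options such as -D have to come before streaming options.
        cmd += ["-D", "mapred.reduce.tasks=%d" % reduce_tasks]
    cmd += [
        "-mapper", mapper,
        "-reducer", reducer,
        "-input", input_path,
        "-output", output_path,
    ]
    return cmd


if __name__ == "__main__":
    command = build_streaming_command(
        jar="contrib/streaming/hadoop-streaming-1.1.2.jar",
        mapper="/usr/local/hadoop/mapper.py",
        reducer="/usr/local/hadoop/reducer.py",
        input_path="book/*",
        output_path="book-output",
        reduce_tasks=4,
    )
    print(" ".join(command))
```

Building the command as a list rather than a single string avoids shell quoting problems when a path contains spaces or glob characters.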

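If both commands above fail, try the variant below, which also ships the scripts to the cluster with -file. Note that before rerunning a job you must delete its output directory from HDFS.

bin/hadoop jar contrib/streaming/hadoop-streaming-1.1.2.jar \
    -mapper task1/mapper.py \
    -file task1/mapper.py \
    -reducer task1/reducer.py \
    -file task1/reducer.py \
    -input url \
    -output url-output \
    -jobconf mapred.reduce.tasks=3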

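An important note on mapred.map.tasks: Hadoop does not honor mapred.map.tasks beyond treating it as a hint, whereas it does use the mapred.reduce.tasks value you specify.

The job reads all files in the HDFS directory book, processes them, and writes the results as separate part files (one per reduce task) to the HDFS directory book-output. The output of the earlier run looks like this: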

hadoop@derekUbun:/usr/local/hadoop$ hadoop jar contrib/streaming/hadoop-streaming-1.1.2.jar -jobconf mapred.reduce.tasks=4 -mapper /usr/local/hadoop/mapper.py -reducer /usr/local/hadoop/reducer.py -input book/* -output book-output
13/03/12 16:01:05 WARN streaming.StreamJob: -jobconf option is deprecated, please use -D instead.
packageJobJar: [/usr/local/hadoop/tmp/hadoop-unjar4835873410426602498/] [] /tmp/streamjob5047485520312501206.jar tmpDir=null
13/03/12 16:01:06 INFO util.NativeCodeLoader: Loaded the native-hadoop library
13/03/12 16:01:06 WARN snappy.LoadSnappy: Snappy native library not loaded
13/03/12 16:01:06 INFO mapred.FileInputFormat: Total input paths to process : 1
13/03/12 16:01:06 INFO streaming.StreamJob: getLocalDirs(): [/usr/local/hadoop/tmp/mapred/local]
13/03/12 16:01:06 INFO streaming.StreamJob: Running job: job_201303121448_0010
13/03/12 16:01:06 INFO streaming.StreamJob: To kill this job, run:
13/03/12 16:01:06 INFO streaming.StreamJob: /usr/local/hadoop/libexec/../bin/hadoop job -Dmapred.job.tracker=localhost:9001 -kill job_201303121448_0010
13/03/12 16:01:06 INFO streaming.StreamJob: Tracking URL: http://localhost:50030/jobdetails.jsp?jobid=job_201303121448_0010
13/03/12 16:01:07 INFO streaming.StreamJob: map 0% reduce 0%
13/03/12 16:01:10 INFO streaming.StreamJob: map 100% reduce 0%
13/03/12 16:01:17 INFO streaming.StreamJob: map 100% reduce 8%
13/03/12 16:01:18 INFO streaming.StreamJob: map 100% reduce 33%
13/03/12 16:01:19 INFO streaming.StreamJob: map 100% reduce 50%
13/03/12 16:01:26 INFO streaming.StreamJob: map 100% reduce 67%
13/03/12 16:01:27 INFO streaming.StreamJob: map 100% reduce 83%
13/03/12 16:01:28 INFO streaming.StreamJob: map 100% reduce 100%
13/03/12 16:01:29 INFO streaming.StreamJob: Job complete: job_201303121448_0010
13/03/12 16:01:29 INFO streaming.StreamJob: Output: book-output
hadoop@derekUbun:/usr/local/hadoop$

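As the tracking URL in the output above shows, Hadoop also provides a basic web interface that displays job statistics and information.

While the Hadoop cluster is running, you can open http://localhost:50030/ in a browser.

Check that the results were written to the HDFS directory book-output: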

hadoop@derekUbun:/usr/local/hadoop$ hadoop dfs -ls book-output
Found 6 items
-rw-r--r-- 2 hadoop supergroup 0 2013-03-12 16:01 /user/hadoop/book-output/_SUCCESS
drwxr-xr-x - hadoop supergroup 0 2013-03-12 16:01 /user/hadoop/book-output/_logs
-rw-r--r-- 2 hadoop supergroup 33 2013-03-12 16:01 /user/hadoop/book-output/part-00000
-rw-r--r-- 2 hadoop supergroup 60 2013-03-12 16:01 /user/hadoop/book-output/part-00001
-rw-r--r-- 2 hadoop supergroup 54 2013-03-12 16:01 /user/hadoop/book-output/part-00002
-rw-r--r-- 2 hadoop supergroup 47 2013-03-12 16:01 /user/hadoop/book-output/part-00003
hadoop@derekUbun:/usr/local/hadoop$
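
The four part-0000N files correspond to the four reduce tasks requested with mapred.reduce.tasks=4: every key is routed to exactly one reducer, so each word ends up in exactly one part file. The sketch below illustrates that idea only. Hadoop's default partitioner hashes the key with its own Java hash code; the `zlib.crc32` hash and the names `partition_for` and `split_by_partition` used here are stand-ins chosen for illustration, so the resulting assignment will not match the real part files.

```python
import zlib


def partition_for(key, num_reducers):
    """Map a key to a reducer index in [0, num_reducers) (illustrative hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers


def split_by_partition(keys, num_reducers):
    """Group keys by the reducer that would receive them."""
    partitions = [[] for _ in range(num_reducers)]
    for key in keys:
        partitions[partition_for(key, num_reducers)].append(key)
    return partitions


if __name__ == "__main__":
    words = ["subscribe", "to", "our", "email", "newsletter",
             "to", "hear", "about", "new", "eBooks."]
    for index, bucket in enumerate(split_by_partition(words, 4)):
        print("part-%05d: %s" % (index, sorted(set(bucket))))
```

Because the partition depends only on the key, both occurrences of "to" land in the same bucket, which is what lets a single reducer produce the complete count for that word.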

You can inspect the contents of a result file with the dfs -cat command:

hadoop@derekUbun:/usr/local/hadoop$ hadoop dfs -cat book-output/part-00000
about 1
eBooks. 1
the 1
to 2
hadoop@derekUbun:/usr/local/hadoop$
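
Because the result is spread over several part files, a small script can be useful for combining them once the files have been fetched to local disk. The following sketch assumes each line has the word, a tab, and a count, which is the form reducer.py writes. `merge_part_lines` is a hypothetical helper name, and the sample lines are made up for illustration rather than taken from the actual job output.

```python
from collections import Counter


def merge_part_lines(*parts):
    """Combine `word<TAB>count` lines from several part files into one Counter."""
    totals = Counter()
    for lines in parts:
        for line in lines:
            line = line.strip()
            word, sep, count = line.rpartition("\t")
            if not sep:
                continue  # blank line or no tab separator: skip it
            totals[word] += int(count)
    return totals


if __name__ == "__main__":
    # Made-up sample content standing in for two local part files.
    part_a = ["about\t1", "to\t2"]
    part_b = ["new\t1", "our\t1"]
    for word, count in sorted(merge_part_lines(part_a, part_b).items()):
        print("%s\t%d" % (word, count))
```

Each argument can be any iterable of lines, so an open file object works as well as a list.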

Below are the improved versions of mapper.py and reducer.py from the author of the original English tutorial:

mapper.py

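#!/usr/bin/env python
"""A more advanced Mapper, using Python iterators and generators."""

import sys


def read_input(file):
    for line in file:
        # split the line into words
        yield line.split()


def main(separator='\t'):
    # input comes from STDIN (standard input)
    data = read_input(sys.stdin)
    for words in data:
        # NOTE: the source listing is cut off at this point; the rest is a
        # reconstruction that emits the same word<TAB>1 records as the
        # basic mapper output shown earlier
        for word in words:
            print('%s%s%d' % (word, separator, 1))


if __name__ == "__main__":
    main()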
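
The reducer counterpart announced above is not included in this copy of the post. As a rough idea of what a generator-based reducer might look like, the sketch below groups consecutive records with `itertools.groupby`. It is an illustration under assumptions rather than the original author's code: the function names are hypothetical, and it reads from an in-memory sample instead of `sys.stdin` so that it can be run anywhere.

```python
import io
from itertools import groupby
from operator import itemgetter


def read_mapper_output(stream, separator="\t"):
    """Yield [word, count] pairs from `word<separator>count` lines."""
    for line in stream:
        line = line.rstrip()
        if separator in line:
            yield line.split(separator, 1)


def reduce_stream(stream, separator="\t"):
    """Yield (word, total) for each run of identical words in sorted input."""
    records = read_mapper_output(stream, separator)
    # groupby only merges adjacent keys, so the input must be sorted by word,
    # which Hadoop guarantees for reducer input.
    for word, group in groupby(records, key=itemgetter(0)):
        try:
            total = sum(int(count) for _, count in group)
        except ValueError:
            continue  # a count was not a number: skip this word
        yield word, total


if __name__ == "__main__":
    # In a streaming job, sys.stdin would be passed instead of this sample.
    sample = io.StringIO("bar\t1\nfoo\t1\nfoo\t1\nfoo\t1\nlabs\t1\n")
    for word, total in reduce_stream(sample):
        print("%s\t%d" % (word, total))
```

Compared with the first reducer, the bookkeeping of `current_word` and `current_count` disappears, because `groupby` tracks the key boundaries itself.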

**网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。**

**[需要这份系统化资料的朋友,可以戳这里获取](https://bbs.csdn.net/topics/618545628)**

**一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!**

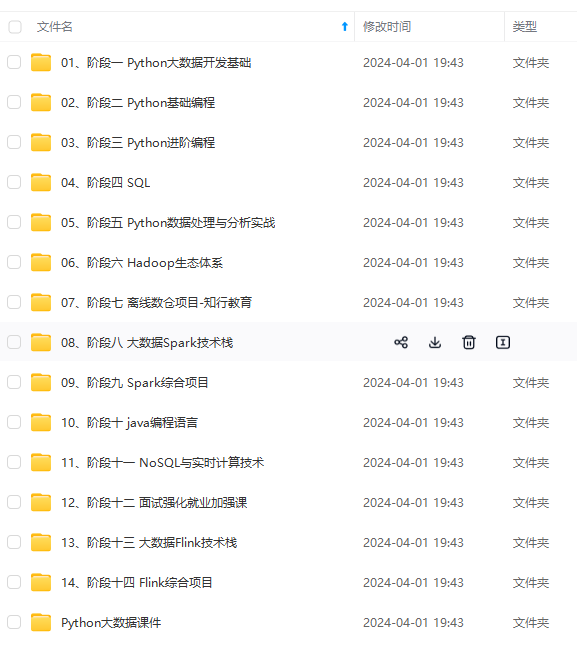

[外链图片转存中...(img-rmJ1FNUb-1715728585490)]

**网上学习资料一大堆,但如果学到的知识不成体系,遇到问题时只是浅尝辄止,不再深入研究,那么很难做到真正的技术提升。**

**[需要这份系统化资料的朋友,可以戳这里获取](https://bbs.csdn.net/topics/618545628)**

**一个人可以走的很快,但一群人才能走的更远!不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!**

6817

6817

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?