1. Requirement:

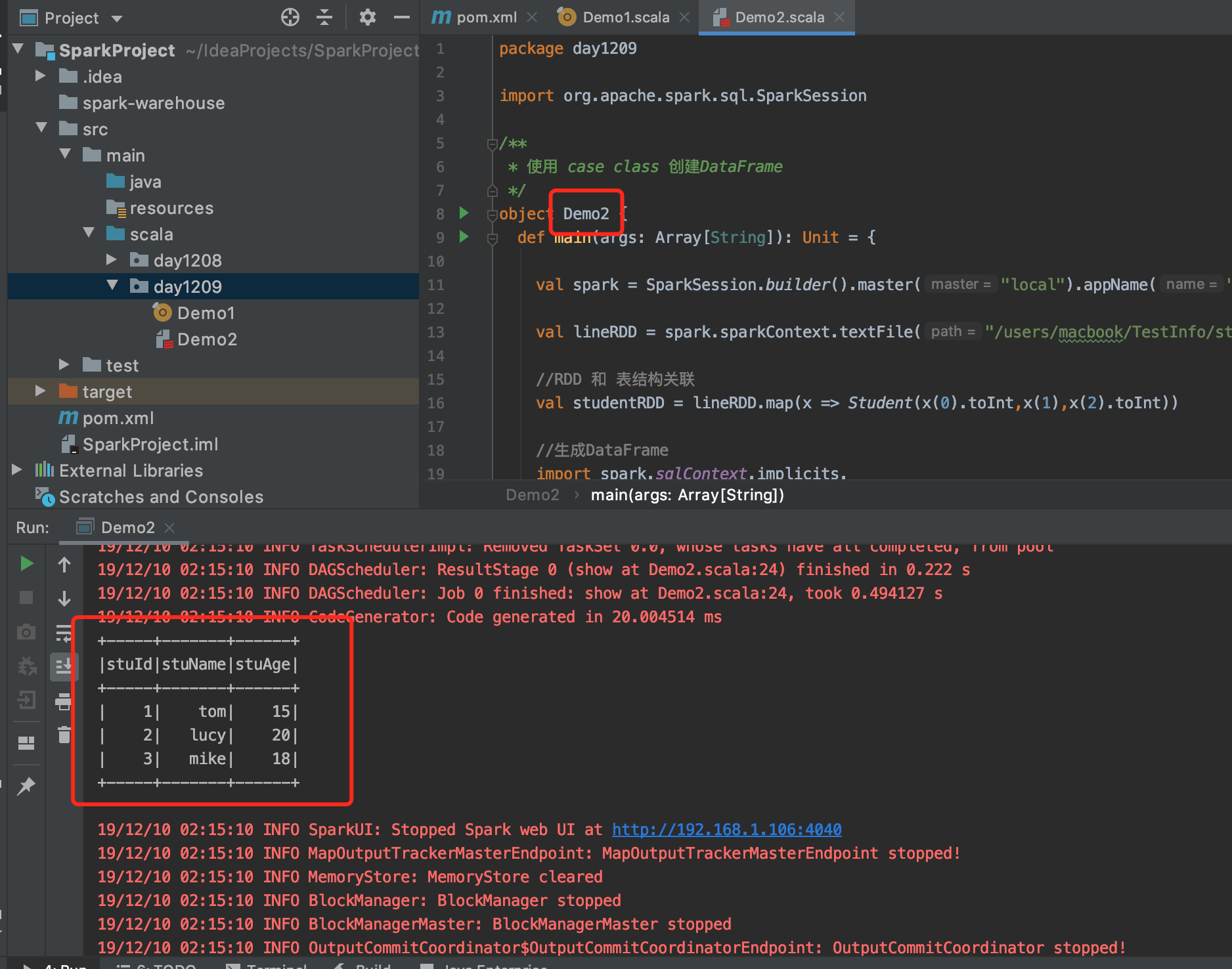

Create a DataFrame using a case class.

2. Data source:

(1) student.txt (one student per line; the fields ID, name, and age are tab-separated)

1 tom 15

2 lucy 20

3 mike 18

3. Write the code

(1) Add the dependencies:

pom.xml

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>2.1.0</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.spark/spark-sql -->

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_2.11</artifactId>

<version>2.1.0</version>

</dependency>

(2) Demo2.scala

package day1209

import org.apache.spark.sql.SparkSession

/**

* Create a DataFrame using a case class

*/

object Demo2 {

def main(args: Array[String]): Unit = {

val spark = SparkSession.builder().master("local").appName("CaseClassDemo").getOrCreate()

val lineRDD = spark.sparkContext.textFile("/users/macbook/TestInfo/student.txt").map(_.split("\t"))

// Map each record of the RDD onto the table structure (the Student case class)

val studentRDD = lineRDD.map(x => Student(x(0).toInt,x(1),x(2).toInt))

// Convert the RDD to a DataFrame

import spark.implicits._

val studentDF = studentRDD.toDF

studentDF.createOrReplaceTempView("student")

spark.sql("select * from student").show

spark.stop()

}

}

// Define the case class; it serves as the schema

case class Student(stuId:Int,stuName:String,stuAge:Int)
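The map step in Demo2 calls `toInt` directly, so a blank line or a non-numeric field in student.txt would make the job fail. Below is a minimal sketch of a more defensive parser, assuming that silently skipping malformed lines is acceptable. `StudentParser` and `parseStudent` are hypothetical names that do not appear in the original code, and the `Student` case class is repeated only to keep the sketch self-contained.

```scala
import scala.util.Try

// Mirrors the Student case class defined in Demo2.scala.
case class Student(stuId: Int, stuName: String, stuAge: Int)

object StudentParser {
  // Hypothetical helper (not part of the original post): returns None instead of
  // throwing when a line does not have exactly three tab-separated fields
  // or when the ID or age is not an integer.
  def parseStudent(line: String): Option[Student] =
    line.split("\t") match {
      case Array(id, name, age) =>
        Try(Student(id.trim.toInt, name.trim, age.trim.toInt)).toOption
      case _ => None
    }

  def main(args: Array[String]): Unit = {
    // Two well-formed lines, one blank line, one line with a non-numeric age.
    val lines = Seq("1\ttom\t15", "2\tlucy\t20", "", "3\tmike\tabc")
    // flatMap drops the None results, so only well-formed records remain.
    lines.flatMap(line => parseStudent(line)).foreach(println)
  }
}
```

In Demo2 the same idea could be applied by reading the file with `textFile` and replacing the `split` and `map` steps with `flatMap(line => StudentParser.parseStudent(line).toList)`, so that bad lines are dropped before `toDF` is called.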

4. Result:

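This article shows how to use a Scala case class to create an Apache Spark DataFrame. The student records in student.txt are parsed into an RDD, which is then converted to a DataFrame and queried with SQL. The whole process is covered, from adding the dependencies to the code itself.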
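Once the case class has supplied the schema, the DataFrame can also be queried through the DataFrame API instead of an SQL string. The following is a minimal sketch, assuming the Spark 2.1.0 dependencies from the pom.xml; the object name `Demo2Dsl`, the app name, and the age filter are illustrative choices rather than part of the original post, and the sample rows are built in memory so that no file path is needed.

```scala
import org.apache.spark.sql.SparkSession

// Mirrors the Student case class defined in Demo2.scala.
case class Student(stuId: Int, stuName: String, stuAge: Int)

// Hypothetical companion to Demo2: same data, queried without SQL text.
object Demo2Dsl {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder().master("local").appName("CaseClassDslDemo").getOrCreate()
    import spark.implicits._

    // In-memory copy of the three sample rows from student.txt.
    val studentDF = Seq(Student(1, "tom", 15), Student(2, "lucy", 20), Student(3, "mike", 18)).toDF()

    // Column names and types are taken from the case class fields.
    studentDF.printSchema()

    // Keep students aged 18 or older and show only their name and age.
    studentDF.filter($"stuAge" >= 18).select("stuName", "stuAge").show()

    spark.stop()
  }
}
```

Because the column names come from the case class fields, renaming a field in `Student` also renames the column that both the SQL query and the DataFrame API calls must refer to.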
